Introduction

Apache NiFi is a dataflow system based on the concepts of flow-based programming. It supports powerful and scalable directed graphs of data routing, transformation, and system mediation logic. NiFi has a web-based user interface for design, control, feedback, and monitoring of dataflows. It is highly configurable along several dimensions of quality of service, such as loss-tolerant versus guaranteed delivery, low latency versus high throughput, and priority-based queuing. NiFi provides fine-grained data provenance for all data received, forked, joined, cloned, modified, sent, and ultimately dropped upon reaching its configured end-state.

See the System Administrator’s Guide for information about system requirements, installation, and configuration. Once NiFi is installed, use a supported web browser to view the UI.

Browser Support

| Browser | Version |
|---|---|
| Chrome | Current and Current - 1 |
| Firefox | Current and Current - 1 |
| Edge | Current and Current - 1 |
| Safari | Current and Current - 1 |

Current and Current - 1 indicates that the UI is supported in the current stable release of that browser and the preceding one. For instance, if the current stable release is 45.X then the officially supported versions will be 45.X and 44.X.

For Safari, which releases major versions much less frequently, Current and Current - 1 simply represent the two latest releases.

The supported browser versions are driven by the capabilities the UI employs and the dependencies it uses. UI features will be developed and tested against the supported browsers. Any problem using a supported browser should be reported to Apache NiFi.

Unsupported Browsers

While the UI may run successfully in unsupported browsers, it is not actively tested against them. Additionally, the UI is designed as a desktop experience and is not currently supported in mobile browsers.

Viewing the UI in Variably Sized Browsers

In most environments, all of the UI is visible in your browser. However, the UI has a responsive design that allows you to scroll through screens as needed, in smaller sized browsers or tablet environments.

In environments where your browser width is less than 800 pixels and the height less than 600 pixels, portions of the UI may become unavailable.

Terminology

DataFlow Manager: A DataFlow Manager (DFM) is a NiFi user who has permissions to add, remove, and modify components of a NiFi dataflow.

FlowFile: The FlowFile represents a single piece of data in NiFi. A FlowFile is made up of two components: FlowFile Attributes and FlowFile Content. Content is the data that is represented by the FlowFile. Attributes are characteristics that provide information or context about the data; they are made up of key-value pairs. All FlowFiles have the following Standard Attributes:

- uuid: A Universally Unique Identifier that distinguishes the FlowFile from other FlowFiles in the system.
- filename: A human-readable filename that may be used when storing the data to disk or in an external service.
- path: A hierarchically structured value that can be used when storing data to disk or an external service so that the data is not stored in a single directory.
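The two-part structure described above can be sketched as a small data class. This is an illustrative model only, not NiFi's actual FlowFile implementation; the class name, the field names, and the default values chosen for `filename` and `path` are assumptions made for the example.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class FlowFile:
    """Illustrative model: content plus key-value attributes."""
    content: bytes
    attributes: dict = field(default_factory=dict)

    def __post_init__(self):
        # Fill in the three Standard Attributes when the caller omits them.
        # The defaults used here are assumptions of this sketch.
        self.attributes.setdefault("uuid", str(uuid.uuid4()))
        self.attributes.setdefault("filename", self.attributes["uuid"])
        self.attributes.setdefault("path", "./")


ff = FlowFile(content=b"hello", attributes={"filename": "greeting.txt"})
print(sorted(ff.attributes))  # ['filename', 'path', 'uuid']
```

Keeping the attributes in a plain dictionary mirrors the key-value description in the text and leaves room for any additional attributes a flow might attach.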

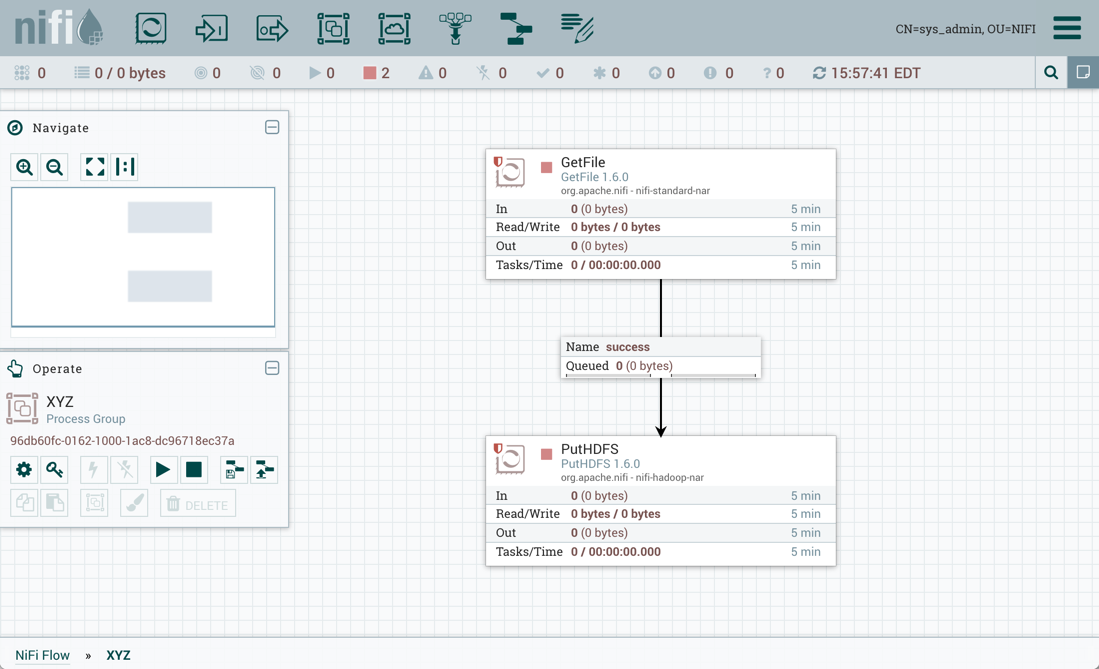

Processor: The Processor is the NiFi component that is used to listen for incoming data; pull data from external sources; publish data to external sources; and route, transform, or extract information from FlowFiles.

Relationship: Each Processor has zero or more Relationships defined for it. These Relationships are named to indicate the result of processing a FlowFile. After a Processor has finished processing a FlowFile, it will route (or “transfer”) the FlowFile to one of the Relationships. A DFM is then able to connect each of these Relationships to other components in order to specify where the FlowFile should go next under each potential processing result.

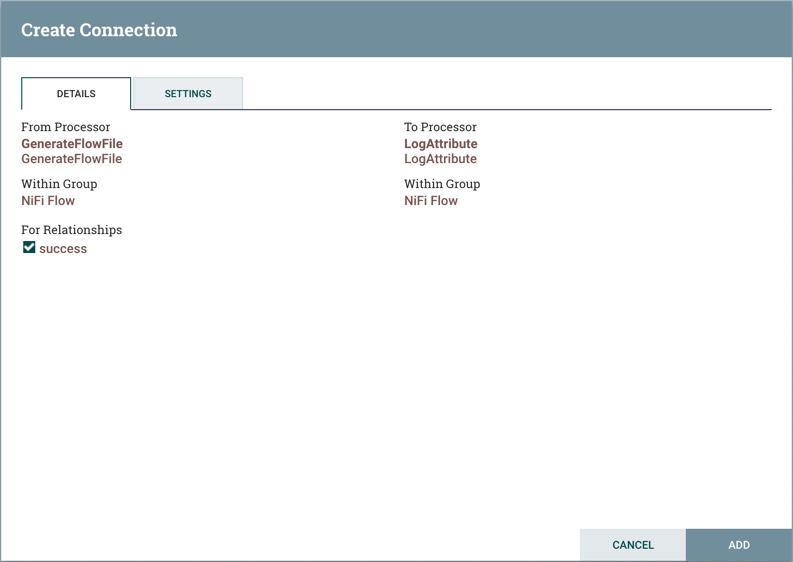

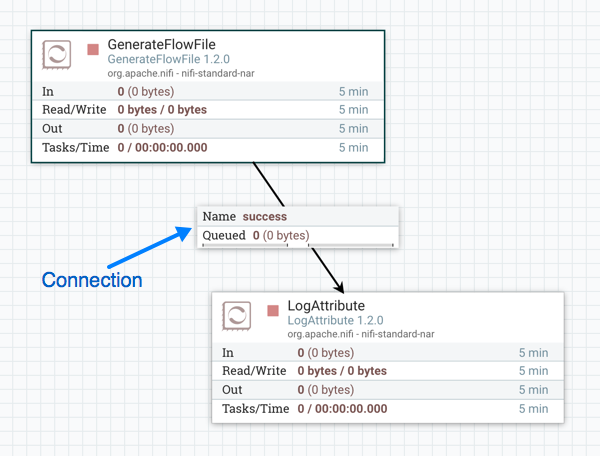

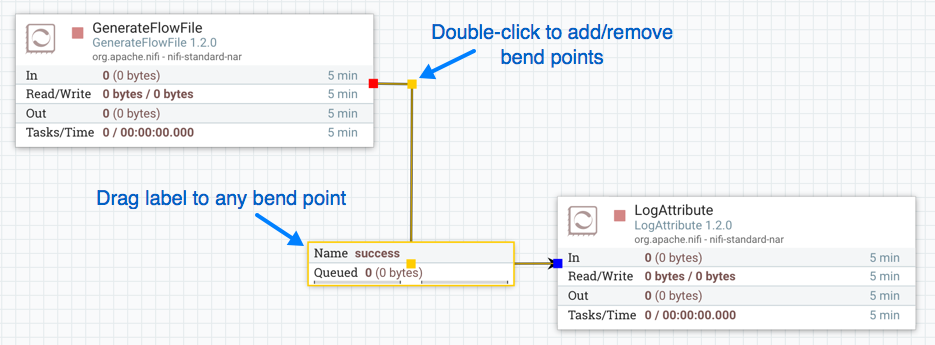

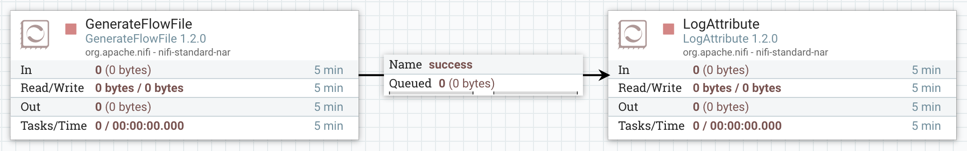

Connection: A DFM creates an automated dataflow by dragging components from the Components part of the NiFi toolbar to the canvas and then connecting the components together via Connections. Each connection consists of one or more Relationships. For each Connection that is drawn, a DFM can determine which Relationships should be used for the Connection. This allows data to be routed in different ways based on its processing outcome. Each connection houses a FlowFile Queue. When a FlowFile is transferred to a particular Relationship, it is added to the queue belonging to the associated Connection.
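As a rough mental model of how Relationships, Connections, and queues fit together, consider the following sketch. The `Connection` class and `transfer` function are hypothetical names invented for this example, and enqueuing on every matching Connection is a simplification chosen here rather than a description of NiFi internals.

```python
from collections import deque


class Connection:
    """A Connection carries one or more Relationships and owns a FlowFile queue."""

    def __init__(self, relationships):
        self.relationships = set(relationships)
        self.queue = deque()


def transfer(flowfile, relationship, connections):
    """Enqueue the FlowFile on every Connection that carries the Relationship."""
    targets = [c for c in connections if relationship in c.relationships]
    for connection in targets:
        connection.queue.append(flowfile)
    return len(targets)


ok = Connection({"success"})
problems = Connection({"failure", "retry"})

transfer("flowfile-1", "success", [ok, problems])
transfer("flowfile-2", "retry", [ok, problems])
print(list(ok.queue), list(problems.queue))  # ['flowfile-1'] ['flowfile-2']
```

The second Connection shows how a single Connection can carry more than one Relationship, so that different processing outcomes end up in the same queue.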

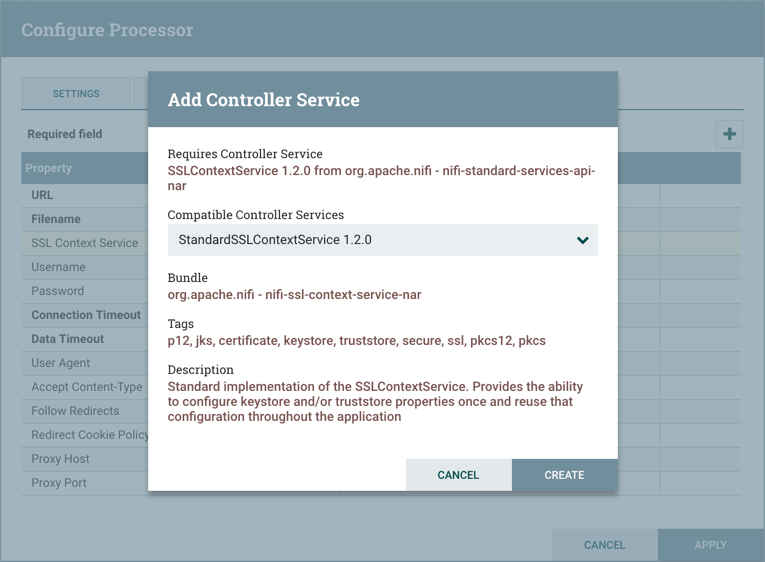

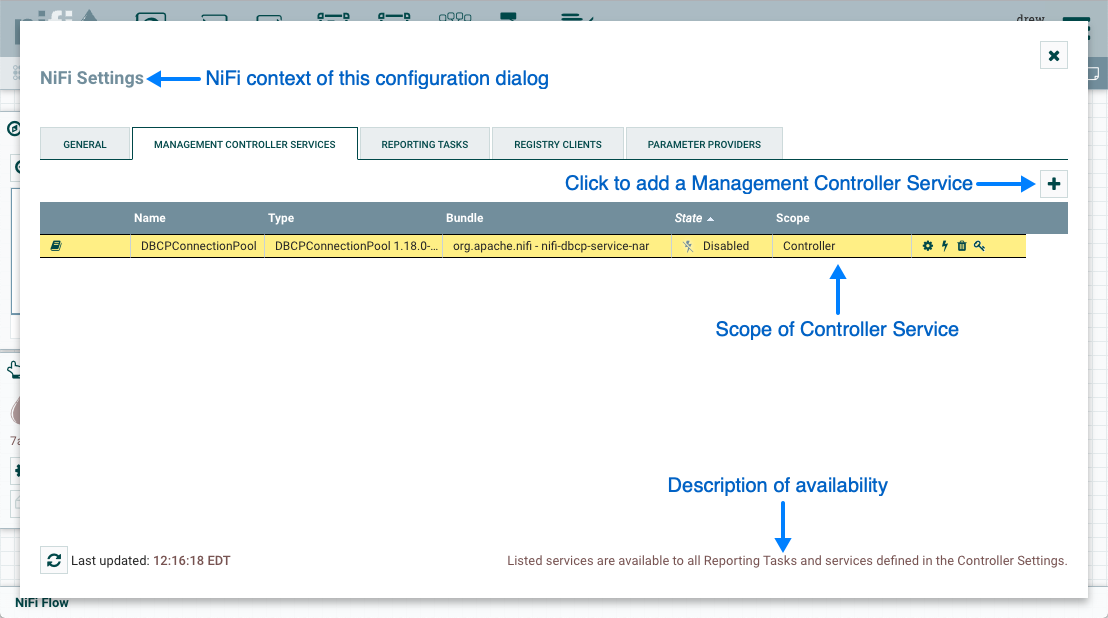

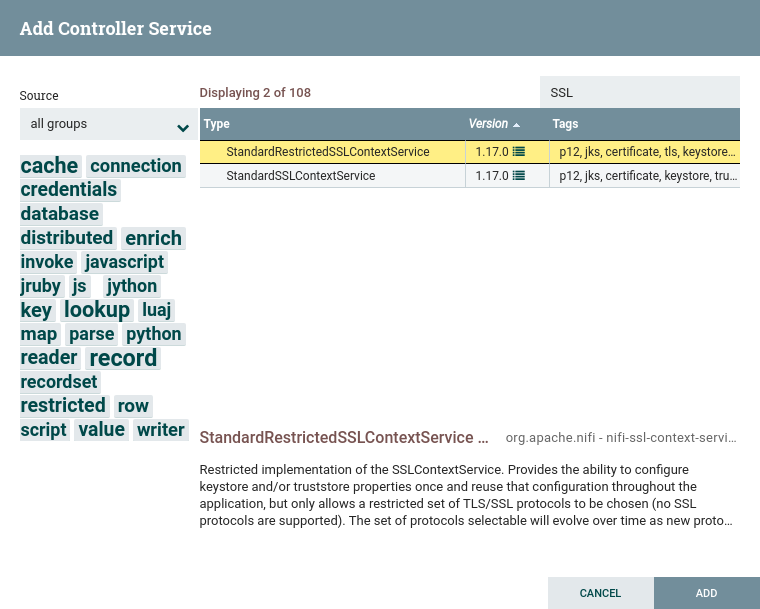

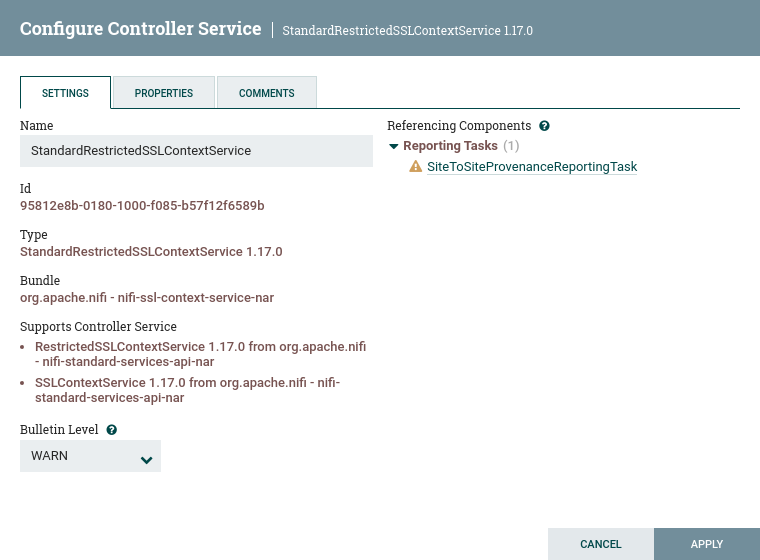

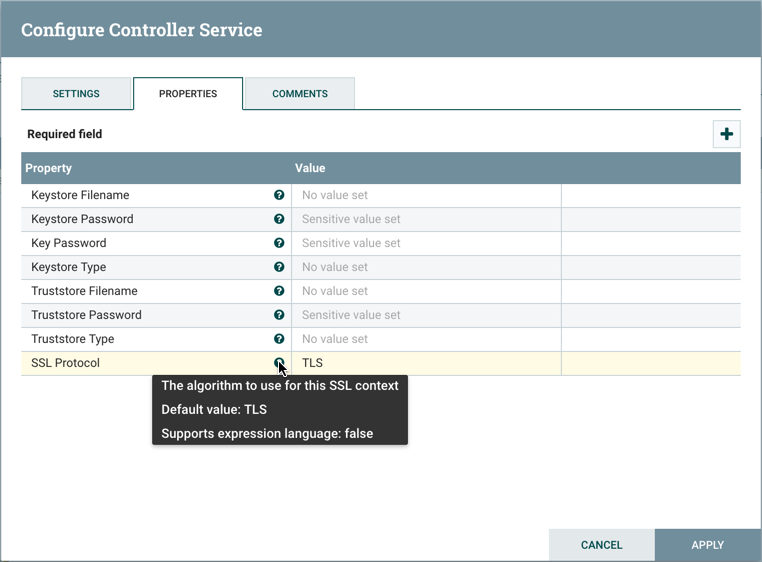

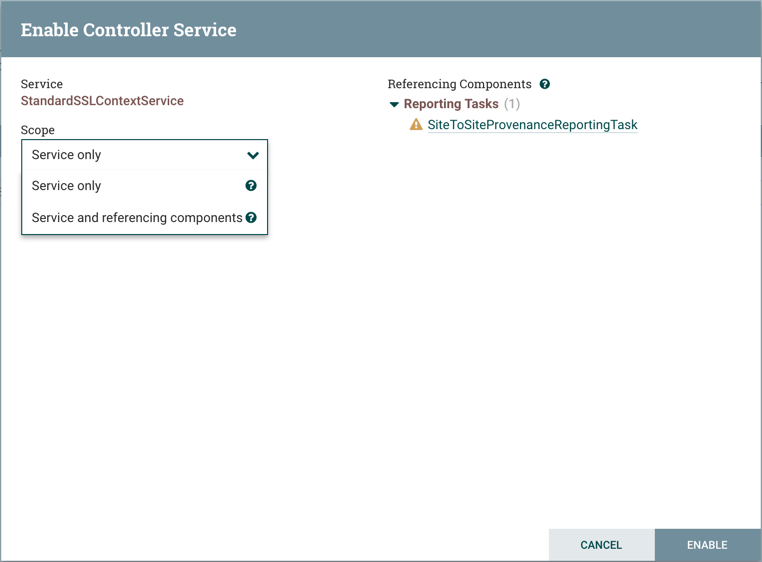

Controller Service: Controller Services are extension points that, after being added and configured by a DFM in the User Interface, will start up when NiFi starts up and provide information for use by other components (such as processors or other controller services). A common Controller Service used by several components is the StandardSSLContextService. It provides the ability to configure keystore and/or truststore properties once and reuse that configuration throughout the application. The idea is that, rather than configure this information in every processor that might need it, the controller service provides it for any processor to use as needed.

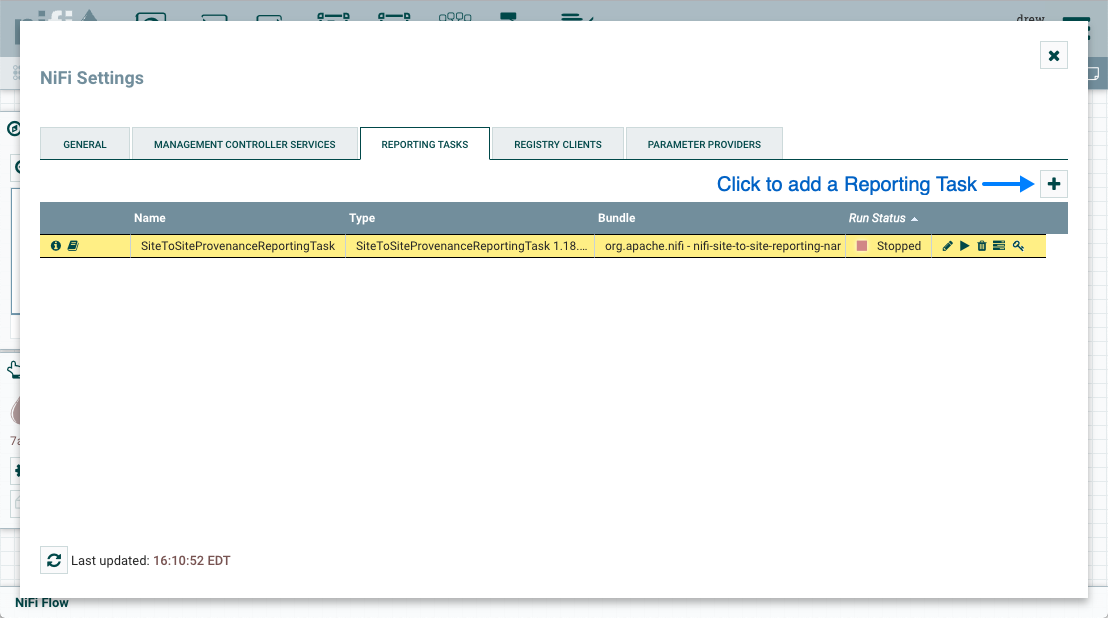

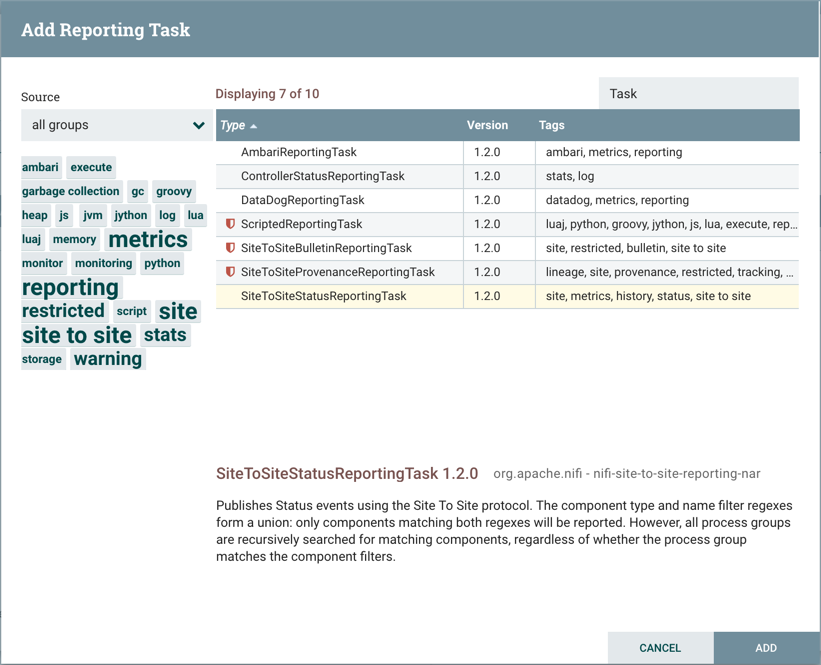

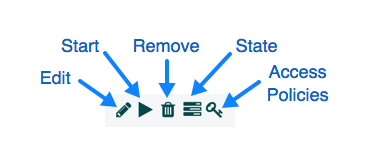

Reporting Task: Reporting Tasks run in the background to provide statistical reports about what is happening in the NiFi instance. The DFM adds and configures Reporting Tasks in the User Interface as desired. Common reporting tasks include the ControllerStatusReportingTask, MonitorDiskUsage reporting task, MonitorMemory reporting task, and the StandardGangliaReporter.

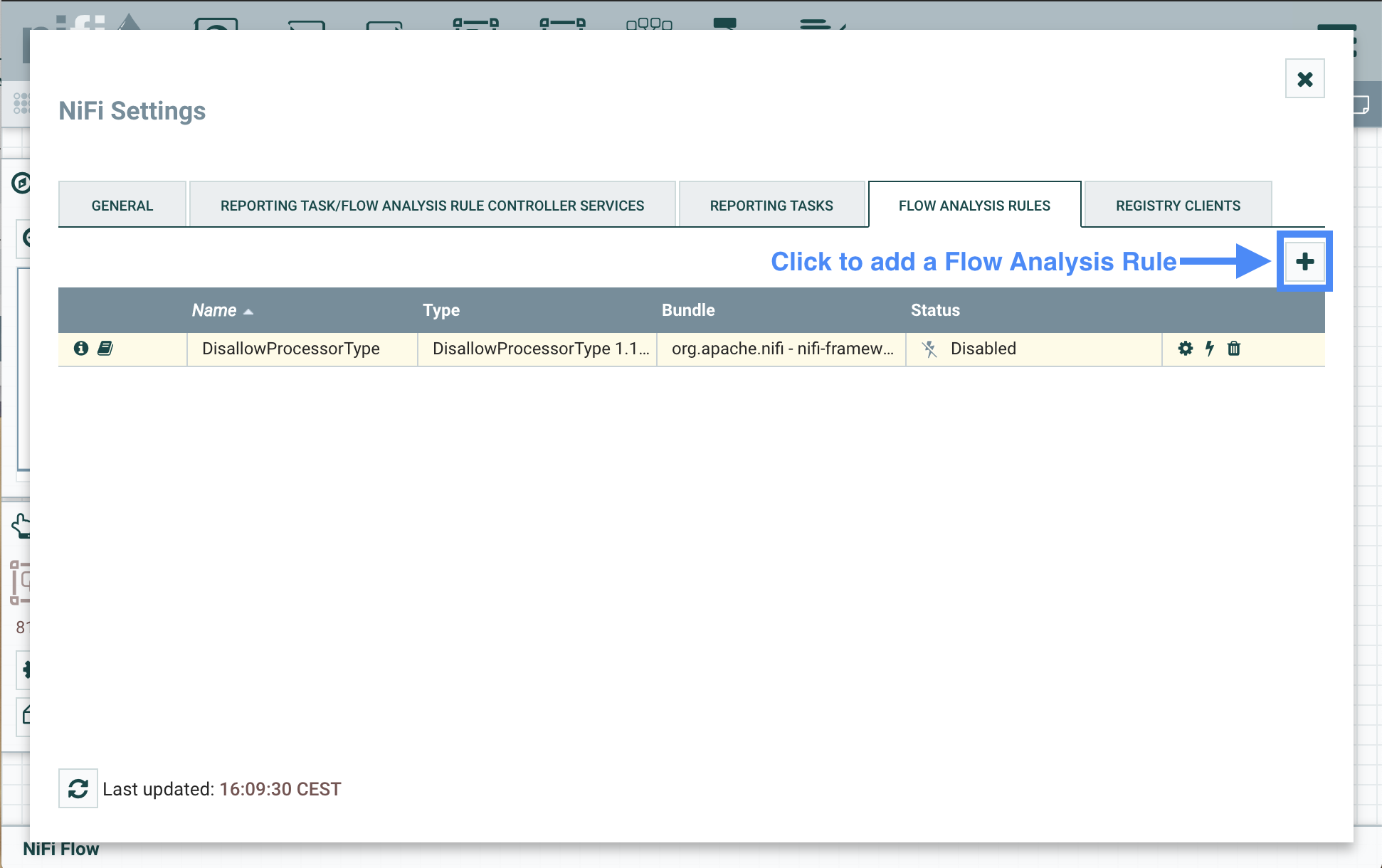

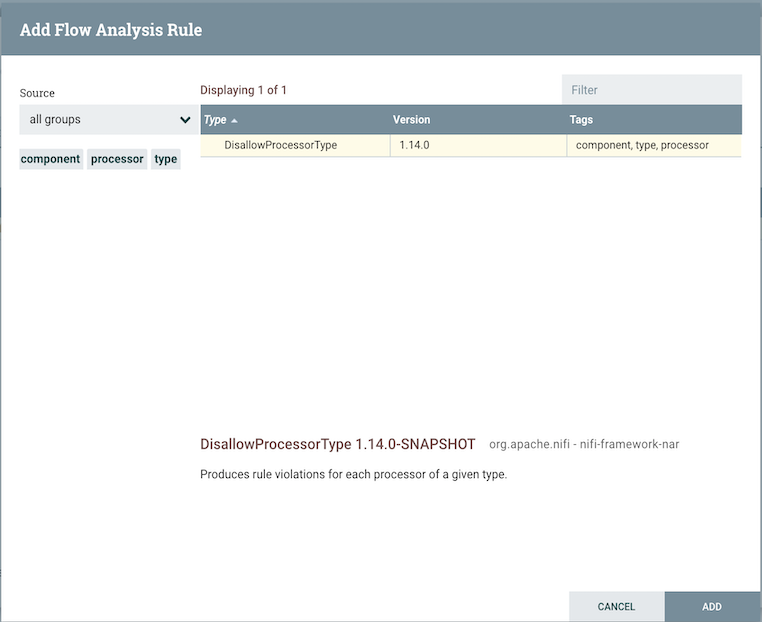

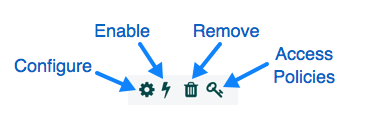

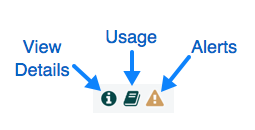

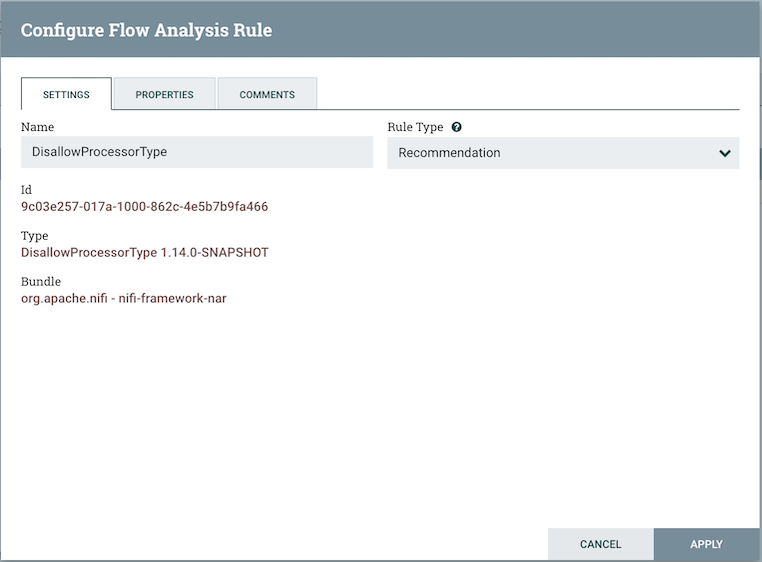

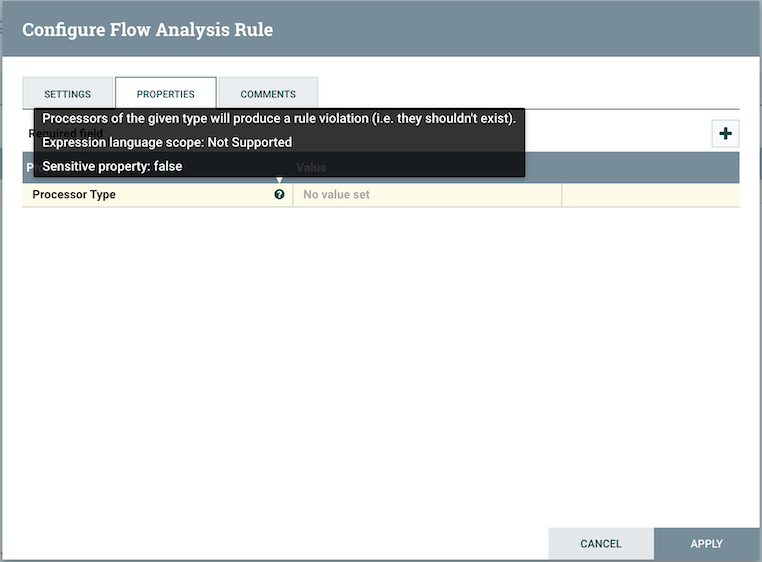

Flow Analysis Rules: Flow Analysis Rules can analyze components or (parts of) the flow. They may produce rule violations which can help adjust or maintain optimal flow design. The DFM adds and configures Flow Analysis Rules in the User Interface as desired.

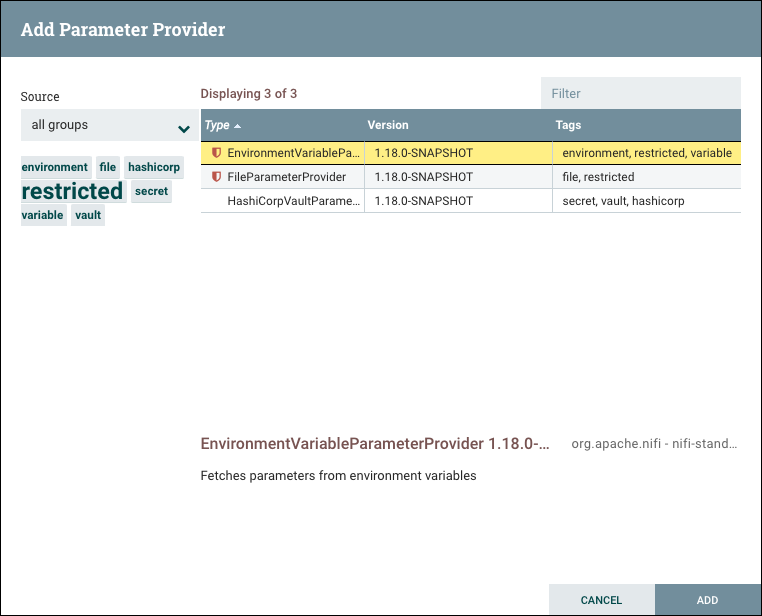

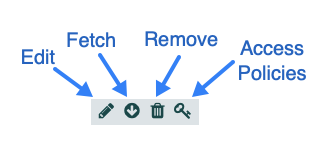

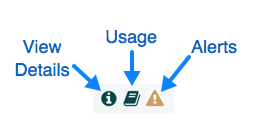

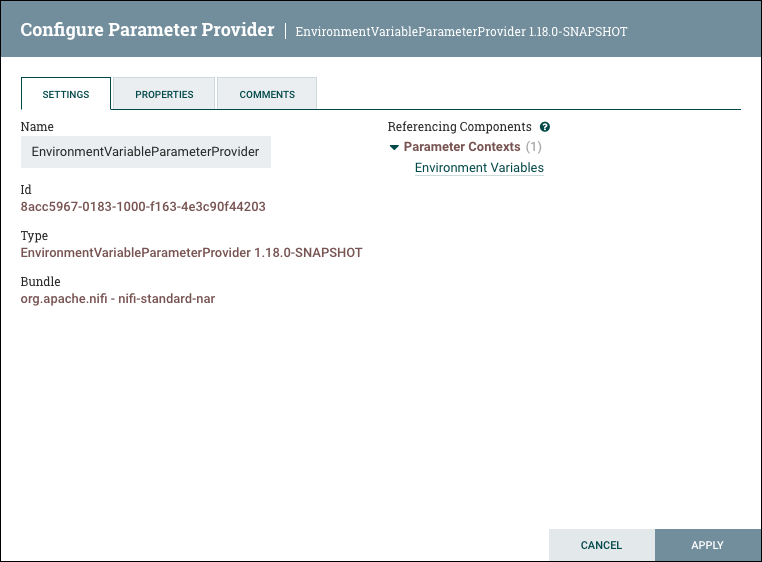

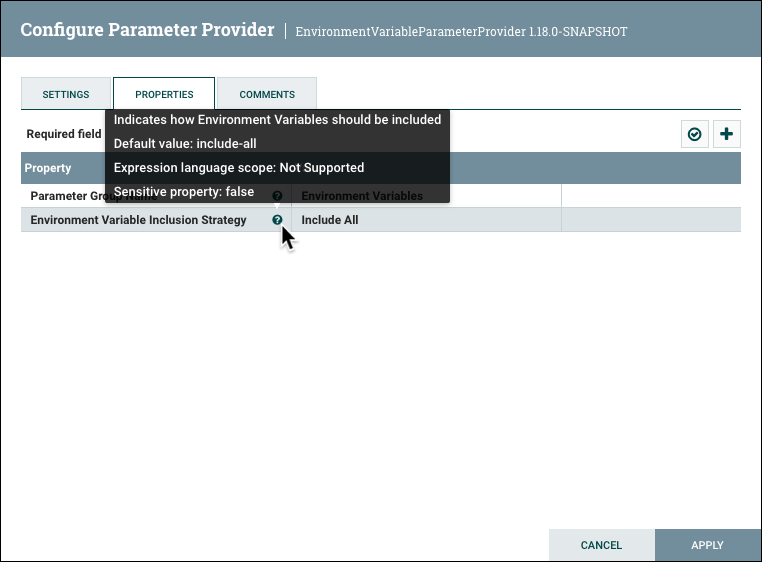

Parameter Provider: Parameter Providers can provide parameters from an external source to Parameter Contexts. The parameters of a Parameter Provider may be fetched and applied to all referencing Parameter Contexts.

Funnel: A funnel is a NiFi component that is used to combine the data from several Connections into a single Connection.

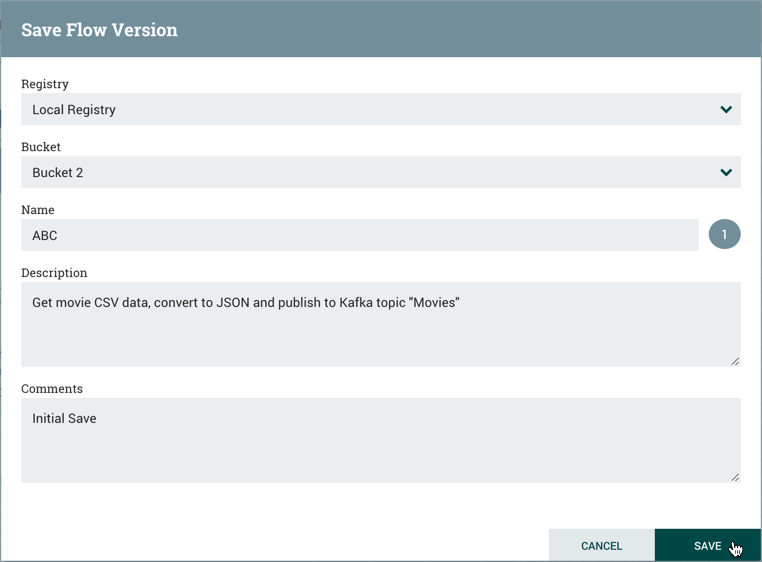

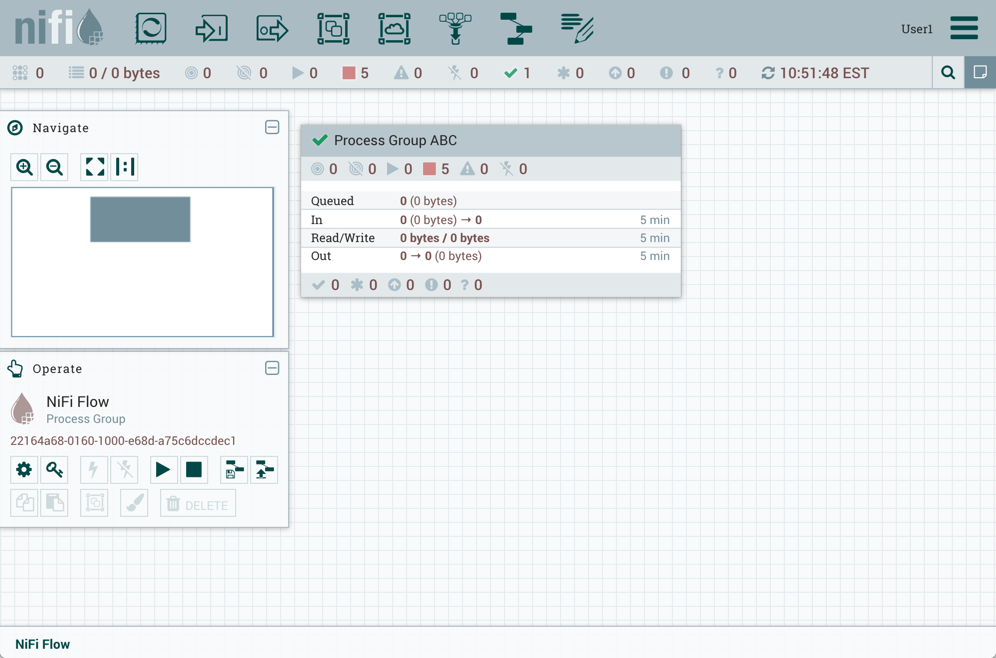

Process Group: When a dataflow becomes complex, it often is beneficial to reason about the dataflow at a higher, more abstract level. NiFi allows multiple components, such as Processors, to be grouped together into a Process Group. The NiFi User Interface then makes it easy for a DFM to connect together multiple Process Groups into a logical dataflow, as well as allowing the DFM to enter a Process Group in order to see and manipulate the components within the Process Group.

Port: Dataflows that are constructed using one or more Process Groups need a way to connect a Process Group to other dataflow components. This is achieved by using Ports. A DFM can add any number of Input Ports and Output Ports to a Process Group and name these ports appropriately.

Remote Process Group: Just as data is transferred into and out of a Process Group, it is sometimes necessary to transfer data from one instance of NiFi to another. While NiFi provides many different mechanisms for transferring data from one system to another, Remote Process Groups are often the easiest way to accomplish this if transferring data to another instance of NiFi.

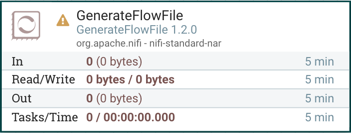

Bulletin: The NiFi User Interface provides a significant amount of monitoring and feedback about the current status of the application. In addition to rolling statistics and the current status provided for each component, components are able to report Bulletins. Whenever a component reports a Bulletin, a bulletin icon is displayed on that component. System-level bulletins are displayed on the Status bar near the top of the page. Using the mouse to hover over that icon will provide a tool-tip that shows the time and severity (Debug, Info, Warning, Error) of the Bulletin, as well as the message of the Bulletin. Bulletins from all components can also be viewed and filtered in the Bulletin Board Page, available in the Global Menu.
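Filtering Bulletins by severity can be pictured with a short sketch. The `Bulletin` class, the `at_least` helper, and the sample messages are hypothetical; only the four severity names and the time, severity, and message fields come from the description above.

```python
from dataclasses import dataclass

SEVERITIES = ["Debug", "Info", "Warning", "Error"]  # order as listed in the text


@dataclass
class Bulletin:
    time: str
    severity: str
    message: str


def at_least(bulletins, minimum):
    """Keep bulletins whose severity is `minimum` or higher."""
    threshold = SEVERITIES.index(minimum)
    return [b for b in bulletins if SEVERITIES.index(b.severity) >= threshold]


bulletins = [
    Bulletin("10:15:02", "Info", "Started processing"),
    Bulletin("10:15:07", "Warning", "Queue is filling up"),
    Bulletin("10:15:09", "Error", "Failed to write file"),
]
print([b.message for b in at_least(bulletins, "Warning")])
# ['Queue is filling up', 'Failed to write file']
```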

flow.xml.gz: Everything the DFM puts onto the NiFi User Interface canvas is written, in real time, to one file called the flow.xml.gz. This file is located in the nifi/conf directory by default.

Any change made on the canvas is automatically saved to this file, without the user needing to click a "Save" button.

In addition, NiFi automatically creates a backup copy of this file in the archive directory when it is updated.

You can use these archived files to roll back the flow configuration. To do so, stop NiFi, replace flow.xml.gz with the desired backup copy, then restart NiFi.

In a clustered environment, stop the entire NiFi cluster, replace the flow.xml.gz of one of the nodes, and restart that node. Remove flow.xml.gz from the other nodes.

Once you have confirmed that the node starts up as a one-node cluster, start the other nodes. The replaced flow configuration will be synchronized across the cluster.

The name and location of flow.xml.gz, as well as the auto-archive behavior, are configurable. See the System Administrator’s Guide for further details.
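The file-replacement step of a rollback on a single node could be scripted along these lines. This is a minimal sketch under stated assumptions: the function name `restore_flow`, the `.replaced` suffix used to keep the previous file, and the `conf/archive` layout in the demonstration are all choices made for this example, and the script must only be run while NiFi is stopped. Stopping and restarting NiFi is not covered here.

```python
import shutil
import tempfile
from pathlib import Path


def restore_flow(conf_dir, backup_file, flow_name="flow.xml.gz"):
    """Replace the active flow file with a chosen backup copy.

    The previous flow file is kept beside the restored one with a
    ".replaced" suffix (a safety choice of this sketch, not NiFi behaviour).
    """
    active = Path(conf_dir) / flow_name
    backup_file = Path(backup_file)

    if not backup_file.is_file():
        raise FileNotFoundError(backup_file)

    if active.exists():
        shutil.copy2(active, active.with_name(active.name + ".replaced"))
    shutil.copy2(backup_file, active)
    return active


# Demonstration in a throwaway directory; no real NiFi installation is touched.
with tempfile.TemporaryDirectory() as tmp:
    conf = Path(tmp, "conf")
    archive = conf / "archive"  # assumed archive location for this demo
    archive.mkdir(parents=True)
    (conf / "flow.xml.gz").write_bytes(b"current flow")
    (archive / "backup_flow.xml.gz").write_bytes(b"older flow")

    restored = restore_flow(conf, archive / "backup_flow.xml.gz")
    restored_bytes = restored.read_bytes()
    kept_bytes = (conf / "flow.xml.gz.replaced").read_bytes()

print(restored_bytes)  # b'older flow'
```

Keeping a copy of the file being replaced makes it possible to undo the rollback if the chosen backup turns out to be the wrong one.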

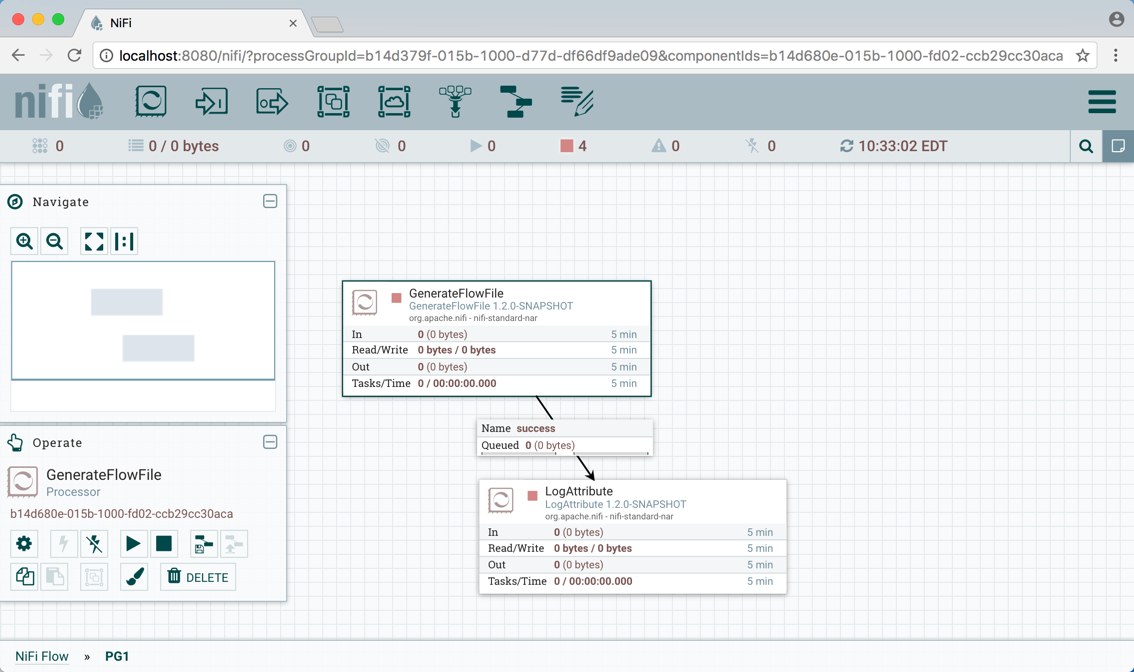

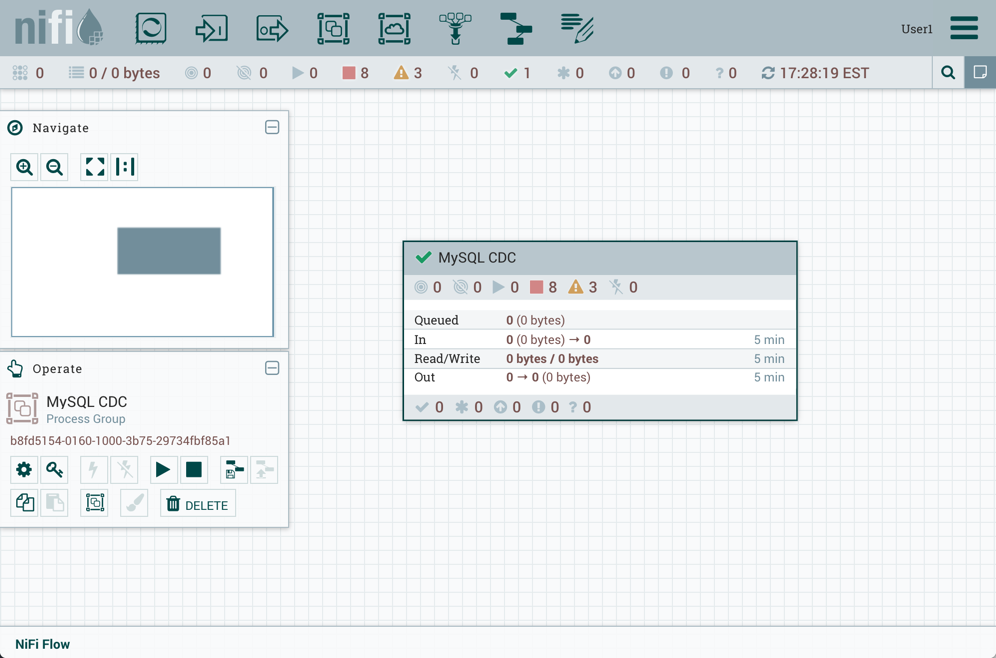

NiFi User Interface

The NiFi UI provides mechanisms for creating automated dataflows, as well as visualizing, editing, monitoring, and administering those dataflows. The UI can be broken down into several segments, each responsible for different functionality of the application. This section provides screenshots of the application and highlights the different segments of the UI. Each segment is discussed in further detail later in the document.

When the application is started, the user is able to navigate to the UI by going to the default address of https://localhost:8443/nifi in a web browser. The default configuration generates a username and password with full system administration privileges.

For information on securing the system, see the System Administrator’s Guide.

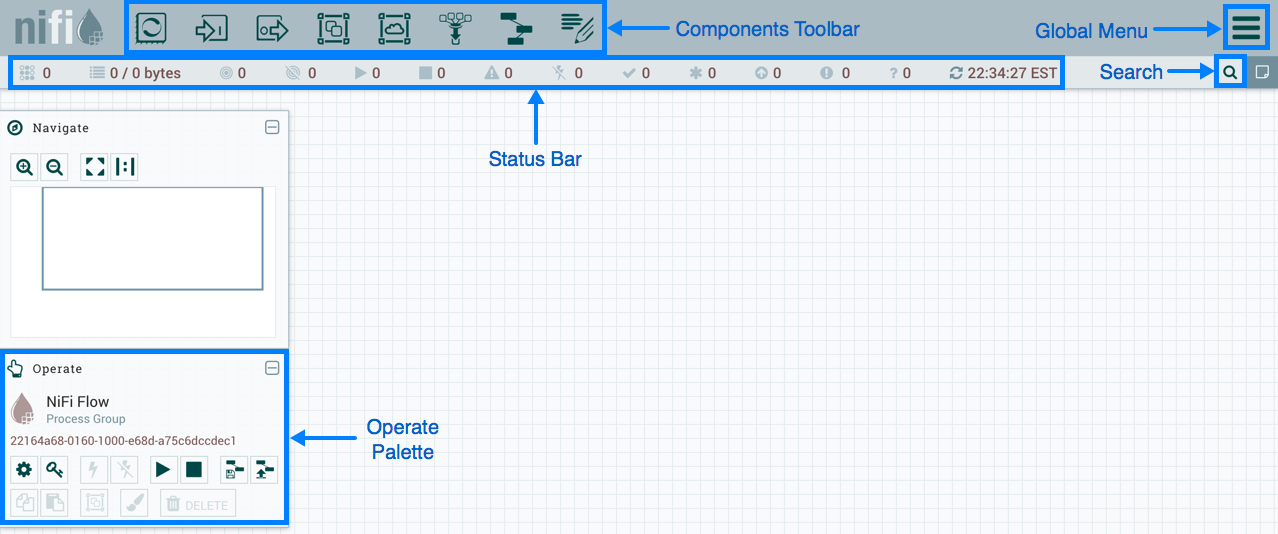

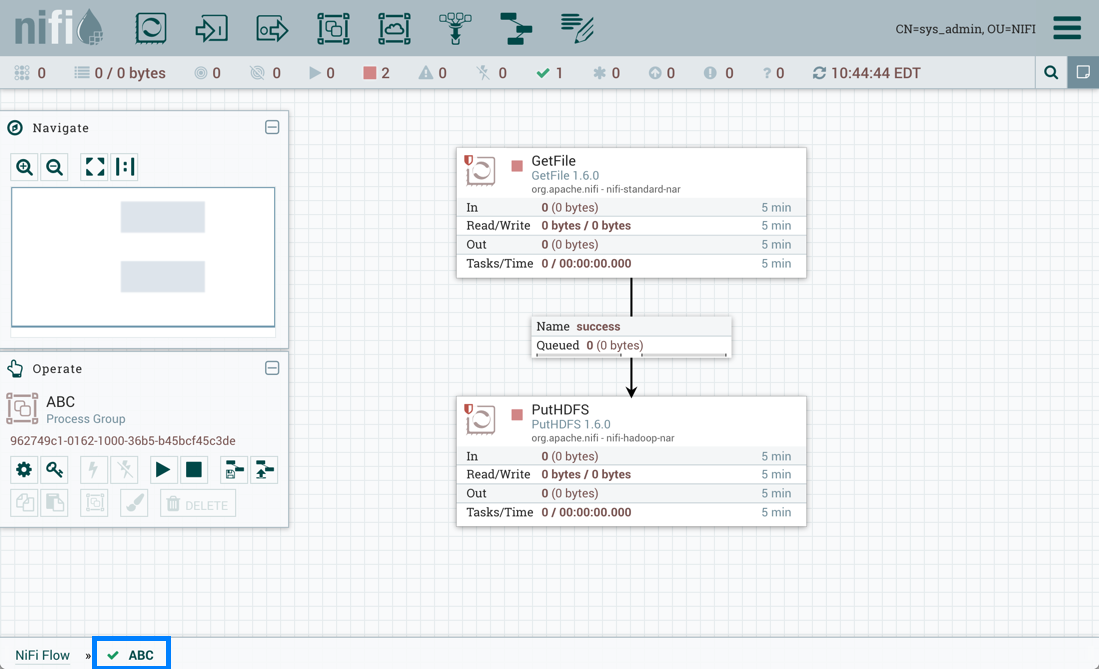

When a DFM navigates to the UI for the first time, a blank canvas is provided on which a dataflow can be built:

The Components Toolbar runs across the top left portion of your screen. It consists of the components you can drag onto the canvas to build your dataflow. Each component is described in more detail in Building a DataFlow.

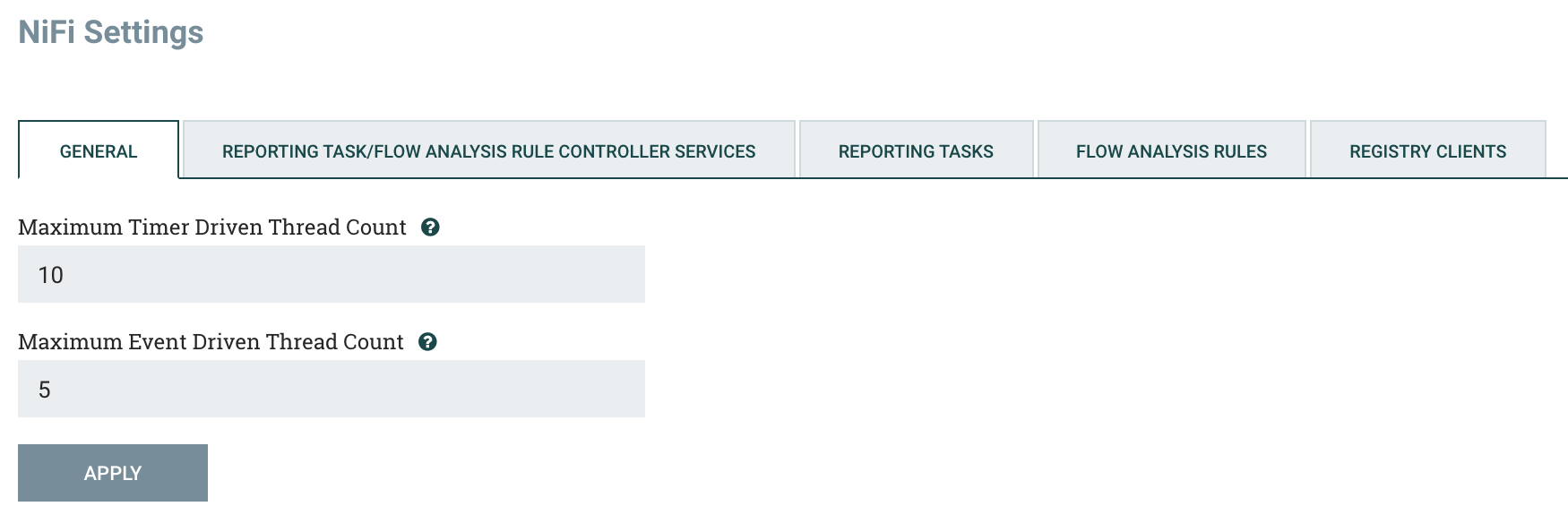

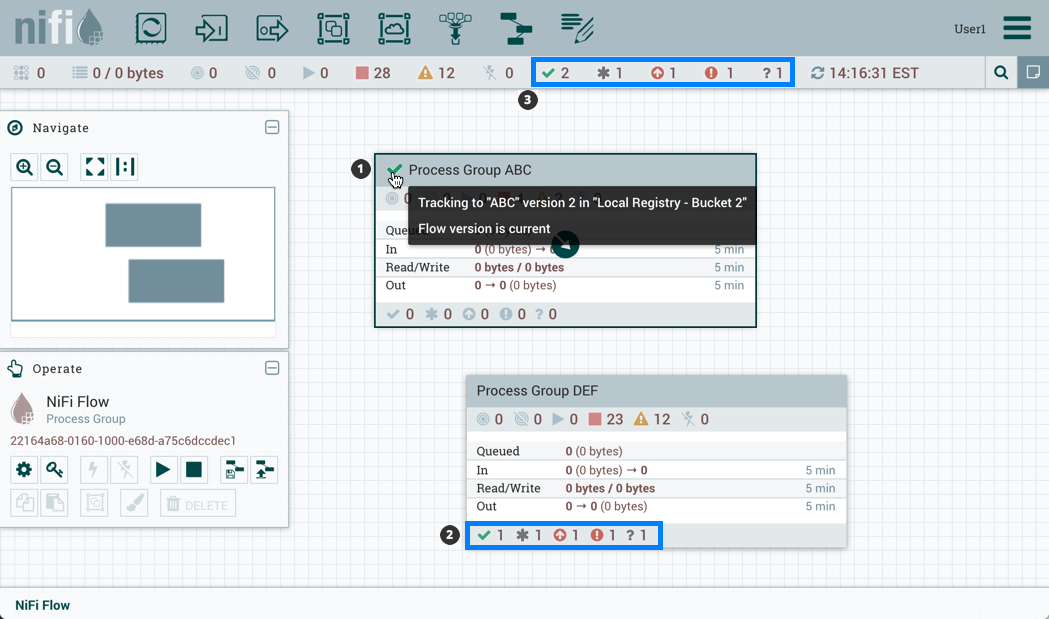

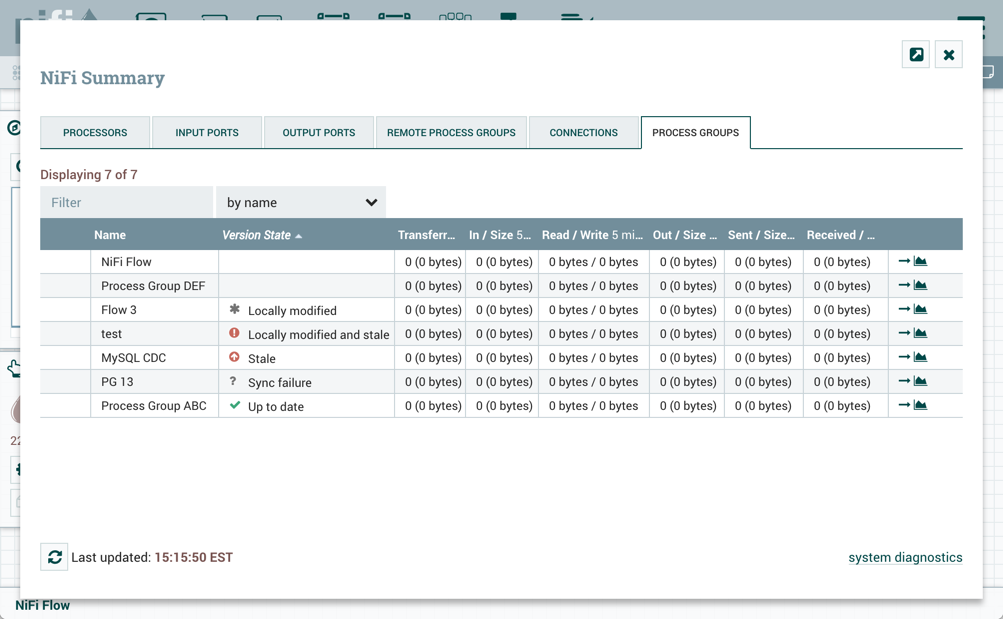

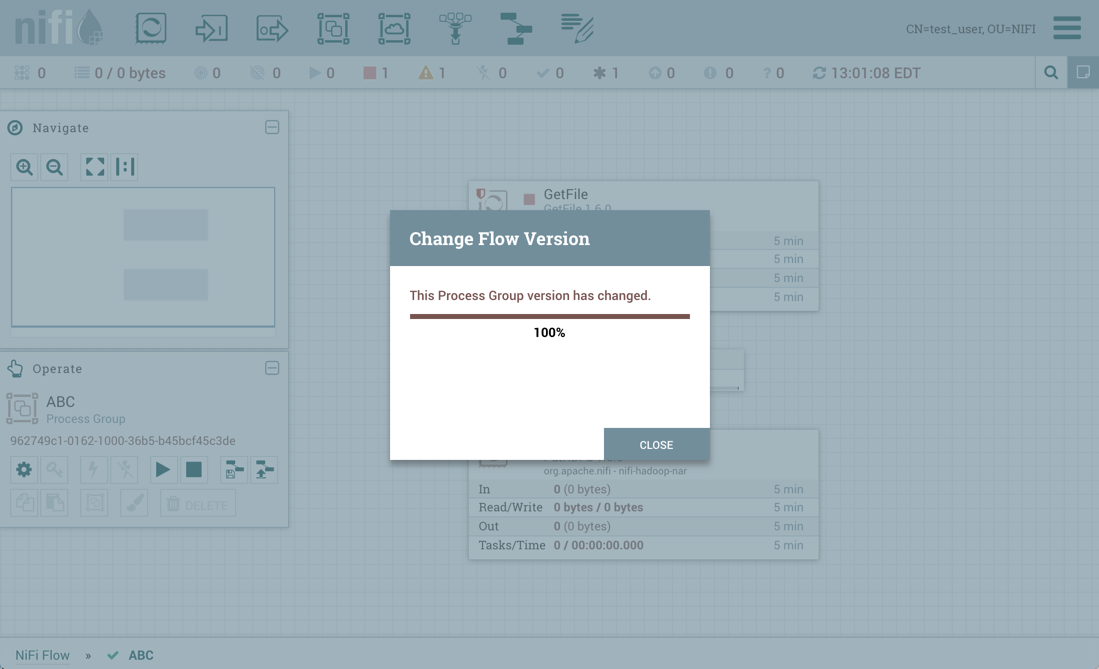

The Status Bar is under the Components Toolbar. The Status bar provides information about the number of threads that are currently active in the flow, the amount of data that currently exists in the flow, how many Remote Process Groups exist on the canvas in each state (Transmitting, Not Transmitting), how many Processors exist on the canvas in each state (Stopped, Running, Invalid, Disabled), how many versioned Process Groups exist on the canvas in each state (Up to date, Locally modified, Stale, Locally modified and stale, Sync failure) and the timestamp at which all of this information was last refreshed. Additionally, if the instance of NiFi is clustered, the Status bar shows how many nodes are in the cluster and how many are currently connected.

The Operate Palette sits to the left-hand side of the screen. It consists of buttons that are used by DFMs to manage the flow, as well as by administrators who manage user access and configure system properties, such as how many system resources should be provided to the application.

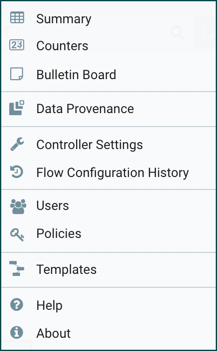

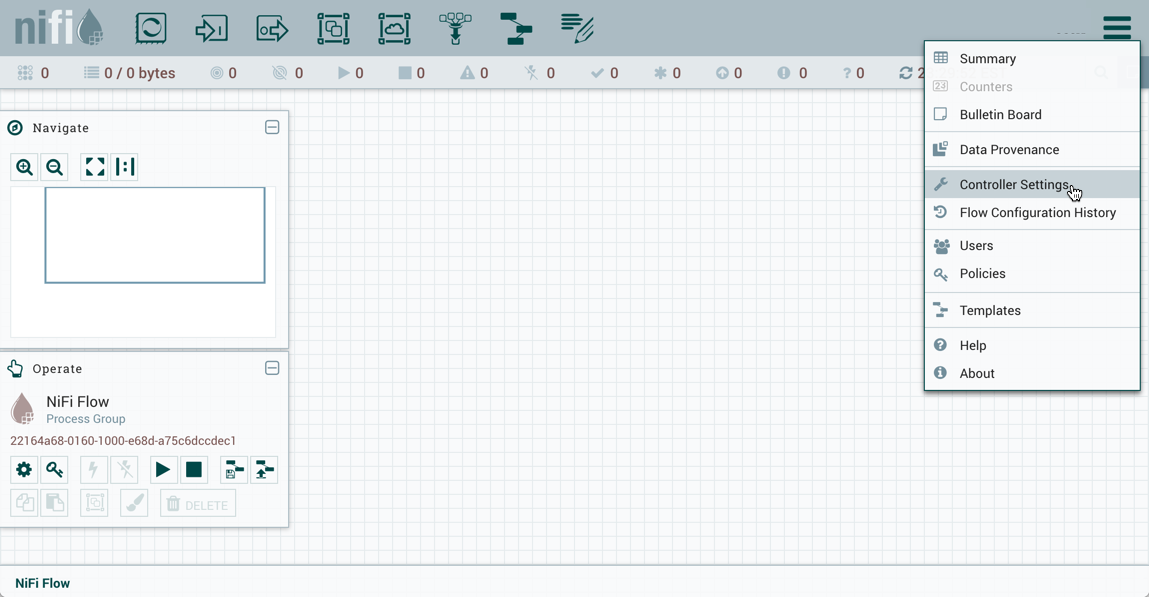

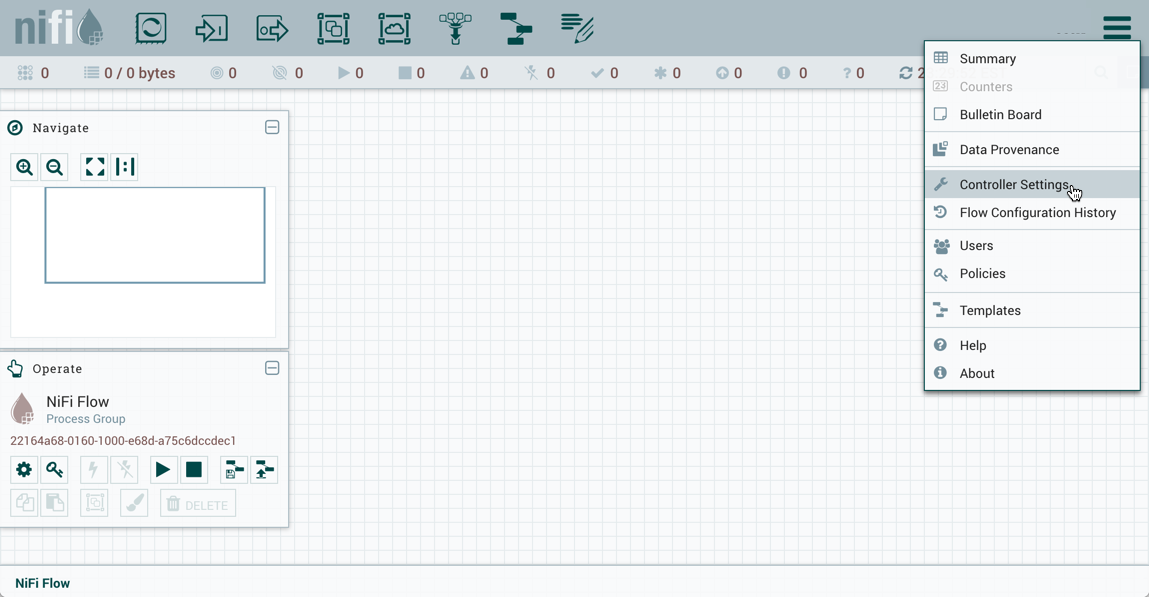

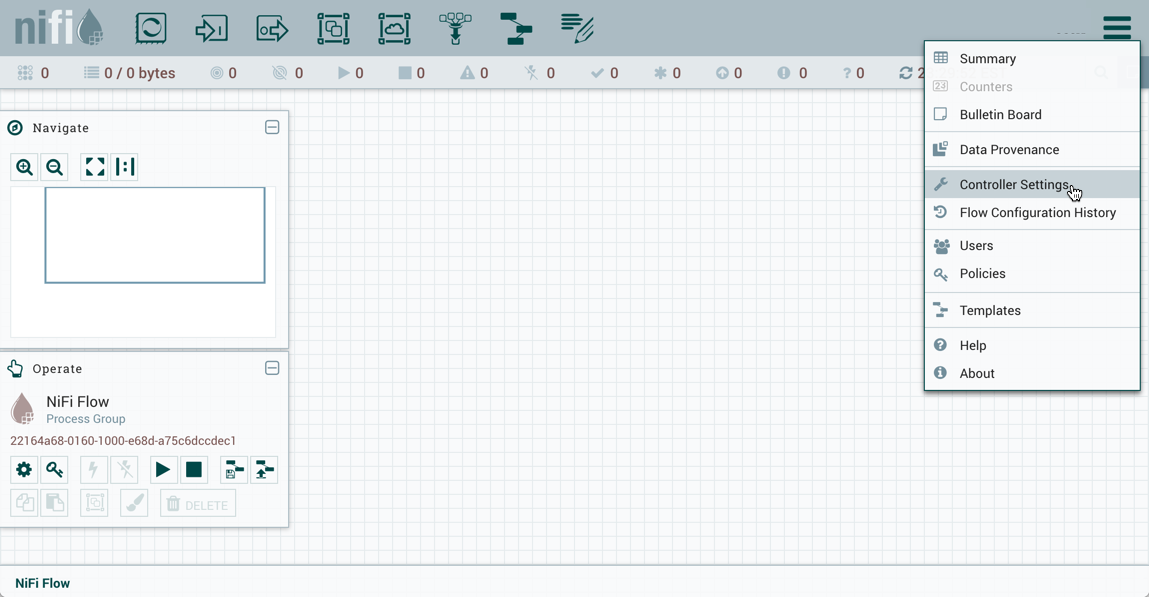

On the right side of the canvas are Search and the Global Menu. For more information on search, refer to Search Components in DataFlow. The Global Menu contains options that allow you to manipulate existing components on the canvas:

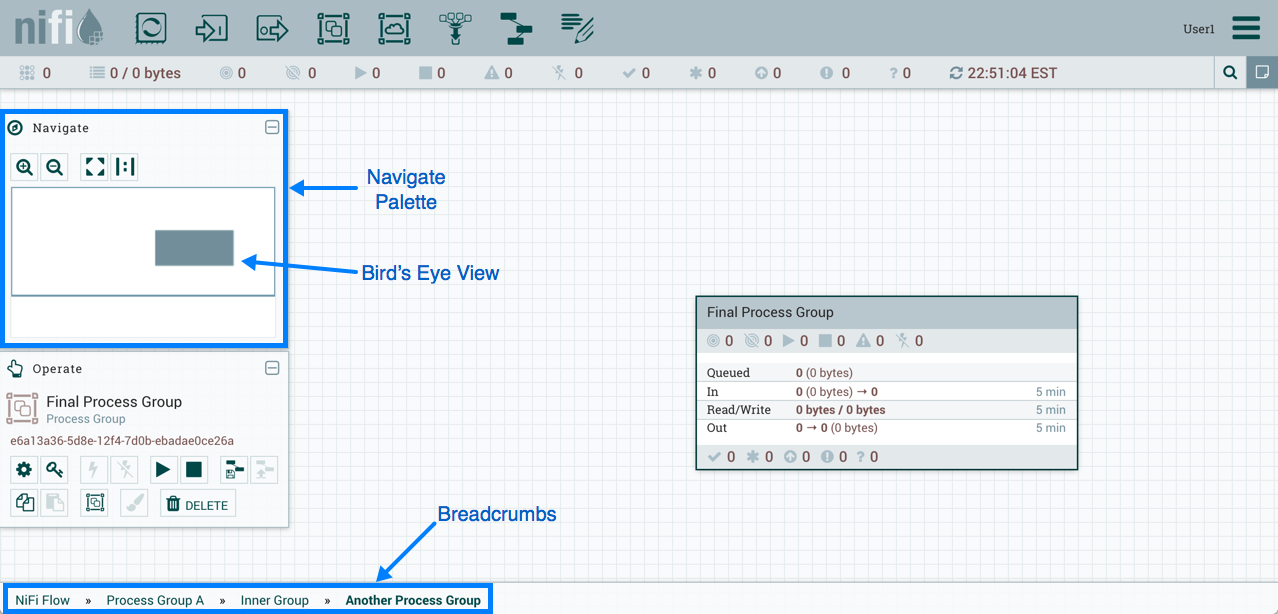

Additionally, the UI has some features that allow you to easily navigate around the canvas. You can use the Navigate Palette to pan around the canvas, and to zoom in and out. The “Birds Eye View” of the dataflow provides a high-level view of the dataflow and allows you to pan across large portions of the dataflow. You can also find breadcrumbs along the bottom of the screen. As you navigate into and out of Process Groups, the breadcrumbs show the depth in the flow, and each Process Group that you entered to reach this depth. Each of the Process Groups listed in the breadcrumbs is a link that will take you back up to that level in the flow.

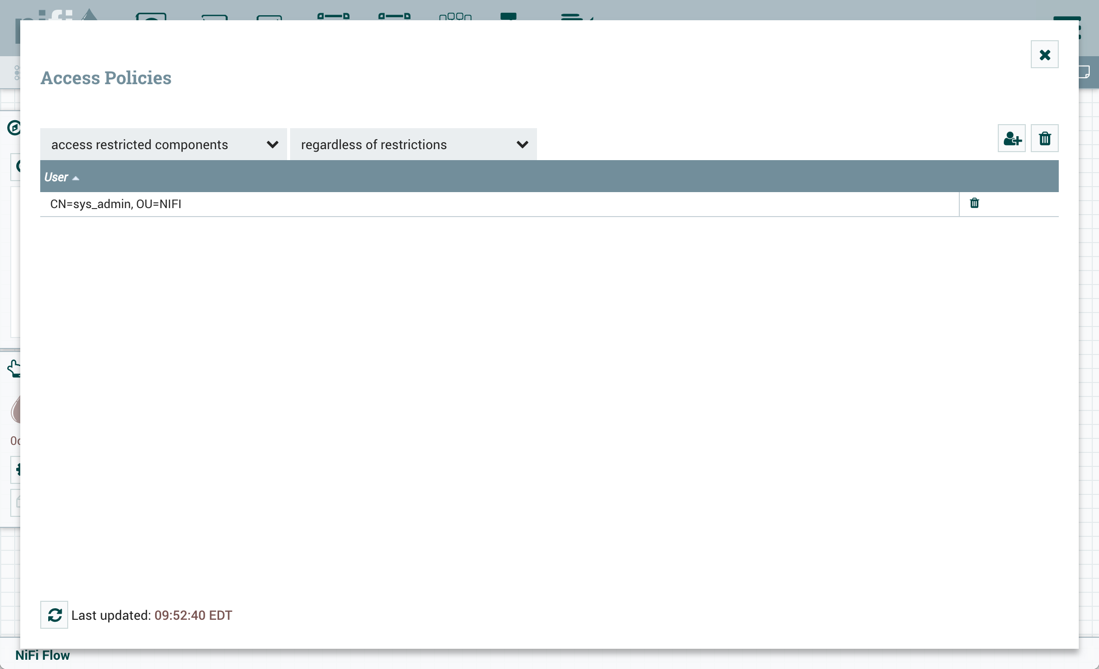

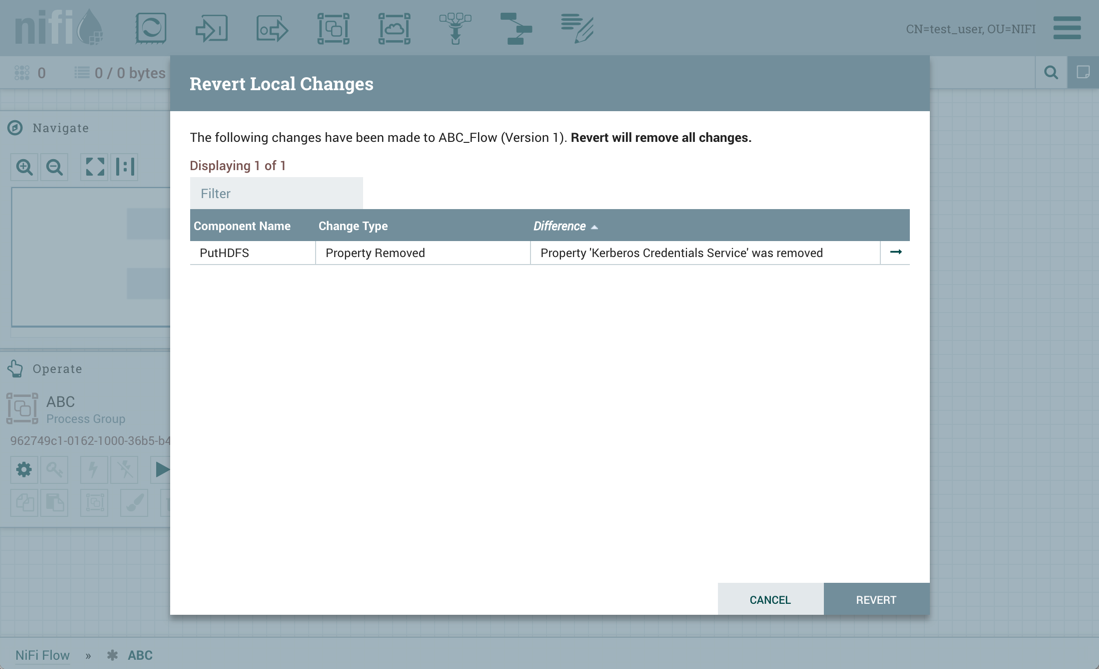

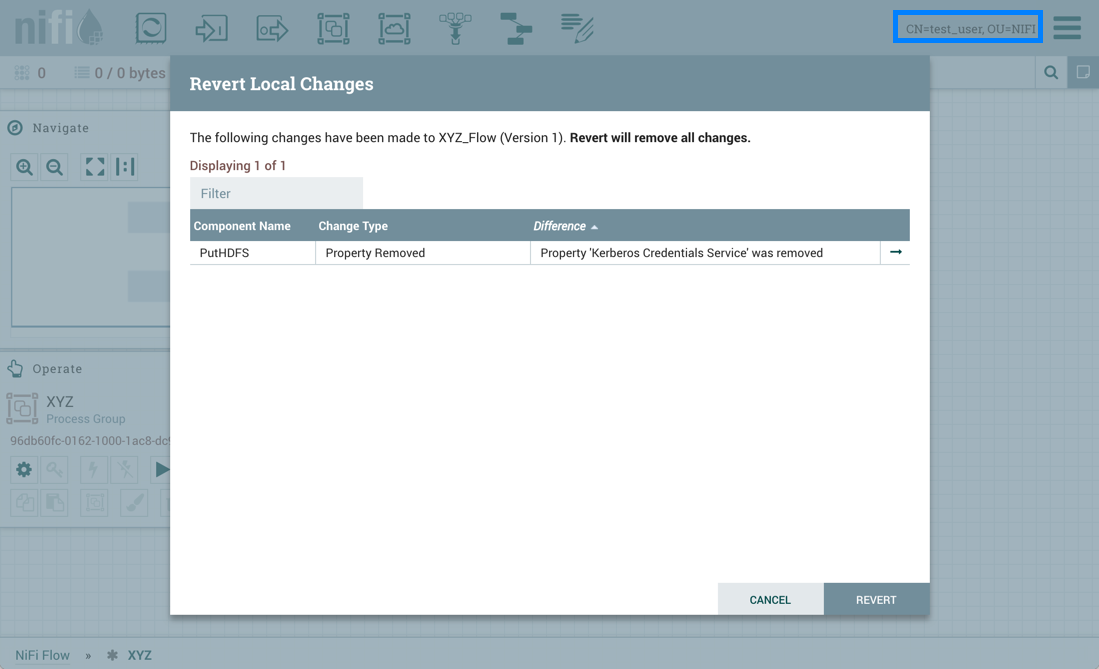

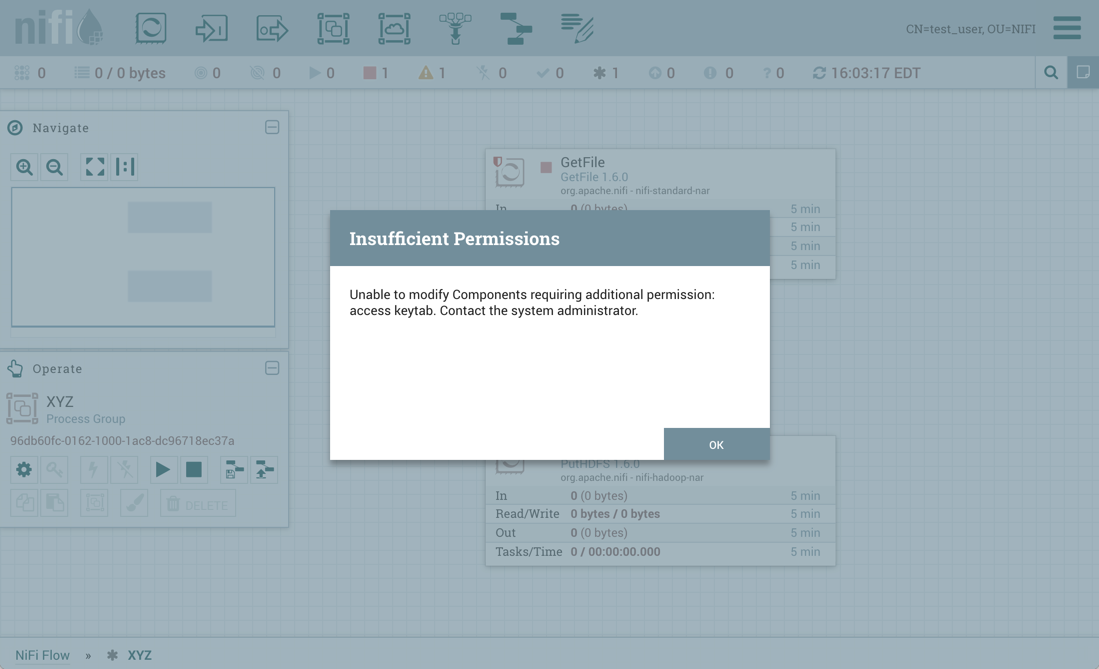

Accessing the UI with Multi-Tenant Authorization

Multi-tenant authorization enables multiple groups of users (tenants) to command, control, and observe different parts of the dataflow, with varying levels of authorization. When an authenticated user attempts to view or modify a NiFi resource, the system checks whether the user has privileges to perform that action. These privileges are defined by policies that you can apply system wide or to individual components. What this means from a Dataflow Manager perspective is that once you have access to the NiFi canvas, a range of functionality is visible and available to you, depending on the privileges assigned to you.

The available global access policies are:

| Policy | Privilege |

|---|---|

view the UI |

Allows users to view the UI |

access the controller |

Allows users to view and modify the controller including Management Controller Services, Reporting Tasks, Flow Analysis Rules, Registry Clients, Parameter Providers and nodes in the cluster |

query provenance |

Allows users to submit a provenance search and request even lineage |

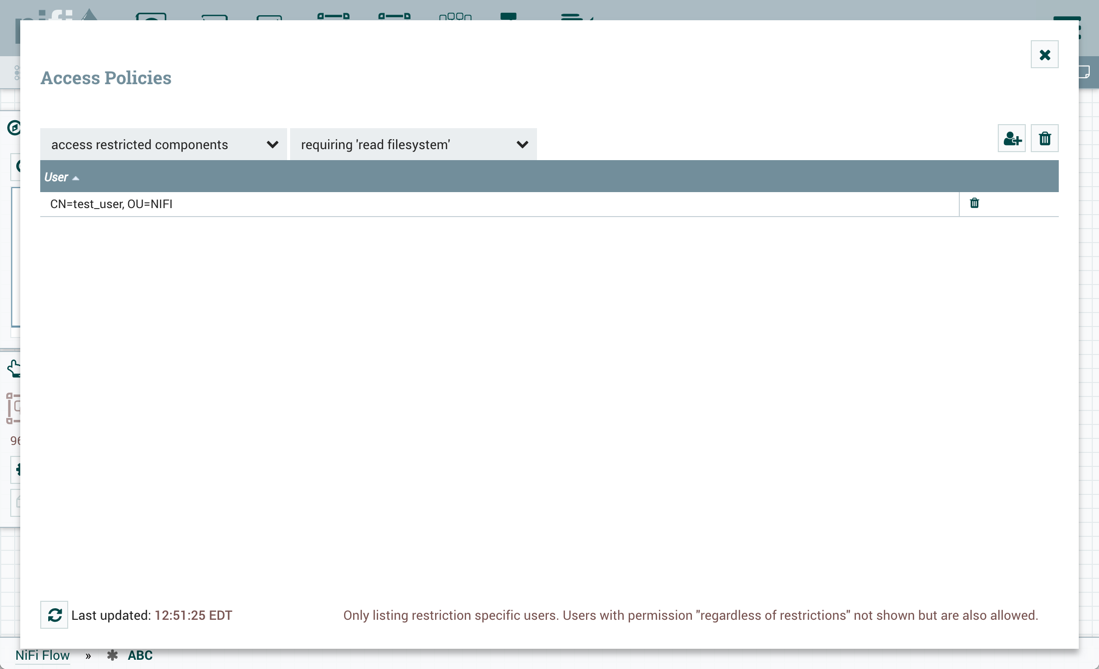

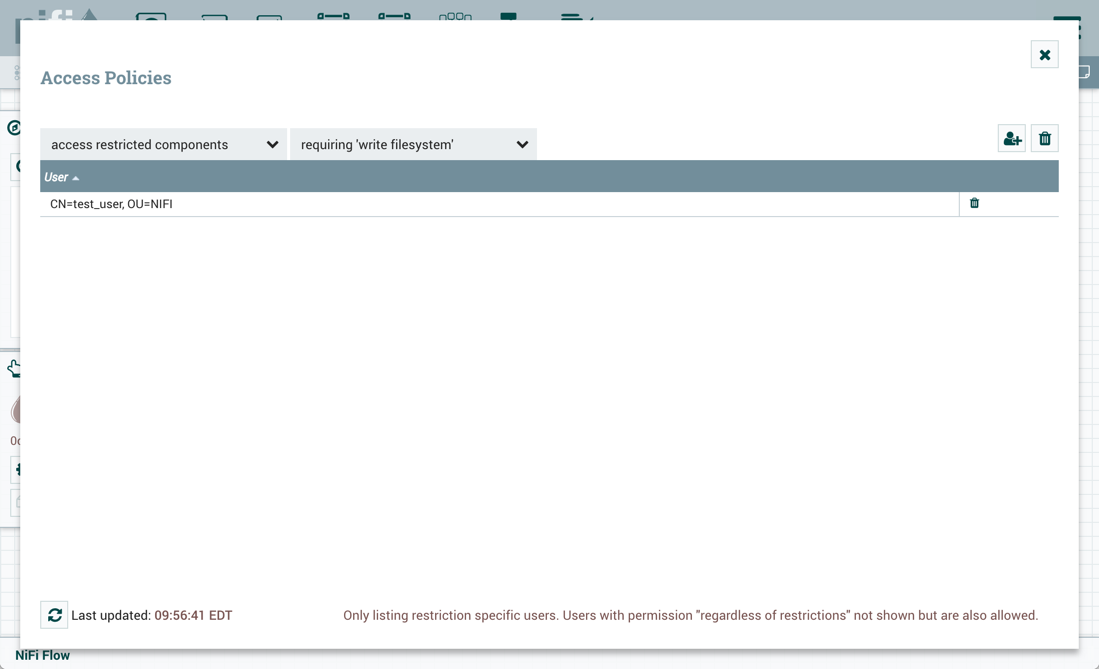

access restricted components |

Allows users to create/modify restricted components assuming other permissions are sufficient. The restricted components may indicate which specific permissions are required. Permissions can be granted for specific restrictions or be granted regardless of restrictions. If permission is granted regardless of restrictions, the user can create/modify all restricted components. |

access all policies |

Allows users to view and modify the policies for all components |

access users/groups |

Allows users to view and modify the users and user groups |

retrieve site-to-site details |

Allows other NiFi instances to retrieve Site-To-Site details |

view system diagnostics |

Allows users to view System Diagnostics |

proxy user requests |

Allows proxy machines to send requests on the behalf of others |

access counters |

Allows users to view and modify counters |

The available component-level access policies are:

| Policy | Privilege |
|---|---|
| view the component | Allows users to view component configuration details |
| modify the component | Allows users to modify component configuration details |
| view provenance | Allows users to view provenance events generated by this component |
| view the data | Allows users to view metadata and content for this component in FlowFile queues in outbound connections and through provenance events |
| modify the data | Allows users to empty FlowFile queues in outbound connections and submit replays through provenance events |
| view the policies | Allows users to view the list of users who can view and modify a component |
| modify the policies | Allows users to modify the list of users who can view and modify a component |
| retrieve data via site-to-site | Allows a port to receive data from NiFi instances |
| send data via site-to-site | Allows a port to send data from NiFi instances |

If you are unable to view or modify a NiFi resource, contact your System Administrator or see Configuring Users and Access Policies in the System Administrator’s Guide for more information.
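The privilege check described in this section can be pictured as a lookup keyed by policy and, for component-level policies, by component. The sketch below is a simplified illustration: the `is_authorized` function, the `grants` structure, the user names, and the component identifier are all hypothetical, and it does not model how NiFi itself stores or evaluates policies.

```python
def is_authorized(user, policy, grants, component=None):
    """Return True if `user` holds `policy`.

    `grants` maps (policy, component) to a set of user names.
    A component of None stands for a system-wide (global) policy.
    """
    allowed = grants.get((policy, component), set())
    return user in allowed


grants = {
    ("view the UI", None): {"alice", "bob"},
    ("modify the component", "processor-1"): {"alice"},
}

print(is_authorized("alice", "modify the component", grants, "processor-1"))  # True
print(is_authorized("bob", "modify the component", grants, "processor-1"))    # False
print(is_authorized("bob", "view the UI", grants))                            # True
```

In this model, a user who can view the UI but lacks a component-level grant would see the canvas without being able to change that component, which matches the behaviour described above.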

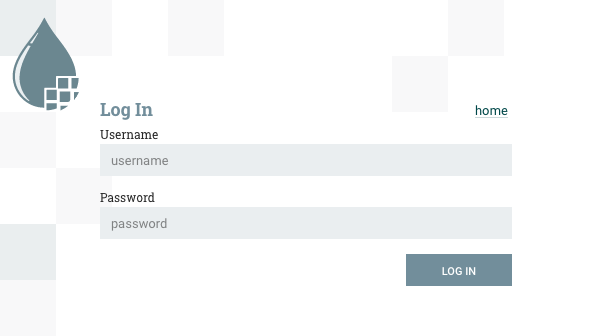

Logging In

If NiFi is configured to run securely, users will be able to request access to the DataFlow. For information on configuring NiFi to run securely, see the System Administrator’s Guide. If NiFi supports anonymous access, users will be given access accordingly and given an option to log in.

Clicking the 'login' link will open the login page. If the user is logging in with their username/password, they will be presented with a form to do so. If NiFi is not configured to support anonymous access and the user is logging in with their username/password, they will be immediately sent to the login form, bypassing the canvas.

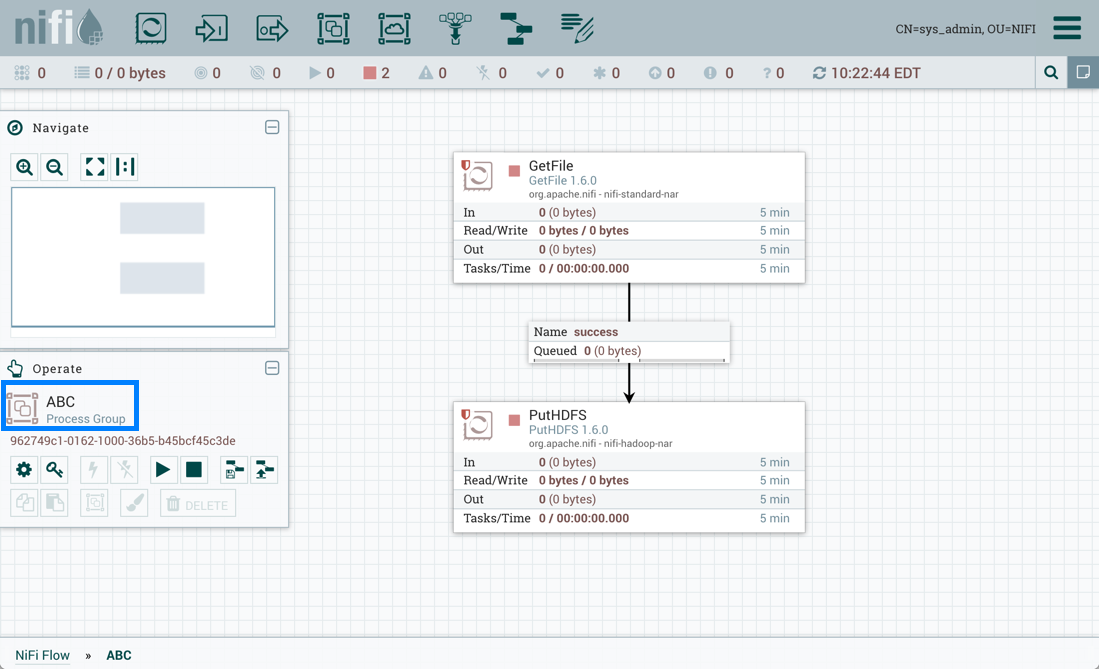

Building a DataFlow

A DFM is able to build an automated dataflow using the NiFi UI. Simply drag components from the toolbar to the canvas, configure the components to meet specific needs, and connect the components together.

Adding Components to the Canvas

The User Interface section above outlined the different segments of the UI and pointed out a Components Toolbar. This section looks at each of the Components in that toolbar:

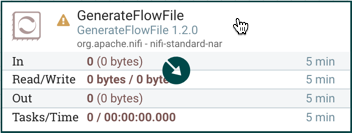

Processor: The Processor is the most commonly used component, as it is responsible for data ingress, egress, routing, and manipulating. There are many different types of Processors. In fact, this is a very common Extension Point in NiFi, meaning that many vendors may implement their own Processors to perform whatever functions are necessary for their use case.

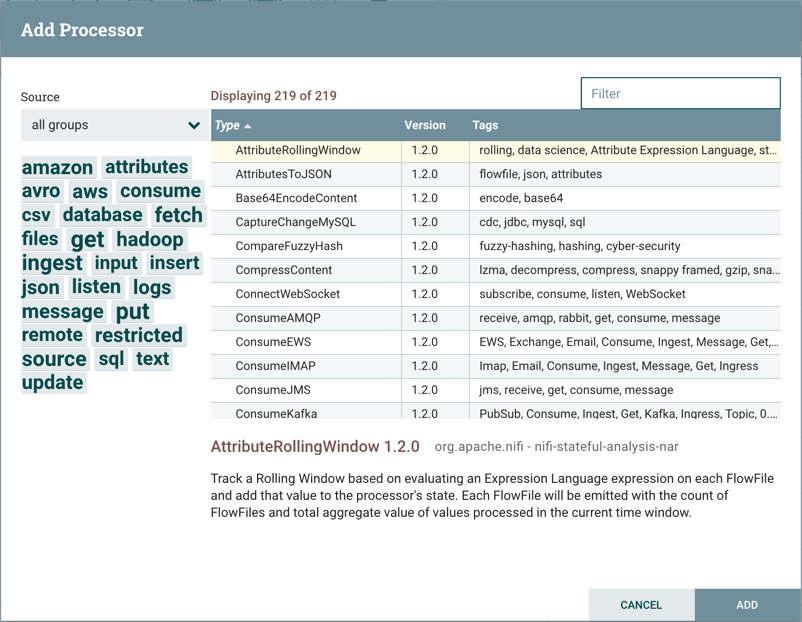

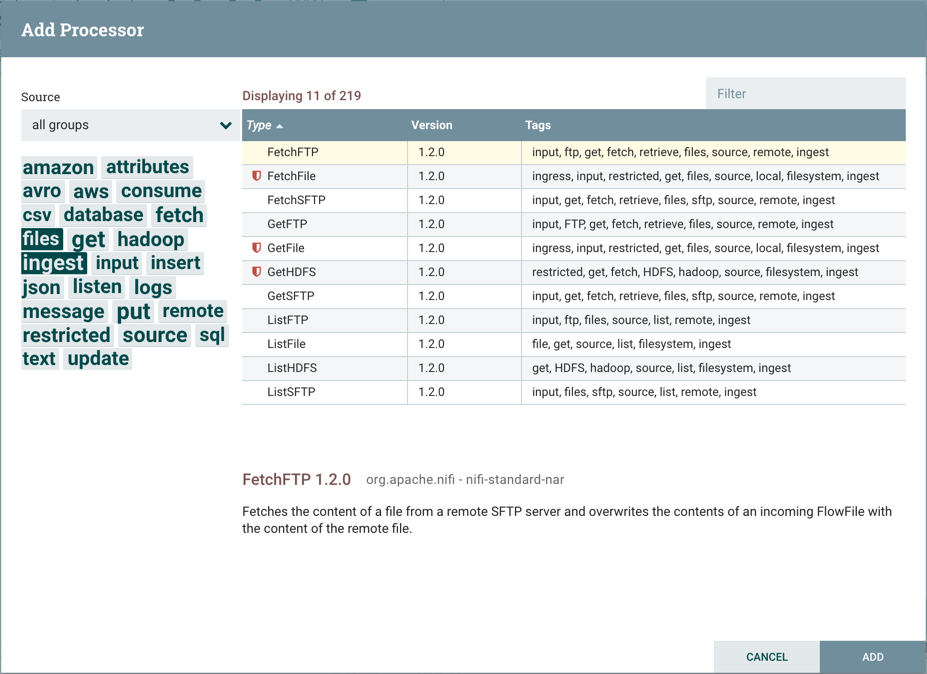

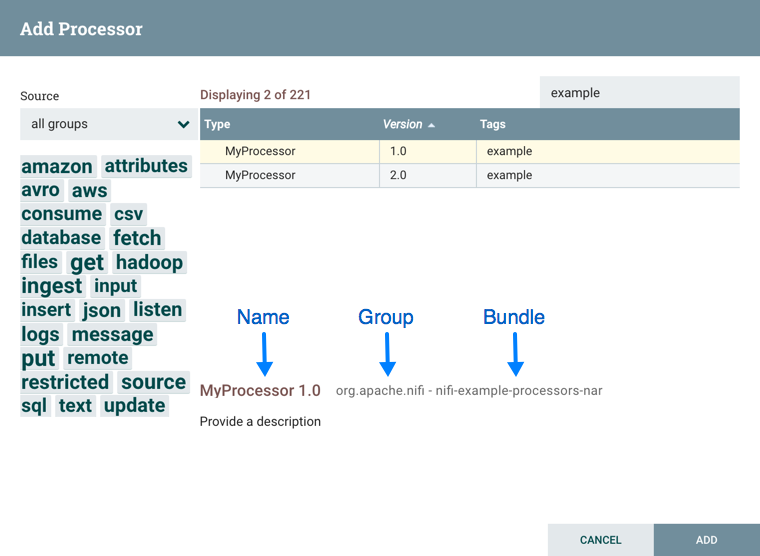

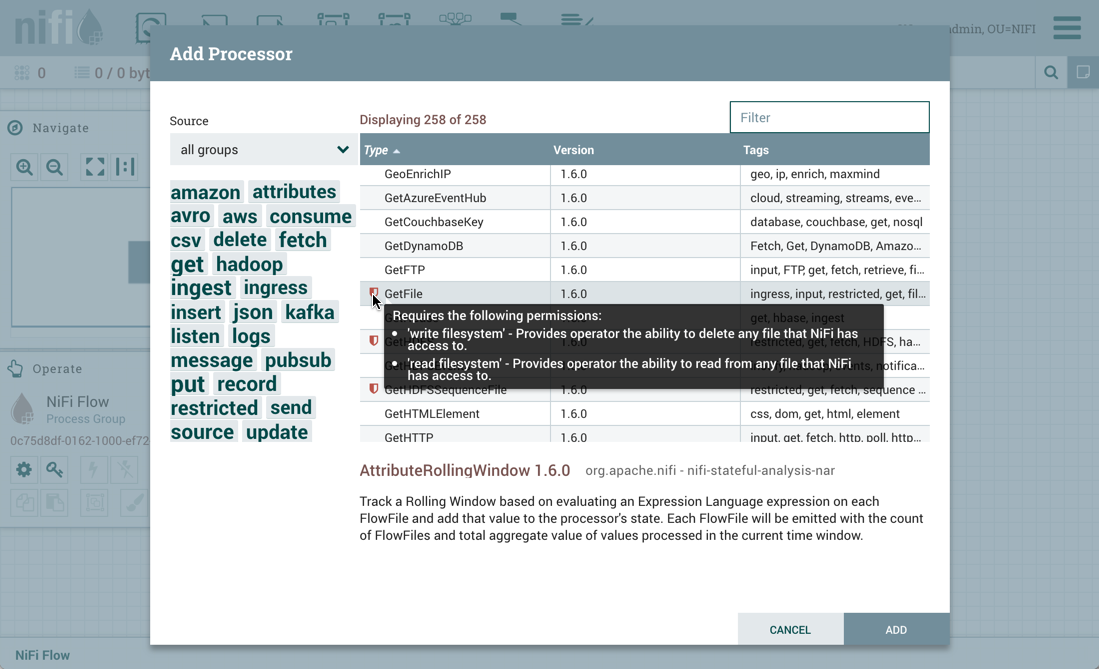

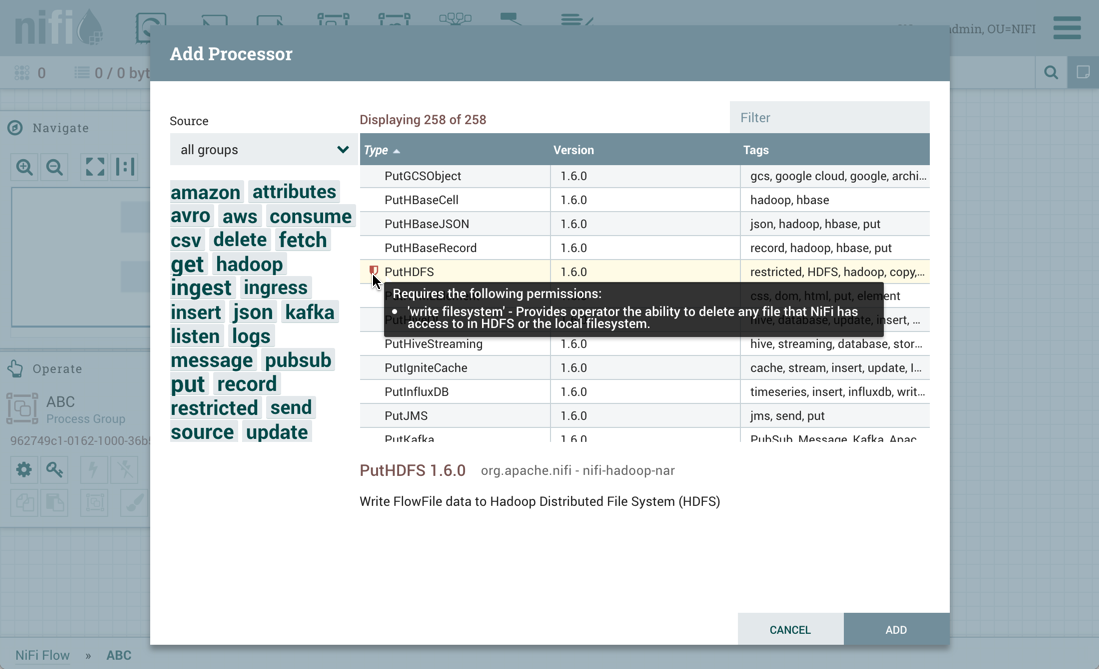

When a Processor is dragged onto the canvas, the user is presented with a dialog to choose which type of Processor to use:


In the top-right corner, the user is able to filter the list based on the Processor Type or the Tags associated with a Processor. Processor developers have the ability to add Tags to their Processors. These tags are used in this dialog for filtering and are displayed on the left-hand side in a Tag Cloud. The more Processors that exist with a particular Tag, the larger the Tag appears in the Tag Cloud. Clicking a Tag in the Cloud will filter the available Processors to only those that contain that Tag. If multiple Tags are selected, only those Processors that contain all of those Tags are shown. For example, if we want to show only those Processors that allow us to ingest files, we can select both the files Tag and the ingest Tag:
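The tag filtering rule (a Processor is shown only if it carries every selected Tag) and the Tag Cloud sizing rule (more Processors with a Tag means a larger Tag) can be sketched as follows. The processor type names and tag sets are hypothetical and were made up for this illustration; they are not taken from NiFi.

```python
from collections import Counter

# Hypothetical processor types and tags, for illustration only.
PROCESSOR_TAGS = {
    "ReadLocalFiles": {"files", "ingest", "local"},
    "FetchRemoteFiles": {"files", "ingest", "remote"},
    "WriteLocalFiles": {"files", "local"},
    "ListenForMessages": {"ingest", "network"},
}


def filter_by_tags(processor_tags, selected):
    """Return the types whose tag set contains every selected tag."""
    wanted = set(selected)
    return sorted(name for name, tags in processor_tags.items() if wanted <= tags)


def tag_weights(processor_tags):
    """Count how many types carry each tag; a Tag Cloud could size tags by this."""
    return Counter(tag for tags in processor_tags.values() for tag in tags)


print(filter_by_tags(PROCESSOR_TAGS, ["files", "ingest"]))
# ['FetchRemoteFiles', 'ReadLocalFiles']
print(tag_weights(PROCESSOR_TAGS)["files"])  # 3
```

Selecting both tags narrows the list to the two types that ingest files, while the type that only writes files and the type that only listens on the network drop out.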

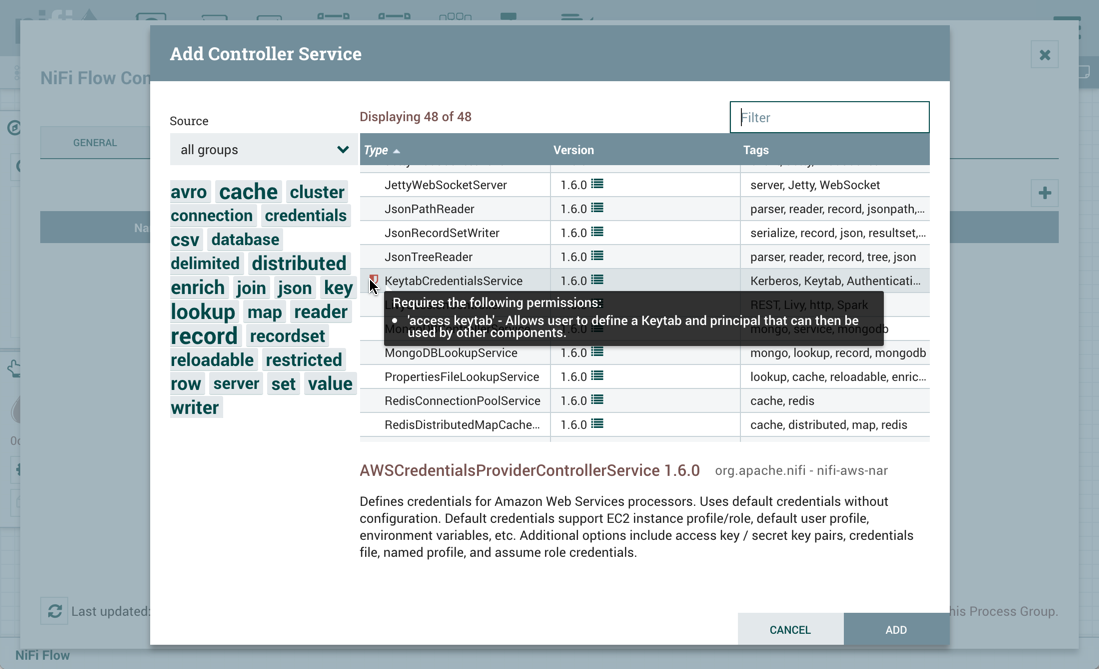

Restricted components will be marked with an icon next to their name. These are components that can be used to execute arbitrary unsanitized code provided by the operator through the NiFi REST API/UI or can be used to obtain or alter data on the NiFi host system using the NiFi OS credentials. These components could be used by an otherwise authorized NiFi user to go beyond the intended use of the application, escalate privilege, or could expose data about the internals of the NiFi process or the host system. All of these capabilities should be considered privileged, and admins should be aware of these capabilities and explicitly enable them for a subset of trusted users. Before a user is allowed to create and modify restricted components they must be granted access. Hovering over the icon will display the specific permissions a restricted component requires. Permissions can be assigned regardless of restrictions. In this case, the user will have access to all restricted components. Alternatively, users can be assigned access to specific restrictions. If the user has been granted access to all restrictions a component requires, they will have access to that component assuming otherwise sufficient permissions. For more information refer to Accessing the UI with Multi-Tenant Authorization and Restricted Components in Versioned Flows.
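The access rule for restricted components reduces to a set comparison, sketched below. The function name, the marker constant, and the restriction names are hypothetical and exist only for this example. The sketch also leaves out the "otherwise sufficient permissions" condition, which would have to be checked separately.

```python
ALL_RESTRICTIONS = "regardless of restrictions"  # marker used only in this sketch


def can_use_restricted(required, granted):
    """Return True when the grants cover every restriction the component requires."""
    if ALL_RESTRICTIONS in granted:
        return True
    return set(required) <= set(granted)


required = {"read filesystem", "write filesystem"}  # hypothetical restriction names

print(can_use_restricted(required, {"read filesystem"}))                      # False
print(can_use_restricted(required, {"read filesystem", "write filesystem"}))  # True
print(can_use_restricted(required, {ALL_RESTRICTIONS}))                       # True
```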

Clicking the "Add" button or double-clicking on a Processor Type will add the selected Processor to the canvas at the location that it was dropped.

Note: For any component added to the canvas, it is possible to select it with the mouse and move it anywhere on the canvas. Also, it is possible to select multiple items at once by either holding down the Shift key and selecting each item or by holding down the Shift key and dragging a selection box around the desired components.

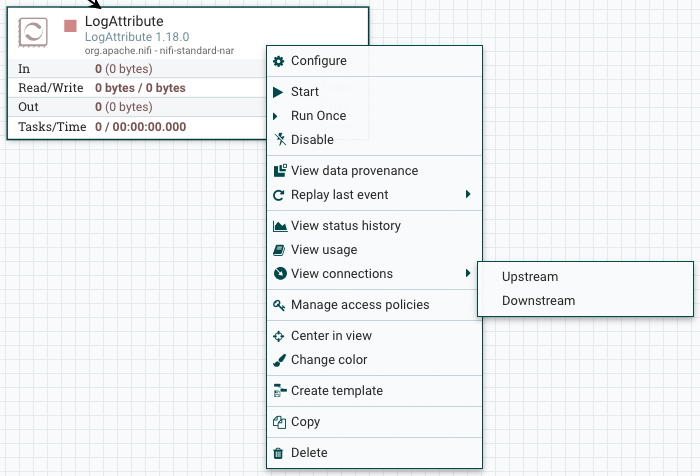

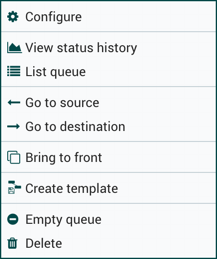

Once you have dragged a Processor onto the canvas, you can interact with it by right-clicking on the Processor and selecting an option from the context menu. The options available to you from the context menu vary, depending on the privileges assigned to you.

While the options available from the context menu vary, the following options are typically available when you have full privileges to work with a Processor:

- Configure: This option allows the user to establish or change the configuration of the Processor (see Configuring a Processor).

  Note: For Processors, Ports, Remote Process Groups, Connections and Labels, it is possible to open the configuration dialog by double-clicking on the desired component.

- Start or Stop: This option allows the user to start or stop a Processor; the option will be either Start or Stop, depending on the current state of the Processor.
- Run Once: This option allows the user to run a selected Processor exactly once. If the Processor is prevented from executing (e.g., there are no incoming FlowFiles or the outgoing connection has back pressure applied) the Processor won’t get triggered. Execution settings apply (i.e., Primary Node and All Nodes settings will result in running the Processor only once on the Primary Node or one time on each of the nodes, respectively). Works only with Timer driven and CRON driven scheduling strategies.
- Enable or Disable: This option allows the user to enable or disable a Processor; the option will be either Enable or Disable, depending on the current state of the Processor.
- View data provenance: This option displays the NiFi Data Provenance table, with information about data provenance events for the FlowFiles routed through that Processor (see Data Provenance).
- Replay last event: This option will replay the last Provenance event, effectively requeuing the last FlowFile that was processed by the Processor (see Replaying a FlowFile).
- View status history: This option opens a graphical representation of the Processor’s statistical information over time.
- View usage: This option takes the user to the Processor’s usage documentation.
- View connections→Upstream: This option allows the user to see and "jump to" upstream connections that are coming into the Processor. This is particularly useful when processors connect into and out of other Process Groups.
- View connections→Downstream: This option allows the user to see and "jump to" downstream connections that are going out of the Processor. This is particularly useful when processors connect into and out of other Process Groups.
- Center in view: This option centers the view of the canvas on the given Processor.
- Change color: This option allows the user to change the color of the Processor, which can make the visual management of large flows easier.
- Copy: This option places a copy of the selected Processor on the clipboard, so that it may be pasted elsewhere on the canvas by right-clicking on the canvas and selecting Paste. The Copy/Paste actions also may be done using the keystrokes Ctrl-C (Command-C) and Ctrl-V (Command-V).
- Delete: This option allows the DFM to delete a Processor from the canvas.

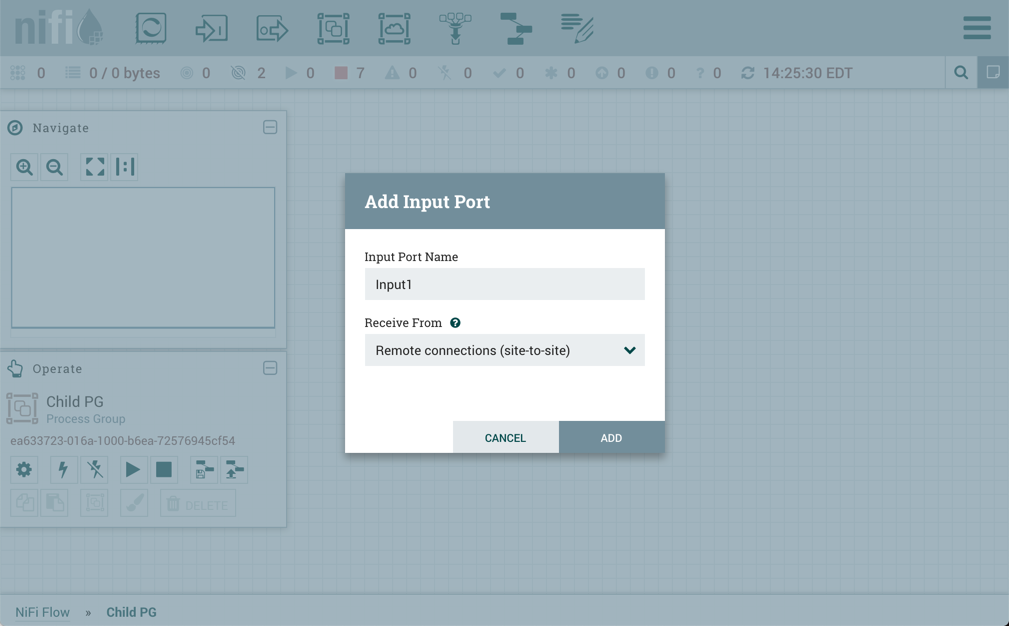

Input Port: Input Ports provide a mechanism for transferring data into a Process Group. When an Input Port is dragged onto the canvas, the DFM is prompted to name the Port. All Ports within a Process Group must have unique names.


All components exist only within a Process Group. When a user initially navigates to the NiFi page, the user is placed in the Root Process Group. If the Input Port is dragged onto the Root Process Group, the Input Port provides a mechanism to receive data from remote instances of NiFi via Site-to-Site. In this case, the Input Port can be configured to restrict access to appropriate users, if NiFi is configured to run securely. For information on configuring NiFi to run securely, see the System Administrator’s Guide.

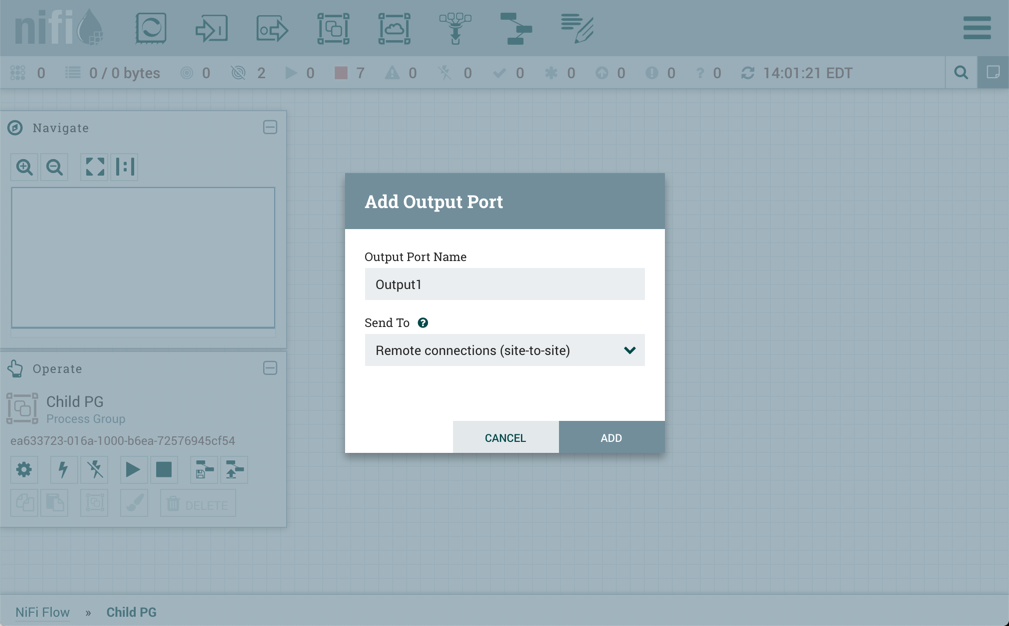

Output Port: Output Ports provide a mechanism for transferring data from a Process Group to destinations outside of the Process Group. When an Output Port is dragged onto the canvas, the DFM is prompted to name the Port. All Ports within a Process Group must have unique names.


If the Output Port is dragged onto the Root Process Group, the Output Port provides a mechanism for sending data to remote instances of NiFi via Site-to-Site. In this case, the Port acts as a queue. As remote instances of NiFi pull data from the port, that data is removed from the queues of the incoming Connections. If NiFi is configured to run securely, the Output Port can be configured to restrict access to appropriate users. For information on configuring NiFi to run securely, see the System Administrator’s Guide.

Process Group: Process Groups can be used to logically group a set of components so that the dataflow is easier to understand and maintain. Additionally, Process Groups are used as a mechanism for grouping together components in such a way that they operate together as a more cohesive unit, for example by configuring the Execution Engine or the FlowFile Outbound Policy.


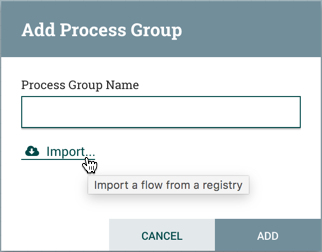

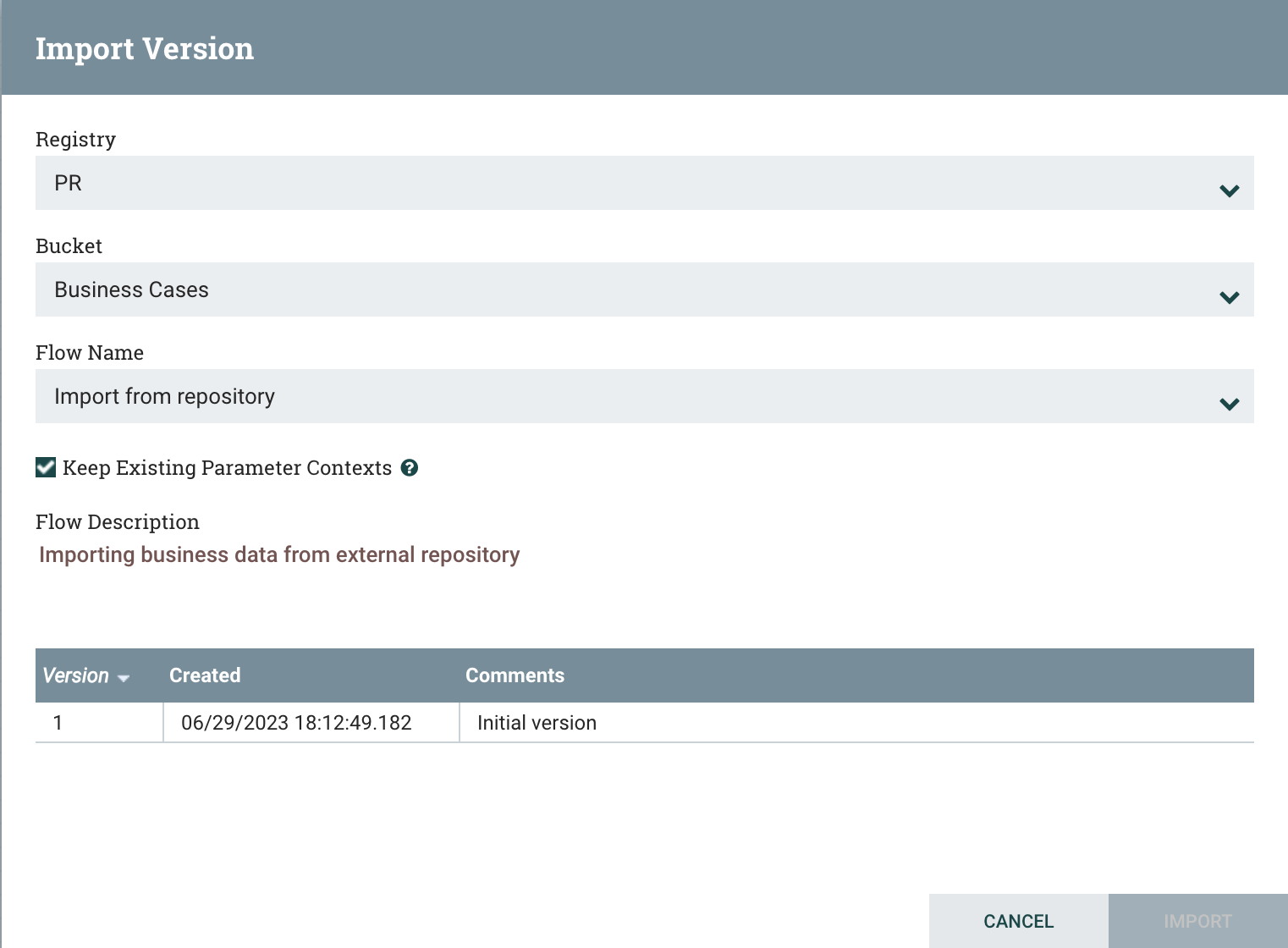

When a Process Group is dragged onto the canvas, the DFM is prompted to name the Process Group. The Process Group will then be nested within that parent group.

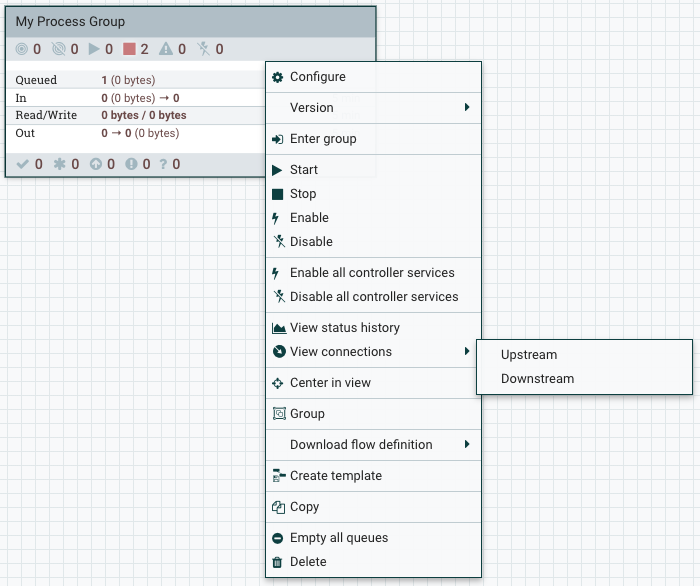

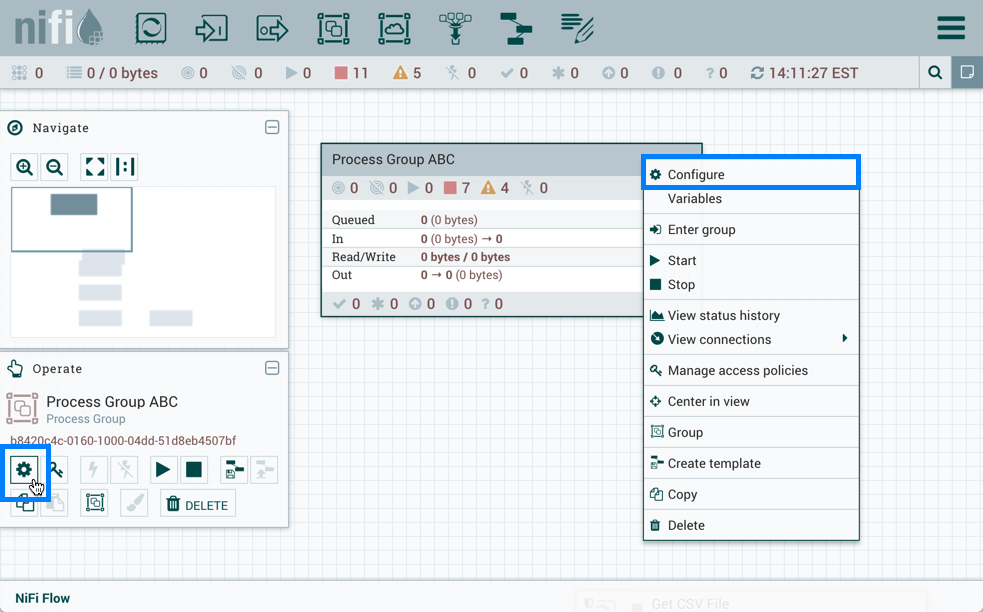

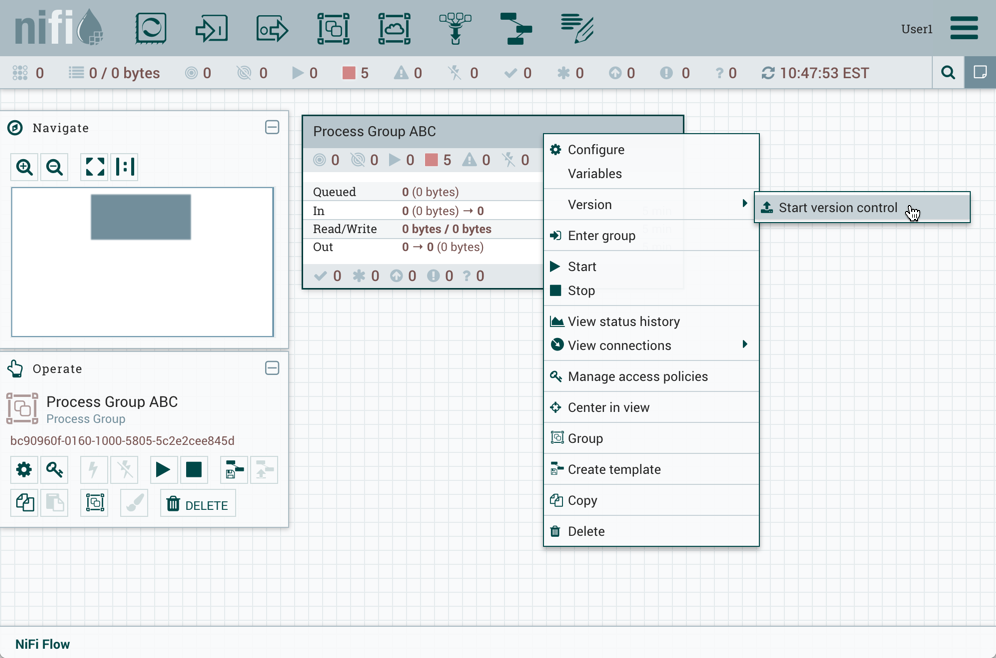

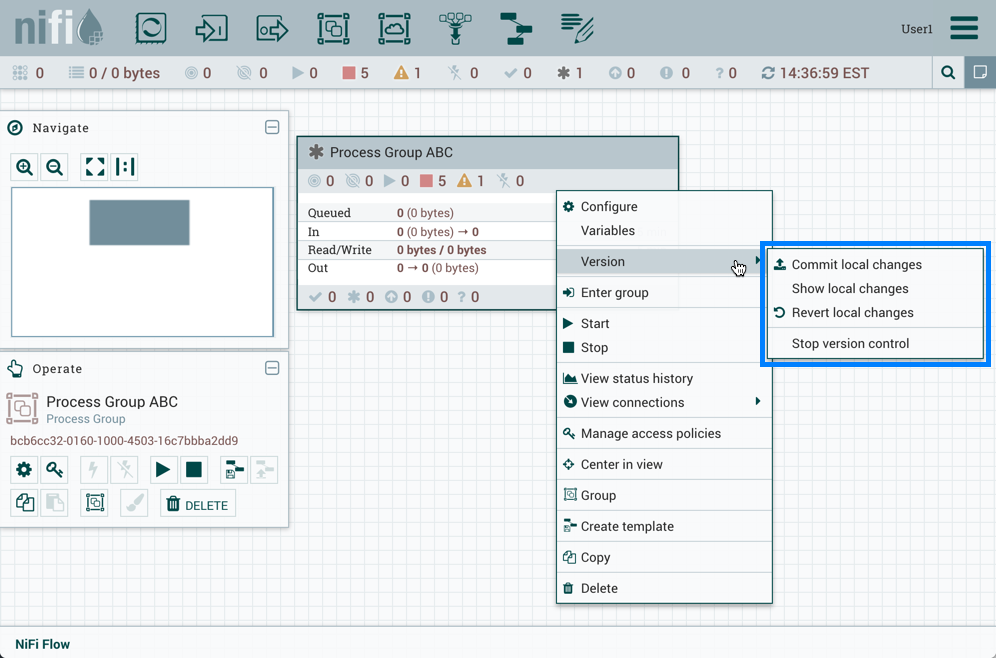

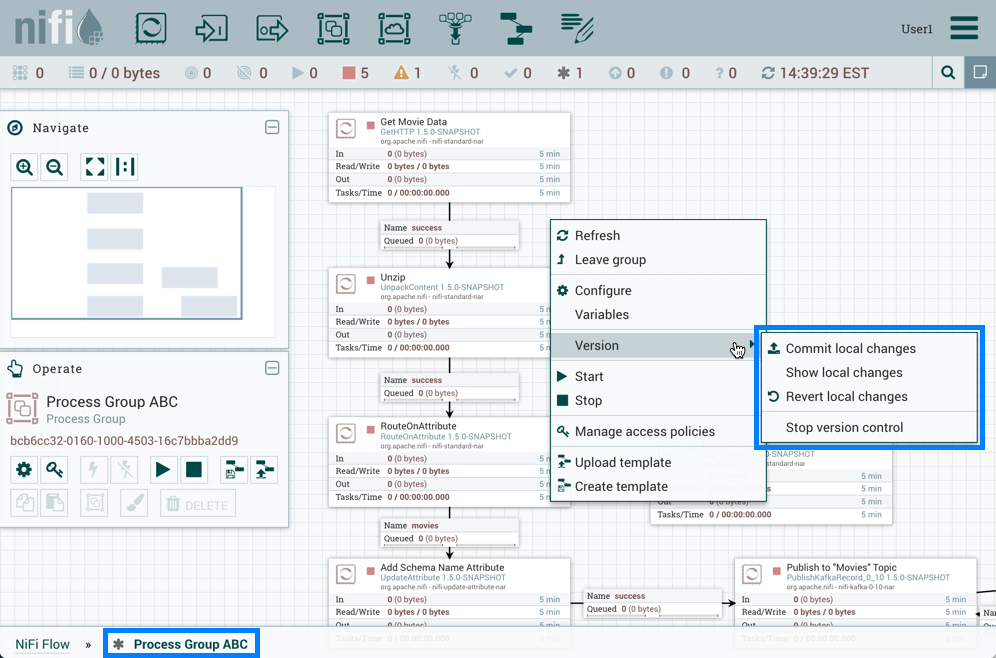

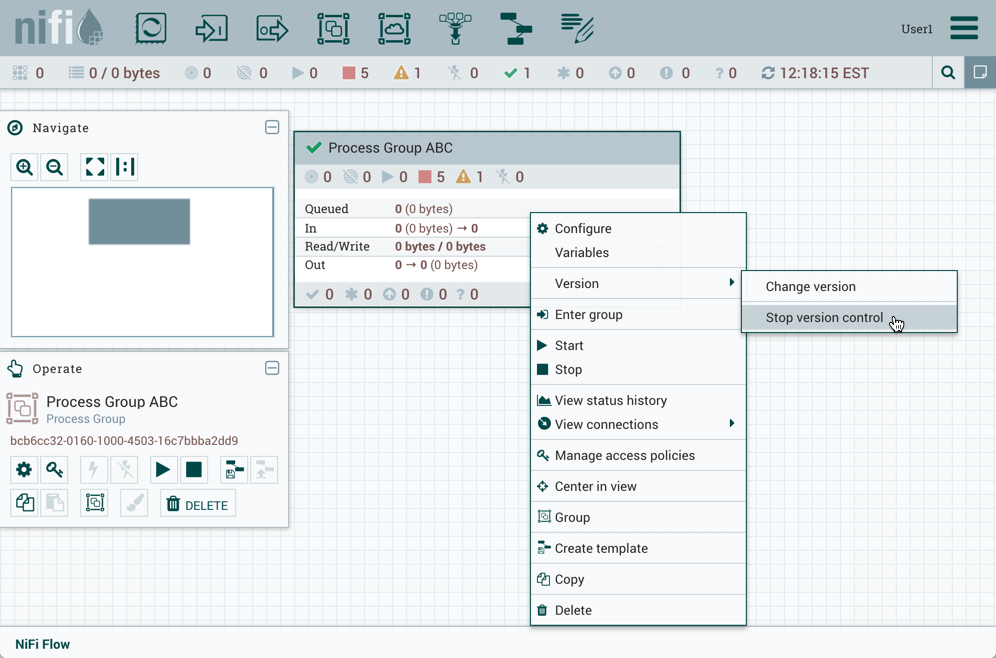

Once you have dragged a Process Group onto the canvas, you can interact with it by right-clicking on the Process Group and selecting an option from the context menu. The options available to you from the context menu vary, depending on the privileges assigned to you.

While the options available from the context menu vary, the following options are typically available when you have full privileges to work with the Process Group:

- Configure: This option allows the user to establish or change the configuration of the Process Group.
- Enter group: This option allows the user to enter the Process Group.

  Note: It is also possible to double-click on the Process Group to enter it.

- Start: This option allows the user to start a Process Group.
- Stop: This option allows the user to stop a Process Group.
- Enable: This option allows the user to enable all Processors in the Process Group.
- Disable: This option allows the user to disable all Processors in the Process Group.
- View status history: This option opens a graphical representation of the Process Group’s statistical information over time.
- View connections→Upstream: This option allows the user to see and "jump to" upstream connections that are coming into the Process Group.
- View connections→Downstream: This option allows the user to see and "jump to" downstream connections that are going out of the Process Group.
- Center in view: This option centers the view of the canvas on the given Process Group.
- Group: This option allows the user to create a new Process Group that contains the selected Process Group and any other components selected on the canvas.
- Download flow definition: This option allows the user to download the flow definition of the process group as a JSON file. The file can be used as a backup or imported into a NiFi Registry using the NiFi CLI. There are two options when downloading a flow definition:
  - Without external services: Controller services referenced by the selected process group but outside its scope (e.g., services in a parent group) will not be included in the flow definition as services.
  - With external services: Controller services referenced by the selected process group but outside its scope (e.g., services in a parent group) will be included in the flow definition.
- Copy: This option places a copy of the selected Process Group on the clipboard, so that it may be pasted elsewhere on the canvas by right-clicking on the canvas and selecting Paste. The Copy/Paste actions also may be done using the keystrokes Ctrl-C (Command-C) and Ctrl-V (Command-V).
- Empty all queues: This option allows the user to empty all queues in the selected Process Group. All FlowFiles from all connections waiting at the time of the request will be removed.
- Delete: This option allows the DFM to delete a Process Group.

(Note: If "Download flow definition" is selected for a versioned process group, there is no versioning information in the download. In other words, the resulting contents of the JSON file are the same whether the process group is versioned or not.)

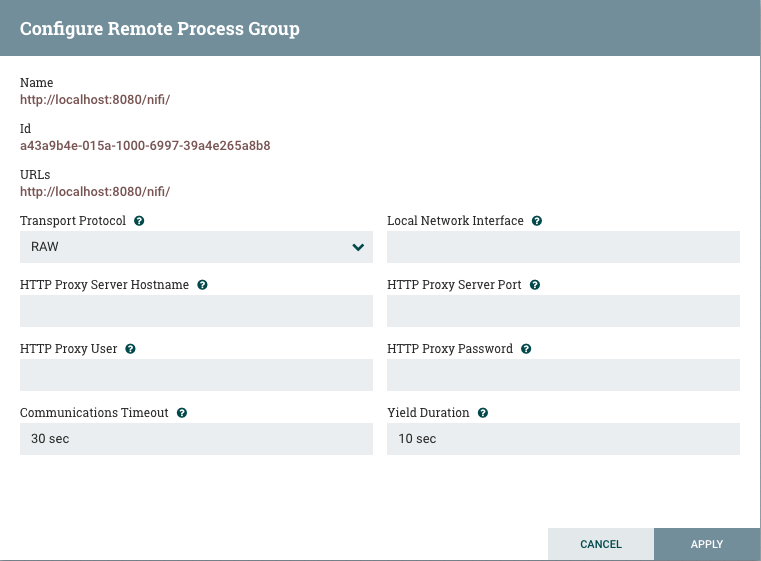

Remote Process Group: Remote Process Groups appear and behave similarly to Process Groups. However, the Remote Process Group (RPG) references a remote instance of NiFi. When an RPG is dragged onto the canvas, rather than being prompted for a name, the DFM is prompted for the URL of the remote NiFi instance. If the remote NiFi is a clustered instance, adding two or more cluster node URLs is recommended so that an initial connection can be made even if one of the nodes is unavailable. Multiple URLs can be specified in a comma-separated format.

When data is transferred to a clustered instance of NiFi via an RPG, the RPG will first connect to the remote instance whose URL is configured to determine which nodes are in the cluster and how busy each node is. This information is then used to load balance the data that is pushed to each node. The remote instances are then interrogated periodically to determine information about any nodes that are dropped from or added to the cluster and to recalculate the load balancing based on each node’s load. For more information, see the section on Site-to-Site.

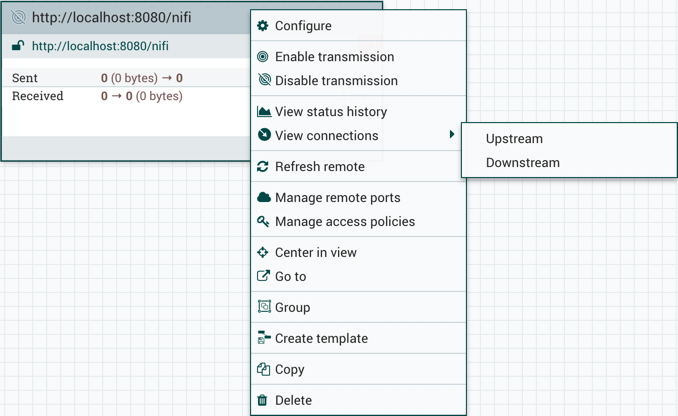

Once you have dragged a Remote Process Group onto the canvas, you can interact with it by right-clicking on the Remote Process Group and selecting an option from the context menu. The options available to you from the menu vary, depending on the privileges assigned to you.

The following options are typically available when you have full privileges to work with the Remote Process Group:

- Configure: This option allows the user to establish or change the configuration of the Remote Process Group.
- Enable transmission: Makes the transmission of data between NiFi instances active (see Remote Process Group Transmission).
- Disable transmission: Disables the transmission of data between NiFi instances.
- View status history: This option opens a graphical representation of the Remote Process Group’s statistical information over time.
- View connections→Upstream: This option allows the user to see and "jump to" upstream connections that are coming into the Remote Process Group.
- View connections→Downstream: This option allows the user to see and "jump to" downstream connections that are going out of the Remote Process Group.
- Refresh remote: This option refreshes the view of the status of the remote NiFi instance.

-

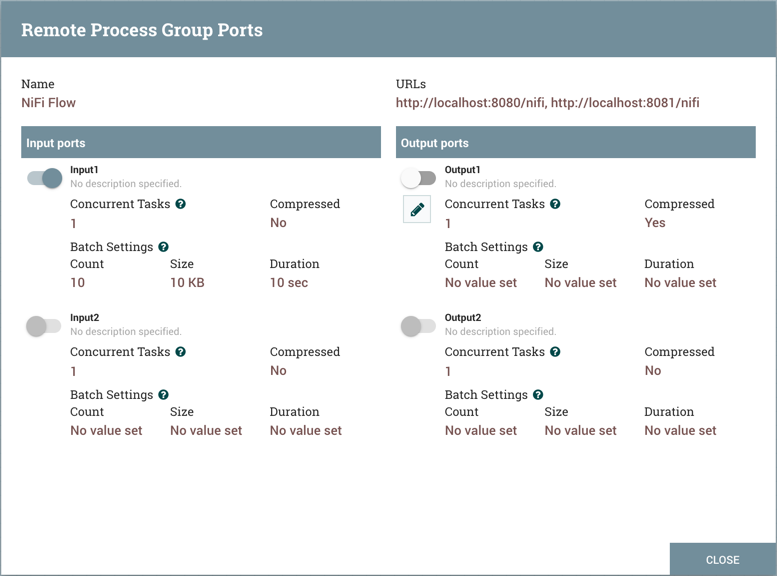

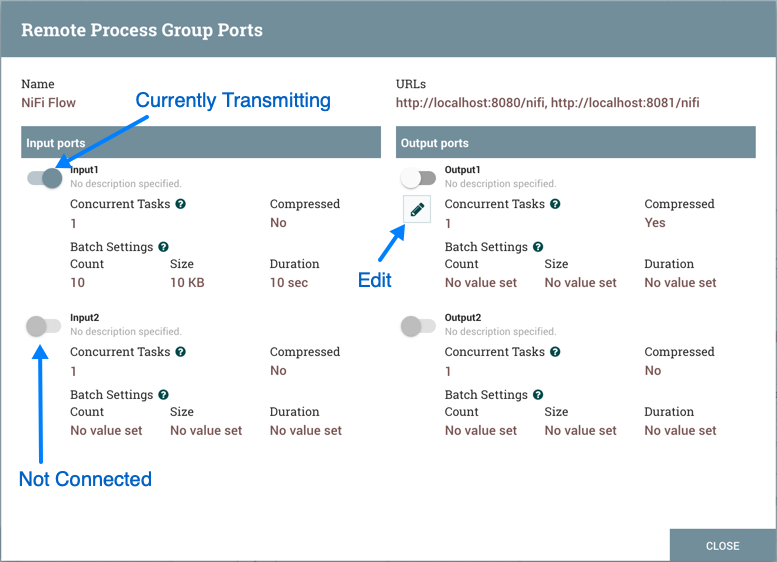

Manage remote ports: This option allows the user to see input ports and/or output ports that exist on the remote instance of NiFi that the Remote Process Group is connected to. Note that if the Site-to-Site configuration is secure, only the ports that the connecting NiFi has been given accessed to will be visible.

- Center in view: This option centers the view of the canvas on the given Remote Process Group.
- Go to: This option opens a view of the remote NiFi instance in a new tab of the browser. Note that if the Site-to-Site configuration is secure, the user must have access to the remote NiFi instance in order to view it.
- Group: This option allows the user to create a new Process Group that contains the selected Remote Process Group and any other components selected on the canvas.
- Copy: This option places a copy of the selected Remote Process Group on the clipboard, so that it may be pasted elsewhere on the canvas by right-clicking on the canvas and selecting Paste. The Copy/Paste actions also may be done using the keystrokes Ctrl-C (Command-C) and Ctrl-V (Command-V).
- Delete: This option allows the DFM to delete a Remote Process Group from the canvas.

Funnel: Funnels are used to combine the data from many Connections into a single Connection. This has two advantages. First, if many Connections are created with the same destination, the canvas can become cluttered if those Connections have to span a large space. By funneling these Connections into a single Connection, that single Connection can then be drawn to span that large space instead. Secondly, Connections can be configured with FlowFile Prioritizers. Data from several Connections can be funneled into a single Connection, providing the ability to prioritize all of the data on that one Connection, rather than prioritizing the data on each Connection independently.

Label: Labels are used to provide documentation to parts of a dataflow. When a Label is dropped onto the canvas, it is created with a default size. The Label can then be resized by dragging the handle in the bottom-right corner. The Label has no text when initially created. The text of the Label can be added by right-clicking on the Label and choosing Configure.

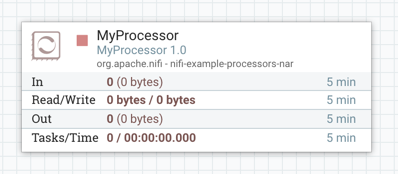

Component Versions

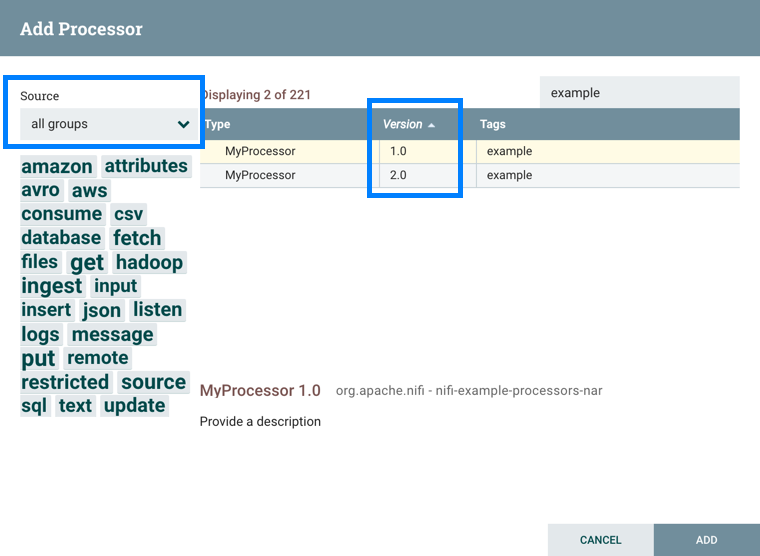

You have access to information about the version of your Processors, Controller Services, Reporting Tasks and Flow Analysis Rules. This is especially useful when you are working within a clustered environment with multiple NiFi instances running different versions of a component or if you have upgraded to a newer version of a processor. The Add Processor, Add Controller Service, Add Reporting Task and Add Flow Analysis Rule dialogs include a column identifying the component version, as well as the name of the component, the organization or group that created the component, and the NAR bundle that contains the component.

Each component displayed on the canvas also contains this information.

Sorting and Filtering Components

When you are adding a component, you can sort on version number or filter based on originating source.

To sort based on version, click the version column to display in ascending or descending version order.

To filter based on source group, click the source drop-down in the upper left of your Add Component dialog, and select the group you want to view.

Changing Component Versions

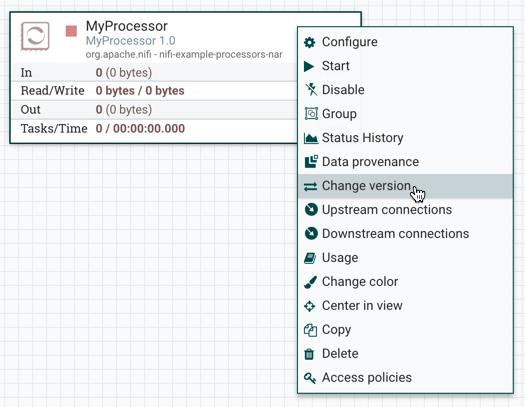

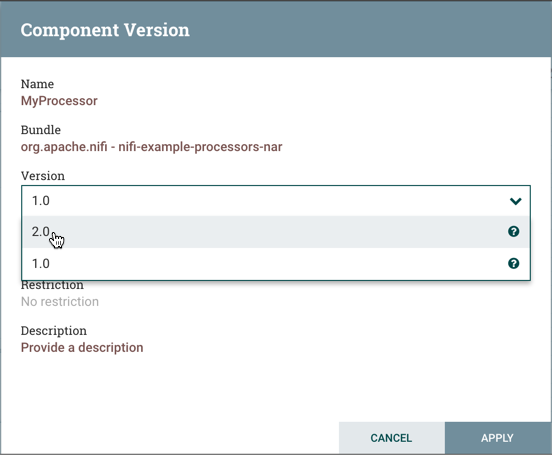

To change a component version, perform the following steps.

- Right-click the component on the canvas to display configuration options.
- Select Change version.
- In the Component Version dialog, select the version you want to run from the Version drop-down menu.

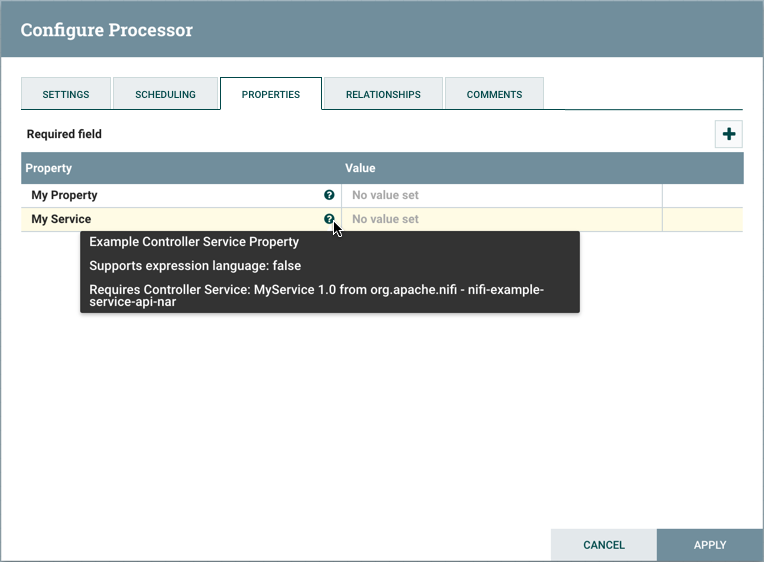

Understanding Version Dependencies

When you are configuring a component, you can also view information about version dependencies.

- Right-click your component and select Configure to display the Configure dialog for your component.
- Click the Properties tab.
- Click the information icon to view any version dependency information.

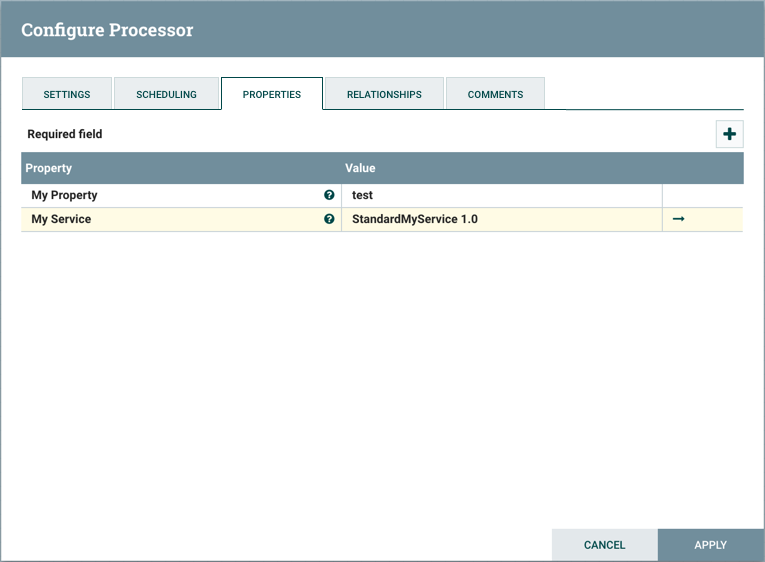

In the following example, MyProcessor version 1.0 is configured properly with the controller service StandardMyService version 1.0:

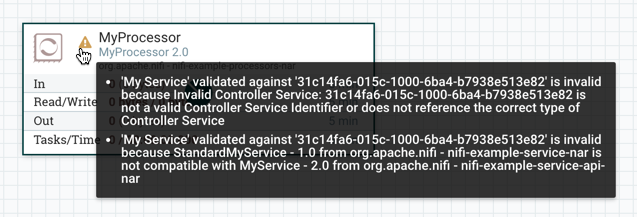

If the version of MyProcessor is changed to an incompatible version (MyProcessor 2.0), validation errors will be displayed on the processor:

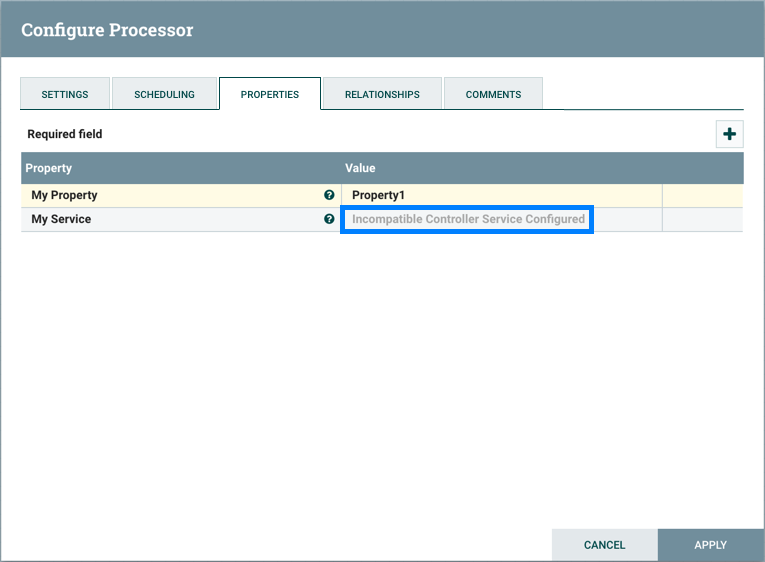

and an error message will be displayed in the processor’s controller service configuration since the service is no longer valid:

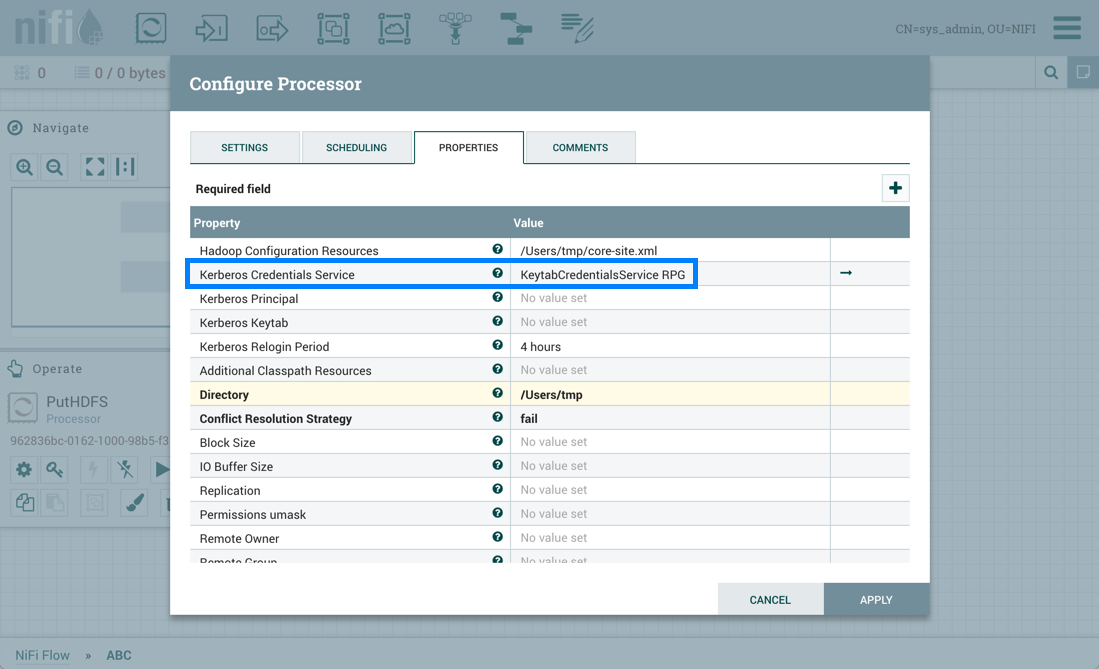

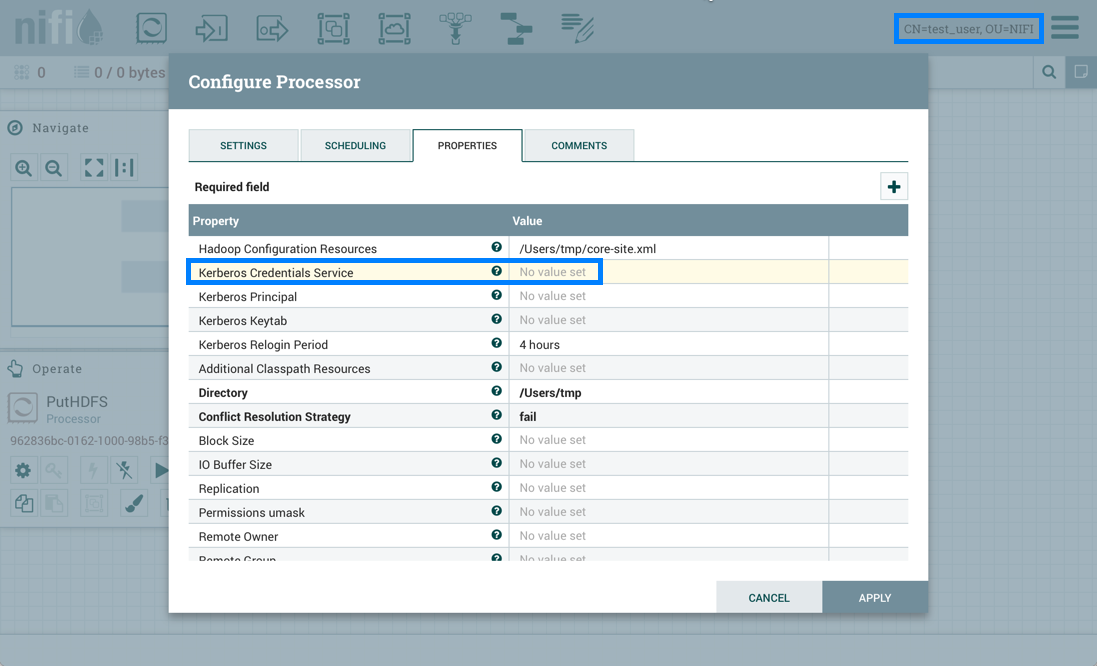

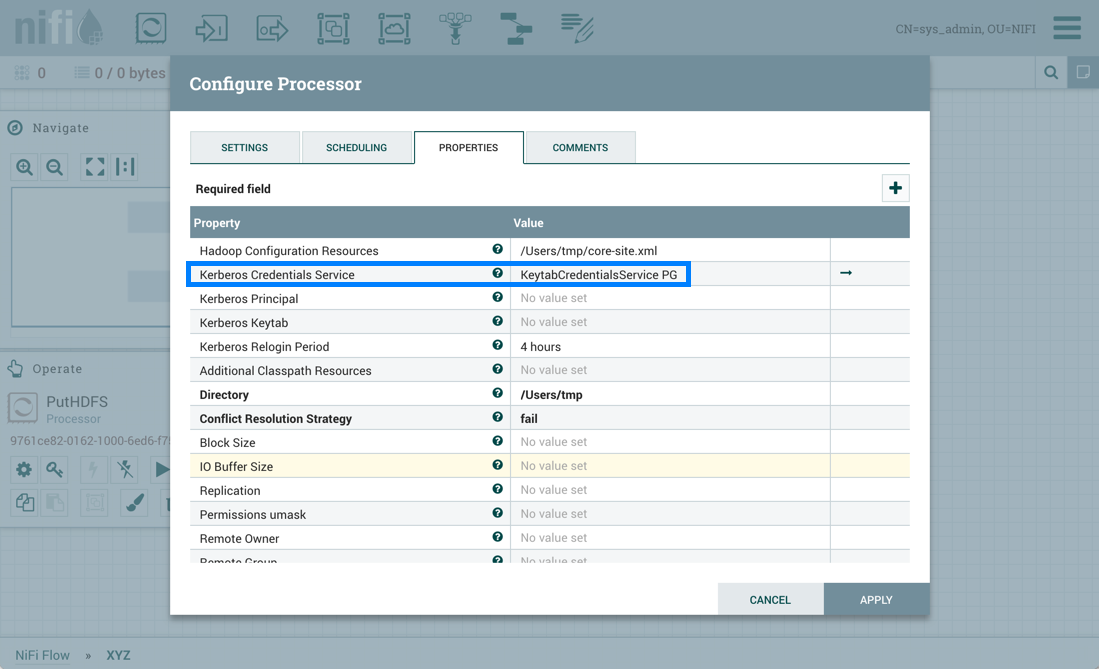

Configuring a Processor

To configure a processor, right-click on the Processor and select the Configure option from the context menu. Alternatively, just double-click on the Processor. The configuration dialog is opened with four different tabs, each of which is discussed below. Once you have finished configuring the Processor, you can apply the changes by clicking "Apply" or cancel all changes by clicking "Cancel".

Note that after a Processor has been started, the context menu shown for the Processor no longer has a Configure option but rather has a View Configuration option. Processor configuration cannot be changed while the Processor is running. You must first stop the Processor and wait for all of its active tasks to complete before configuring the Processor again.

Note that certain control characters are not supported and will be automatically filtered out when entered. The following characters and any unpaired Unicode surrogate codepoints will not be retained in any configuration:

[#x0], [#x1], [#x2], [#x3], [#x4], [#x5], [#x6], [#x7], [#x8], [#xB], [#xC], [#xE], [#xF], [#x10], [#x11], [#x12], [#x13], [#x14], [#x15], [#x16], [#x17], [#x18], [#x19], [#x1A], [#x1B], [#x1C], [#x1D], [#x1E], [#x1F], [#xFFFE], [#xFFFF]
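The filtering rule above can be illustrated with a short Python sketch. It is not NiFi's implementation; the function name `strip_unsupported_characters` and the helper `is_surrogate` are hypothetical, and the code simply applies the character list given above.

```python
# Hypothetical illustration of the filtering rule described above; not NiFi code.

# Code points listed above: #x0-#x8, #xB, #xC, #xE-#x1F, #xFFFE, #xFFFF.
FILTERED_CODE_POINTS = (
    set(range(0x00, 0x09))
    | {0x0B, 0x0C}
    | set(range(0x0E, 0x20))
    | {0xFFFE, 0xFFFF}
)


def is_surrogate(code_point: int) -> bool:
    # In a Python str, a surrogate code point is always unpaired,
    # because a valid pair is stored as one supplementary character.
    return 0xD800 <= code_point <= 0xDFFF


def strip_unsupported_characters(value: str) -> str:
    """Return value without the characters that would not be retained."""
    return "".join(
        ch for ch in value
        if ord(ch) not in FILTERED_CODE_POINTS and not is_surrogate(ord(ch))
    )


cleaned = strip_unsupported_characters("name\x00with\x1bcontrol\tchars\n")
print(repr(cleaned))  # 'namewithcontrol\tchars\n'
```

Tab (#x9), line feed (#xA), and carriage return (#xD) are not in the list above, so the sketch keeps them.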

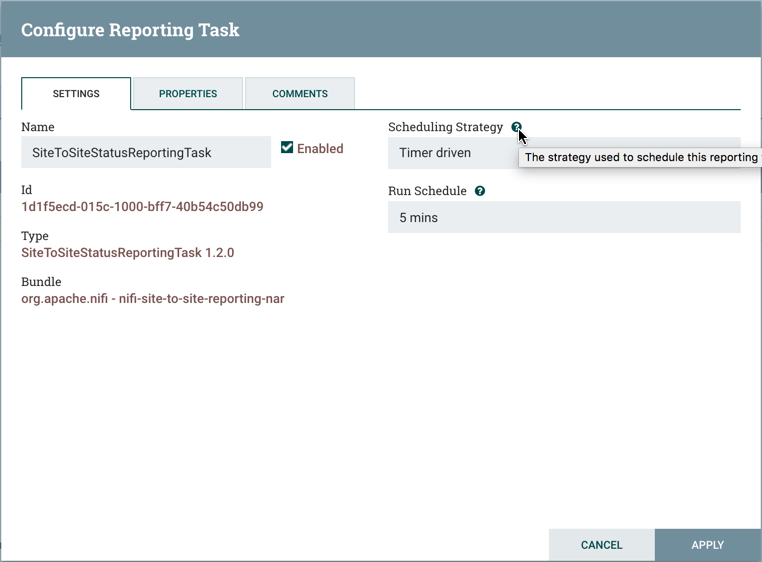

Settings Tab

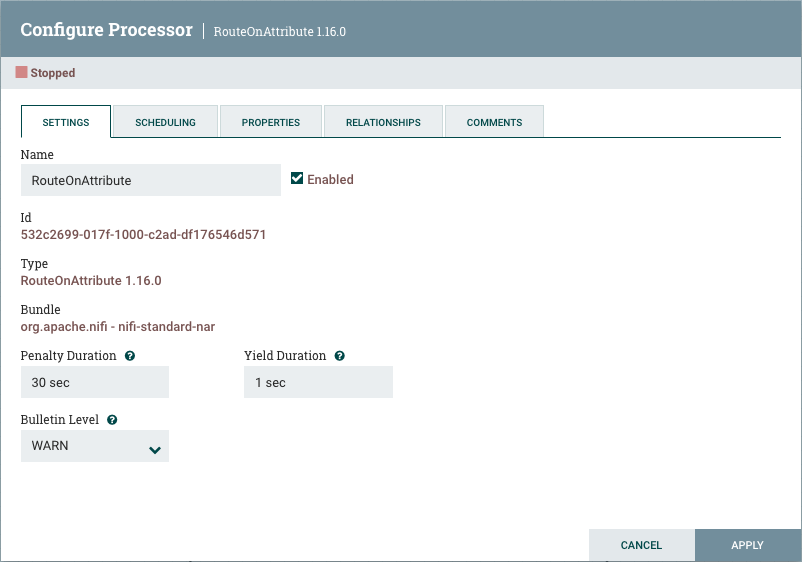

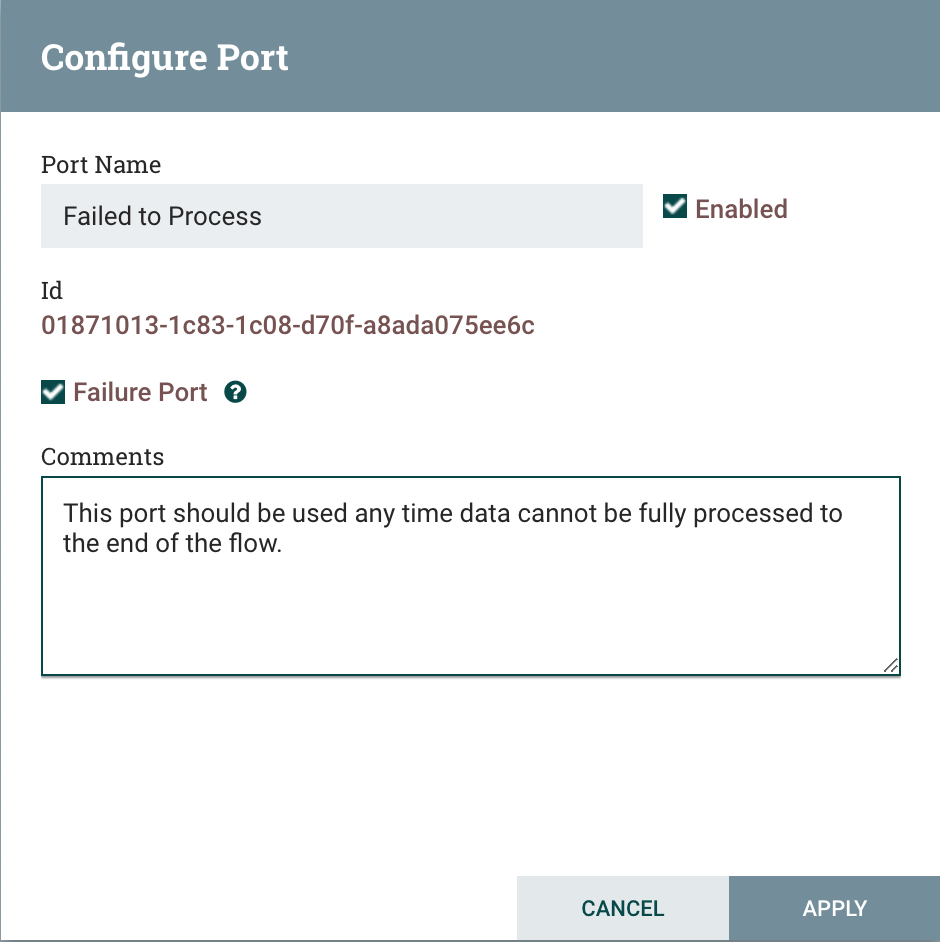

The first tab in the Processor Configuration dialog is the Settings tab:

This tab contains several different configuration items. First, it allows the DFM to change the name of the Processor. The name of a Processor by default is the same as the Processor type. Next to the Processor Name is a checkbox, indicating whether the Processor is Enabled. When a Processor is added to the canvas, it is enabled. If the Processor is disabled, it cannot be started. The disabled state is used to indicate that when a group of Processors is started, such as when a DFM starts an entire Process Group, this (disabled) Processor should be excluded.

Below the Name configuration, the Processor’s unique identifier is displayed along with the Processor’s type and NAR bundle. These values cannot be modified.

Next are two dialogues for configuring 'Penalty Duration' and 'Yield Duration'. During the normal course of processing a piece of data (a FlowFile), an event may occur that indicates that the data cannot be processed at this time but the data may be processable at a later time. When this occurs, the Processor may choose to Penalize the FlowFile. This will prevent the FlowFile from being Processed for some period of time. For example, if the Processor is to push the data to a remote service, but the remote service already has a file with the same name as the filename that the Processor is specifying, the Processor may penalize the FlowFile. The 'Penalty Duration' allows the DFM to specify how long the FlowFile should be penalized. The default value is 30 seconds.

Similarly, the Processor may determine that some situation exists such that the Processor can no longer make any progress, regardless of the data that it is processing. For example, if a Processor is to push data to a remote service and that service is not responding, the Processor cannot make any progress. As a result, the Processor should 'yield', which will prevent the Processor from being scheduled to run for some period of time. That period of time is specified by setting the 'Yield Duration'. The default value is 1 second.

The last configurable option on the left-hand side of the Settings tab is the Bulletin level. Whenever the Processor writes to its log, the Processor also will generate a Bulletin. This setting indicates the lowest level of Bulletin that should be shown in the User Interface. By default, the Bulletin level is set to WARN, which means it will display all warning and error-level bulletins.

Scheduling Tab

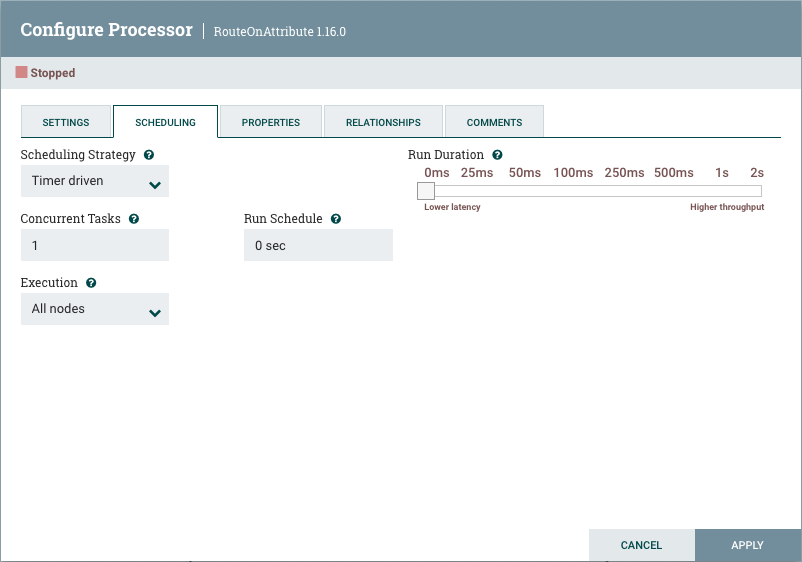

The second tab in the Processor Configuration dialog is the Scheduling Tab:

Scheduling Strategy

The first configuration option is the Scheduling Strategy. There are three possible options for scheduling components:

Timer driven: This is the default mode. The Processor will be scheduled to run on a regular interval. The interval at which the Processor is run is defined by the 'Run Schedule' option (see below).

CRON driven: When using the CRON driven scheduling mode, the Processor is scheduled to run periodically, similar to the Timer driven scheduling mode. However, the CRON driven mode provides significantly more flexibility at the expense of increasing the complexity of the configuration. The CRON driven scheduling value is a string of six required fields and one optional field, each separated by a space. These fields are:

| Field | Valid values |
|---|---|
| Seconds | 0-59 |
| Minutes | 0-59 |
| Hours | 0-23 |
| Day of Month | 1-31 |
| Month | 1-12 or JAN-DEC |
| Day of Week | 0-7 or SUN-SAT (0 or 7 is Sunday) |

You typically specify values in one of the following ways:

- Number: Specify one or more valid values. You can enter more than one value using a comma-separated list.
- Range: Specify a range using the <number>-<number> syntax.
- Increment: Specify an increment using <start value>/<increment> syntax. For example, in the Minutes field, 0/15 indicates the minutes 0, 15, 30, and 45.

You should also be aware of several valid special characters:

- `*`: Indicates that all values are valid for that field.
- `?`: Indicates that no specific value is specified. This special character is valid in the Day of Month and Day of Week fields.
- `L`: You can append L to one of the Day of Week values to specify the last occurrence of this day in the month. For example, using the numbering in the table above (0 or 7 is Sunday), 1L indicates the last Monday of the month.

For example:

- The string `0 0 13 * * *` indicates that you want to schedule the processor to run at 1:00 PM every day.
- The string `0 20 14 ? * MON-FRI` indicates that you want to schedule the processor to run at 2:20 PM every Monday through Friday.
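To make the Number, Range, and Increment syntaxes concrete, the following Python sketch expands a single numeric field into the values it matches. It is an illustration under simplifying assumptions, not NiFi's CRON parser: the function name `expand_field` is hypothetical, and names such as JAN or MON and the special characters `?` and `L` are not handled.

```python
from typing import List


def expand_field(expression: str, minimum: int, maximum: int) -> List[int]:
    """Expand one numeric CRON field into the sorted values it matches."""
    values = set()
    for part in expression.split(","):            # comma-separated list
        if part == "*":                           # all values are valid
            values.update(range(minimum, maximum + 1))
        elif "/" in part:                         # <start value>/<increment>
            start_text, step_text = part.split("/")
            start = minimum if start_text == "*" else int(start_text)
            values.update(range(start, maximum + 1, int(step_text)))
        elif "-" in part:                         # <number>-<number>
            low, high = (int(x) for x in part.split("-"))
            values.update(range(low, high + 1))
        else:                                     # a single number
            values.add(int(part))
    out_of_range = [v for v in values if not minimum <= v <= maximum]
    if out_of_range:
        raise ValueError(f"values out of range {minimum}-{maximum}: {out_of_range}")
    return sorted(values)


print(expand_field("0/15", 0, 59))   # Minutes field: [0, 15, 30, 45]
print(expand_field("0,30", 0, 59))   # Minutes field: [0, 30]
print(expand_field("9-11", 0, 23))   # Hours field: [9, 10, 11]
```

The first call reproduces the 0/15 example given for the Minutes field.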

Concurrent Tasks

Next, the Scheduling tab provides a configuration option named 'Concurrent Tasks'. This controls how many threads the Processor will use. Said a different way, this controls how many FlowFiles should be processed by this Processor at the same time. Increasing this value will typically allow the Processor to handle more data in the same amount of time. However, it does this by using system resources that then are not usable by other Processors. This essentially provides a relative weighting of Processors — it controls how much of the system’s resources should be allocated to this Processor instead of other Processors. This field is available for most Processors. There are, however, some types of Processors that can only be scheduled with a single Concurrent task.

Run Schedule

The 'Run Schedule' dictates how often the Processor should be scheduled to run. The valid values for this field depend on the selected Scheduling Strategy (see above). When using the Timer driven Scheduling Strategy, this value is a time duration specified by a number followed by a time unit. For example, 1 second or 5 mins. The default value of 0 sec means that the Processor should run as often as possible as long as it has data to process. This is true for any time duration of 0, regardless of the time unit (e.g., 0 sec, 0 mins, 0 days). For an explanation of values that are applicable for the CRON driven Scheduling Strategy, see the description of the CRON driven Scheduling Strategy itself.

Execution

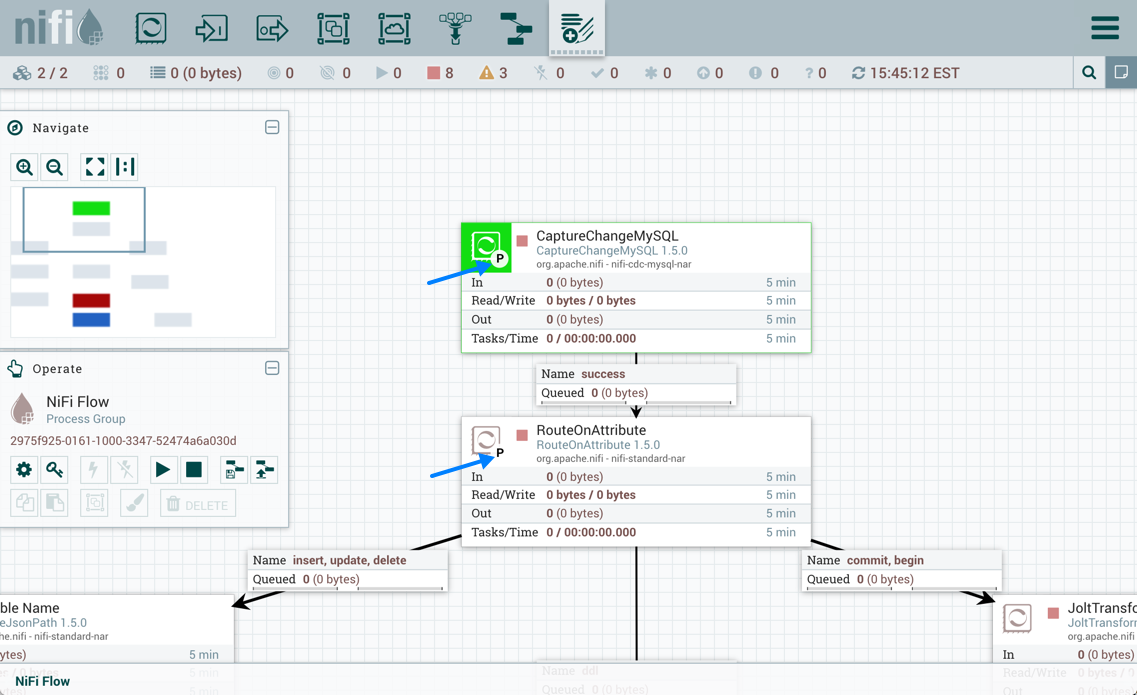

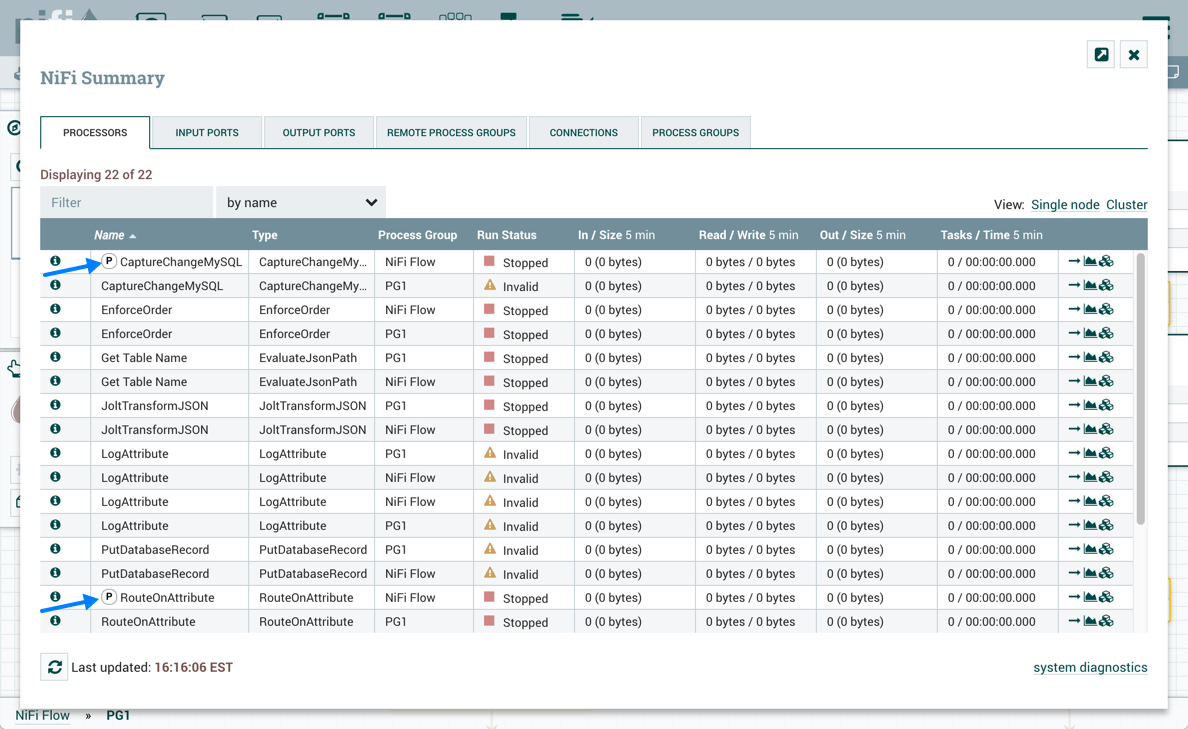

The Execution setting is used to determine on which node(s) the Processor will be scheduled to execute. Selecting 'All Nodes' will result in this Processor being scheduled on every node in the cluster. Selecting 'Primary Node' will result in this Processor being scheduled on the Primary Node only. Processors that have been configured for 'Primary Node' execution are identified by a "P" next to the processor icon:

To quickly identify 'Primary Node' processors, the "P" icon is also shown in the Processors tab on the Summary page:

Run Duration

The right-hand side of the Scheduling tab contains a slider for choosing the 'Run Duration'. This controls how long the Processor should be scheduled to run each time that it is triggered. On the left-hand side of the slider, it is marked 'Lower latency' while the right-hand side is marked 'Higher throughput'. When a Processor finishes running, it must update the repository in order to transfer the FlowFiles to the next Connection. Updating the repository is expensive, so the more work that can be done at once before updating the repository, the more work the Processor can handle (Higher throughput). However, this means that the next Processor cannot start processing those FlowFiles until the previous Processor updates the repository. As a result, the latency will be longer (the time required to process the FlowFile from beginning to end will be longer). The slider therefore provides a spectrum from which the DFM can choose to favor Lower Latency or Higher Throughput.

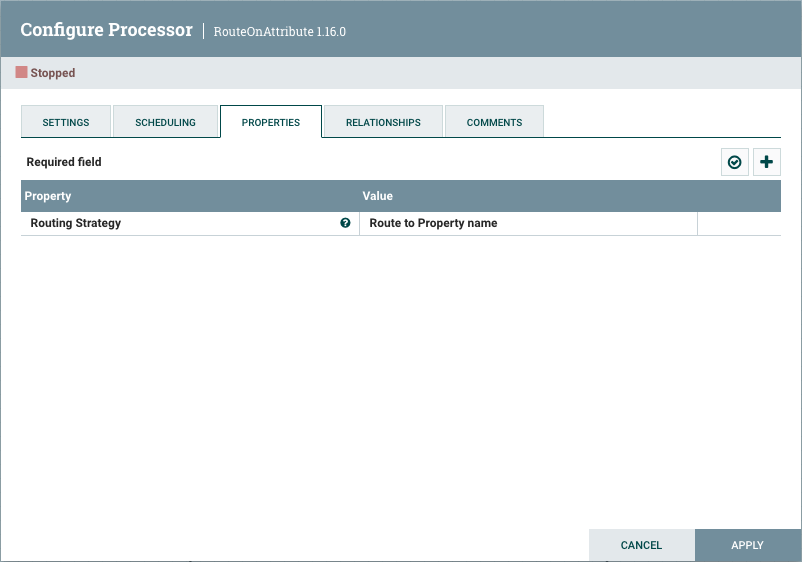

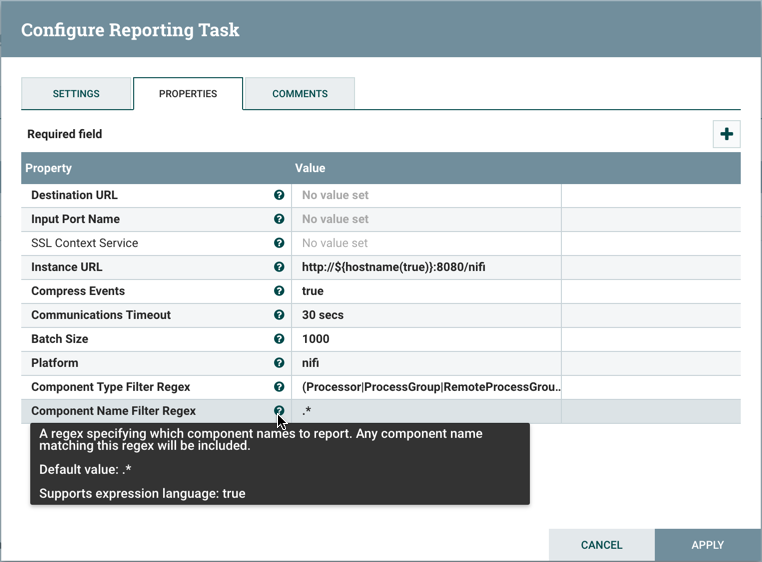

Properties Tab

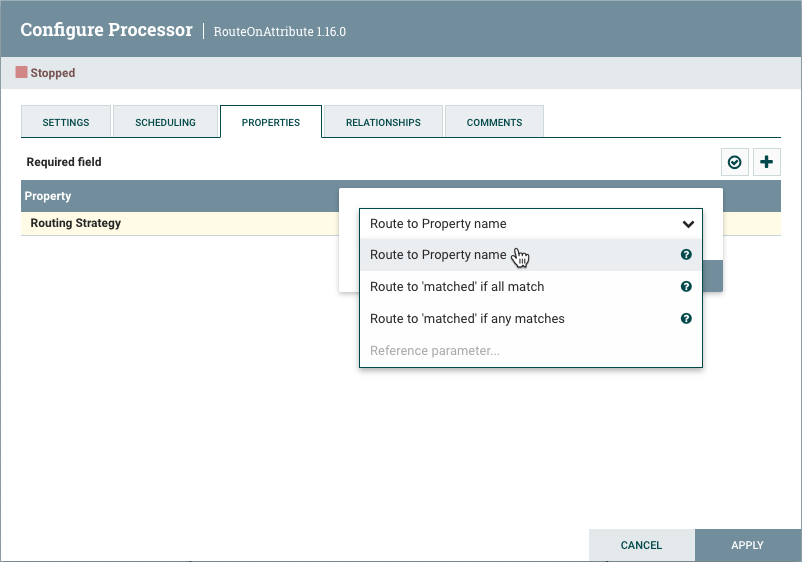

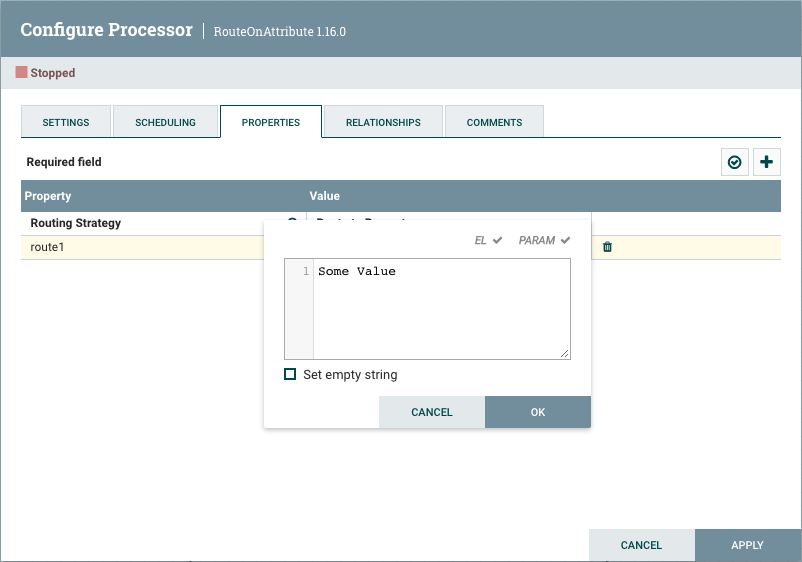

The Properties tab provides a mechanism to configure Processor-specific behavior. There are no default properties. Each type of Processor must define which Properties make sense for its use case. Below, we see the Properties tab for a RouteOnAttribute Processor:

This Processor, by default, has only a single property: 'Routing Strategy'. The default value is 'Route to Property name'. Next to the name of this property is a small question mark symbol. This help symbol is seen in other places throughout the User Interface, and it indicates that more information is available. Hovering over this symbol with the mouse will provide additional details about the property and the default value, as well as historical values that have been set for the Property.

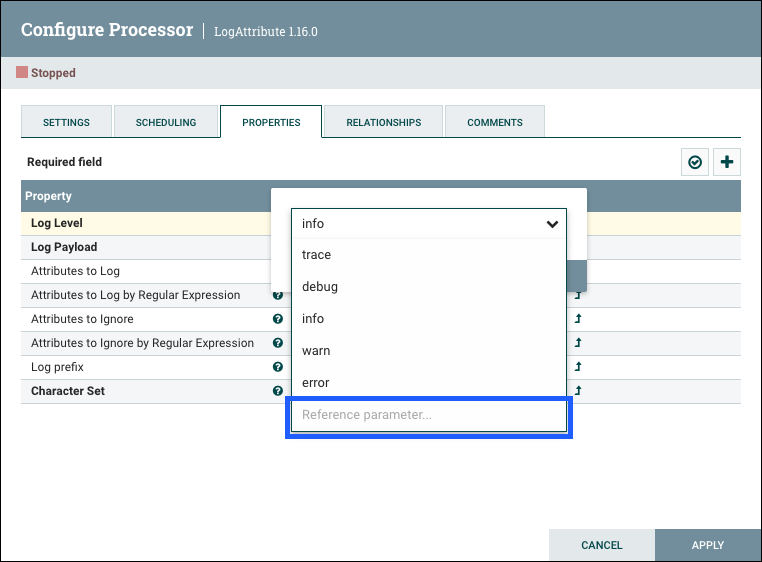

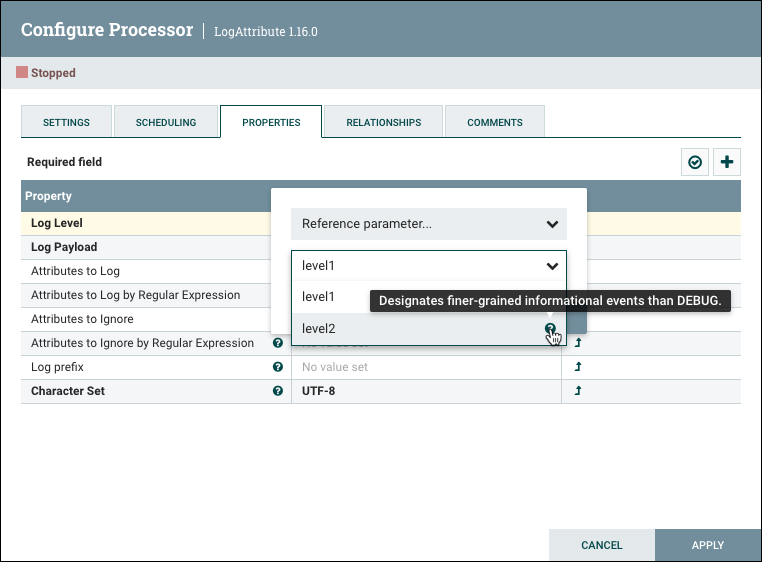

Clicking on the value for the property will allow a DFM to change the value. Depending on the values that are allowed for the property, the user is either provided a drop-down from which to choose a value or is given a text area to type a value:

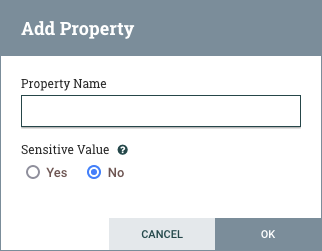

In the top-right corner of the tab is a button for adding a New Property. Clicking this button will provide the DFM with a dialog to enter the name and value of a new property. Not all Processors allow User-Defined properties. In processors that do not allow them, the Processor becomes invalid when User-Defined properties are applied.

Selected Processors support configurable Sensitive Value status for User-Defined properties. Processors must indicate support for configurable Sensitive Value status, otherwise the Sensitive Value selection will be disabled in the Add Property dialog.

Selecting Yes for the Sensitive Value setting instructs NiFi to handle the property value as sensitive for configuration persistence and framework operations. NiFi encrypts Sensitive Values when storing the flow configuration and does not include Sensitive Values in exported Flow Definitions.

RouteOnAttribute allows User-Defined properties and will not be valid until the user has added a property.

Note that after a User-Defined property has been added, an icon will appear on the right-hand side of that row. Clicking it will remove the User-Defined property from the Processor.

Some processors also have an Advanced User Interface (UI) built into them. For example, the UpdateAttribute processor has an Advanced UI. To access the Advanced UI, click the "Advanced" button that appears at the bottom of the Configure Processor window. Only processors that have an Advanced UI will have this button.

Some processors have properties that refer to other components, such as Controller Services, which also need to be configured. For example, the GetHTTP processor has an SSLContextService property, which refers to the StandardSSLContextService controller service. When DFMs want to configure this property but have not yet created and configured the controller service, they have the option to create the service on the spot, as depicted in the image below. For more information about configuring Controller Services, see the Controller Services section.

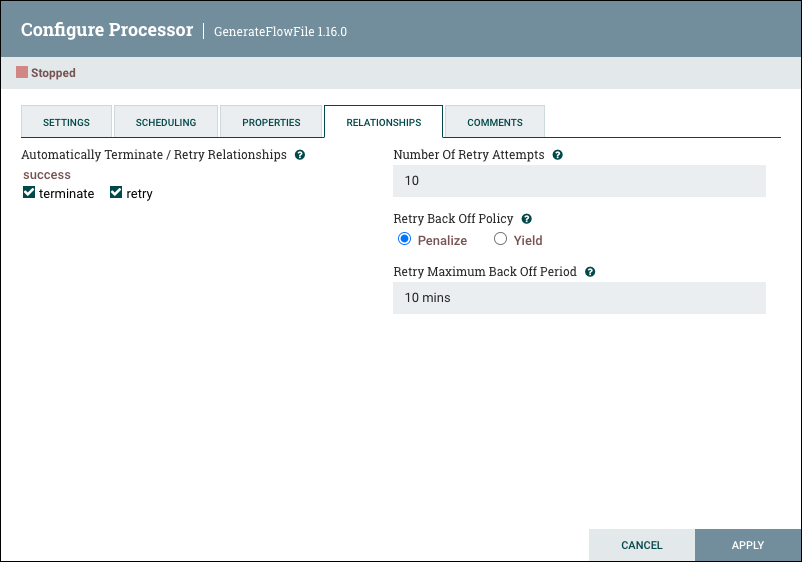

Relationships Tab

The Relationships tab contains an 'Automatically Terminate / Retry Relationships' section. Each of the Relationships that is defined by the Processor is listed here, along with its description.

Automatically Terminate

In order for a Processor to be considered valid and able to run, each Relationship defined by the Processor must be either connected to a downstream component or auto-terminated. If a Relationship is auto-terminated, any FlowFile that is routed to that Relationship will be removed from the flow and its processing considered complete. Any Relationship that is already connected to a downstream component cannot be auto-terminated. The Relationship must first be removed from any Connection that uses it. Additionally, for any Relationship that is selected to be auto-terminated, the auto-termination status will be cleared (turned off) if the Relationship is added to a Connection.

Automatically Retry

Users can also configure whether or not FlowFiles routed to a given Relationship should be retried. If a FlowFile is routed to any Relationship that is configured to be retried, the FlowFile will be re-queued and the Processor will attempt to process it again. If the Processor routes the FlowFile to a retriable Relationship again (either the same Relationship or another that is configured to be retried), it will be re-queued again, up to the number of times specified by the user. If the Processor routes the FlowFile to a retriable Relationship after the specified number of retries, the FlowFile will be transferred to the Connection(s) that include that Relationship - or auto-terminated, as configured. If the Processor routes the FlowFile to any Relationship that is not configured to be retried, it will be routed to that Relationship immediately.

For example, consider a Processor with two relationships: success and failure. A user configures the failure Relationship to retry 10 times and also to auto-terminate. In this case, if an incoming FlowFile is routed to the failure Relationship, it will be retried up to 10 times. After 10 attempts, if it is routed to failure again, it will be auto-terminated. However, if at any point it is routed to success, it will immediately be transferred to the Connection(s) that include the success Relationship and not retried any further.
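The retry decision described above can be sketched as a small Python model. This is an illustration, not NiFi's implementation: the function name `resolve_routing` is hypothetical, and the sketch assumes that the configured number counts re-queues, so a FlowFile is processed at most one initial time plus that many retries.

```python
from typing import Iterable, Set, Tuple


def resolve_routing(
    outcomes: Iterable[str], retried: Set[str], max_retries: int
) -> Tuple[str, int]:
    """Return (final Relationship, retries used) for one FlowFile.

    outcomes yields the Relationship the Processor chooses on each attempt.
    retried is the set of Relationships configured to be retried.
    """
    retries_used = 0
    for relationship in outcomes:
        if relationship in retried and retries_used < max_retries:
            retries_used += 1   # re-queue the FlowFile and process it again
            continue
        # Either the Relationship is not retried, or the retries are used up.
        return relationship, retries_used
    raise ValueError("outcomes ended before the FlowFile was routed")


# failure is retried up to 10 times; success is not retried.
print(resolve_routing(["failure"] * 11, {"failure"}, 10))
# ('failure', 10): retries exhausted, so the FlowFile goes to failure
print(resolve_routing(["failure", "failure", "success"], {"failure"}, 10))
# ('success', 2): routed to success immediately, with no further retries
```

In the first case the FlowFile would then be auto-terminated or transferred to the Connection(s) for failure, depending on how that Relationship is configured.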

Number of Retry Attempts

For relationships set to retry, this number indicates how many times a FlowFile will attempt to reprocess before it is routed elsewhere.

Retry Back Off Policy

When a FlowFile is to be retried, the user can configure the backoff policy with two options:

- Penalize - Retry attempts will occur in time, but the processor will continue to process other FlowFiles in the meantime. The Processor will continue to run, processing the FlowFiles that are available to it.
- Yield - The Processor will not be scheduled to run again for some amount of time (determined by the "Yield Duration" configured in the Settings tab). This should be used when either it is desirable to maintain the order of data in the queue and not process subsequent FlowFiles until the first has been processed, or when routing data to the configured Relationship indicates that further processing of other data will not be successful.

Retry Maximum Back Off Period

Initial retries are based on the Penalty/Yield Duration time specified in the Settings tab. The duration time is repeatedly doubled for every subsequent retry attempt. This number indicates the maximum allowable time period before another retry attempt occurs.
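The doubling-with-a-cap behavior can be worked through in a few lines of Python. This is a sketch of the arithmetic only, not NiFi code; the function name `backoff_delays` is hypothetical, and the 600-second maximum is an example value chosen for illustration.

```python
from typing import List


def backoff_delays(
    initial_seconds: float, max_backoff_seconds: float, retries: int
) -> List[float]:
    """Return the wait before each retry: doubled every time, capped at the maximum."""
    delays = []
    delay = initial_seconds
    for _ in range(retries):
        delays.append(min(delay, max_backoff_seconds))
        delay *= 2
    return delays


# Starting from a 30-second Penalty Duration with an example maximum of 600 seconds.
print(backoff_delays(30, 600, 6))  # [30, 60, 120, 240, 480, 600]
```

The sixth delay would be 960 seconds without the cap, so the maximum back off period takes over from that point on.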

Note: If both terminate and retry are selected, any retry logic will happen first, then auto-termination.

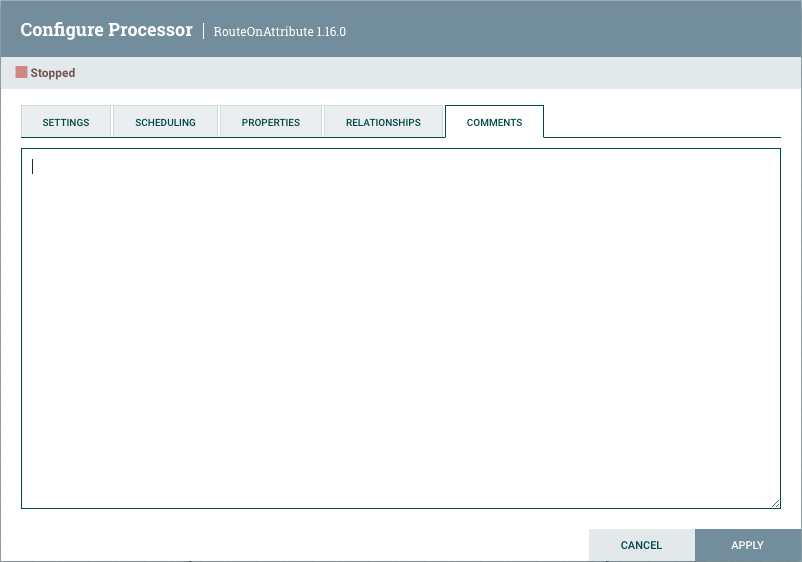

Comments Tab

The last tab in the Processor configuration dialog is the Comments tab. This tab simply provides an area for users to include whatever comments are appropriate for this component. Use of the Comments tab is optional:

Additional Help

You can access additional documentation about each Processor’s usage by right-clicking on the Processor and selecting 'Usage' from the context menu. Alternatively, select Help from the Global Menu in the top-right corner of the UI to display a Help page with all of the documentation, including usage documentation for all the Processors that are available. Click on the desired Processor to view usage documentation.

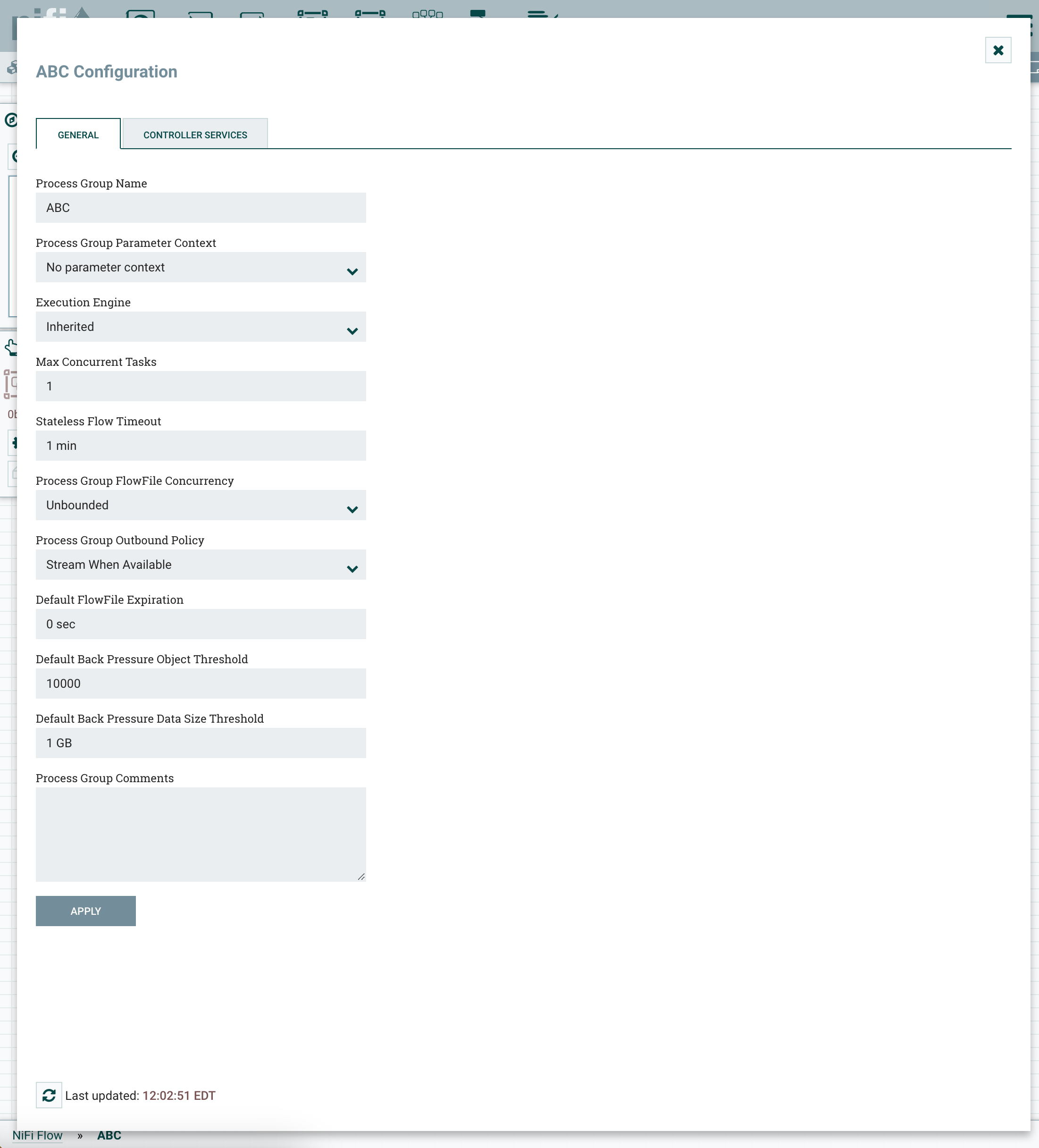

Configuring a Process Group

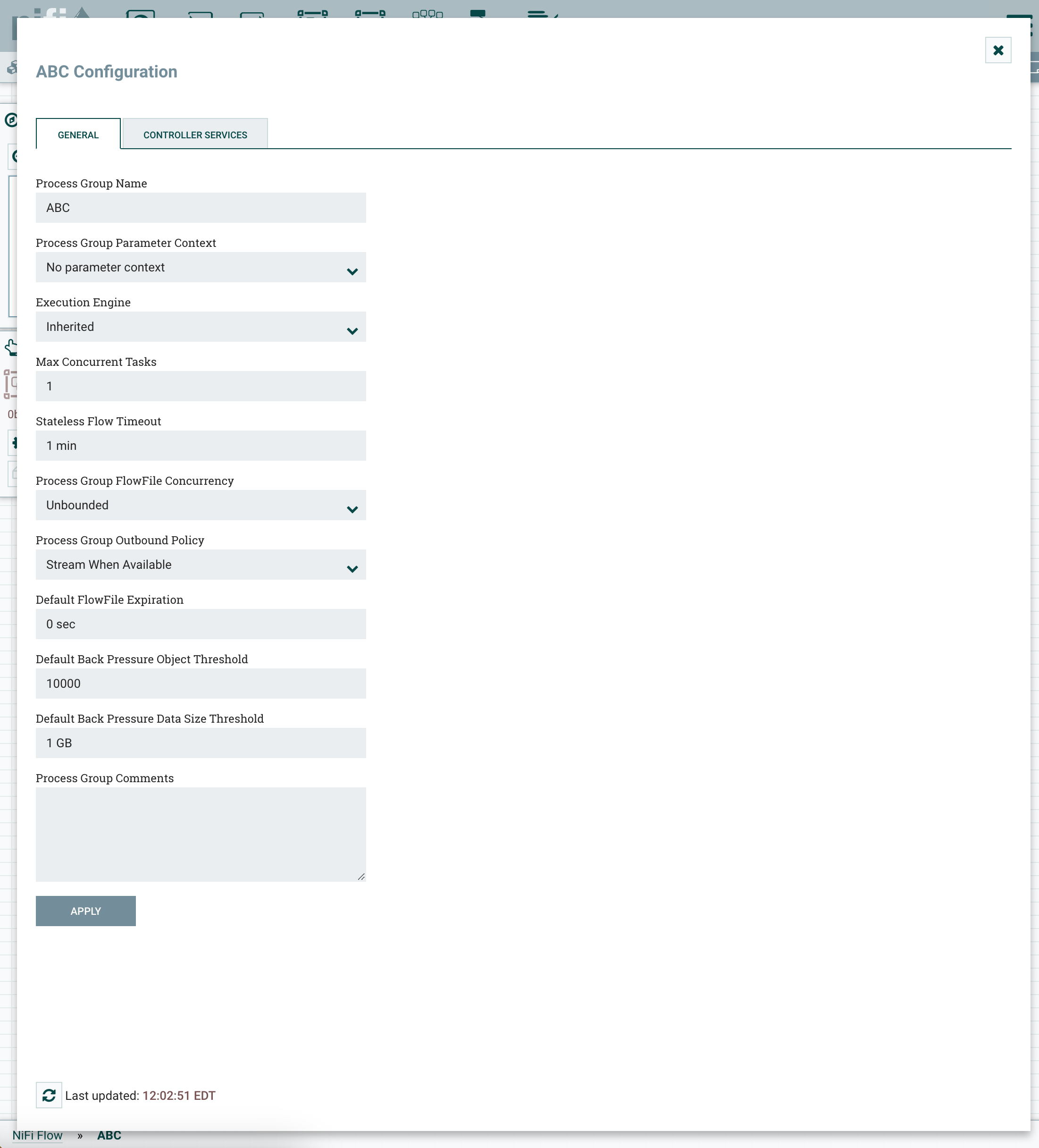

To configure a Process Group, right-click on the Process Group and select the Configure option from the context menu. The configuration dialog is opened with two tabs: General and Controller Services.

General Tab

This tab contains several different configuration items. First is the Process Group Name. This is the name that is shown at the top of the Process Group on the canvas as well as in the breadcrumbs at the bottom of the UI. For the Root Process Group (i.e., the highest level group), this is also the name that is shown as the title of the browser tab. Note that this information is visible to any other NiFi instance that connects remotely to this instance (using Remote Process Groups, a.k.a., Site-to-Site).

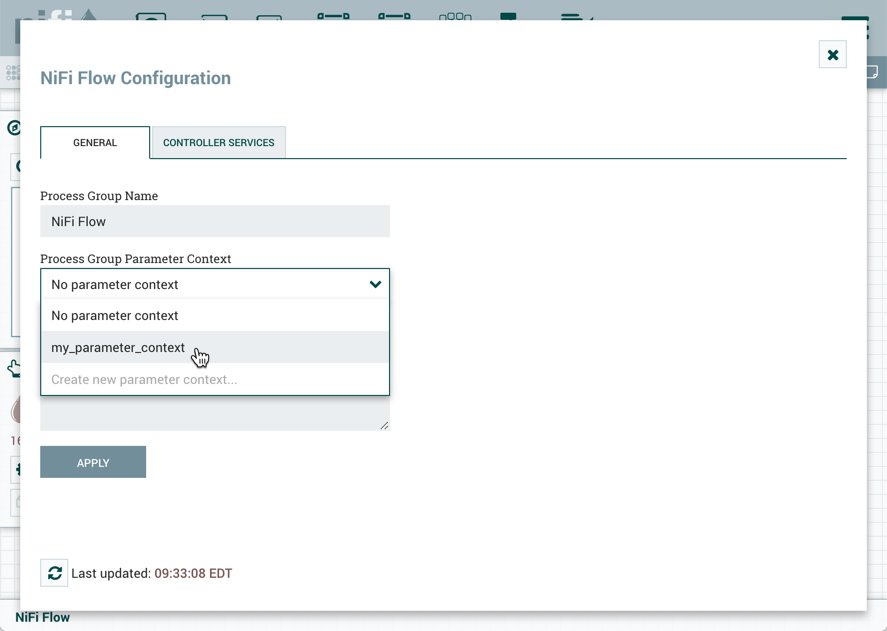

The next configuration element is the Process Group Parameter Context, which is used to provide parameters to components of the flow. From this drop-down, the user is able to choose which Parameter Context should be bound to this Process Group and can optionally create a new one to bind to the Process Group. For more information refer to Parameters and Parameter Contexts.

The next section provides configuration elements for determining how the Process Group should be scheduled to run. NiFi supports two different Execution Engines: The Traditional Execution Engine, and the Stateless Execution Engine. Additionally, the Execution Engine can be inherited from the parent Process Group, which is the default behavior. See Execution Engines for more information.

The next two elements, Process Group FlowFile Concurrency and Process Group Outbound Policy, are covered in the following sections.

FlowFile Concurrency

FlowFile Concurrency is used to control how data is brought into the Process Group. There are three options available:

- Unbounded (the default)
- Single FlowFile Per Node
- Single Batch Per Node

When the FlowFile Concurrency is set to "Unbounded", the Input Ports in the Process Group will ingest data as quickly as they are able, provided that back pressure does not prevent them from doing so.

When the FlowFile Concurrency is configured to "Single FlowFile Per Node", the Input Ports will only allow a single FlowFile through at a time. Once that FlowFile enters the Process Group, no additional FlowFiles will be brought in until all FlowFiles have left the Process Group (either by being removed from the system/auto-terminated, or by exiting through an Output Port). This will often result in slower performance, as it reduces the parallelization that NiFi uses to process the data. However, there are several reasons that a user may want to use this approach. A common use case is one in which each incoming FlowFile contains references to several other data items, such as a list of files in a directory. The user may want to process the entire listing before allowing any other data to enter the Process Group.

When the FlowFile Concurrency is configured to "Single Batch Per Node", the Input Ports will behave similarly to the way that they behave in the "Single FlowFile Per Node" mode, but when a FlowFile is ingested, the Input Ports will continue to ingest all data until all of the queues feeding the Input Ports have been emptied. At that point, they will not bring any more data into the Process Group until all data has finished processing and has left the Process Group (see Connecting Batch-Oriented Process Groups).

Note: The FlowFile Concurrency controls only when data will be pulled into the Process Group from an Input Port. It does not prevent a Processor within the Process Group from ingesting data from outside of NiFi.

Outbound Policy

While the FlowFile Concurrency dictates how data should be brought into the Process Group, the Outbound Policy controls the flow of data out of the Process Group. There are two options available:

- Stream When Available (the default)
- Batch Output

When the Outbound Policy is configured to "Stream When Available", data that arrives at an Output Port is immediately transferred out of the Process Group, assuming that no back pressure is applied.

When the Outbound Policy is configured to "Batch Output", the Output Ports will not transfer data out of the Process Group until all data that is in the Process Group is queued up at an Output Port (i.e., no data leaves the Process Group until all of the data has finished processing). It doesn’t matter whether the data is all queued up for the same Output Port, or if some data is queued up for Output Port A while other data is queued up for Output Port B. These conditions are both considered the same in terms of the completion of the FlowFile processing.

Using an Outbound Policy of "Batch Output" along with a FlowFile Concurrency of "Single FlowFile Per Node" allows a user to easily ingest a single FlowFile (which in and of itself may represent a batch of data) and then wait until all processing of that FlowFile has completed before continuing on to the next step in the dataflow (i.e., the next component outside of the Process Group). Additionally, when using this mode, each FlowFile that is transferred out of the Process Group will be given a series of attributes named "batch.output.<Port Name>" for each Output Port in the Process Group. The value will be equal to the number of FlowFiles that were routed to that Output Port for this batch of data. For example, consider a case where a single FlowFile is split into 5 FlowFiles: two FlowFiles go to Output Port A, one goes to Output Port B, two go to Output Port C, and no FlowFiles go to Output Port D. In this case, each FlowFile will have attributes batch.output.A = 2, batch.output.B = 1, batch.output.C = 2, and batch.output.D = 0.
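To make the attribute naming concrete, the following minimal Python sketch reproduces the example above. The function name `batch_output_attributes` and its arguments are hypothetical and are not part of NiFi; the sketch only mirrors the counting rule described in this section.

```python
from collections import Counter


def batch_output_attributes(port_names, routed_ports):
    """Build the batch.output.<Port Name> attributes for one batch.

    port_names   -- every Output Port in the Process Group (hypothetical input)
    routed_ports -- the Output Port each outgoing FlowFile was routed to
    """
    counts = Counter(routed_ports)
    # Every port gets an attribute, including ports that received nothing.
    # FlowFile attribute values are strings, so the counts are stringified.
    return {f"batch.output.{name}": str(counts[name]) for name in port_names}


# One FlowFile split into five: two to A, one to B, two to C, none to D.
attrs = batch_output_attributes(["A", "B", "C", "D"], ["A", "A", "B", "C", "C"])
print(attrs)
# {'batch.output.A': '2', 'batch.output.B': '1', 'batch.output.C': '2', 'batch.output.D': '0'}
```

Every FlowFile in the batch would carry the same set of attributes, regardless of which Output Port it left through.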

The Outbound Policy of "Batch Output" doesn’t provide any benefits when used in conjunction with a FlowFile Concurrency of "Unbounded". As a result, the Outbound Policy is ignored if the FlowFile Concurrency is set to "Unbounded".

Connecting Batch-Oriented Process Groups

A common use case in NiFi is to perform some batch-oriented process and, only after that process completes, perform another process on that same batch of data.

NiFi makes this possible by encapsulating each of these processes in its own Process Group. The Outbound Policy of the first Process Group should be configured as "Batch Output" while the FlowFile Concurrency should be either "Single FlowFile Per Node" or "Single Batch Per Node". With this configuration, the first Process Group will process an entire batch of data (which will either be a single FlowFile or many FlowFiles depending on the FlowFile Concurrency) as a coherent batch of data. When processing has completed for that batch of data, the data will be held until all FlowFiles are finished processing and ready to leave the Process Group. At that point, the data can be transferred out of the Process Group as a batch.

One configuration is treated as a slightly special case: a Process Group with an Outbound Policy of "Batch Output" whose Output Port is connected directly to the Input Port of a Process Group with a FlowFile Concurrency of "Single Batch Per Node". In that case, the receiving Process Group will ingest data not only until its input queues are empty, but until they are empty AND the source Process Group has transferred all of the data from that batch out of the Process Group. This allows a collection of FlowFiles to be transferred as a single batch of data between Process Groups, even if those FlowFiles are spread across multiple ports.

Caveats

When using a FlowFile Concurrency of "Single FlowFile Per Node", there are a couple of caveats to consider.

First, an Input Port is free to bring data into the Process Group if there is no data queued up in that Process Group on the same node. This means that in a 5-node cluster, for example, there may be up to 5 incoming FlowFiles being processed simultaneously. Additionally, if a connection is configured to use Load Balancing, it may transfer data to another node in the cluster, allowing data to enter the Process Group while that FlowFile is still being processed. As a result, it is not recommended to use Load-Balanced Connections within a Process Group that is not configured for "Unbounded" FlowFile Concurrency.

When using the Outbound Policy of "Batch Output", it is important to consider back pressure. Consider a case where no data will be transferred out of a Process Group until all data is finished processing. Also consider that the connection to Output Port A has a back pressure threshold of 10,000 FlowFiles (the default). If that queue reaches the threshold of 10,000, the upstream Processor will no longer be triggered. As a result, data will not finish processing, and the flow will end in a deadlock, as the Output Port will not run until the processing completes and the Processor will not run until the Output Port runs. To avoid this, if a large number of FlowFiles are expected to be generated from a single input FlowFile, it is recommended that back pressure for Connections ending in an Output Port be configured to allow for the largest expected number of FlowFiles, or that back pressure for those Connections be disabled altogether (by setting the Back Pressure Threshold to 0). See Back Pressure for more information.
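The sizing rule above can be expressed as a small check. This is a simplified Python model, not NiFi code: the function name `batch_output_can_deadlock` is hypothetical, and it considers only the object-count threshold of a single Connection ending in an Output Port.

```python
def batch_output_can_deadlock(expected_flowfiles, back_pressure_threshold):
    """Return True if a batch could stall under the "Batch Output" policy.

    expected_flowfiles      -- largest number of FlowFiles one input FlowFile
                               is expected to produce for this Output Port
    back_pressure_threshold -- object threshold of the Connection ending in
                               the Output Port; 0 means back pressure is disabled
    """
    if back_pressure_threshold == 0:
        # Back pressure disabled: the queue can hold the whole batch.
        return False
    # If the batch is larger than the threshold, the upstream Processor stops
    # being triggered before the batch completes, and the Output Port never runs.
    return expected_flowfiles > back_pressure_threshold


print(batch_output_can_deadlock(50_000, 10_000))  # True: raise the threshold
print(batch_output_can_deadlock(50_000, 0))       # False: back pressure disabled
print(batch_output_can_deadlock(5_000, 10_000))   # False: batch fits in the queue
```

A check like this is only a planning aid. The data size threshold on the same Connection can trigger back pressure as well and would need the same consideration.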

Default Settings for Connections

The final three elements in the Process Group configuration dialog are for Default FlowFile Expiration, Default Back Pressure Object Threshold, and Default Back Pressure Data Size Threshold. These settings configure the default values when creating a new Connection. Each Connection represents a queue, and every queue has settings for FlowFile expiration, back pressure object count, and back pressure data size. The settings specified here will affect the default values for all new Connections created within the Process Group; they will not affect existing Connections. Child Process Groups created within the configured Process Group will inherit the default settings. Again, existing Process Groups will not be affected. If not overridden with these options, the root Process Group obtains its default back pressure settings from nifi.properties, and has a default FlowFile expiration of "0 sec" (i.e., do not expire).

| Setting the Default FlowFile Expiration to a non-zero value may lead to data loss due to a FlowFile expiring as its time limit is reached. |

The last element in the configuration dialog is the Process Group Comments. This provides a mechanism to add any useful information about the Process Group.

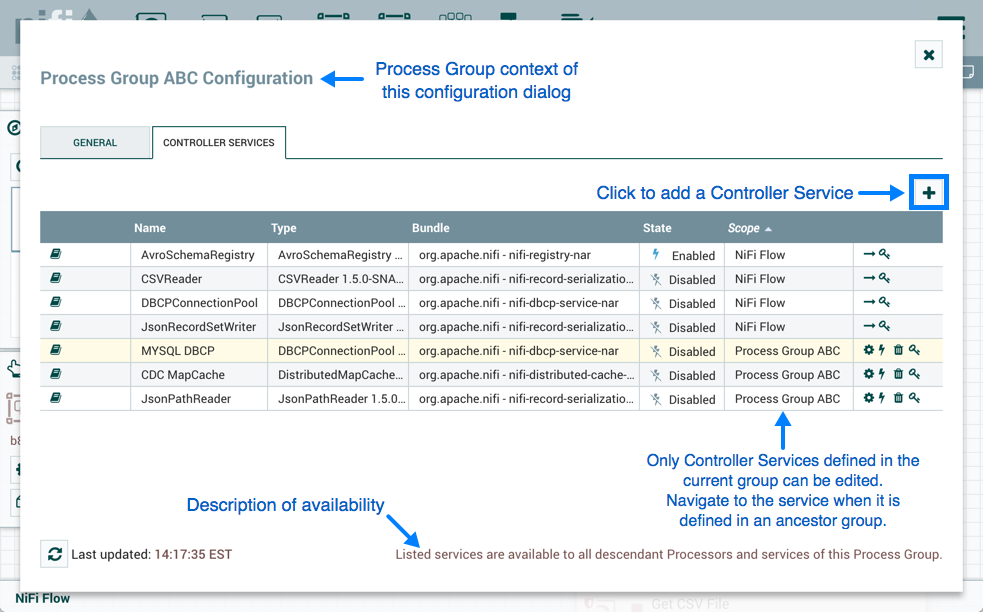

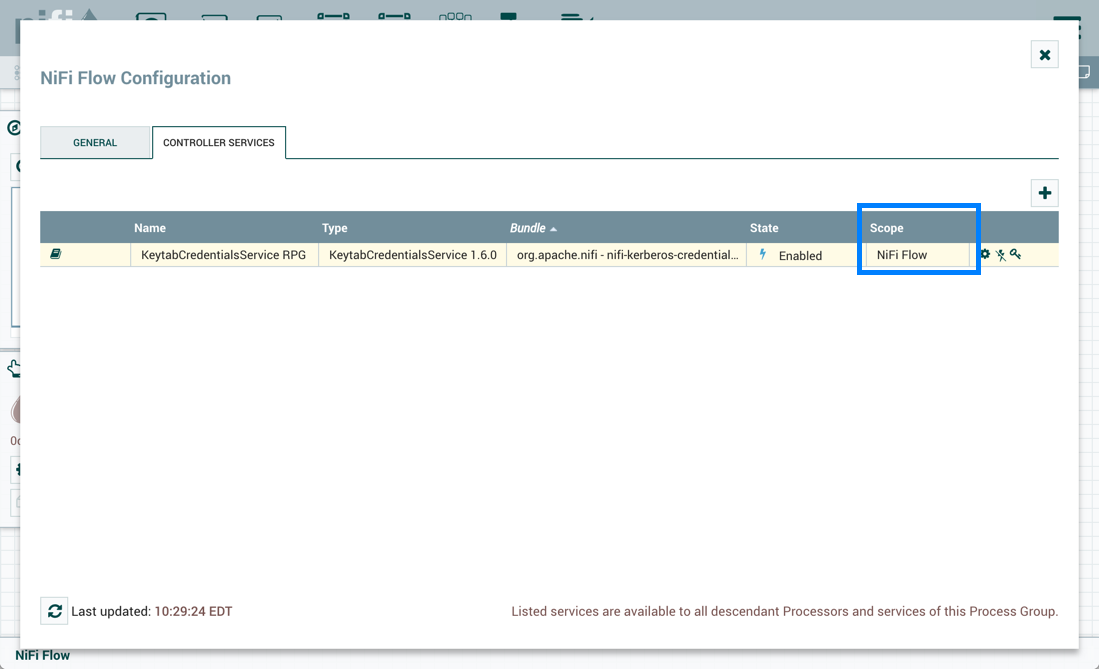

Controller Services

The Controller Services tab in the Process Group configuration dialog is covered in Adding Controller Services for Dataflows.

Parameters

The values of properties in the flow, including sensitive properties, can be parameterized using Parameters. Parameters are created and configured within the NiFi UI. Any property can be configured to reference a Parameter with the following conditions:

- A sensitive property can only reference a Sensitive Parameter
- A non-sensitive property can only reference a Non-Sensitive Parameter
- Properties that reference Controller Services cannot use Parameters
- Parameters cannot be referenced in Reporting Tasks, Flow Analysis Rules, or Management Controller Services

The UI indicates whether a Parameter can be used for a property value.

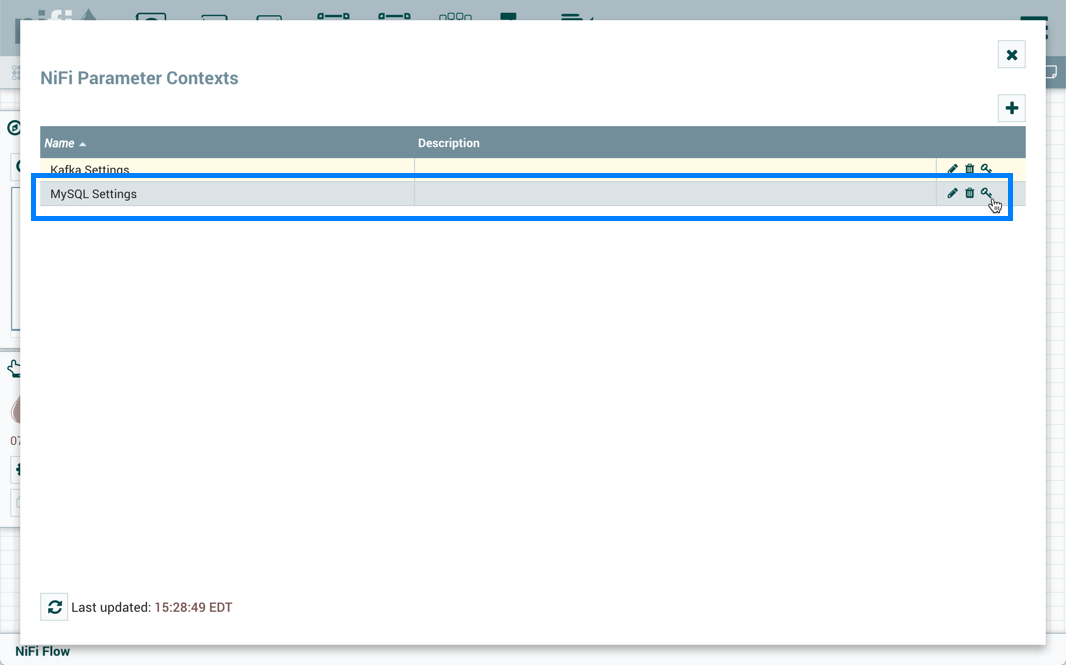

Parameter Contexts

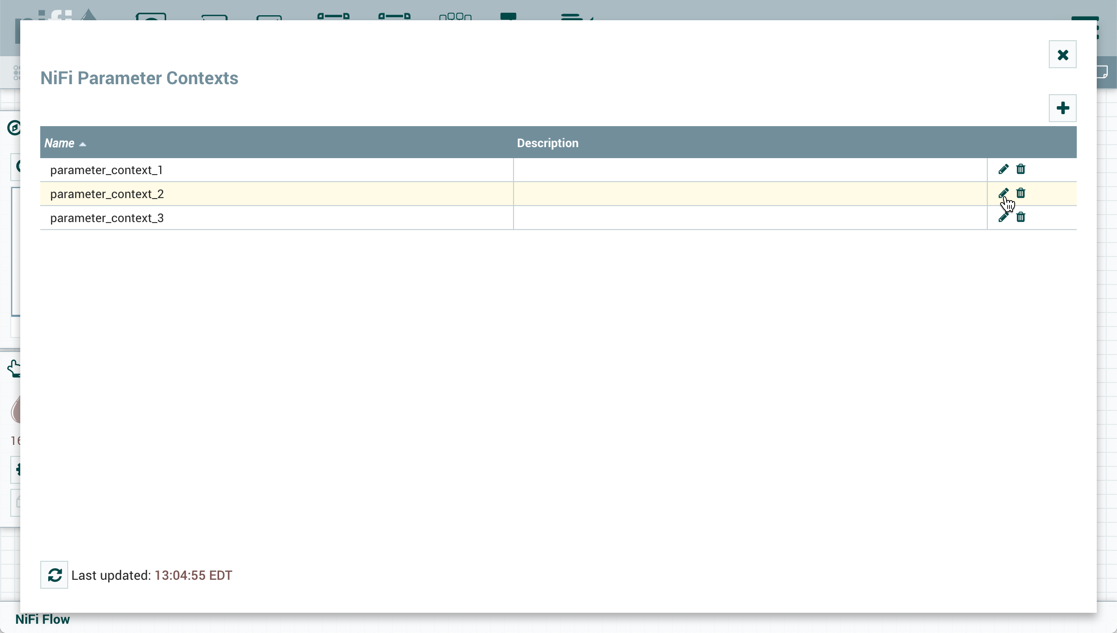

Parameters are created within Parameter Contexts. Parameter Contexts are globally defined/accessible to the NiFi instance. Access policies can be applied to Parameter Contexts to determine which users can create them. Once created, policies to read and write to a specific Parameter Context can also be applied (see Accessing Parameters for more information).

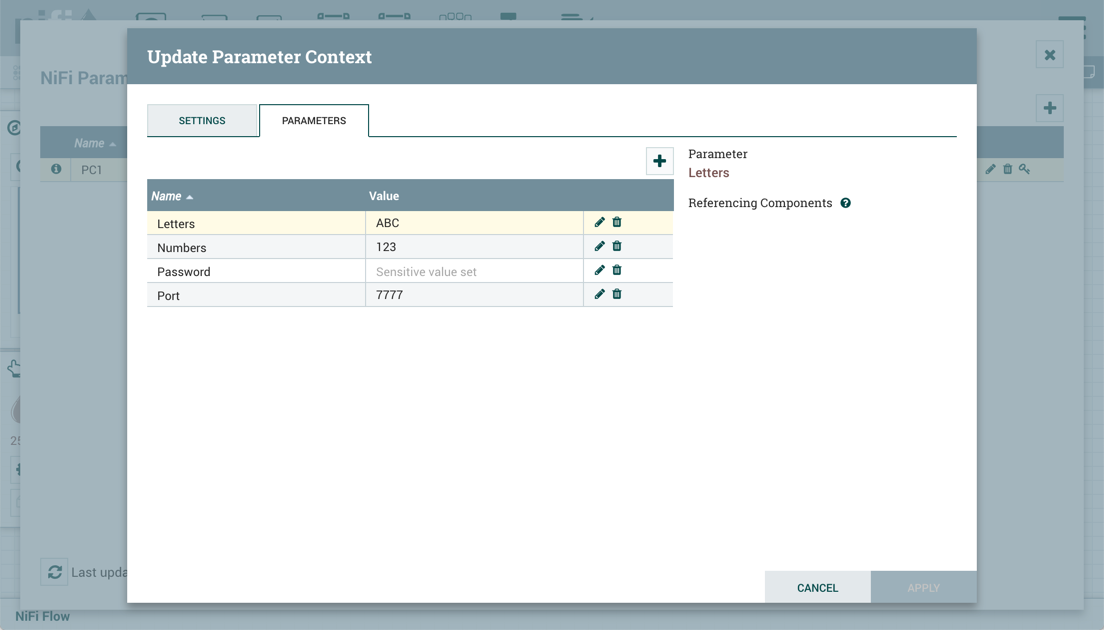

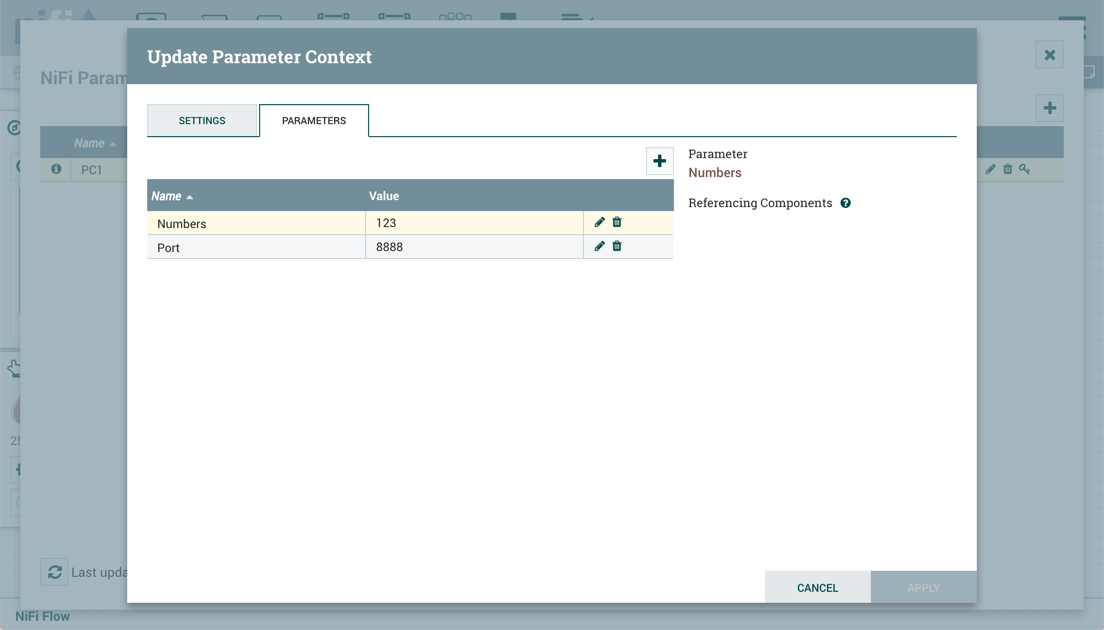

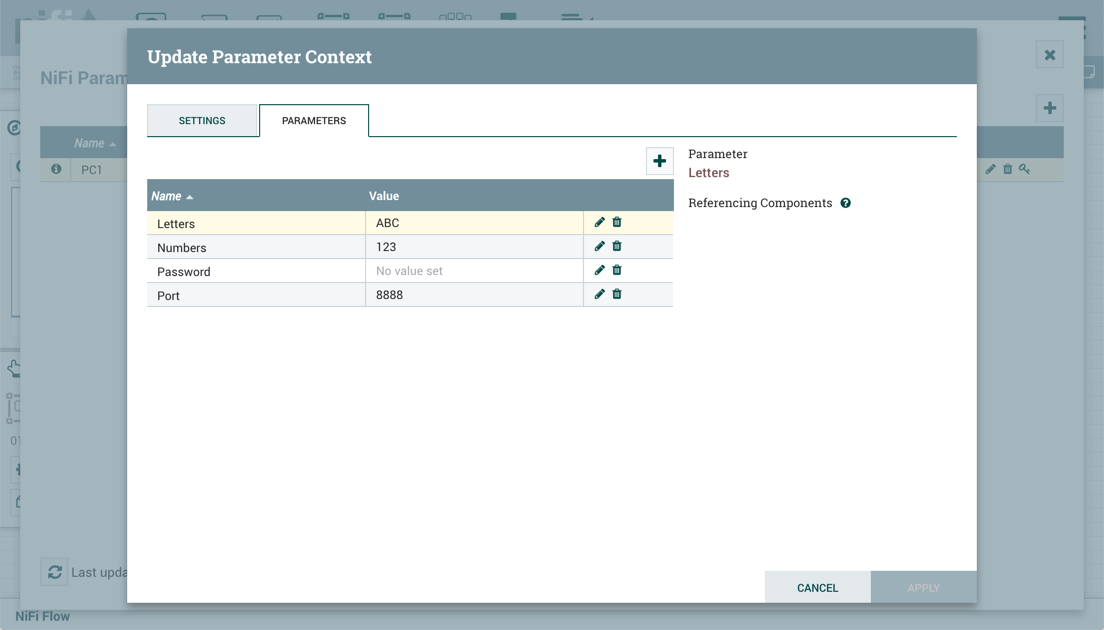

Creating a Parameter Context

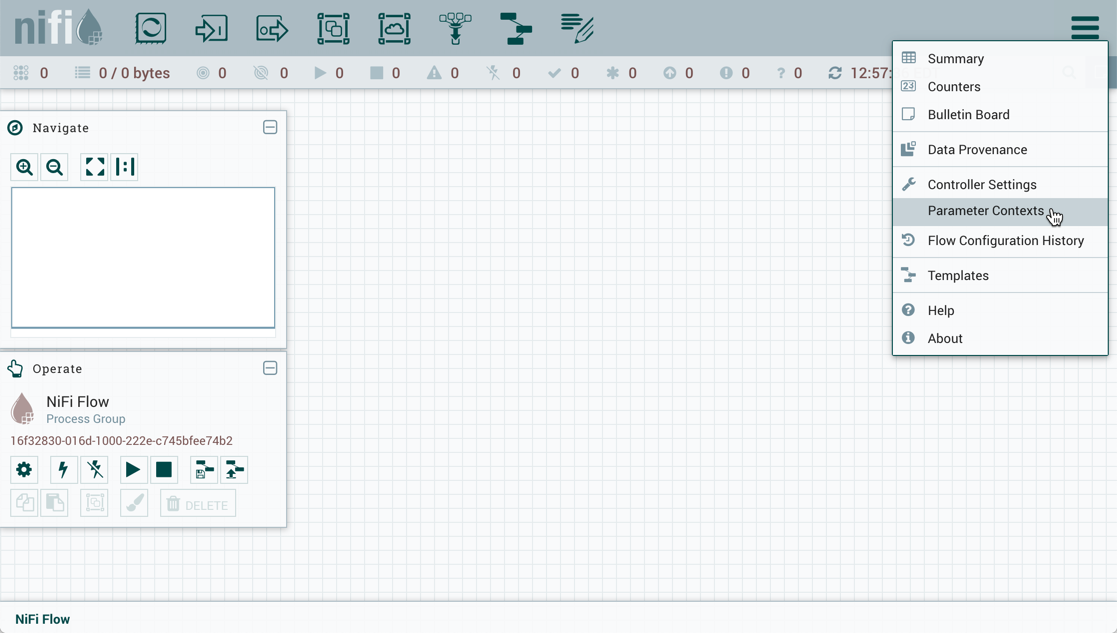

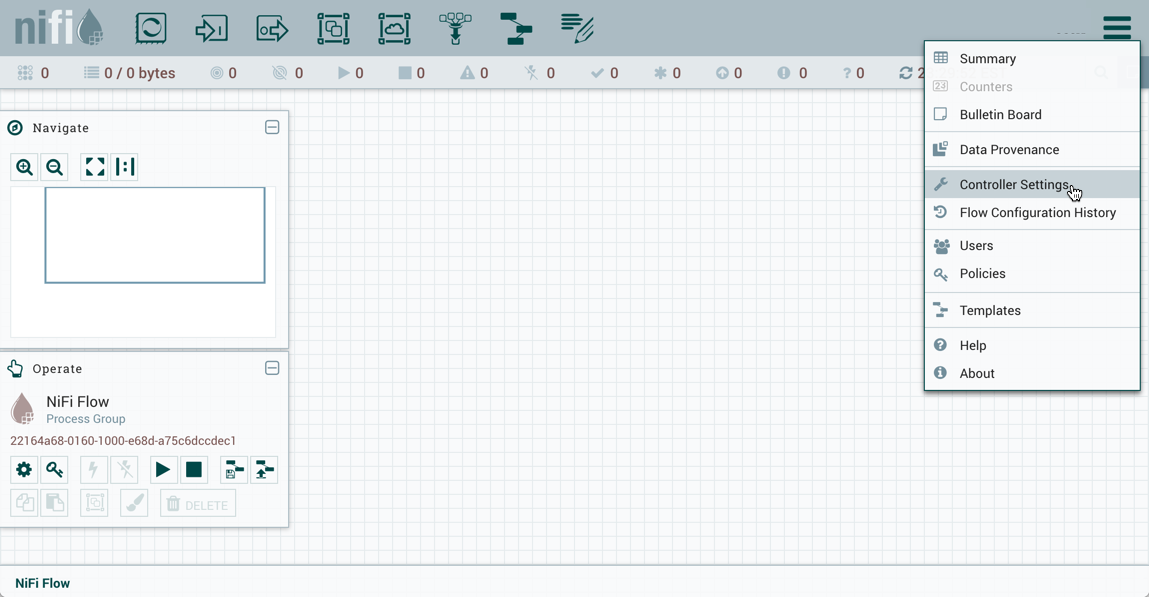

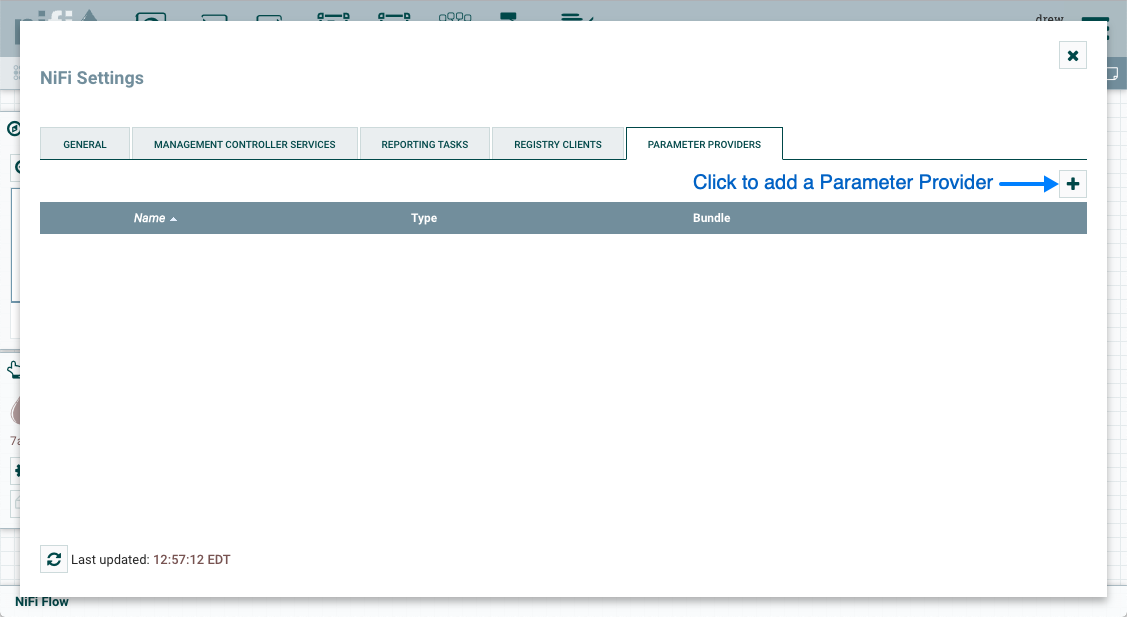

To create a Parameter Context, select Parameter Contexts from the Global Menu:

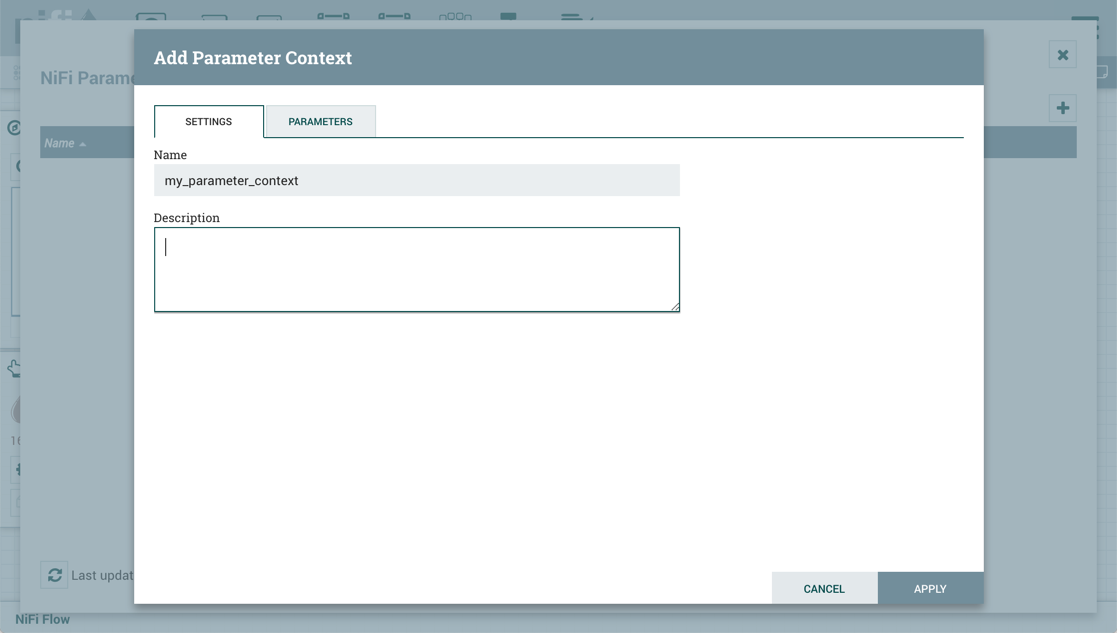

In the Parameter Contexts window, click the + button in the upper-right corner and the Add Parameter Context window opens. The window has two tabs: Settings and Parameters.

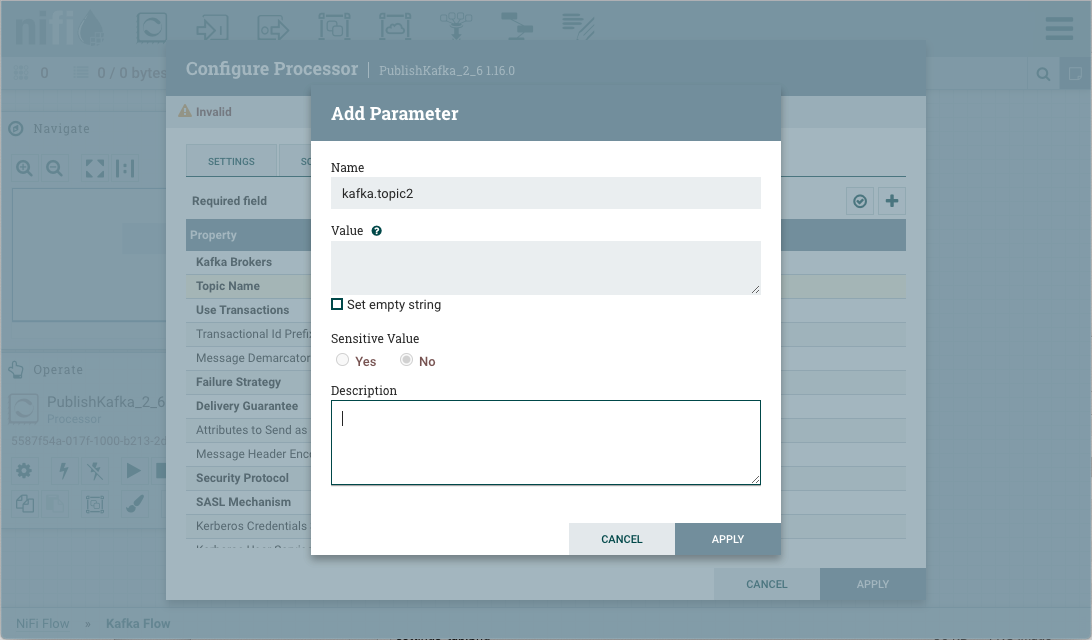

On the "Settings" tab, add a name for the Parameter Context and a description if desired. Select "Apply" to save the Parameter Context or select the "Parameters" tab to add parameters to the context.

Adding a Parameter to a Parameter Context

Parameters can be added during Parameter Context creation or added to existing Parameter Contexts.

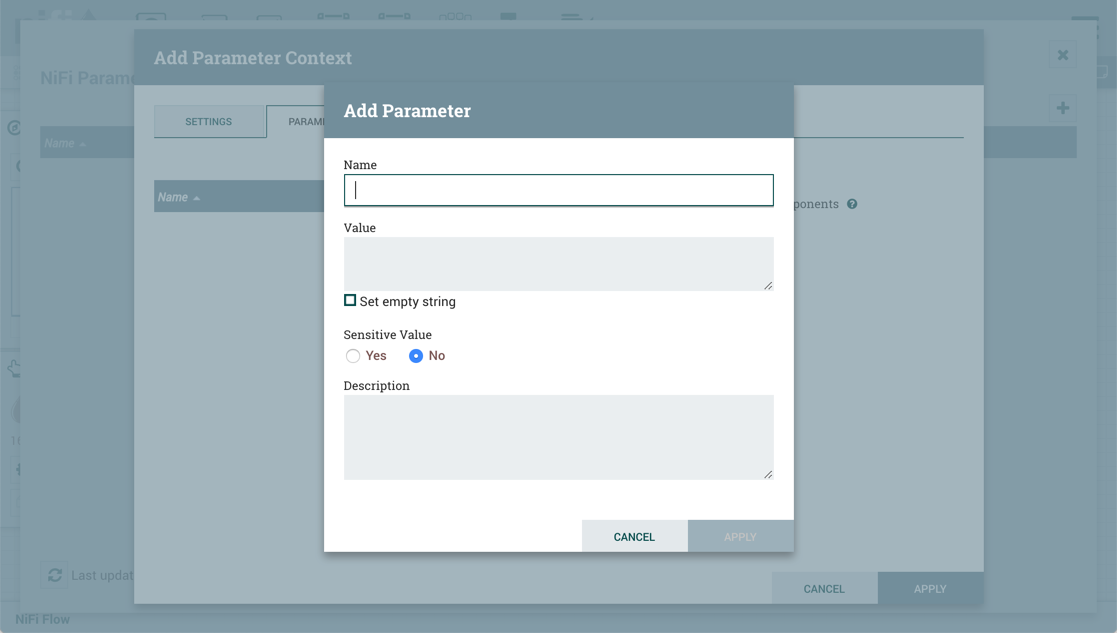

During Parameter Context creation, select the "Parameters" tab. Click the + button to open the Add Parameter window.

To add parameters to an existing Parameter Context, open the Parameter Context window and click the Edit button in the row of the desired Parameter Context.

On the "Parameters" tab, click the + button to open the Add Parameter window.

The Add Parameter window has the following settings:

- Name - A name that is used to denote the Parameter. Only alphanumeric characters (a-z, A-Z, 0-9), hyphens ( - ), underscores ( _ ), periods ( . ), and spaces are allowed.
- Value - The value that will be used when the Parameter is referenced. If a Parameter makes use of the Expression Language, it is important to note that the Expression Language will be evaluated in the context of the component that references the Parameter. Please see the Parameters and Expression Language section below for more information.
- Set empty string - Check to explicitly set the value of the Parameter to an empty string. Unchecked by default. (Note: If checked but a value is set, the checkbox is ignored.)
- Sensitive Value - Set to "Yes" if the Parameter’s Value should be considered sensitive. If sensitive, the value of the Parameter will not be shown in the UI once applied. The default setting is "No". Sensitive Parameters can only be referenced by sensitive properties and Non-Sensitive Parameters by non-sensitive properties. Once a Parameter is created, its sensitivity flag cannot be changed.
- Description - A description that explains what the Parameter is, how it is to be used, etc. This field is optional.
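The character rule for the Name setting can be checked up front, for example when generating Parameter names in a script. The sketch below is a minimal Python illustration built only from the allowed characters listed above. The helper name `is_valid_parameter_name` is hypothetical, and NiFi may apply further checks that this pattern does not capture.

```python
import re

# Letters, digits, hyphens, underscores, periods, and spaces, as listed
# in the Name setting above. At least one character is required.
_PARAMETER_NAME = re.compile(r"[A-Za-z0-9\-_. ]+")


def is_valid_parameter_name(name):
    """Return True if every character in name is in the allowed set."""
    return _PARAMETER_NAME.fullmatch(name) is not None


print(is_valid_parameter_name("kafka.broker"))   # True
print(is_valid_parameter_name("Kafka Topic-1"))  # True
print(is_valid_parameter_name("abc/data"))       # False: "/" is not allowed
```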

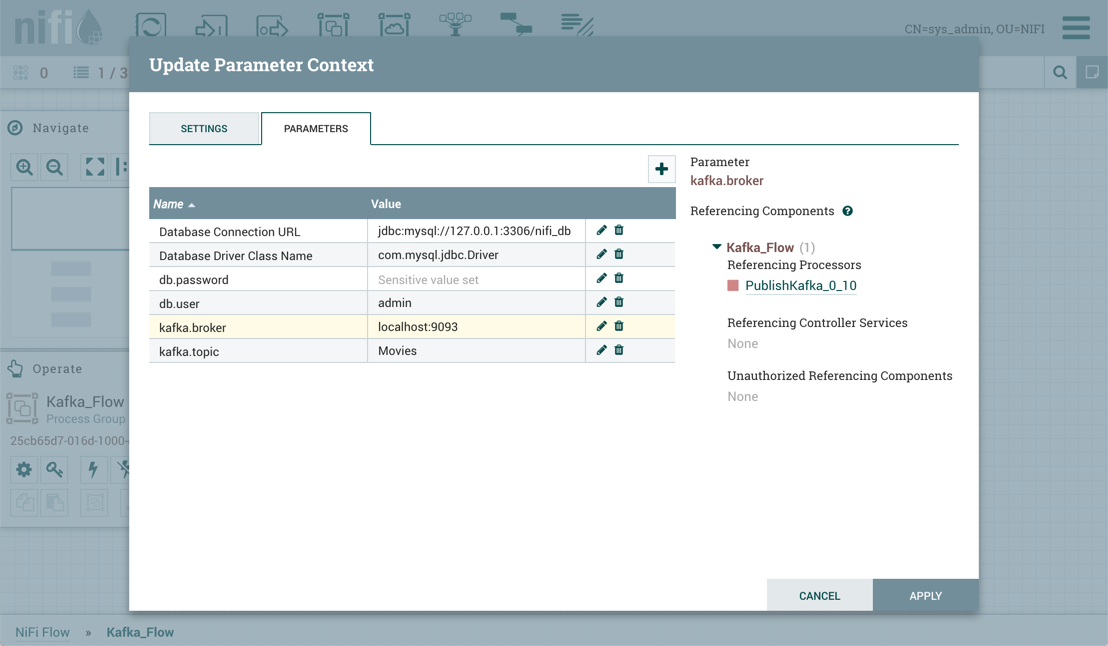

Once these settings are configured, select "Apply". The Referencing Components section lists the components that reference the currently selected parameter. Add additional Parameters or edit any existing Parameters.

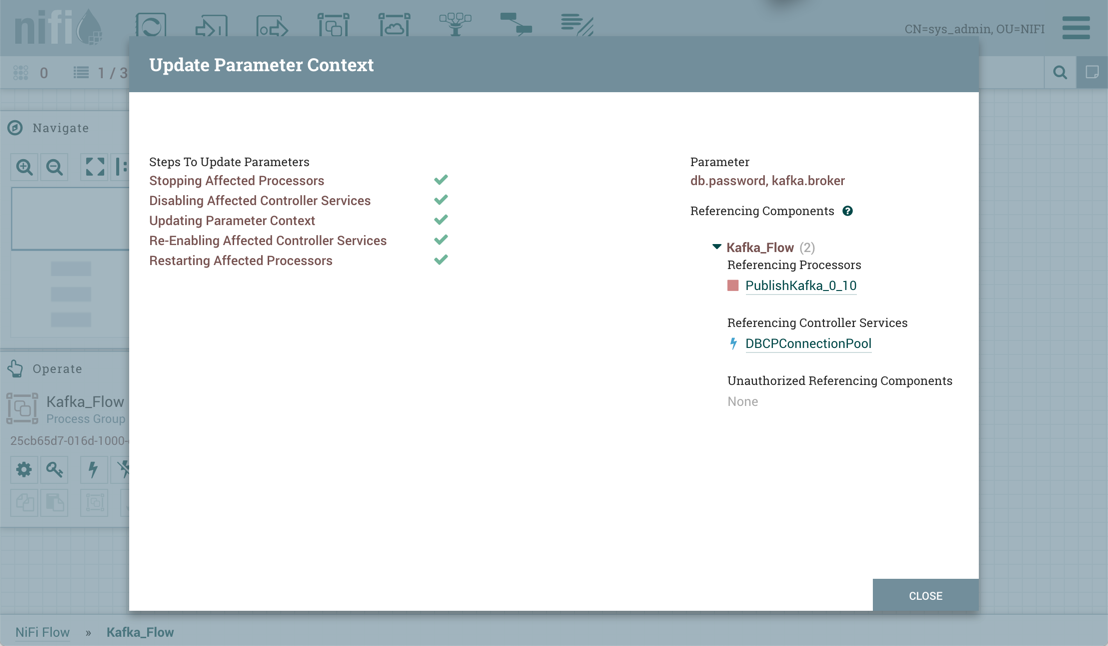

To complete the process, select "Apply" from the Parameter Context window. The following operations are performed to validate all components that reference the added or modified parameters: Stopping/Restarting affected Processors, Disabling/Re-enabling affected Controller Services, Updating Parameter Context.

The Referencing Components section now lists an aggregation of all the components that reference the set of parameters added, edited, or deleted, organized by process group.

Parameters and Expression Language

When adding a Parameter that makes use of the Expression Language, it is important to understand the context in which the Expression Language will be evaluated. The expression is always evaluated in the context of the Processor or Controller Service that references the Parameter. Take, for example, a scenario where a Parameter with the name Time is added with a value of ${now()}. The Expression Language results in a call to determine the system time when it is evaluated. When added as a Parameter, the system time is not evaluated when the Parameter is added, but rather when a Processor or Controller Service evaluates the Expression. That is, if a Processor has a Property whose value is set to #{Time} it will function in exactly the same manner as if the Property’s value were set to ${now()}. Each time that the property is referenced, it will produce a different timestamp.

Furthermore, some Properties do not allow for Expression Language, while others allow for Expression Language but do not evaluate expressions against FlowFile attributes. To help understand how this works, consider a Parameter named File whose value is ${filename}. Then consider three different properties, each with a different Expression Language Scope, and a FlowFile whose filename is test.txt. If each of those Properties is set to #{File}, then the following table illustrates the resultant value.

| Configured Property Value | Expression Language Scope | Effective Property Value | Notes |
|---|---|---|---|
| #{File} | FlowFile Attributes | test.txt | The filename is resolved by looking at the FlowFile's filename attribute. |
| #{File} | Environment | Empty String | FlowFile attributes are not in scope, and we assume there is no system property or environment variable named "filename" defined at the JVM level. |
| #{File} | None | ${filename} | The literal text "${filename}" is left unevaluated. |
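The three outcomes in the table can be reproduced with a toy model. The Python sketch below is not NiFi's implementation: the function `effective_value`, the scope strings, and the dictionaries are all hypothetical, and it handles only bare ${name} references, not Expression Language functions such as ${now()}. It assumes, as the table does, that the Parameter reference is substituted first and that the result is then evaluated according to the property's scope.

```python
import re

PARAM_REF = re.compile(r"#\{([^}]+)\}")
ATTR_REF = re.compile(r"\$\{([A-Za-z0-9_.\-]+)\}")


def effective_value(configured, parameters, scope,
                    flowfile_attributes=None, environment=None):
    """Toy model of Parameter substitution followed by scoped evaluation.

    scope is one of "FLOWFILE_ATTRIBUTES", "ENVIRONMENT", or "NONE"
    (labels chosen for this sketch to match the table above).
    """
    # Step 1: replace each #{Name} with the Parameter's raw value.
    text = PARAM_REF.sub(lambda m: parameters[m.group(1)], configured)
    if scope == "NONE":
        # Expression Language is not supported: keep the text literally.
        return text
    # Step 2: decide which variables the expression may see.
    variables = dict(environment or {})
    if scope == "FLOWFILE_ATTRIBUTES":
        variables.update(flowfile_attributes or {})
    # Unknown names evaluate to an empty string in this model.
    return ATTR_REF.sub(lambda m: variables.get(m.group(1), ""), text)


params = {"File": "${filename}"}
flowfile = {"filename": "test.txt"}

print(repr(effective_value("#{File}", params, "FLOWFILE_ATTRIBUTES", flowfile)))  # 'test.txt'
print(repr(effective_value("#{File}", params, "ENVIRONMENT", flowfile)))          # ''
print(repr(effective_value("#{File}", params, "NONE", flowfile)))                 # '${filename}'
```

The same Parameter therefore yields three different effective values, depending only on the scope of the property that references it.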

Assigning a Parameter Context to a Process Group

For a component to reference a Parameter, its Process Group must first be assigned a Parameter Context. Once assigned, processors and controller services within that Process Group may only reference Parameters within that Parameter Context.

A Process Group can only be assigned one Parameter Context, while a given Parameter Context can be assigned to multiple Process Groups.

| A user can only set the Parameter Context of a Process Group to one of the Parameter Contexts that the user has the view policy for. Additionally, in order to set the Parameter Context, the user must have the modify policy for the Process Group. See Accessing Parameters for more information. |

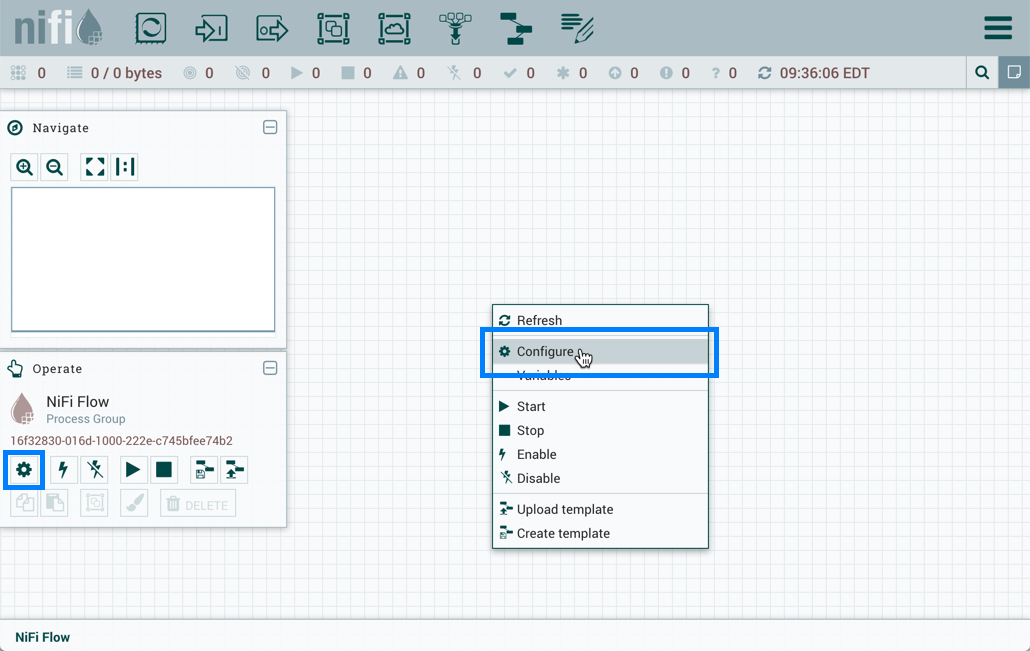

To assign a Parameter Context to a Process Group, click Configure, either from the Operate Palette or from the Process Group context menu.

In the Flow Configuration window, select the "General" tab. From the Process Group Parameter Context drop-down menu, select an existing Parameter Context or create a new one.

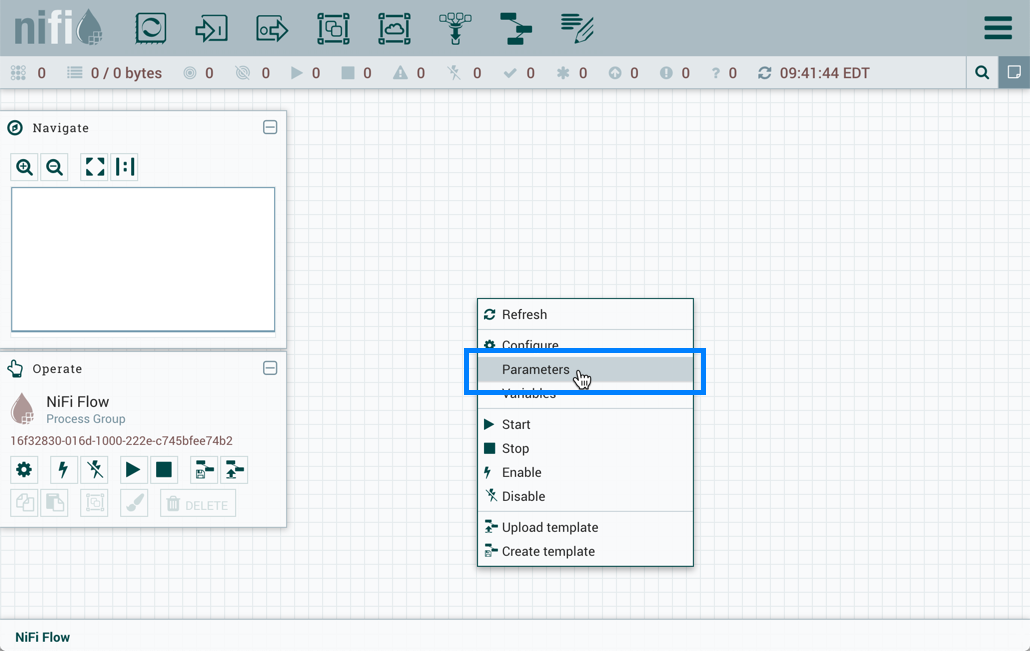

Select "Apply" to save the configuration changes. The Process Group context menu now includes a "Parameters" option which allows quick access to the Update Parameter Context window for the assigned Parameter Context.

If the Parameter Context for a Process Group is changed, all components that reference any Parameters in that Process Group will be stopped, validated, and restarted, assuming the components were previously running and are still valid.

| If a Parameter Context is unset from a Process Group, it does NOT inherit the Parameter Context from the parent Process Group. Instead, no Parameters can be referenced. Any component that does already reference a Parameter will become invalid. |

Referencing Parameters

Parameter Reference Syntax

To configure an eligible property to reference a Parameter, use the # symbol as the start, with the Parameter’s name enclosed in curly braces:

#{Parameter.Name}

This can be escaped using an additional # character at the beginning. To illustrate this, assume that the Parameter abc has a value of xxx and Parameter def has a value of yyy. Then, the following user-defined property values will evaluate to these effective values:

| User-Entered Literal Property Value | Effective Property Value | Explanation |
|---|---|---|
|  |  | Simple substitution |
|  |  | Simple substitution with additional literal data |
|  |  | Multiple substitution with additional literal data |
|  |  | No { } for parameter replacement |
|  |  | No { } for parameter replacement |
|  |  | Escaped # for literal interpretation |
|  |  | Escaped # for literal interpretation, followed by simple substitution |
|  |  | Escaped # for literal interpretation, twice |
|  |  | Escaped # for literal interpretation, twice, followed by simple substitution |
|  | Exception thrown on property set operation |  |
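The substitution and escaping behaviour described in the explanations above can be sketched in a few lines of Python. This is an illustrative model, not NiFi's parser: the function `substitute_parameters` is hypothetical, and it assumes that each pair of leading # characters collapses to one literal #, with a remaining single # starting a Parameter reference. The input strings are examples chosen for this sketch, using the abc and def Parameters defined above.

```python
import re

# One or more '#' followed by a braced Parameter name.
_REFERENCE = re.compile(r"(#+)\{([A-Za-z0-9\-_. ]+)\}")


def substitute_parameters(value, parameters):
    """Apply Parameter substitution with '#' escaping (simplified model)."""
    def replace(match):
        hashes, name = match.group(1), match.group(2)
        literal_hashes = "#" * (len(hashes) // 2)  # each '##' becomes one '#'
        if len(hashes) % 2 == 1:
            # An unpaired '#' remains, so this is a real Parameter reference.
            return literal_hashes + parameters[name]
        # All '#' were escapes, so the braces and name stay literal.
        return literal_hashes + "{" + name + "}"
    return _REFERENCE.sub(replace, value)


params = {"abc": "xxx", "def": "yyy"}

print(substitute_parameters("#{abc}", params))         # xxx
print(substitute_parameters("#{abc}/#{def}", params))  # xxx/yyy
print(substitute_parameters("#abc", params))           # #abc
print(substitute_parameters("##{abc}", params))        # #{abc}
print(substitute_parameters("###{abc}", params))       # #xxx
print(substitute_parameters("####{abc}", params))      # ##{abc}
print(substitute_parameters("#####{abc}", params))     # ##xxx
```

In this sketch, a reference to an undefined Parameter raises a KeyError, and a name containing a disallowed character is simply left untouched. NiFi instead rejects an invalid reference when the property is set.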

When referencing a Parameter from within Expression Language, the Parameter reference is evaluated first. As an example, to replace xxx with zzz for the abc Parameter:

${ #{abc}:replace('xxx', 'zzz') }

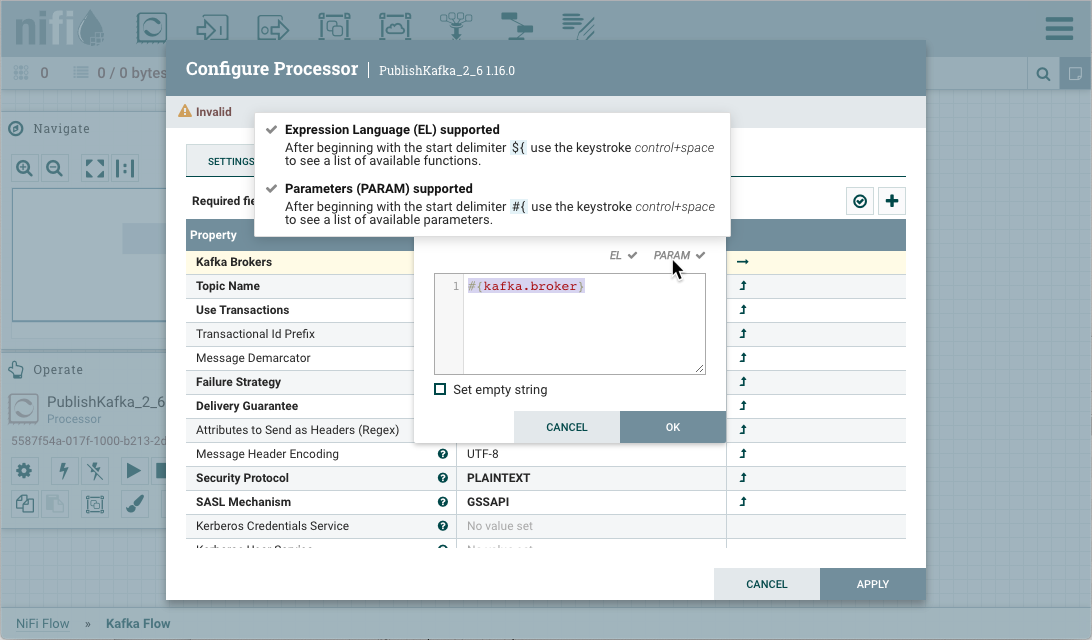

Referencing and Creating Parameters During Component Configuration

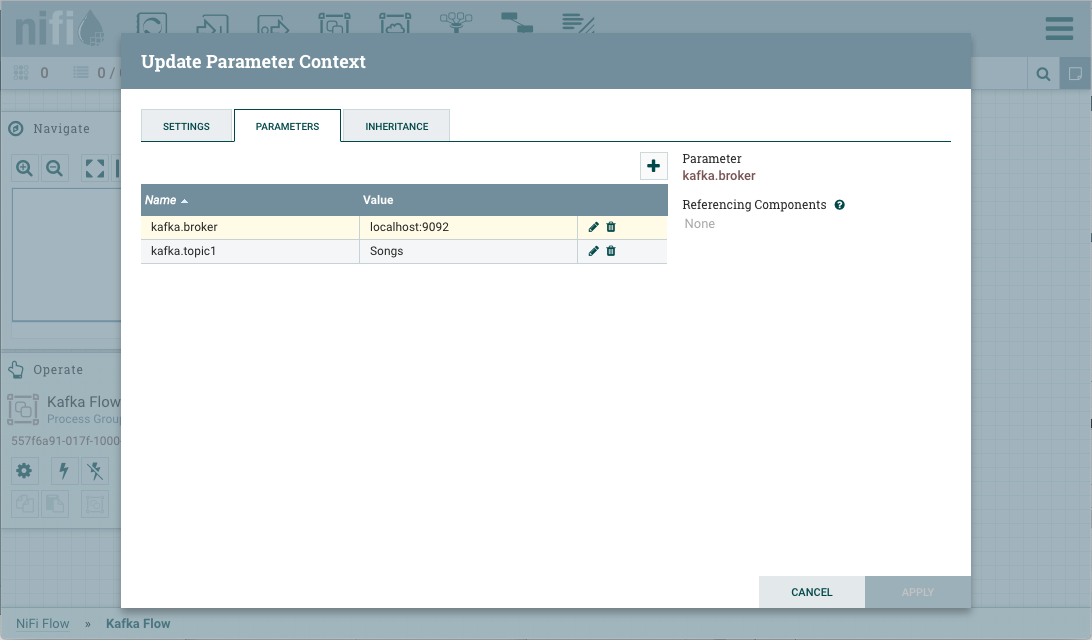

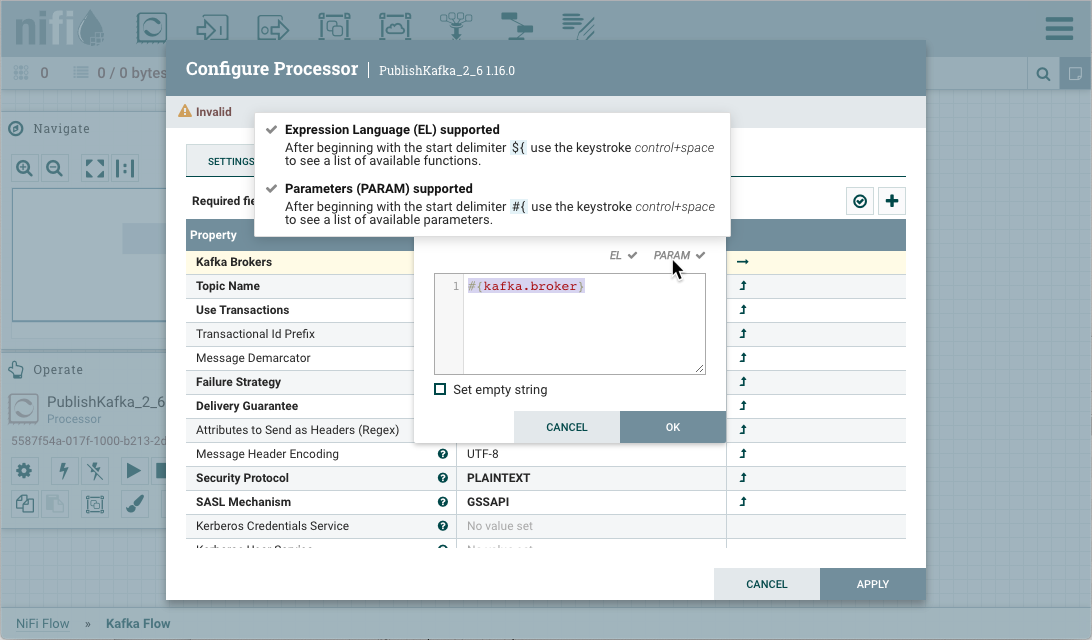

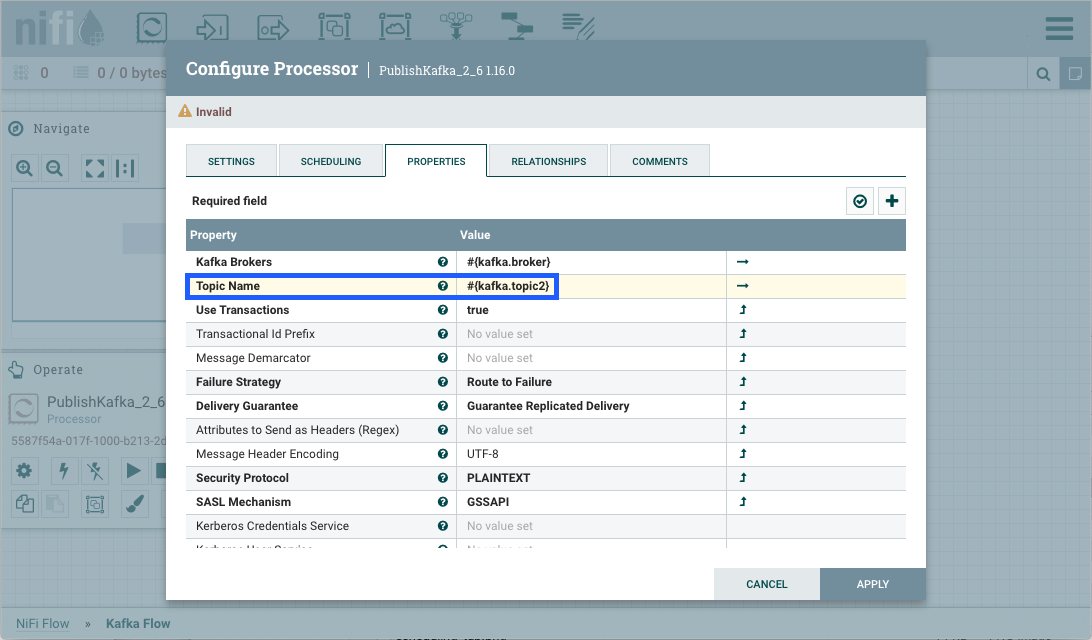

Parameters can be easily referenced or created as you configure the components in your flow. For example, assume a process group has the Parameter Context "Kafka Settings" assigned to it. "Kafka Settings" contains the parameters kafka.broker and kafka.topic1.

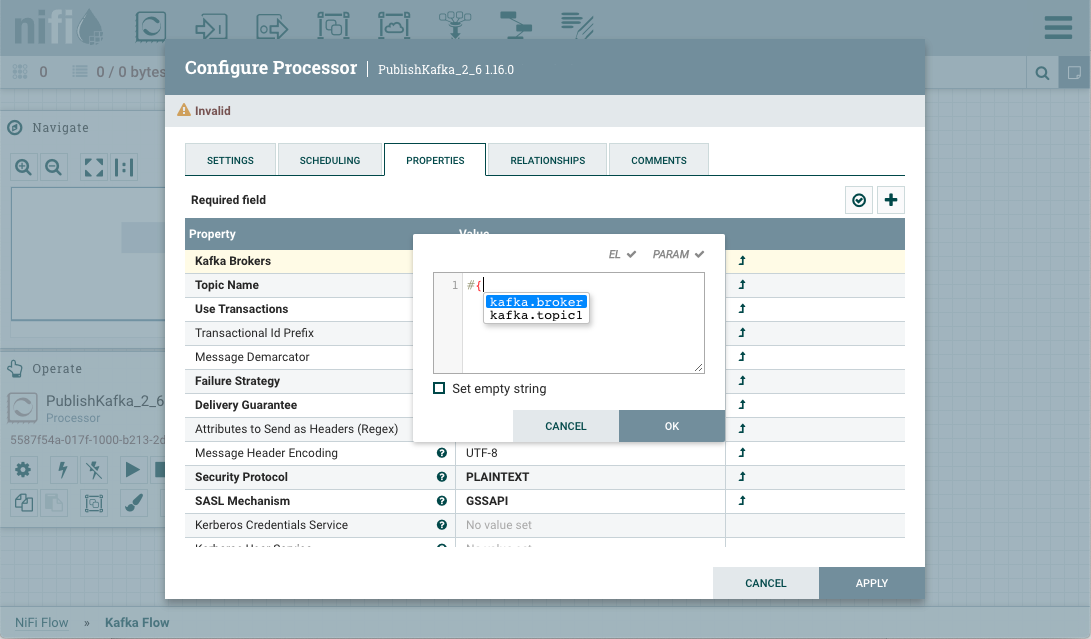

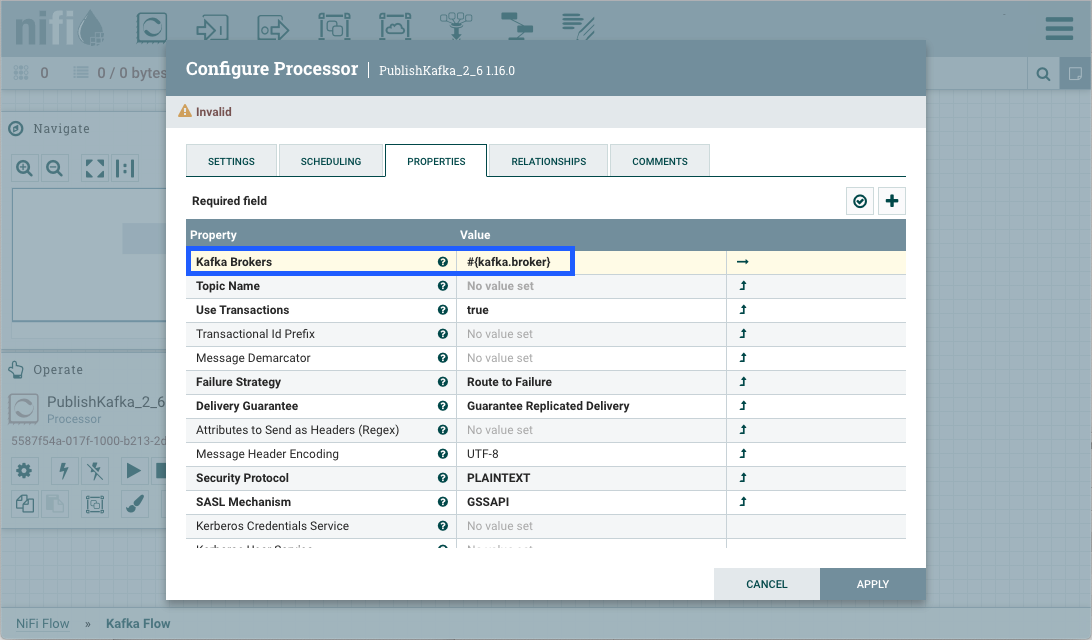

To reference kafka.broker as the value for the "Kafka Brokers" property in the PublishKafka processor, clear the default value and begin a new entry with the start delimiter #{. Next use the keystroke control+space to show the list of available parameters:

Select kafka.broker and complete the entry with a closing curly brace }.

Help text describing this process is displayed when hovering over the Expression Language and Parameters eligibility indicators.

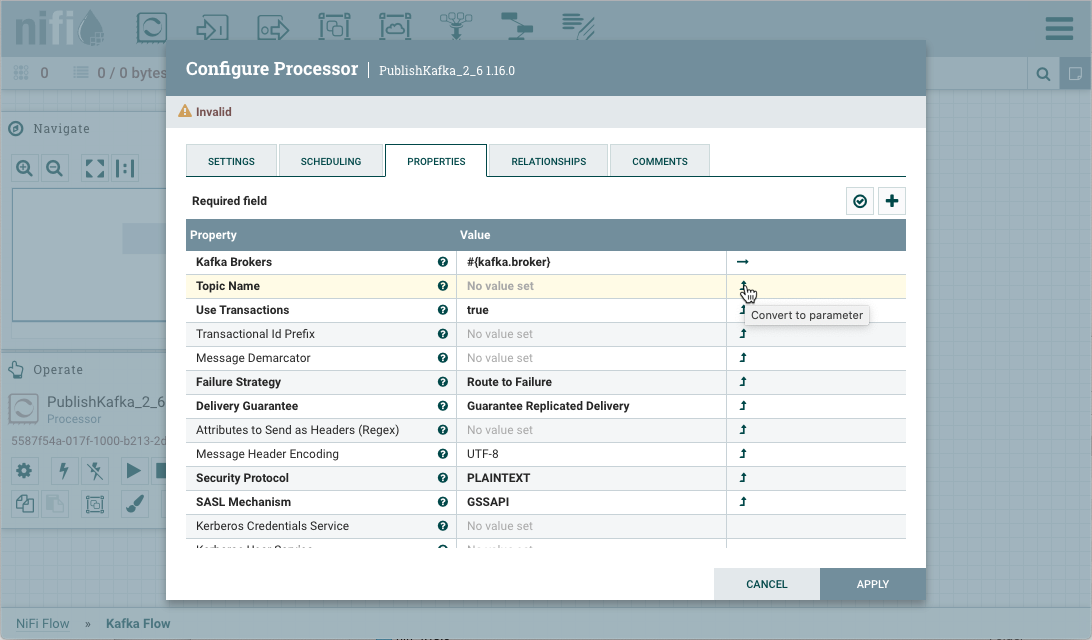

Parameters can also be created on the fly. For example, to create a parameter for the "Topic Name" property, select the "Convert to Parameter" icon in that property’s row. This icon will only be available if the user has appropriate permissions to modify the Parameter Context (see Accessing Parameters for more information).

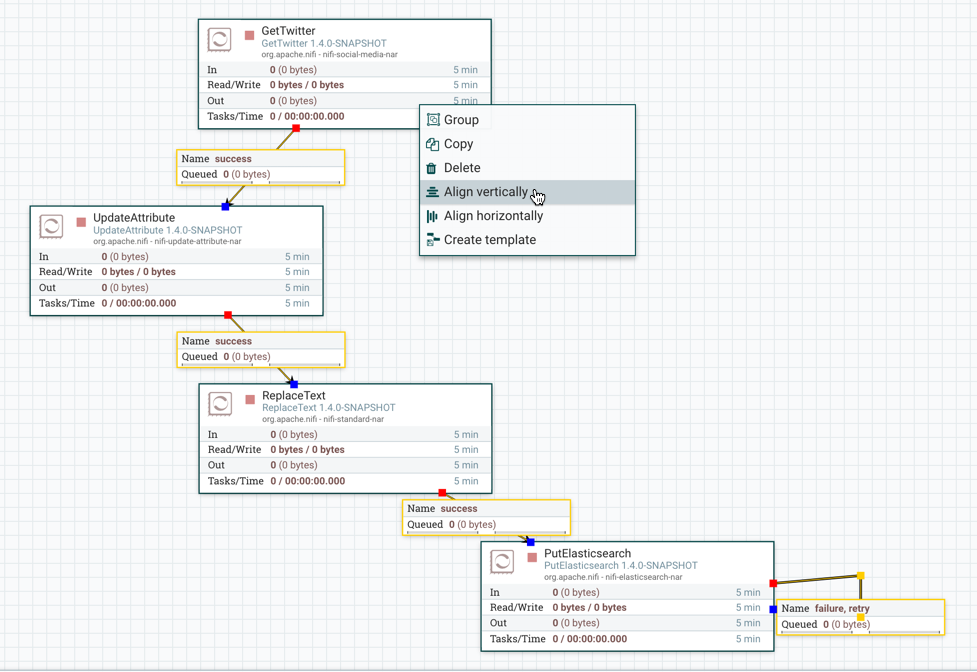

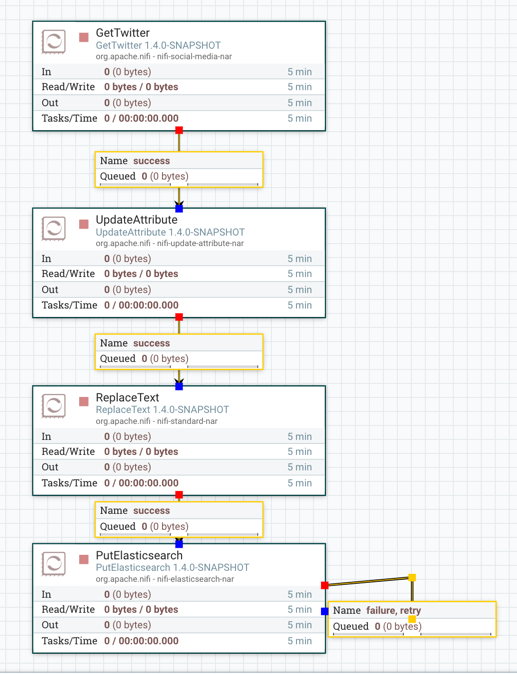

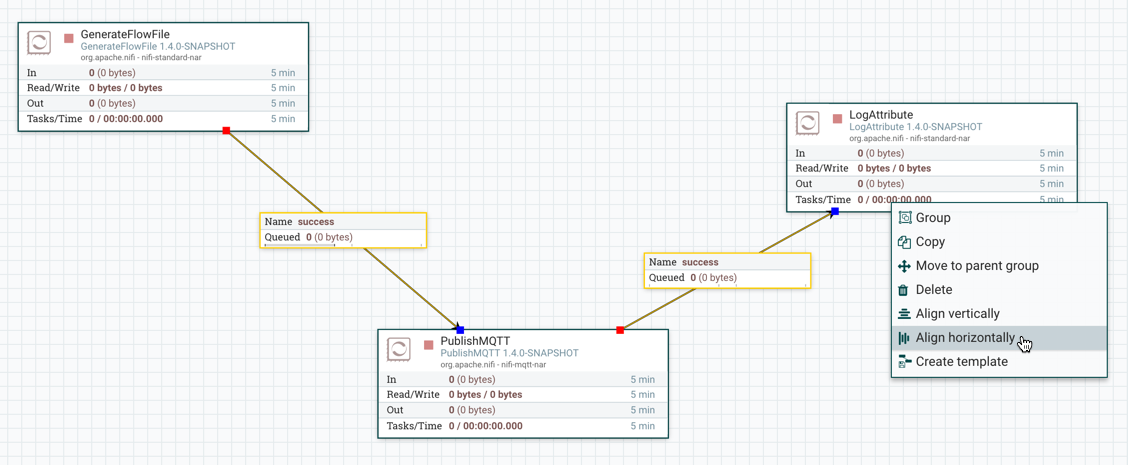

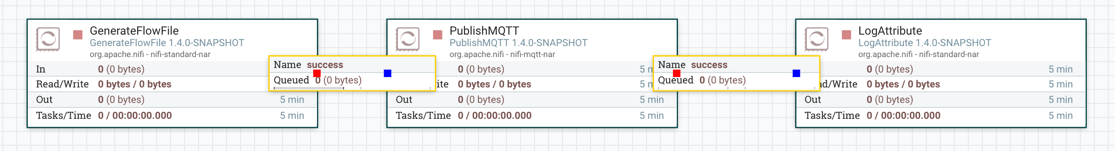

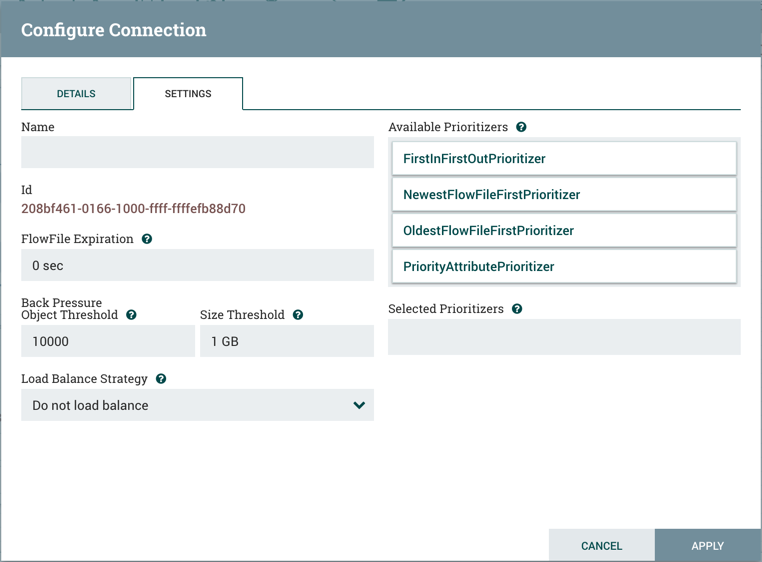

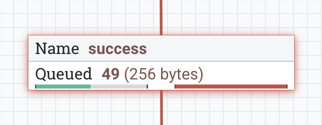

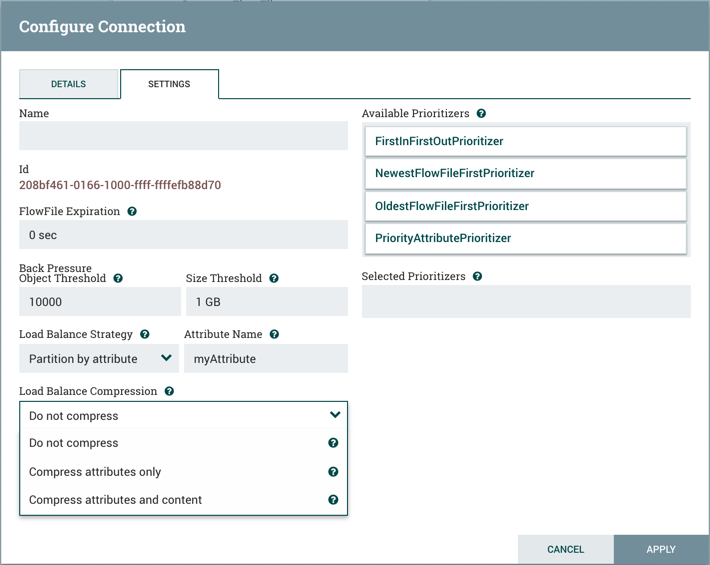

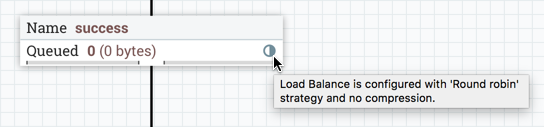

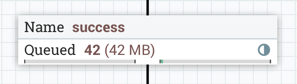

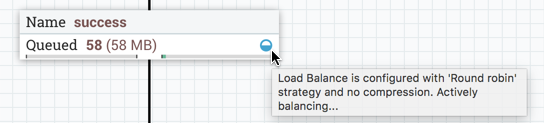

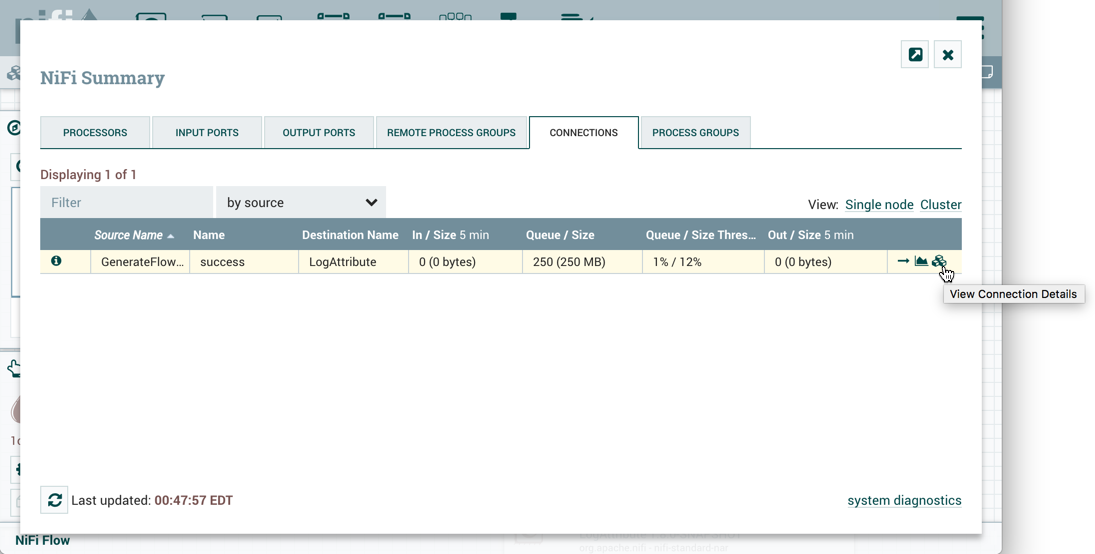

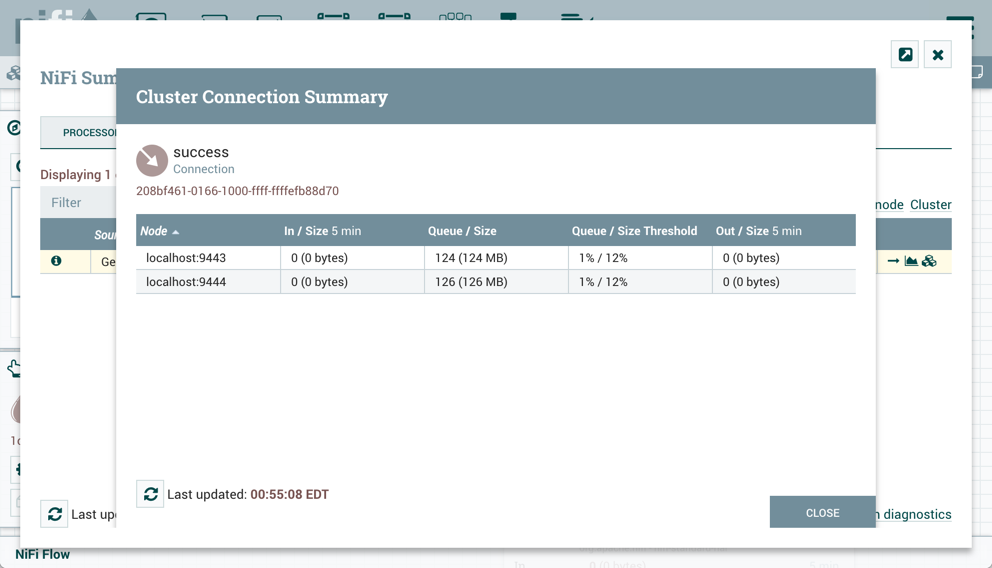

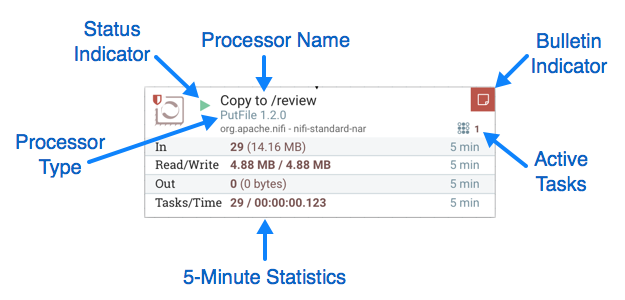

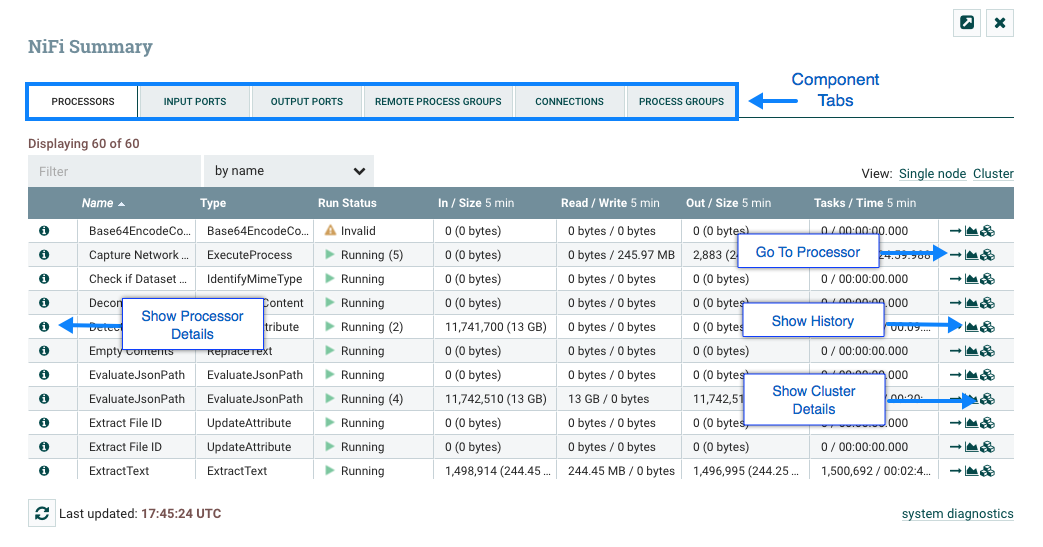

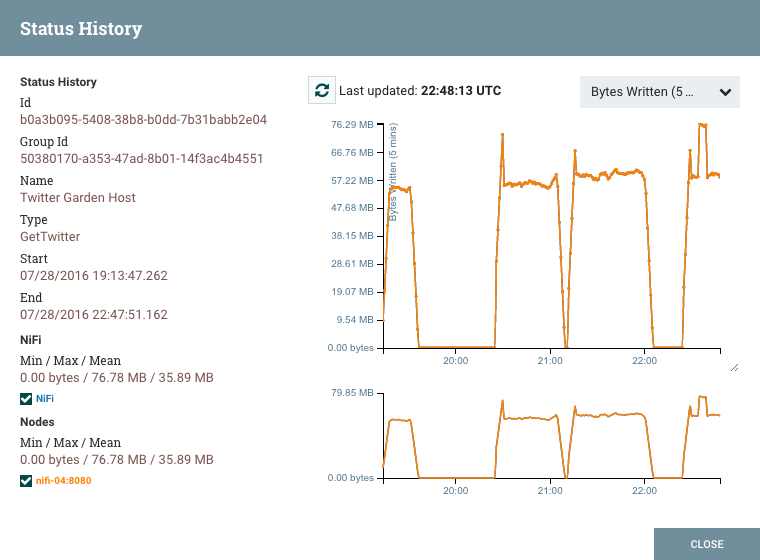

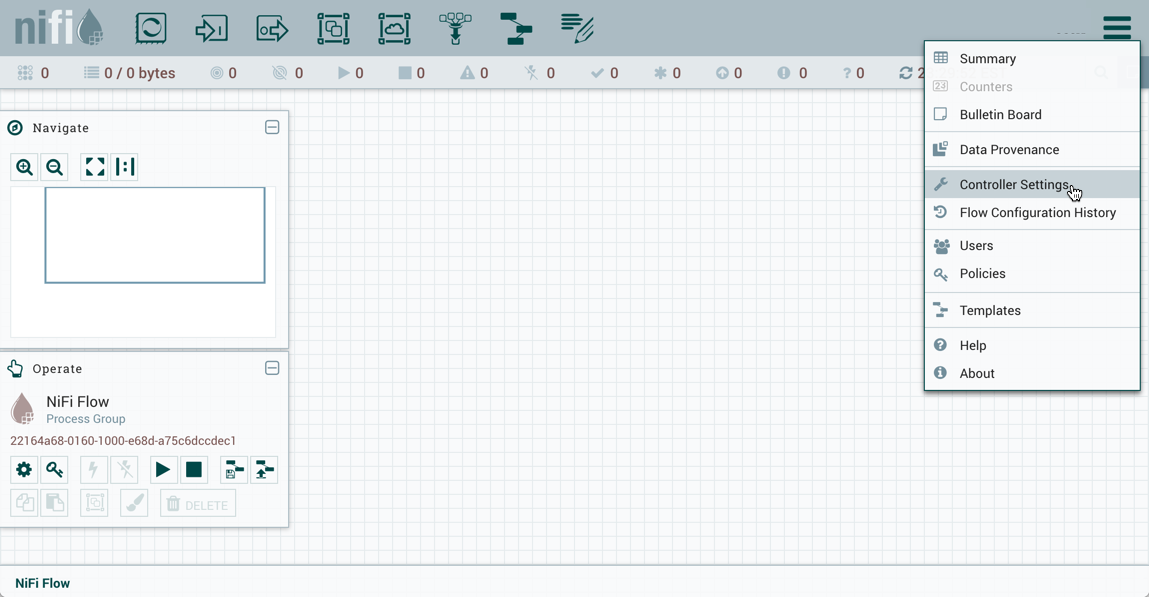

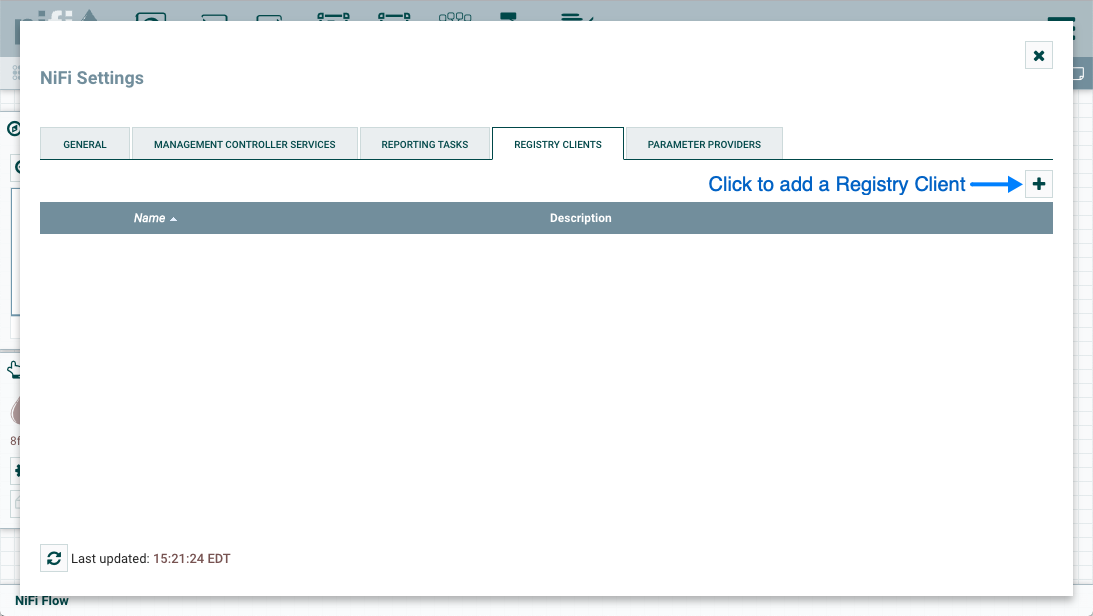

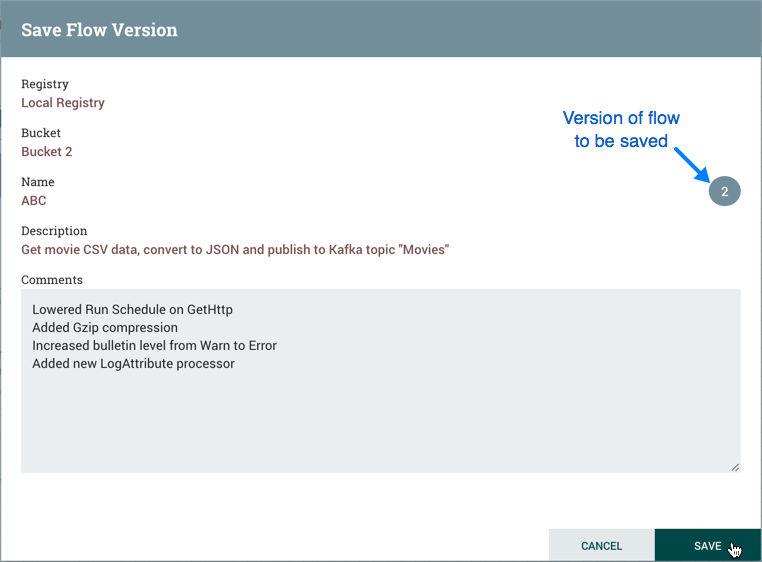

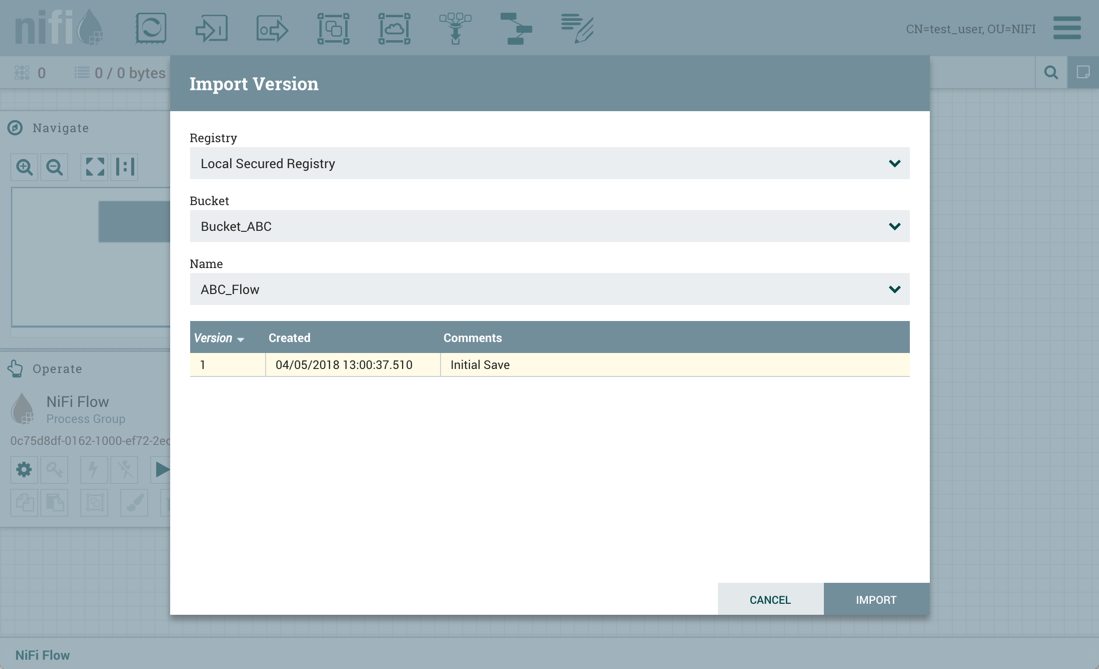

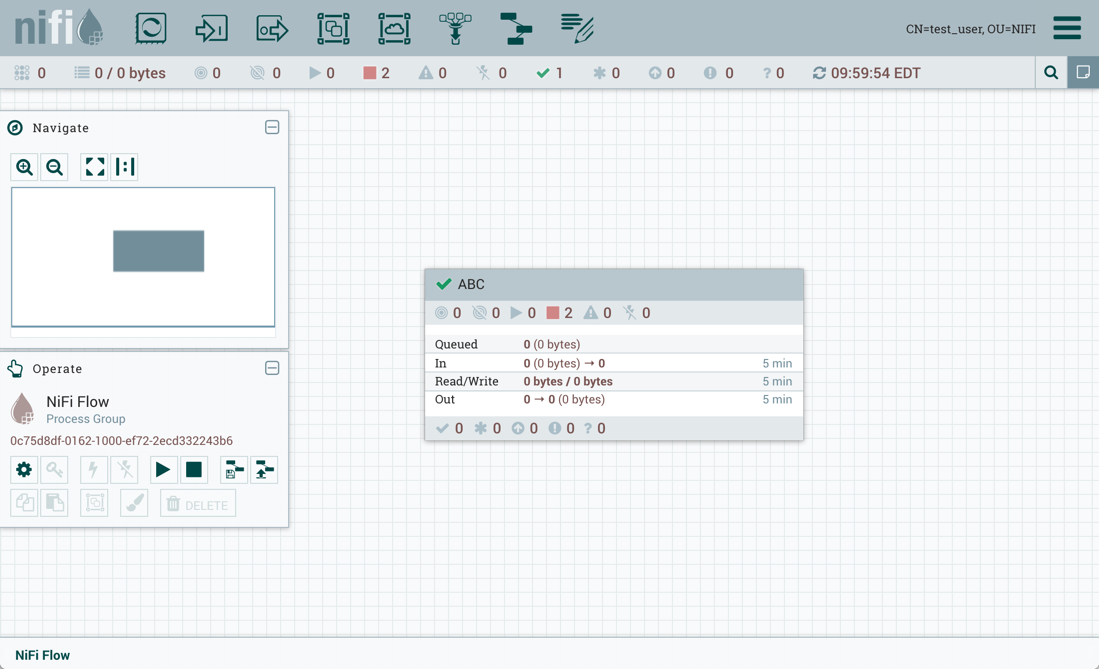

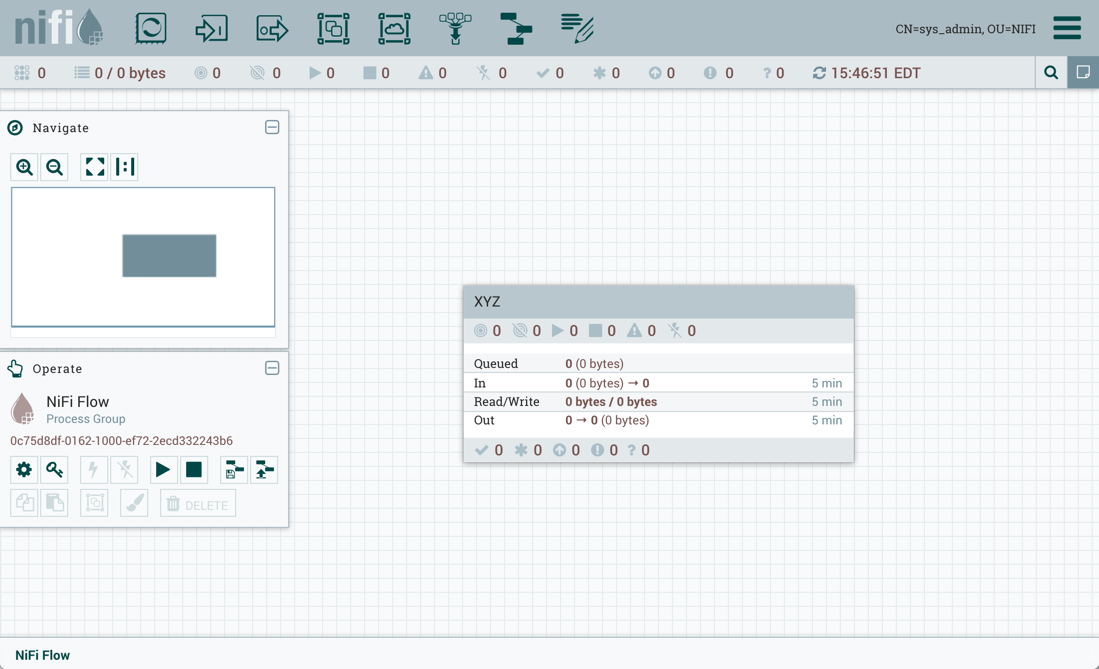

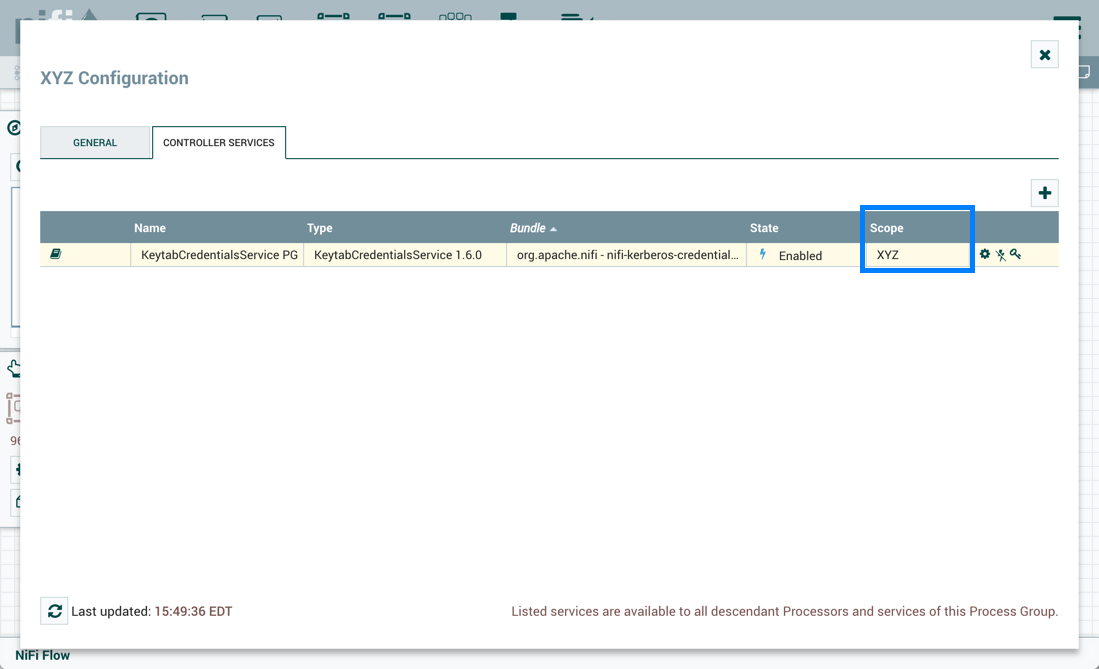

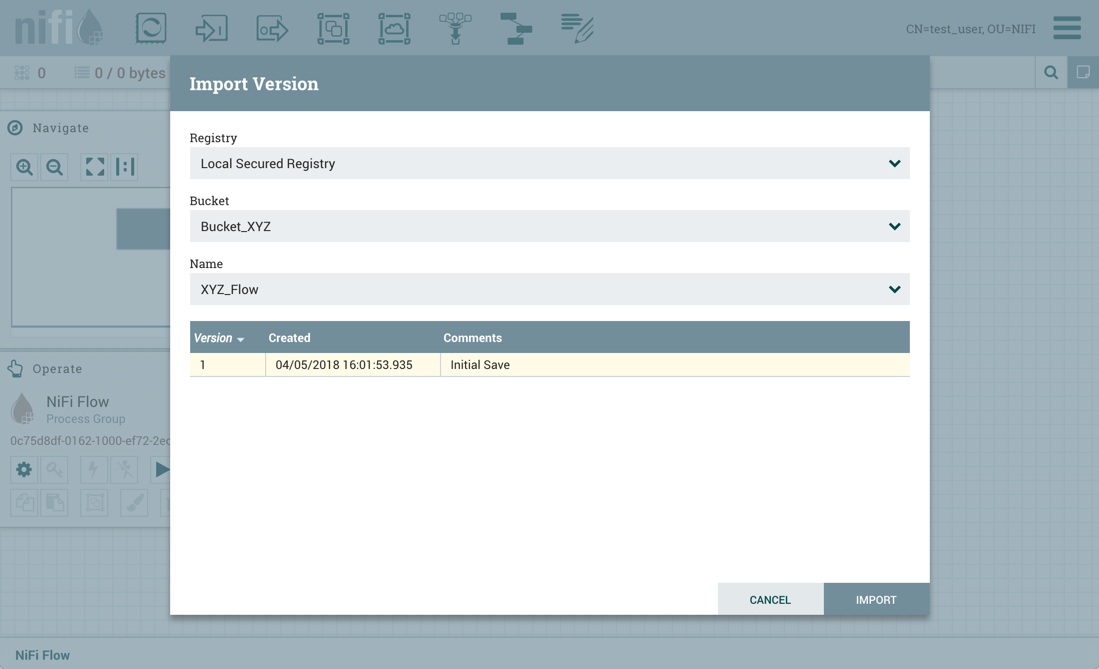

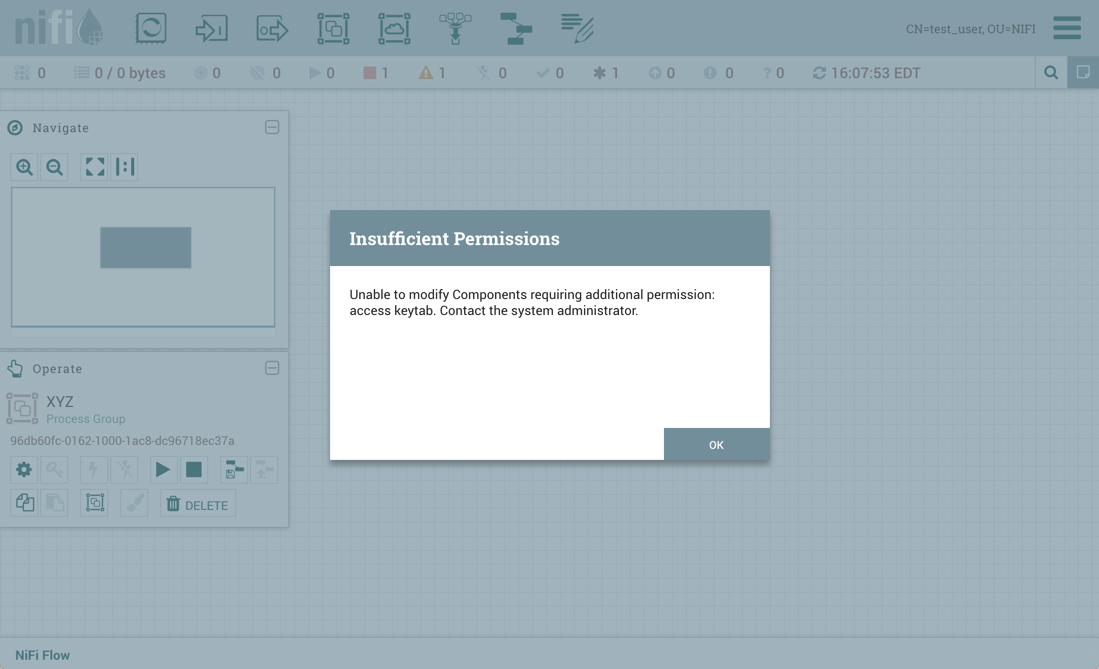

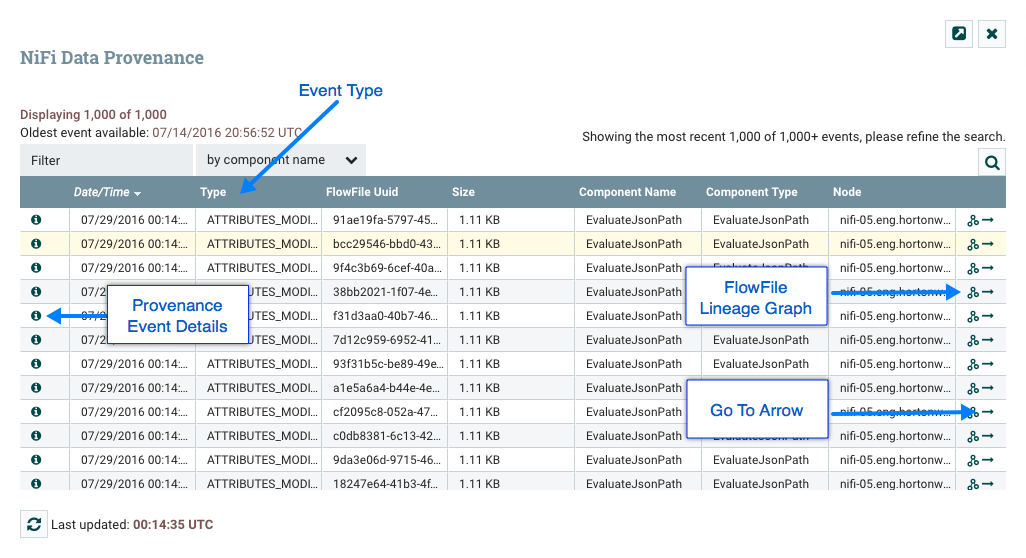

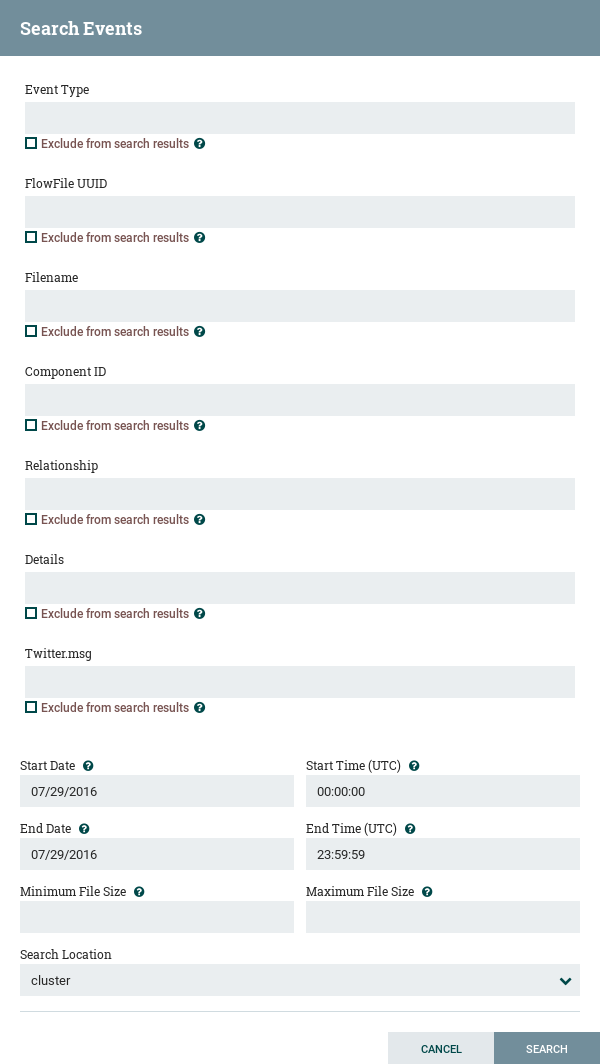

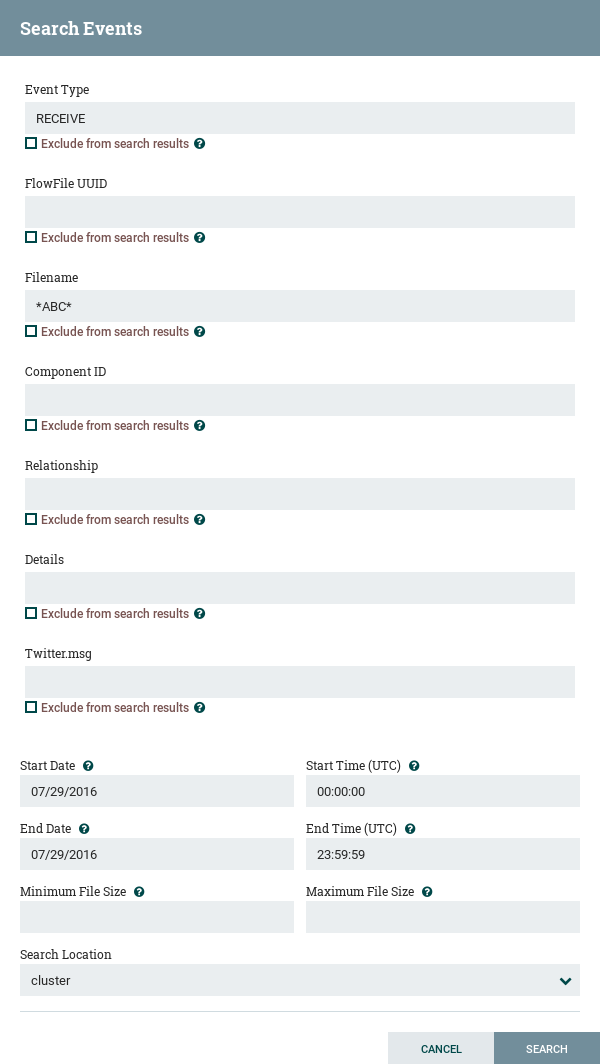

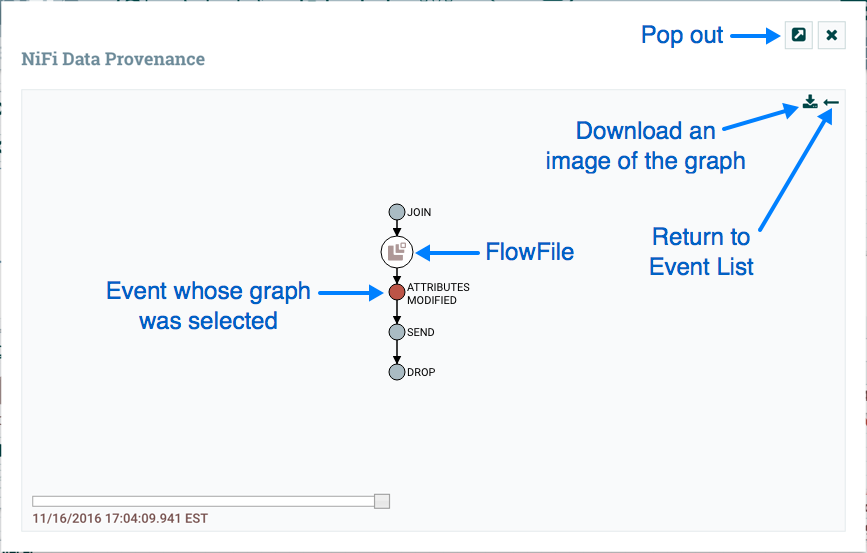

The Add Parameter dialog will open. Configure the new parameter as desired.