System Requirements

Apache NiFi can run on something as simple as a laptop, but it can also be clustered across many enterprise-class servers. Therefore, the amount of hardware and memory needed will depend on the size and nature of the dataflow involved. Because NiFi stores data on disk while processing it, NiFi needs sufficient disk space allocated for its various repositories, particularly the content repository, flowfile repository, and provenance repository (see the System Properties section for more information about these repositories). NiFi has the following minimum system requirements:

- Requires Java 21
- Use of Python-based Processors (beta feature) requires Python 3.10, 3.11, or 3.12
- Supported Operating Systems:
  - Linux
  - Unix
  - Windows
  - macOS
- Supported Web Browsers:
  - Microsoft Edge: Current & (Current - 1)
  - Mozilla Firefox: Current & (Current - 1)
  - Google Chrome: Current & (Current - 1)
  - Safari: Current & (Current - 1)

| Under sustained and extremely high throughput, the CodeCache settings may need to be tuned to avoid sudden performance loss. See the Bootstrap Properties section for more information. |

How to install and start NiFi

- Linux/Unix/macOS
  - Decompress and untar into the desired installation directory
  - Make any desired edits in the files found under <installdir>/conf
    - At a minimum, we recommend editing the nifi.properties file and entering a password for the nifi.sensitive.props.key (see System Properties below)
  - From the <installdir>/bin directory, execute the following commands by typing ./nifi.sh <command>:
    - start: starts NiFi in the background
    - stop: stops a NiFi instance running in the background
    - status: provides the current status of NiFi
    - run: runs NiFi in the foreground and waits for a Ctrl-C to initiate shutdown of NiFi
- Windows
  - Decompress into the desired installation directory
  - Make any desired edits in the files found under <installdir>/conf
    - At a minimum, we recommend editing the nifi.properties file and entering a password for the nifi.sensitive.props.key (see System Properties below)
  - From the <installdir>\bin directory, execute the following commands by typing .\nifi.cmd <command>:
    - start: starts NiFi in the background
    - stop: stops a NiFi instance running in the background
    - status: provides the current status of NiFi
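As a minimal sketch of the nifi.sensitive.props.key step, assuming nifi.properties uses plain key=value lines, the Python below generates a random 32-character value with the standard secrets module and writes it into the property. The helper names (generate_sensitive_props_key, set_property) are hypothetical and not part of NiFi, and the key length is an arbitrary choice; check the System Properties section for any length requirements in your version.

```python
import secrets


def generate_sensitive_props_key(nbytes: int = 24) -> str:
    """Return a random URL-safe string; 24 random bytes encode to 32 characters."""
    return secrets.token_urlsafe(nbytes)


def set_property(properties_text: str, name: str, value: str) -> str:
    """Return properties_text with `name` set to `value`.

    Only active `name=value` lines are matched. Commented-out lines and
    `name = value` spacing are not recognized, so in those cases the
    property is appended at the end instead.
    """
    lines = properties_text.splitlines()
    prefix = name + "="
    for index, line in enumerate(lines):
        if line.strip().startswith(prefix):
            lines[index] = prefix + value
            break
    else:
        # No active line for this property: append it.
        lines.append(prefix + value)
    return "\n".join(lines) + "\n"
```

For example, read <installdir>/conf/nifi.properties, pass its text through set_property(text, "nifi.sensitive.props.key", generate_sensitive_props_key()), and write the result back before starting NiFi for the first time.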

When NiFi first starts up, the following files and directories are created:

- content_repository
- database_repository
- flowfile_repository
- provenance_repository
- work directory
- logs directory
- Within the conf directory, the flow.json.gz file is created

| For security purposes, when no security configuration is provided, NiFi will now bind to 127.0.0.1 by default and the UI will only be accessible through this loopback interface. HTTPS properties should be configured to access NiFi from other interfaces. See the Security Configuration section for guidance on how to do this. |

See the System Properties section of this guide for more information about configuring NiFi repositories and configuration files.

Build a Custom Distribution

The binary build of Apache NiFi that is provided by the Apache mirrors does not contain every NAR file that is part of the official release. This is due to size constraints imposed by the mirrors to reduce the expenses associated with hosting such a large project. The Developer Guide has a list of optional Maven profiles that can be activated to build a binary distribution of NiFi with these extra capabilities.

Java 21 is required for building and running Apache NiFi.

The next step is to download a copy of the Apache NiFi source code from the NiFi Downloads page. The source build is needed because it includes a module called nifi-assembly, which is the Maven module that builds a binary distribution. Expand the archive and run a Maven clean build. The following example shows how to build a distribution that activates the include-grpc, include-graph, and include-media profiles to add support for gRPC, graph databases, and Apache Tika content and metadata extraction.

cd <nifi_source_folder>/nifi-assembly

./mvnw clean install -Pinclude-grpc,include-graph,include-media

There is also a specific profile allowing you to build NiFi with all of the additional bundles that are not included by default:

./mvnw clean install -Pinclude-all

This will include all optional bundles.

Port Configuration

NiFi

The following table lists the default ports used by NiFi and the corresponding property in the nifi.properties file.

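| Function | Property | Default Value |
|---|---|---|
| HTTPS Port | nifi.web.https.port | 8443 |
| Remote Input Socket Port* | nifi.remote.input.socket.port | 10443 |
| Cluster Node Protocol Port* | nifi.cluster.node.protocol.port | 11443 |
| Cluster Node Load Balancing Port | nifi.cluster.load.balance.port | 6342 |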

| The ports marked with an asterisk (*) have property values that are blank by default in nifi.properties. |
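To check which of these ports are actually set in a given nifi.properties, a minimal sketch like the following can help. It assumes the property names nifi.web.https.port, nifi.remote.input.socket.port, nifi.cluster.node.protocol.port, and nifi.cluster.load.balance.port, and it parses only simple key=value lines (no line continuations or ':' separators, which the full Java properties format also allows). Blank or missing values come back as None, matching the asterisk note above. The function names are hypothetical.

```python
from __future__ import annotations

# Assumed names of the port properties in nifi.properties.
PORT_PROPERTIES = (
    "nifi.web.https.port",
    "nifi.remote.input.socket.port",
    "nifi.cluster.node.protocol.port",
    "nifi.cluster.load.balance.port",
)


def parse_properties(text: str) -> dict[str, str]:
    """Parse simple key=value lines, skipping blank lines and # or ! comments."""
    props: dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith(("#", "!")):
            continue
        key, sep, value = line.partition("=")
        if sep:
            props[key.strip()] = value.strip()
    return props


def configured_ports(text: str) -> dict[str, int | None]:
    """Map each port property to its integer value, or None when blank or absent."""
    props = parse_properties(text)
    return {
        name: int(props[name]) if props.get(name) else None
        for name in PORT_PROPERTIES
    }
```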

Embedded ZooKeeper

The following table lists the default ports used by an Embedded ZooKeeper Server and the corresponding property in the zookeeper.properties file.

| Function | Property | Default Value |
|---|---|---|
| ZooKeeper Client Port (Deprecated: client port is no longer specified on a separate line as of NiFi 1.10.x) | clientPort | 2181 |
| ZooKeeper Server Quorum and Leader Election Ports | server.1 | none |

Commented examples for the ZooKeeper server ports are included in the zookeeper.properties file in the form server.N=nifi-nodeN-hostname:2888:3888;2181.
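The server.N form packs several ports into one value. As an illustrative sketch (parse_server_line and ZooKeeperServer are hypothetical names), this Python splits an entry into the server id, hostname, quorum port (2888 in the example), leader election port (3888), and optional client port (2181). It does not handle the optional role suffix, such as :participant or :observer, that ZooKeeper also accepts.

```python
from __future__ import annotations

import re
from dataclasses import dataclass

# server.N=host:quorumPort:electionPort;clientPort (the ;clientPort part is optional)
_SERVER_LINE = re.compile(
    r"^server\.(?P<id>\d+)=(?P<host>[^:;\s]+):(?P<quorum>\d+):(?P<election>\d+)"
    r"(?:;(?P<client>\d+))?$"
)


@dataclass(frozen=True)
class ZooKeeperServer:
    server_id: int
    host: str
    quorum_port: int
    election_port: int
    client_port: int | None


def parse_server_line(line: str) -> ZooKeeperServer:
    """Parse one server.N entry from zookeeper.properties."""
    match = _SERVER_LINE.match(line.strip())
    if match is None:
        raise ValueError(f"not a server.N entry: {line!r}")
    client = match.group("client")
    return ZooKeeperServer(
        server_id=int(match.group("id")),
        host=match.group("host"),
        quorum_port=int(match.group("quorum")),
        election_port=int(match.group("election")),
        client_port=int(client) if client else None,
    )
```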

Configuration Best Practices

If you are running on Linux, consider these best practices. Typical Linux defaults are not necessarily well-tuned for the needs of an I/O-intensive application like NiFi. For all of these areas, your distribution’s requirements may vary. Use these sections as advice, but consult your distribution-specific documentation for how best to achieve these recommendations.

- Maximum File Handles

At any one time, NiFi may have a very large number of file handles open. Increase the limits by editing /etc/security/limits.conf to add something like:

* hard nofile 50000
* soft nofile 50000

- Maximum Forked Processes

NiFi may be configured to generate a significant number of threads. To increase the allowable number, edit /etc/security/limits.conf to add:

* hard nproc 10000
* soft nproc 10000

Your distribution may also require an edit to /etc/security/limits.d/90-nproc.conf by adding:

* soft nproc 10000
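After editing limits.conf, it can be useful to confirm the limits a process actually receives. A minimal sketch using Python's Unix-only resource module is below; check_limits and below_recommended are hypothetical names. Note that limits.conf is typically applied at login (for example by pam_limits), so run the check in a fresh session as the user that runs NiFi; a NiFi instance started as a systemd service may take its limits from the unit file instead.

```python
import resource

# Values recommended in this guide for /etc/security/limits.conf.
RECOMMENDED = {
    "nofile": (resource.RLIMIT_NOFILE, 50000),
    "nproc": (resource.RLIMIT_NPROC, 10000),
}


def below_recommended(soft: int, hard: int, recommended: int) -> bool:
    """True if the soft or hard limit is finite and lower than `recommended`."""

    def too_low(value: int) -> bool:
        # RLIM_INFINITY means "unlimited", which always satisfies the recommendation.
        return value != resource.RLIM_INFINITY and value < recommended

    return too_low(soft) or too_low(hard)


def check_limits() -> dict[str, bool]:
    """For the current process, report which limits fall below the recommendations."""
    report = {}
    for name, (which, recommended) in RECOMMENDED.items():
        soft, hard = resource.getrlimit(which)
        report[name] = below_recommended(soft, hard, recommended)
    return report
```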

- Increase the number of TCP socket ports available

This is particularly important if your flow will be setting up and tearing down a large number of sockets in a short period of time.

sudo sysctl -w net.ipv4.ip_local_port_range="10000 65000"

- Set how long sockets stay in a TIME_WAIT state when closed

You don’t want your sockets to linger too long, given that you want to be able to quickly set up and tear down new sockets. It is a good idea to read more about it and adjust to something like:

sudo sysctl -w net.netfilter.nf_conntrack_tcp_timeout_time_wait="1"

- Tell Linux you never want NiFi to swap

Swapping is fantastic for some applications. It isn’t good for something like NiFi that always wants to be running. To tell Linux you’d like swapping off, you can edit /etc/sysctl.conf to add the following line:

vm.swappiness = 0

For the partitions handling the various NiFi repos, turn off things like atime. Doing so can cause a surprising bump in throughput. Edit the /etc/fstab file and, for the partition(s) of interest, add the noatime option.
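To confirm the noatime option took effect after remounting, one approach on Linux is to read /proc/mounts and find the mount point that contains each repository directory. This is a minimal sketch with hypothetical helper names: mount points containing spaces (escaped as \040 in /proc/mounts) are not handled, and paths should be absolute and resolved (for example with os.path.realpath).

```python
from __future__ import annotations


def mount_options(mounts_text: str) -> dict[str, set[str]]:
    """Map each mount point in /proc/mounts-style text to its set of options."""
    result: dict[str, set[str]] = {}
    for line in mounts_text.splitlines():
        fields = line.split()
        if len(fields) >= 4:
            # Fields: device, mount point, filesystem type, comma-separated options, ...
            result[fields[1]] = set(fields[3].split(","))
    return result


def mount_point_for(path: str, mounts: dict[str, set[str]]) -> str:
    """Return the longest mount point that contains `path` (an absolute, resolved path)."""
    best = "/"
    for point in mounts:
        prefix = point.rstrip("/") + "/"
        if (path == point or path.startswith(prefix)) and len(point) > len(best):
            best = point
    return best


def has_noatime(path: str, mounts_text: str) -> bool:
    """True if the filesystem holding `path` is mounted with the noatime option."""
    mounts = mount_options(mounts_text)
    return "noatime" in mounts.get(mount_point_for(path, mounts), set())
```

For example, pass os.path.realpath of the content_repository directory and the text of /proc/mounts to has_noatime.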

Recommended Antivirus Exclusions

Antivirus software can take a long time to scan large directories and the numerous files within them. Additionally, if the antivirus software locks files or directories during a scan, those resources are unavailable to NiFi processes, causing latency or unavailability of these resources in a NiFi instance/cluster. To prevent these performance and reliability issues from occurring, it is highly recommended to configure your antivirus software to skip scans on the following NiFi directories:

- content_repository
- flowfile_repository
- logs
- provenance_repository
- state

Logging Configuration

NiFi uses Logback as the runtime logging implementation. The conf directory contains a standard logback.xml configuration with default appender and level settings. The Logback manual provides a complete reference of available options.

Standard Log Files

The standard logback configuration includes the following appender definitions and associated log files:

| File | Description |
|---|---|
| nifi-app.log | Application log containing framework and component messages |
| nifi-bootstrap.log | Bootstrap log containing startup and shutdown messages |
| nifi-deprecation.log | Deprecation log containing warnings for deprecated components and features |
| nifi-request.log | HTTP request log containing user interface and REST API access messages |
| nifi-user.log | User log containing authentication and authorization messages |

Mapped Diagnostic Context Properties

Logging for extension components such as Processors and Controller Services includes variables in the Mapped Diagnostic Context (MDC) that provide additional information about the component location. MDC values can be added to log messages with custom layout configuration.

Component logs provide the following MDC named values:

- processGroupId contains the UUID of the Process Group
- processGroupIdPath contains the hierarchy of UUIDs for Process Groups with separators
- processGroupName contains the name of the Process Group
- processGroupNamePath contains the hierarchy of names for Process Groups with separators

MDC named values can be added to a Logback pattern layout using the mdc conversion word.

<pattern>%date %level [%thread] %mdc{processGroupId} %logger{40} %msg%n</pattern>

Logs from classes other than extension components do not have MDC named values. Logs formatted using the pattern layout will include an empty space when an MDC named value is not found.

Deprecation Logging

The nifi-deprecation.log contains warning messages describing components and features that will be removed in subsequent versions. Deprecation warnings should be evaluated and addressed to avoid breaking changes when upgrading to a new major version. Resolving deprecation warnings involves upgrading to new components, changing component property settings, or refactoring custom component classes.

Deprecation logging provides a method for checking compatibility before upgrading from one major release version to another. Upgrading to the latest minor release version will provide the most accurate set of deprecation warnings.

It is important to note that deprecation logging applies to both components and features. Logging for deprecated features requires a runtime reference to the property or method impacted. Disabled components with deprecated properties or methods will not generate deprecation logs. For this reason, it is important to run all configured components long enough to exercise standard flow behavior.

Deprecation logging can generate repeated messages depending on component configuration and usage patterns. Deprecation logging for a specific component class can be disabled by adding a logger element to logback.xml. The name attribute must start with deprecation, followed by the component class. Setting the level attribute to OFF disables deprecation logging for the component specified.

<logger name="deprecation.org.apache.nifi.processors.ListenLegacyProtocol" level="OFF" />

Python Configuration

NiFi is a Java-based application. NiFi 2.0 introduces support for a Python-based Processor API. This capability is still considered to be in "Beta" mode and should not be used in production. By default, support for Python-based Processors is disabled. In order to enable it, Python 3.10, 3.11, or 3.12 must be installed on the NiFi node.

The following properties may be used to configure the Python 3 installation and process management. These properties are all located under the "Python Extensions" heading in the nifi.properties file:

| Property Name | Default Value | Description |
|---|---|---|
| nifi.python.command | python3 | The command used to launch Python. By default, this property is set to "python3" but commented out. In order to enable Python-based Processors, uncomment this line and set it to the command that should be used to invoke Python 3. |
| nifi.python.framework.source.directory | ./python/framework | The directory that contains the Python framework for communicating between the Python and Java processes. The API is expected to be located as a sibling of this directory. For example, if the value of this property is |
| nifi.python.extensions.source.directory.default | ./python/extensions | The directory that NiFi should look in to find Python-based Processors. Note that this property is supplied by default, but multiple Python Extension directories can be added by adding additional properties with the prefix |
| nifi.python.working.directory | ./work/python | The working directory where NiFi should store artifacts, such as any third-party libraries that are downloaded as dependencies for the Python Processors. |
| nifi.python.max.processes.per.extension.type | 10 | The maximum number of Python processes that should be spawned for any one type of Processor. Because Python does not scale vertically, adding many NiFi Processors within the same Python process would yield very poor performance. Instead, NiFi creates a Python process for every Python Processor that is added to the canvas, within limits. This property indicates the maximum number of Python processes that can be created for any particular type of Processor. For example, if there are 5 instances of the TransformFoo Processor on the canvas, and this value is set to 10, then adding another TransformFoo will spawn another Python process. However, after the tenth process, adding an eleventh instance of TransformFoo will result in adding a second TransformFoo processor to the first Python process. This may result in poorer performance, but limits the number of compute resources that can be allocated for each individual type of Processor. |
| nifi.python.max.processes | 100 | The maximum number of Python processes to spawn for all Processors combined. Once this limit is reached, if another Processor is added to the NiFi canvas, the newly added Processor will be added to one of the existing Python processes that was allocated for other Processors of the same type. If there are no other Python processes allocated for the same type, an Exception will be thrown and the Processor will not be added to the canvas. |
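The interaction between nifi.python.max.processes.per.extension.type and nifi.python.max.processes can be modeled in a few lines. This toy Python model (ProcessAllocator is hypothetical, not NiFi's implementation) creates a new process while both limits allow it, otherwise reuses a process of the same type, and otherwise fails. Which existing process receives the extra Processor is an assumption here (least-loaded, ties going to the oldest), chosen so that the eleventh TransformFoo joins the first process as the table describes.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class PythonProcess:
    processor_type: str
    processors: list[str] = field(default_factory=list)


class ProcessAllocator:
    """Toy model of the per-type and global Python process limits."""

    def __init__(self, max_per_type: int = 10, max_total: int = 100) -> None:
        self.max_per_type = max_per_type
        self.max_total = max_total
        self.processes: list[PythonProcess] = []

    def _of_type(self, processor_type: str) -> list[PythonProcess]:
        return [p for p in self.processes if p.processor_type == processor_type]

    def add(self, processor_type: str, processor_id: str) -> PythonProcess:
        same_type = self._of_type(processor_type)
        if len(same_type) < self.max_per_type and len(self.processes) < self.max_total:
            # Both limits allow it: spawn a dedicated process.
            process = PythonProcess(processor_type)
            self.processes.append(process)
        elif same_type:
            # Assumption: reuse the least-loaded process; min() keeps the oldest on ties.
            process = min(same_type, key=lambda p: len(p.processors))
        else:
            # No process of this type exists and no new one may be created.
            raise RuntimeError(f"no Python process available for {processor_type}")
        process.processors.append(processor_id)
        return process
```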

Security Configuration

NiFi provides several different configuration options for security purposes. The most important properties are those under the "security properties" heading in the nifi.properties file. In order to run securely, the following properties must be set:

| Property Name | Description |
|---|---|
| nifi.security.keystore | File path to the key store containing the server private key and certificate entry. |
|  | File path to |
|  | File path to |
| nifi.security.keystoreType | The type of key store. Supported types include |
| nifi.security.keystorePasswd | The password for the key store. This property will be used as the key password when |
| nifi.security.keyPasswd | The password for the server private key entry in the key store. The |
| nifi.security.truststore | File path to the trust store containing one or more certificates of trusted authorities for TLS connections. |
|  | File path to |
| nifi.security.truststoreType | The type of trust store. Supported types include |
| nifi.security.truststorePasswd | The password for the trust store. |

Once the above properties have been configured, we can enable the User Interface to be accessed over HTTPS instead of HTTP. This is accomplished by setting the nifi.web.https.host and nifi.web.https.port properties. The nifi.web.https.host property indicates which hostname the server should run on. If it is desired that the HTTPS interface be accessible from all network interfaces, a value of 0.0.0.0 should be used. To allow admins to configure the application to run only on specific network interfaces, nifi.web.http.network.interface* or nifi.web.https.network.interface* properties can be specified.

It is important when enabling HTTPS that the nifi.web.http.port property be unset. NiFi only supports running on HTTP or HTTPS, not both simultaneously.
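Because HTTP and HTTPS cannot both be enabled, a pre-start check of nifi.properties can catch a leftover nifi.web.http.port value. The following Python is a minimal sketch that assumes the file uses plain key=value lines; web_port_problems is a hypothetical helper, and it checks only the HTTP/HTTPS exclusivity rule plus a non-blank nifi.security.keystore, not the full set of security properties.

```python
from __future__ import annotations


def parse_properties(text: str) -> dict[str, str]:
    """Parse simple key=value lines, skipping blank lines and # or ! comments."""
    props: dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if line and not line.startswith(("#", "!")):
            key, sep, value = line.partition("=")
            if sep:
                props[key.strip()] = value.strip()
    return props


def web_port_problems(text: str) -> list[str]:
    """Return problems with the HTTP/HTTPS settings; an empty list means none found."""
    props = parse_properties(text)
    problems = []
    https_enabled = bool(props.get("nifi.web.https.port"))
    if https_enabled and props.get("nifi.web.http.port"):
        # NiFi runs on HTTP or HTTPS, never both at once.
        problems.append("nifi.web.http.port must be unset when HTTPS is enabled")
    if https_enabled and not props.get("nifi.security.keystore"):
        problems.append("nifi.security.keystore is blank but HTTPS is enabled")
    return problems
```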

| NiFi’s web server will REQUIRE certificate-based client authentication for users accessing the User Interface when it is not configured with an alternative authentication mechanism that uses one-way SSL (for instance, LDAP or OpenID Connect). Enabling an alternative authentication mechanism will configure the web server to WANT certificate-based client authentication. This allows it to support both users with certificates and users without certificates who log in with credentials. See User Authentication for more details. |

Now that the User Interface has been secured, we can easily secure Site-to-Site connections and intra-cluster communications as well. This is accomplished by setting the nifi.remote.input.secure property to true. These communications will always REQUIRE two-way SSL, as the nodes will use their configured keystore/truststore for authentication.

Automatic refreshing of NiFi’s web SSL context factory can be enabled using the following properties:

| Property Name | Description |
|---|---|
| nifi.security.autoreload.enabled | Specifies whether the SSL context factory should be automatically reloaded if updates to the keystore and truststore are detected. By default, it is set to |
| nifi.security.autoreload.interval | Specifies the interval at which the keystore and truststore are checked for updates. Only applies if |

Once the nifi.security.autoreload.enabled property is set to true, any valid changes to the configured keystore and truststore will cause NiFi’s SSL context factory to be reloaded, allowing clients to pick up the changes. This is intended to allow expired certificates to be updated in the keystore and new trusted certificates to be added in the truststore, all without having to restart the NiFi server.

Changes to any of the nifi.security.keystore* or nifi.security.truststore* properties will not be picked up by the auto-refreshing logic, which assumes the passwords and store paths will remain the same.
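The reload behavior described above amounts to watching the store files for changes. As an illustration only, this Python sketch fingerprints the keystore and truststore contents with SHA-256 and reports when they change. NiFi's own detection logic may differ (for example, it may compare file modification times), and a real reloader would also confirm that the new stores load correctly before switching to them. StoreWatcher is a hypothetical name.

```python
from __future__ import annotations

import hashlib
from pathlib import Path


def fingerprint(paths: list[Path]) -> str:
    """Return a SHA-256 digest over the names and contents of the given store files."""
    digest = hashlib.sha256()
    for path in paths:
        digest.update(str(path).encode())
        digest.update(path.read_bytes())
    return digest.hexdigest()


class StoreWatcher:
    """Poll keystore/truststore files and report when their contents change."""

    def __init__(self, paths: list[Path]) -> None:
        self.paths = paths
        self.last = fingerprint(paths)

    def changed(self) -> bool:
        """True once per detected change; call this at the reload interval."""
        current = fingerprint(self.paths)
        if current != self.last:
            self.last = current
            return True
        return False
```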


TLS Cipher Suites

The Java Runtime Environment provides the ability to specify custom TLS cipher suites to be used by servers when accepting client connections. See here for more information. To use this feature for the NiFi web service, the following NiFi properties may be set:

| Property Name | Description |
|---|---|
| nifi.web.https.ciphersuites.include | Set of ciphers that are available to be used by incoming client connections. Replaces system defaults if set. |
| nifi.web.https.ciphersuites.exclude | Set of ciphers that must not be used by incoming client connections. Filters available ciphers if set. |

Each property should take the form of a comma-separated list of common cipher names as specified here. Regular expressions (for example ^.*GCM_SHA256$) may also be specified.
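The include/exclude semantics can be sketched in Python as follows. In this approximation, `supported` stands in for the cipher suites the JVM offers, each entry is matched as a full regular expression (a plain cipher name matches only itself), the include list replaces the defaults when set, and the exclude list then filters the result. Output order follows `supported`, which is an assumption; Jetty's actual ordering and matching rules may differ in detail. select_ciphers is a hypothetical helper.

```python
from __future__ import annotations

import re


def select_ciphers(supported: list[str], include: str = "", exclude: str = "") -> list[str]:
    """Apply comma-separated include/exclude lists (names or regexes) to supported ciphers."""

    def patterns(csv: str) -> list[re.Pattern[str]]:
        return [re.compile(p.strip()) for p in csv.split(",") if p.strip()]

    includes = patterns(include)
    excludes = patterns(exclude)
    # When an include list is set, it replaces the defaults with the matching ciphers.
    selected = [c for c in supported if not includes or any(p.fullmatch(c) for p in includes)]
    # The exclude list then removes any matching ciphers from what remains.
    return [c for c in selected if not any(p.fullmatch(c) for p in excludes)]
```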

The semantics match the use of the following Jetty APIs: SslContextFactory.setIncludeCipherSuites() and SslContextFactory.setExcludeCipherSuites().

User Authentication

NiFi supports user authentication using a number of configurable protocols and strategies.

Username and password authentication is performed by a 'Login Identity Provider'. The Login Identity Provider is a pluggable mechanism for authenticating users via their username/password. Which Login Identity Provider to use is configured in the nifi.properties file. Currently, NiFi offers Login Identity Provider options for username/password authentication using Single User, Lightweight Directory Access Protocol (LDAP), and Kerberos.

The nifi.login.identity.provider.configuration.file property specifies the configuration file for Login Identity Providers. By default, this property is set to ./conf/login-identity-providers.xml.

The nifi.security.user.login.identity.provider property indicates which of the configured Login Identity Providers should be used. The default value of this property is single-user-provider, supporting authentication with a generated username and password.

For single sign-on authentication, NiFi will redirect users to the Identity Provider before returning to NiFi. NiFi will then process responses and convert attributes to application token information.

| NiFi cannot be configured for multiple authentication strategies simultaneously. NiFi will require client certificates for authenticating users over HTTPS if no other strategies have been configured. |

A user cannot anonymously authenticate with a secured instance of NiFi unless nifi.security.allow.anonymous.authentication is set to true. If this is the case, NiFi must also be configured with an Authorizer that supports authorizing an anonymous user. Currently, NiFi does not ship with any Authorizers that support this. There is a feature request here to help support it (NIFI-2730).

| Allowing anonymous authentication is deprecated for removal in subsequent releases. |

There are three scenarios to consider when setting nifi.security.allow.anonymous.authentication. When the user is directly calling an endpoint with no attempted authentication, nifi.security.allow.anonymous.authentication will control whether the request is authenticated or rejected. The other two scenarios are when the request is proxied. This could either be proxied by a NiFi node (e.g. a node in the NiFi cluster) or by a separate proxy that is proxying a request for an anonymous user. In these proxy scenarios, nifi.security.allow.anonymous.authentication will control whether the request is authenticated or rejected. In all three of these scenarios, if the request is authenticated, it will subsequently be subjected to normal authorization based on the requested resource.

| NiFi does not perform user authentication over HTTP. Using HTTP, all users will be granted all roles. |

Single User

The default Single User Login Identity Provider supports automated generation of username and password credentials.

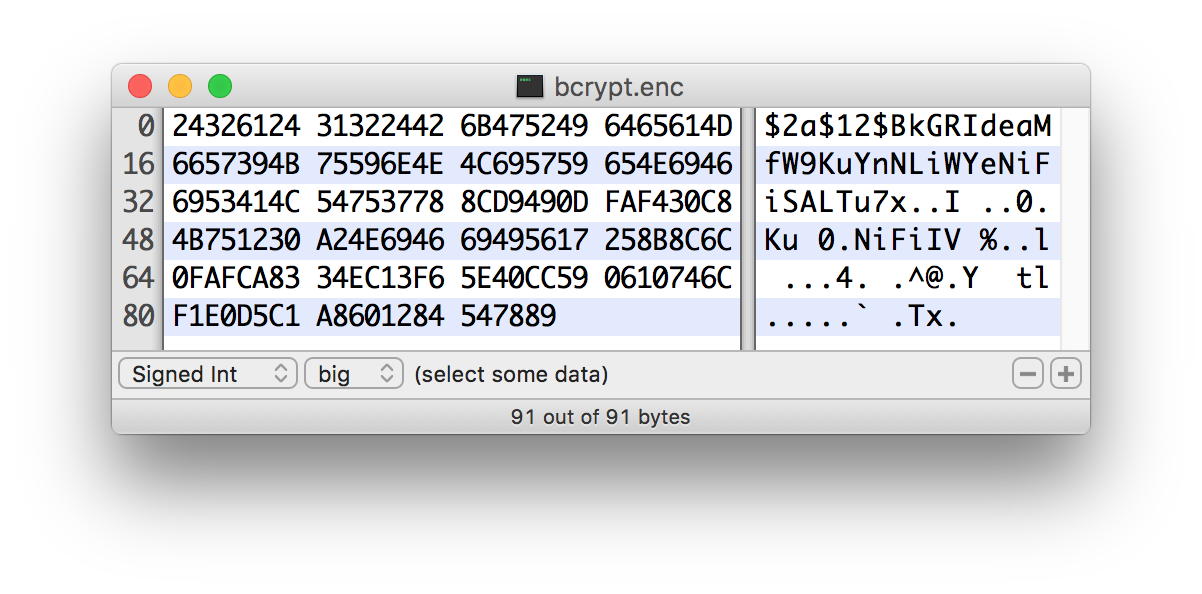

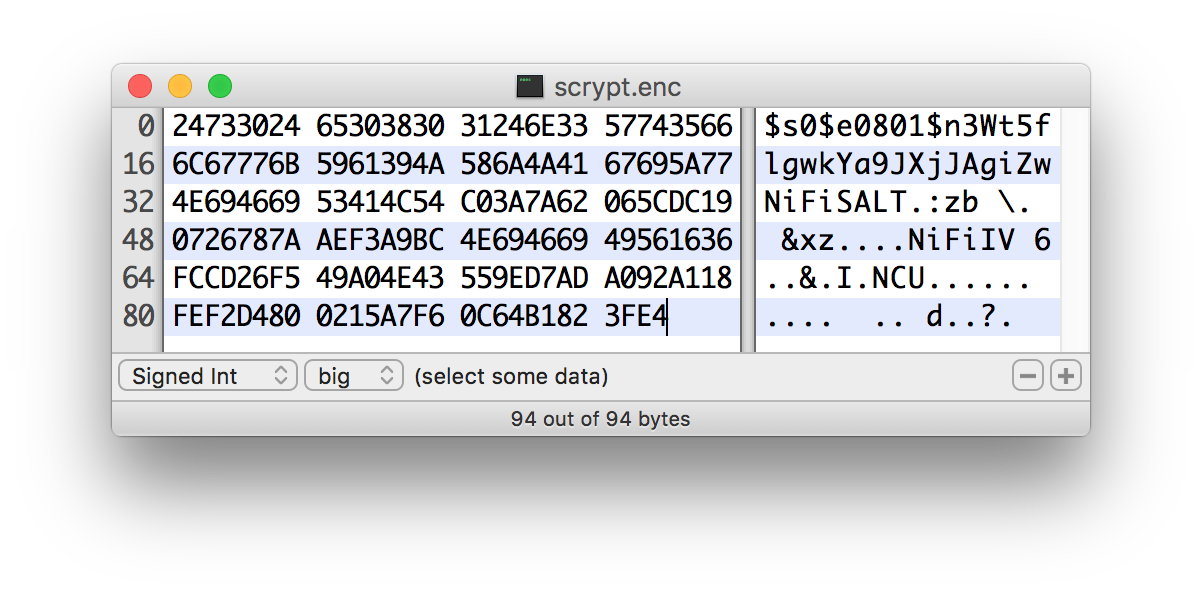

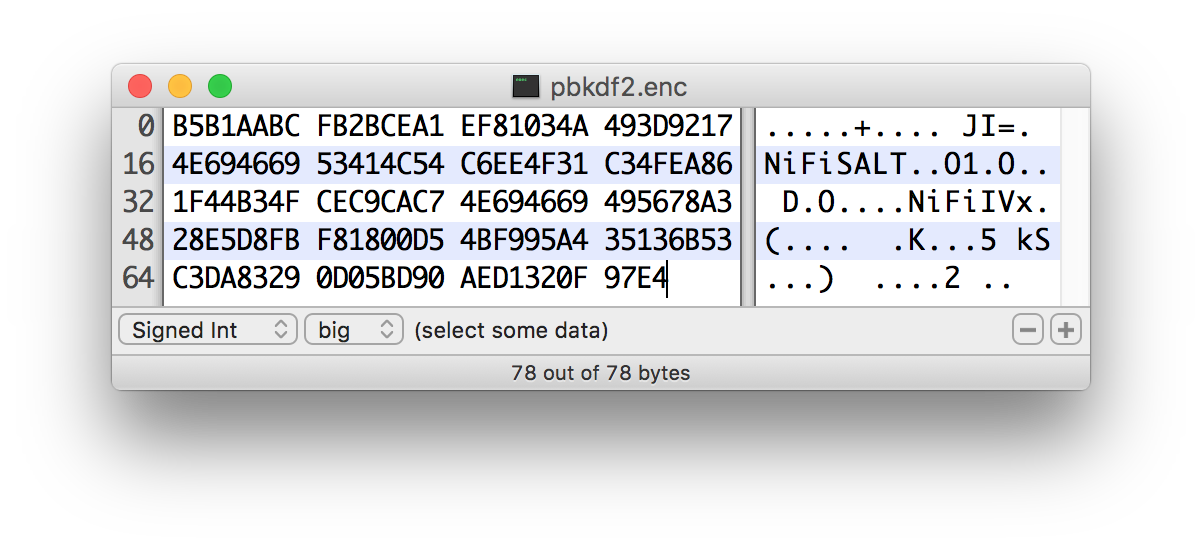

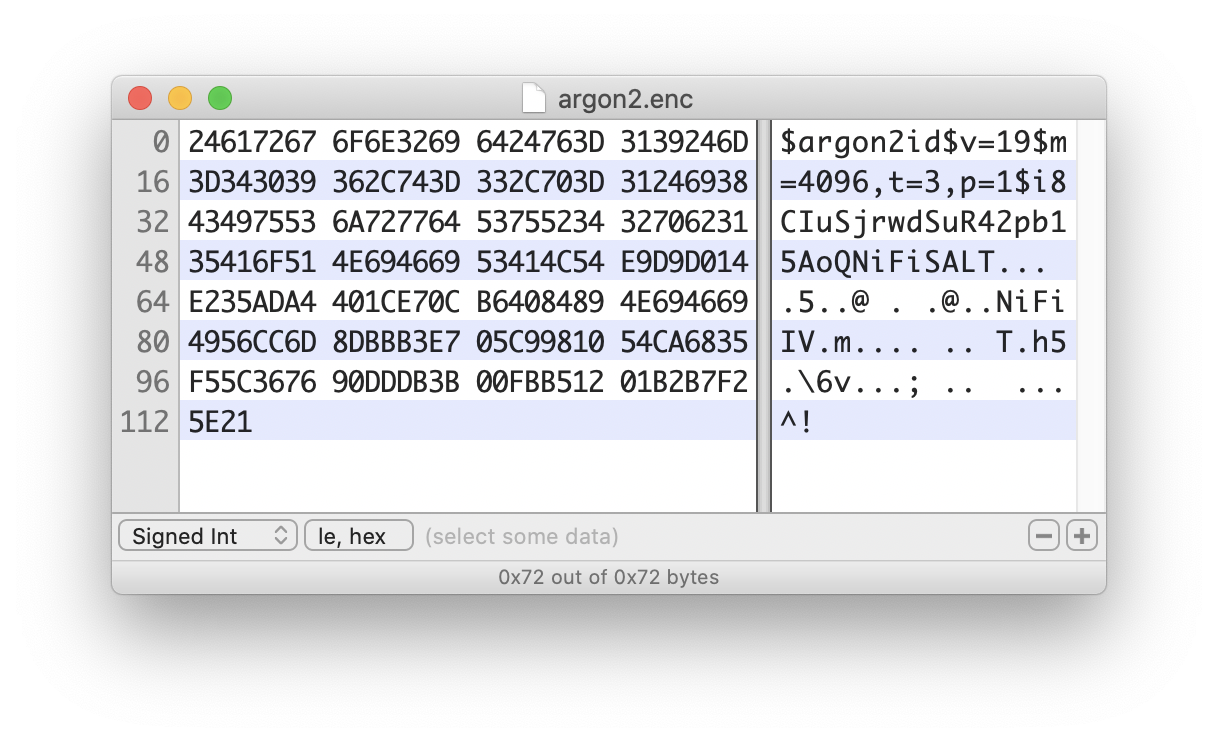

The generated username will be a random UUID consisting of 36 characters. The generated password will be a random string consisting of 32 characters and stored using bcrypt hashing.
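For illustration, credentials with the same shape as the generated ones can be produced with Python's standard library. This sketch is not NiFi's generator: the alphanumeric password alphabet is an assumption, and the bcrypt hashing step is omitted because bcrypt is not in the Python standard library. generate_credentials is a hypothetical name; values produced this way could be passed to the set-single-user-credentials command.

```python
import secrets
import string
import uuid

# Assumption: an alphanumeric alphabet; NiFi's own generator may use a different one.
ALPHABET = string.ascii_letters + string.digits


def generate_credentials() -> tuple[str, str]:
    """Return (username, password) shaped like the generated Single User credentials."""
    username = str(uuid.uuid4())  # canonical UUID text is 36 characters
    password = "".join(secrets.choice(ALPHABET) for _ in range(32))
    return username, password
```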

The default configuration in nifi.properties enables Single User authentication:

nifi.security.user.login.identity.provider=single-user-provider

The default login-identity-providers.xml includes a blank provider definition:

<provider>
    <identifier>single-user-provider</identifier>
    <class>org.apache.nifi.authentication.single.user.SingleUserLoginIdentityProvider</class>
    <property name="Username"/>
    <property name="Password"/>
</provider>

The following command can be used to change the Username and Password:

$ ./bin/nifi.sh set-single-user-credentials <username> <password>

Lightweight Directory Access Protocol (LDAP)

Below is an example and description of configuring a Login Identity Provider that integrates with a Directory Server to authenticate users.

Set the following in nifi.properties to enable LDAP username/password authentication:

nifi.security.user.login.identity.provider=ldap-provider

Modify login-identity-providers.xml to enable the ldap-provider. Here is the sample provided in the file:

<provider>

<identifier>ldap-provider</identifier>

<class>org.apache.nifi.ldap.LdapProvider</class>

<property name="Authentication Strategy">START_TLS</property>

<property name="Manager DN"></property>

<property name="Manager Password"></property>

<property name="TLS - Keystore"></property>

<property name="TLS - Keystore Password"></property>

<property name="TLS - Keystore Type"></property>

<property name="TLS - Truststore"></property>

<property name="TLS - Truststore Password"></property>

<property name="TLS - Truststore Type"></property>

<property name="TLS - Client Auth"></property>

<property name="TLS - Protocol"></property>

<property name="TLS - Shutdown Gracefully"></property>

<property name="Referral Strategy">FOLLOW</property>

<property name="Connect Timeout">10 secs</property>

<property name="Read Timeout">10 secs</property>

<property name="Url"></property>

<property name="User Search Base"></property>

<property name="User Search Filter"></property>

<property name="Identity Strategy">USE_DN</property>

<property name="Authentication Expiration">12 hours</property>

</provider>

The ldap-provider has the following properties:

| Property Name | Description |
|---|---|
| Authentication Strategy | How the connection to the LDAP server is authenticated. Possible values are |
| Manager DN | The DN of the manager that is used to bind to the LDAP server to search for users. |
| Manager Password | The password of the manager that is used to bind to the LDAP server to search for users. |
| TLS - Keystore | Path to the Keystore that is used when connecting to LDAP using LDAPS or START_TLS. |
| TLS - Keystore Password | Password for the Keystore that is used when connecting to LDAP using LDAPS or START_TLS. |
| TLS - Keystore Type | Type of the Keystore that is used when connecting to LDAP using LDAPS or START_TLS (i.e. |
| TLS - Truststore | Path to the Truststore that is used when connecting to LDAP using LDAPS or START_TLS. |
| TLS - Truststore Password | Password for the Truststore that is used when connecting to LDAP using LDAPS or START_TLS. |
| TLS - Truststore Type | Type of the Truststore that is used when connecting to LDAP using LDAPS or START_TLS (i.e. |
| TLS - Client Auth | Client authentication policy when connecting to LDAP using LDAPS or START_TLS. Possible values are |
| TLS - Protocol | Protocol to use when connecting to LDAP using LDAPS or START_TLS. (i.e. |
| TLS - Shutdown Gracefully | Specifies whether the TLS should be shut down gracefully before the target context is closed. Defaults to false. |
| Referral Strategy | Strategy for handling referrals. Possible values are |
| Connect Timeout | Duration of connect timeout. (i.e. |
| Read Timeout | Duration of read timeout. (i.e. |
| Url | Space-separated list of URLs of the LDAP servers (i.e. |
| User Search Base | Base DN for searching for users (i.e. |
| User Search Filter | Filter for searching for users against the |
| Identity Strategy | Strategy to identify users. Possible values are |
| Authentication Expiration | The duration of how long the user authentication is valid for. If the user never logs out, they will be required to log back in following this duration. |
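NiFi's ldap-provider inserts the name the user enters into the {0} placeholder of the User Search Filter (for example, sAMAccountName={0} or uid={0}). The sketch below shows that substitution together with RFC 4515 escaping of filter special characters. It is an illustration with hypothetical helper names, not NiFi's code, which delegates the search to its LDAP library.

```python
# RFC 4515: these characters must be escaped as \XX inside a filter value.
_ESCAPES = {"\\": r"\5c", "*": r"\2a", "(": r"\28", ")": r"\29", "\x00": r"\00"}


def escape_filter_value(value: str) -> str:
    """Escape a value for safe use inside an LDAP search filter."""
    return "".join(_ESCAPES.get(ch, ch) for ch in value)


def user_search_filter(template: str, username: str) -> str:
    """Substitute the escaped username for every {0} placeholder in the template."""
    # str.replace avoids str.format, which would choke on other braces in a filter.
    return template.replace("{0}", escape_filter_value(username))
```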

| For changes to nifi.properties and login-identity-providers.xml to take effect, NiFi needs to be restarted. If NiFi is clustered, configuration files must be the same on all nodes. |

Kerberos

| The Kerberos Provider is deprecated for removal in subsequent releases. |

Below is an example and description of configuring a Login Identity Provider that integrates with a Kerberos Key Distribution Center (KDC) to authenticate users.

Set the following in nifi.properties to enable Kerberos username/password authentication:

nifi.security.user.login.identity.provider=kerberos-provider

Modify login-identity-providers.xml to enable the kerberos-provider. Here is the sample provided in the file:

<provider>

<identifier>kerberos-provider</identifier>

<class>org.apache.nifi.kerberos.KerberosProvider</class>

<property name="Default Realm">NIFI.APACHE.ORG</property>

<property name="Authentication Expiration">12 hours</property>

</provider>

The kerberos-provider has the following properties:

| Property Name | Description |
|---|---|
| Default Realm | Default realm to provide when user enters incomplete user principal (i.e. |
| Authentication Expiration | The duration of how long the user authentication is valid for. If the user never logs out, they will be required to log back in following this duration. |

See also the Kerberos Service section to allow single sign-on access via client Kerberos tickets.

| For changes to nifi.properties and login-identity-providers.xml to take effect, NiFi needs to be restarted. If NiFi is clustered, configuration files must be the same on all nodes. |

OpenID Connect

OpenID Connect integration provides single sign-on using a specified Authorization Server. The implementation supports the Authorization Code Grant Type as described in RFC 6749 Section 4.1 and OpenID Connect Core Section 3.1.1.

The Authorization Code Grant Type implementation supports RFC 7636 Proof Key for Code Exchange (PKCE) as part of the authentication process. PKCE support uses the S256 code challenge method.
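The S256 method derives the code_challenge sent in the authorization request from a random code_verifier that is later presented in the token request. NiFi handles this internally; the Python below only illustrates the RFC 7636 computation (BASE64URL-encoded SHA-256 of the verifier, without padding), and the function names are hypothetical.

```python
import base64
import hashlib
import secrets


def make_code_verifier(nbytes: int = 32) -> str:
    """Random code_verifier: 32 bytes of entropy encode to 43 base64url characters."""
    return base64.urlsafe_b64encode(secrets.token_bytes(nbytes)).rstrip(b"=").decode("ascii")


def s256_code_challenge(code_verifier: str) -> str:
    """code_challenge = BASE64URL(SHA256(ASCII(code_verifier))) with padding removed."""
    digest = hashlib.sha256(code_verifier.encode("ascii")).digest()
    return base64.urlsafe_b64encode(digest).rstrip(b"=").decode("ascii")
```

For instance, s256_code_challenge("dBjftJeZ4CVP-mB92K27uhbUJU1p1r_wW1gFWFOEjXk") returns "E9Melhoa2OwvFrEMTJguCHaoeK1t8URWbuGJSstw-cM", the example pair given in RFC 7636 Appendix B.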

After successful authentication with the Authorization Server, NiFi generates an application Bearer Token with an expiration based on the OAuth2 Access Token expiration. NiFi stores authorized tokens using the local State Provider and encrypts serialized information using the application Sensitive Properties Key.

The implementation enables OpenID Connect RP-Initiated Logout 1.0 when the Authorization Server includes an end_session_endpoint element in the OpenID Discovery configuration.

OpenID Connect integration supports using Refresh Tokens as described in OpenID Connect Core Section 12. NiFi tracks the expiration of the application Bearer Token and uses the stored Refresh Token to renew access prior to Bearer Token expiration, based on the configured token refresh window. NiFi does not require OpenID Connect Providers to support Refresh Tokens. When an OpenID Connect Provider does not return a Refresh Token, NiFi requires the user to initiate a new session when the application Bearer Token expires.

The Refresh Token implementation allows the NiFi session to continue as long as the Refresh Token is valid and the user agent presents a valid Bearer Token. The default value for the token refresh window is 60 seconds. For an Access Token with an expiration of one hour, NiFi will attempt to renew access using the Refresh Token when receiving an HTTP request 59 minutes after authenticating the Access Token. Revoked Refresh Tokens or expired application Bearer Tokens result in standard session timeout behavior, requiring the user to initiate a new session.
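The refresh timing above is simple arithmetic: with a one-hour Access Token and the default 60-second window, requests arriving from minute 59 onward trigger a renewal, and requests after expiration fall back to standard session timeout behavior. A minimal sketch, assuming the window is measured back from the Access Token expiration (token_action is a hypothetical helper):

```python
from datetime import datetime, timedelta


def token_action(
    now: datetime,
    authenticated_at: datetime,
    expires_in: timedelta,
    refresh_window: timedelta = timedelta(seconds=60),
) -> str:
    """Classify an incoming request relative to the Access Token lifetime."""
    expires_at = authenticated_at + expires_in
    if now >= expires_at:
        return "expired"  # standard session timeout: the user starts a new session
    if now >= expires_at - refresh_window:
        return "refresh"  # renew access using the stored Refresh Token
    return "valid"
```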

The OpenID Connect implementation supports OAuth 2.0 Token Revocation as defined in RFC 7009. OpenID Connect Discovery configuration must include a revocation_endpoint element that supports RFC 7009 standards. The application sends revocation requests for Refresh Tokens when the authenticated Resource Owner initiates the logout process.

The implementation includes a scheduled process for removing and revoking expired Refresh Tokens when the corresponding Access Token has expired, indicating that the Resource Owner has terminated the application session. Scheduled session termination occurs when the user closes the browser without initiating the logout process. The scheduled process avoids extended storage of Refresh Tokens for users who are no longer interacting with the application.

The OpenID Connect implementation also supports the OAuth 2 Client Credentials Grant Type as described in RFC 6749 Section 4.4. With OpenID Connect integration enabled, NiFi evaluates the JSON Web Token Issuer Claim named iss and delegates to either the configured Authorization Server or internal processing for signature verification. When the iss claim value matches the issuer from the OpenID Connect Discovery Configuration, NiFi uses the JSON Web Keys from the Authorization Server for signature verification. In all other cases, NiFi verifies JSON Web Token signatures using an internal public key.
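The iss-based routing can be illustrated by decoding the JWT payload without verification, which is acceptable only for choosing which keys to verify with, never for trusting the claims. This Python sketch is hypothetical, not NiFi's implementation; the two return values simply name the two verification paths described in the preceding paragraph.

```python
import base64
import json


def unverified_claims(token: str) -> dict:
    """Decode the JWT payload WITHOUT verifying the signature (routing only)."""
    payload = token.split(".")[1]
    payload += "=" * (-len(payload) % 4)  # restore the base64url padding JWTs omit
    return json.loads(base64.urlsafe_b64decode(payload))


def verification_source(token: str, discovery_issuer: str) -> str:
    """Choose which keys should verify the token based on its iss claim."""
    if unverified_claims(token).get("iss") == discovery_issuer:
        return "authorization-server-jwks"
    return "internal-public-key"
```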

The Client Credentials Grant Type enables machine-to-machine authentication and requires token request processing outside of NiFi itself to obtain an Access Token. NiFi must also be configured to authorize requests based on the identity defined in a signed Access Token. Access Tokens obtained using the Client Credentials Grant Type do not include the standard email claim, which requires configuring a fallback claim to identify the machine user. The most common claim for identification is the Subject Claim named sub, which contains the Client ID.
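Identity selection with fallback claims can be sketched as picking the first non-empty claim from an ordered list. This assumes email as the primary identifying claim, as the paragraph above implies, and sub as the fallback; in NiFi both are configurable in nifi.properties, and identify_user is a hypothetical helper.

```python
from __future__ import annotations


def identify_user(
    claims: dict,
    identifying_claim: str = "email",
    fallback_claims: tuple[str, ...] = ("sub",),
) -> str:
    """Return the first non-empty value among the primary and fallback claims."""
    for name in (identifying_claim, *fallback_claims):
        value = claims.get(name)
        if value:
            return str(value)
    raise ValueError("no identifying claim present in token")
```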

OpenID Connect integration supports the following settings in nifi.properties.

| Property Name | Description |
|---|---|
|  | The Discovery Configuration URL for the OpenID Connect Provider. Supports URLs with |
|  | Socket Connect timeout when communicating with the OpenID Connect Provider. The default value is |
|  | Socket Read timeout when communicating with the OpenID Connect Provider. The default value is |
|  | The Client ID for NiFi registered with the OpenID Connect Provider |
|  | The Client Secret for NiFi registered with the OpenID Connect Provider |
|  | The preferred algorithm for validating identity tokens. If this value is blank, it will default to |
|  | Comma-separated scopes that are sent to the OpenID Connect Provider in addition to |
|  | Claim that identifies the authenticated user. The default value is |
|  | Comma-separated list of possible fallback claims used to identify the user when the |
|  | Name of the ID token claim that contains an array of group names of which the user is a member. Application groups must be supplied from a User Group Provider with matching names in order for the authorization process to use ID token claim groups. The default value is |
|  | HTTPS Certificate Trust Store Strategy defines the source of certificate authorities that NiFi uses when communicating with the OpenID Connect Provider. The value of |
|  | The Token Refresh Window specifies the amount of time before the NiFi authorization session expires when the application will attempt to renew access using a cached Refresh Token. The default is |
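The relationship between the identifying claim and the fallback claims can be sketched in Python as a first-match lookup. The sketch assumes email as the primary claim, which is the claim that Client Credentials tokens lack. It also assumes that fallback claims are tried in the listed order. The helper names are hypothetical and do not mirror NiFi internals.

```python
from typing import List, Mapping, Sequence


def parse_claim_list(value: str) -> List[str]:
    """Split a comma-separated property value into claim names."""
    return [name.strip() for name in value.split(",") if name.strip()]


def resolve_user_identity(claims: Mapping[str, object],
                          identifying_claim: str = "email",
                          fallback_claims: Sequence[str] = ()) -> str:
    """Return the first non-empty string claim, trying the primary claim first."""
    for name in (identifying_claim, *fallback_claims):
        value = claims.get(name)
        if isinstance(value, str) and value:
            return value
    raise ValueError("token does not contain any configured identifying claim")
```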

OpenID Connect REST Resources

OpenID Connect authentication enables the following REST resources for integration with an OpenID Connect 1.0 Authorization Server:

| Resource Path | Description |
|---|---|
| /nifi-api/access/oidc/callback/consumer | Process OIDC 1.0 Login Authentication Responses from an Authentication Server. |
| /nifi/logout-complete | Path for redirect after successful OIDC RP-Initiated Logout 1.0 processing |

SAML

To enable authentication via SAML, the following properties must be configured in nifi.properties.

Configuring a Metadata URL and an Entity Identifier enables Apache NiFi to act as a SAML 2.0 Relying Party, allowing users to authenticate using an account managed through a SAML 2.0 Asserting Party.

| Property Name | Description |
|---|---|
|  | The URL for obtaining the identity provider’s metadata. The metadata can be retrieved from the identity provider via |
|  | The entity ID of the service provider (i.e. NiFi). This value will be used as the |
|  | The name of a SAML assertion attribute containing the user’s identity. This property is optional; if it is not specified, or if the attribute is not found, the NameID of the Subject will be used. |
|  | The name of a SAML assertion attribute containing the names of the groups the user belongs to. This property is optional, but if it is populated, the groups will be passed along to the authorization process. |
|  | Controls the value of |
|  | Controls the value of |
|  | The algorithm to use when signing SAML messages. Reference the Open SAML Signature Constants for a list of valid values. If not specified, a default of SHA-256 will be used. The default value is |
|  | The expiration of the NiFi JWT that will be produced from a successful SAML authentication response. The default value is |
|  | Enables SAML Single Logout, which causes a logout from NiFi to also log out of the identity provider. By default, a logout of NiFi will only remove the NiFi JWT. The default value is |
|  | The truststore strategy when the IDP metadata URL begins with https. A value of |
|  | The connection timeout when communicating with the SAML IDP. The default value is |
|  | The read timeout when communicating with the SAML IDP. The default value is |

SAML REST Resources

SAML authentication enables the following REST API resources for integration with a SAML 2.0 Asserting Party:

| Resource Path | Description |
|---|---|
| /nifi-api/access/saml/local-logout/request | Complete SAML 2.0 Logout processing without communicating with the Asserting Party |
| /nifi-api/access/saml/login/consumer | Process SAML 2.0 Login Response assertions using HTTP-POST or HTTP-REDIRECT binding |
| /nifi-api/access/saml/metadata | Retrieve SAML 2.0 entity descriptor metadata as XML |
| /nifi-api/access/saml/single-logout/consumer | Process SAML 2.0 Single Logout Request assertions using HTTP-POST or HTTP-REDIRECT binding. Requires Single Logout to be enabled. |
| /nifi-api/access/saml/single-logout/request | Complete SAML 2.0 Single Logout processing by initiating a request to the Asserting Party. Requires Single Logout to be enabled. |

JSON Web Tokens

NiFi uses JSON Web Tokens to provide authenticated access after the initial login process. Generated JSON Web Tokens include the authenticated user identity as well as the issuer and expiration from the configured Login Identity Provider.

NiFi uses generated Ed25519 Key Pairs to support the EdDSA algorithm for JSON Web Signatures. The system stores Ed25519 Public Keys using the configured local State Provider and retains the Private Key in memory. This approach supports signature verification for the expiration configured in the Login Identity Provider without persisting the private key.

JSON Web Token support includes revocation on logout using JSON Web Token Identifiers. The system denies access for expired tokens based on the Login Identity Provider configuration, but revocation invalidates the token prior to expiration. The system stores revoked identifiers using the configured local State Provider and runs a scheduled command to delete revoked identifiers after the associated expiration.
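The revocation behavior described above can be modeled with a small in-memory store keyed by JSON Web Token Identifier (the jti claim). This Python sketch is a simplified stand-in for the local State Provider and relies on several assumptions. The class name is hypothetical, expirations are epoch seconds, and delete_expired represents the scheduled cleanup command.

```python
import time
from typing import Callable, Dict


class RevokedTokenStore:
    """In-memory stand-in for revoked token identifiers and their expirations."""

    def __init__(self, clock: Callable[[], float] = time.time):
        self._clock = clock
        self._revoked: Dict[str, float] = {}  # jti -> token expiration (epoch seconds)

    def revoke(self, jti: str, expires_at: float) -> None:
        """Record a logout: the token becomes invalid before its expiration."""
        self._revoked[jti] = expires_at

    def is_access_allowed(self, jti: str, expires_at: float) -> bool:
        # Expired tokens are denied regardless of revocation status
        if self._clock() >= expires_at:
            return False
        return jti not in self._revoked

    def delete_expired(self) -> int:
        """Scheduled cleanup: drop identifiers whose tokens have expired anyway."""
        now = self._clock()
        expired = [jti for jti, exp in self._revoked.items() if exp <= now]
        for jti in expired:
            del self._revoked[jti]
        return len(expired)
```

Keeping the expiration next to each identifier is what allows the cleanup to be safe. Once a token has expired, it is denied on expiration alone, so its revocation record is no longer needed.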

The following settings can be configured in nifi.properties to control JSON Web Token signing.

| Property Name | Description |
|---|---|
|  | JSON Web Signature Key Rotation Period defines how often the system generates a new Ed25519 Key Pair, expressed as an ISO 8601 duration. The default is one hour (PT1H). |

X.509 Client Certificates

NiFi supports authentication using mutual TLS with X.509 client certificates as part of the standard configuration when running with HTTPS enabled. Client certificate authentication is required for communication between NiFi nodes in a clustered deployment and cannot be disabled.

NiFi sends a certificate request during the TLS handshake as described in RFC 8446 Section 4.3.2 for TLS 1.3. When configured for authentication using a Login Identity Provider or Single Sign-On, NiFi sends a certificate request but does not require the client to respond. In absence of other authentication strategies, NiFi requires the client to present a certificate during the TLS handshake process. The NiFi security trust store properties define the certificate authorities accepted as issuers of client certificates.
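Python's ssl module exposes the same two client certificate policies, which makes the distinction easy to see. This sketch is not NiFi code. With CERT_OPTIONAL, the server sends a certificate request but tolerates a client that presents no certificate. With CERT_REQUIRED, the handshake fails unless the client presents a trusted certificate. Loading the server key pair and trust store is omitted.

```python
import ssl


def build_server_context(other_authentication_enabled: bool) -> ssl.SSLContext:
    """Sketch of the client certificate policy described above (not NiFi code)."""
    context = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    # A real server would also call context.load_cert_chain(...) for its own key pair
    # and context.load_verify_locations(...) for the accepted certificate authorities.
    if other_authentication_enabled:
        # Send a certificate request, but let the handshake continue without a certificate
        context.verify_mode = ssl.CERT_OPTIONAL
    else:
        # Fail the handshake unless the client presents a valid, trusted certificate
        context.verify_mode = ssl.CERT_REQUIRED
    return context
```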

Proxied Entities Chain

NiFi supports proxied entity access in conjunction with X.509 client certificate authentication. Clients that present trusted certificates for mutual TLS authentication can send proxied identity information through specified HTTP request headers. The client certificate subject principal must be authorized to send a proxy request, based on the configured Authorizer.

Authorized proxies can present one or more proxied identities using an HTTP request header and a value delimited using angle bracket characters.

- Header Name: X-ProxiedEntitiesChain
- Value: <user-identity>

Multiple proxied entities can be specified to indicate a chain of proxy services.

- Header Name: X-ProxiedEntitiesChain
- Value: <user-identity><proxy-server-identity>

Proxied identities that contain characters outside of US-ASCII must be encoded using Base64 and wrapped with additional angle brackets.

- Header Name: X-ProxiedEntitiesChain
- Value: <<dXNlci1pZGVudGl0eQ>>
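Building the X-ProxiedEntitiesChain value can be sketched in Python as follows. The function names are hypothetical. Padding is stripped from the Base64 output only to reproduce the example value above, which is an assumption about the accepted form. Identities that themselves contain angle brackets are not handled.

```python
import base64
from typing import Iterable


def encode_base64_identity(identity: str) -> str:
    """Wrap a Base64-encoded UTF-8 identity in double angle brackets."""
    # Padding is stripped to match the documented example value (an assumption)
    encoded = base64.b64encode(identity.encode("utf-8")).decode("ascii").rstrip("=")
    return f"<<{encoded}>>"


def format_identity(identity: str) -> str:
    """Use plain angle brackets for US-ASCII identities and Base64 otherwise."""
    if identity.isascii():
        return f"<{identity}>"
    return encode_base64_identity(identity)


def build_proxied_entities_chain(identities: Iterable[str]) -> str:
    """The end user comes first, followed by each proxy service in the chain."""
    return "".join(format_identity(identity) for identity in identities)
```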

NiFi includes an HTTP response header on successful authentication of HTTP requests with proxied entities.

- Header Name: X-ProxiedEntitiesAccepted
- Value: true

NiFi includes an HTTP response header on failed authentication of proxied entities describing the error.

- Header Name: X-ProxiedEntitiesDetails
- Value: error message

Proxied Entity Groups

NiFi supports passing group membership information together with proxied identity information from clients that present authorized X.509 client certificates.

Authorized proxies can pass one or more group names using an HTTP request header and values delimited using angle bracket characters.

- Header Name: X-ProxiedEntityGroups
- Value: <first-group><second-group>

Proxied group names follow the same encoding standards as proxied entities, requiring Base64 encoding for characters outside of US-ASCII.
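A proxy typically sends both request headers together and then checks the response headers. The following Python sketch uses hypothetical helper names. It assembles the X-ProxiedEntitiesChain and X-ProxiedEntityGroups values with the same encoding rules, then interprets X-ProxiedEntitiesAccepted and X-ProxiedEntitiesDetails. Response headers are modeled as a plain dict, but real HTTP header lookups should be case-insensitive.

```python
import base64
from typing import Dict, Iterable, Mapping


def _format_value(value: str) -> str:
    """Angle-bracket a value, Base64-encoding it when it is not US-ASCII."""
    if value.isascii():
        return f"<{value}>"
    encoded = base64.b64encode(value.encode("utf-8")).decode("ascii").rstrip("=")
    return f"<<{encoded}>>"


def build_proxy_headers(identities: Iterable[str], groups: Iterable[str] = ()) -> Dict[str, str]:
    """Request headers for a proxied call; the groups header is sent only when needed."""
    headers = {"X-ProxiedEntitiesChain": "".join(_format_value(i) for i in identities)}
    group_list = list(groups)
    if group_list:
        headers["X-ProxiedEntityGroups"] = "".join(_format_value(g) for g in group_list)
    return headers


def proxy_outcome(response_headers: Mapping[str, str]) -> str:
    """Return "accepted" or the error description reported by NiFi."""
    if response_headers.get("X-ProxiedEntitiesAccepted") == "true":
        return "accepted"
    return response_headers.get("X-ProxiedEntitiesDetails", "no proxied entities details returned")
```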

Cross-Site Request Forgery Protection

NiFi 1.15.0 introduced Cross-Site Request Forgery protection as part of user interface access based on session cookies. CSRF protection builds on standard Spring Security features and implements the double submit cookie strategy. The implementation strategy relies on the server generating and sending a random request token cookie at the beginning of the session. The client browser stores the cookie, JavaScript application code reads the cookie, and sets the value in a custom HTTP header on subsequent requests.

NiFi applies the SameSite attribute with a value of Strict to session cookies, which instructs supporting web browsers to avoid sending the cookie on requests that a third party initiates. These protections mitigate a number of potential threats.

Cookie names are not considered part of the public REST API and are subject to change in minor release versions. Programmatic HTTP requests to the NiFi REST API should use the standard HTTP Authorization header when sending access tokens instead of the session cookie that the NiFi user interface uses.

NiFi deployments that include HTTP load balanced access with Session Affinity depend on custom HTTP cookies, requiring custom programmatic clients to store and send cookies for the duration of an authenticated session. Programmatic clients in these scenarios should limit cookie storage to cookie names specific to the HTTP load balancer to avoid HTTP 403 Forbidden errors related to CSRF filtering.

The CSRF implementation sends the following HTTP cookie to set the random request token for the session:

- Cookie Name: __Secure-Request-Token
- Value: Random UUID

The CSRF security filter expects the following HTTP request header on state-changing methods such as POST or PUT:

- Header Name: Request-Token
- Value: UUID matching the __Secure-Request-Token cookie value
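On the server side, the double submit cookie strategy reduces to comparing two copies of the same random value. The following Python sketch is a generic illustration, not NiFi's Spring Security filter. The set of exempt methods is an assumption, and the function names are hypothetical.

```python
import hmac
import uuid
from typing import Optional

# Methods exempt from the token check in this sketch; an assumption modeled on
# common CSRF filters, which skip methods that should not change server state.
SAFE_METHODS = frozenset({"GET", "HEAD", "OPTIONS", "TRACE"})


def new_request_token() -> str:
    """Random value the server sets in the request token cookie at session start."""
    return str(uuid.uuid4())


def csrf_check_passes(method: str, cookie_token: Optional[str],
                      header_token: Optional[str]) -> bool:
    """Double submit check: the header value must equal the cookie value."""
    if method.upper() in SAFE_METHODS:
        return True
    if not cookie_token or not header_token:
        return False
    # Constant-time comparison avoids leaking how many characters matched
    return hmac.compare_digest(cookie_token.encode("utf-8"), header_token.encode("utf-8"))
```

A third-party page can cause the browser to send the cookie, but it cannot read the cookie to copy its value into the header. That is why requiring both copies to match blocks the forged request.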

Multi-Tenant Authorization

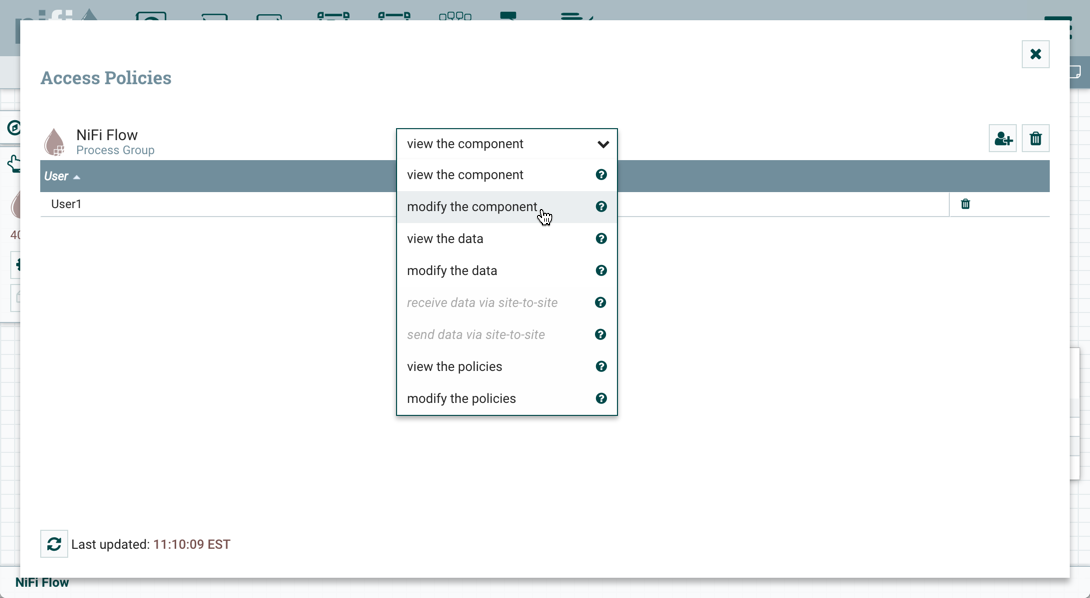

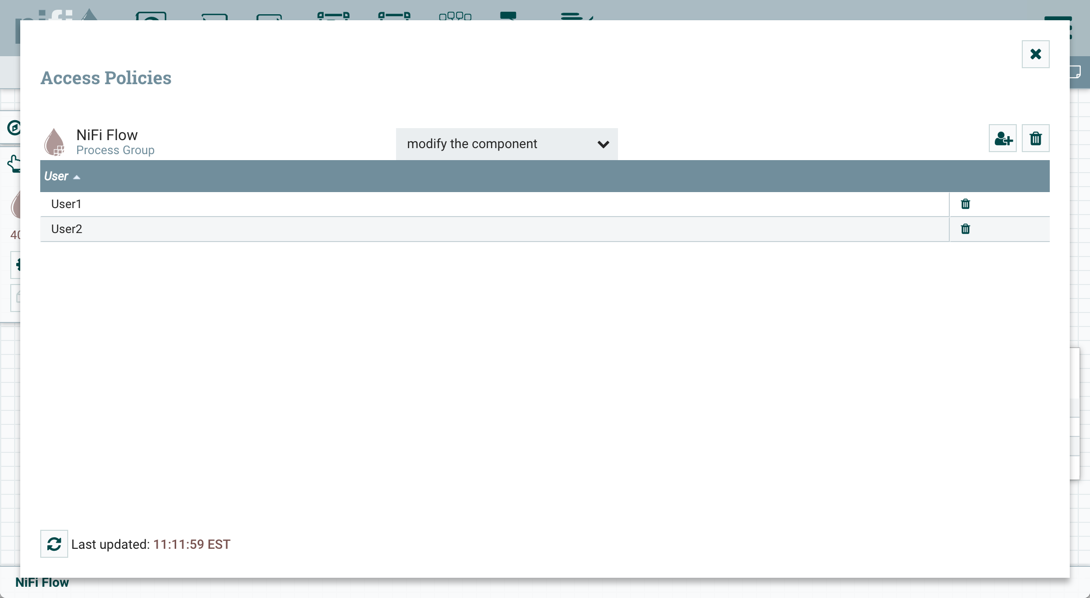

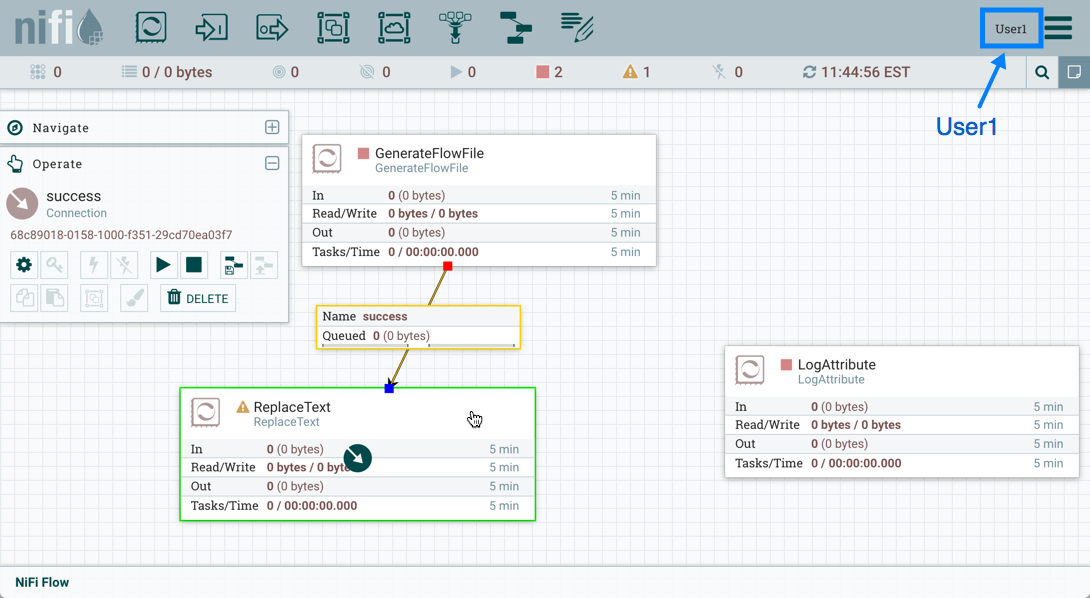

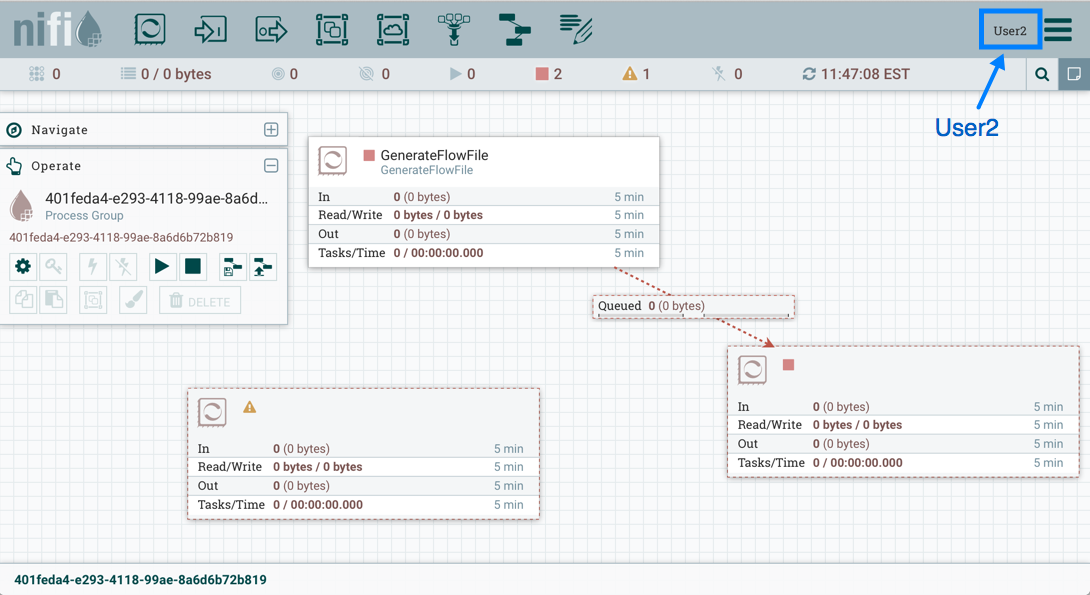

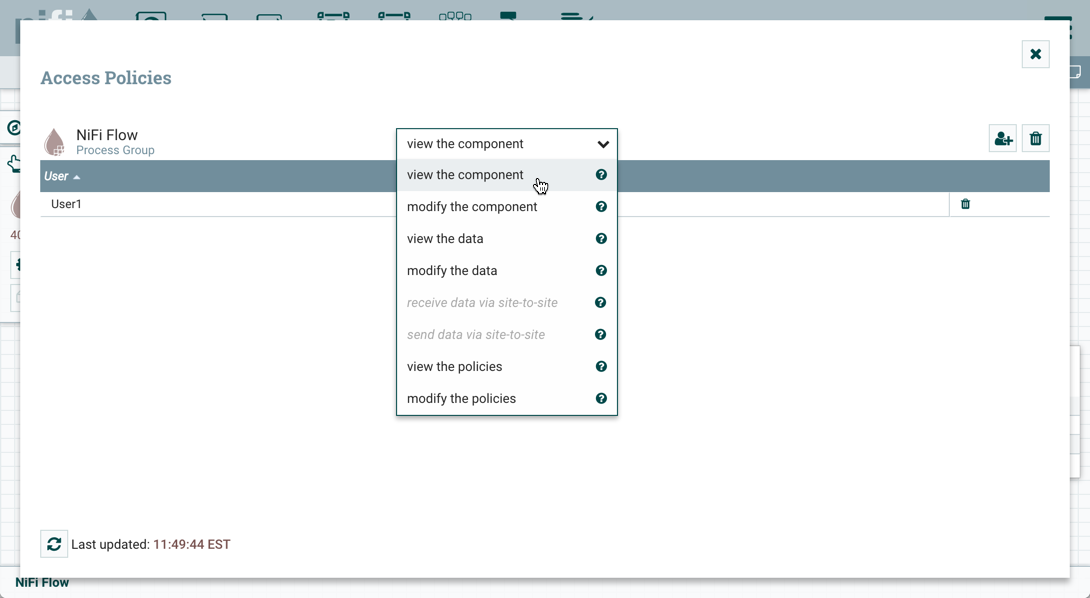

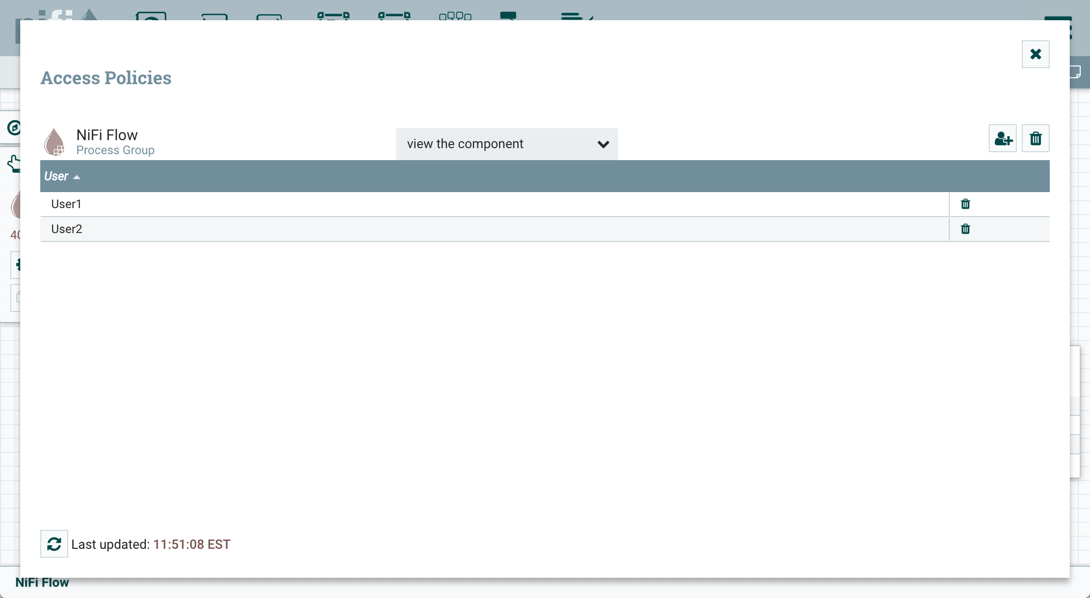

After you have configured NiFi to run securely and with an authentication mechanism, you must configure who has access to the system, and the level of their access. You can do this using 'multi-tenant authorization'. Multi-tenant authorization enables multiple groups of users (tenants) to command, control, and observe different parts of the dataflow, with varying levels of authorization. When an authenticated user attempts to view or modify a NiFi resource, the system checks whether the user has privileges to perform that action. These privileges are defined by policies that you can apply system-wide or to individual components.

Authorizer Configuration

An 'authorizer' grants users the privileges to manage users and policies by creating preliminary authorizations at startup.

Authorizers are configured using two properties in the nifi.properties file:

- The nifi.authorizer.configuration.file property specifies the configuration file where authorizers are defined. By default, the authorizers.xml file located in the root installation conf directory is selected.
- The nifi.security.user.authorizer property indicates which of the configured authorizers in the authorizers.xml file to use.

Authorizers.xml Setup

The authorizers.xml file is used to define and configure available authorizers. The default authorizer is the StandardManagedAuthorizer. The managed authorizer is composed of a UserGroupProvider and an AccessPolicyProvider. The users, groups, and access policies will be loaded and optionally configured through these providers. The managed authorizer will make all access decisions based on these provided users, groups, and access policies.

During startup there is a check to ensure that there are no two users/groups with the same identity/name. This check is executed regardless of the configured implementation. This is necessary because this is how users/groups are identified and authorized during access decisions.
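A startup uniqueness check of this kind can be sketched in Python with a counter. The sketch assumes exact, case-sensitive comparison and checks users and groups separately. The function names are hypothetical.

```python
from collections import Counter
from typing import Iterable, List


def find_duplicates(names: Iterable[str]) -> List[str]:
    """Return every name that occurs more than once, in sorted order."""
    counts = Counter(names)
    return sorted(name for name, count in counts.items() if count > 1)


def check_unique_tenants(user_identities: Iterable[str], group_names: Iterable[str]) -> None:
    """Fail fast, as a startup check would, when identities or names collide."""
    duplicate_users = find_duplicates(user_identities)
    duplicate_groups = find_duplicates(group_names)
    if duplicate_users or duplicate_groups:
        raise ValueError(
            f"duplicate user identities {duplicate_users}, duplicate group names {duplicate_groups}"
        )
```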

FileUserGroupProvider

The default UserGroupProvider is the FileUserGroupProvider; however, you can develop additional UserGroupProviders as extensions. The FileUserGroupProvider has the following properties:

- Users File - The file where the FileUserGroupProvider stores users and groups. By default, the users.xml in the conf directory is chosen.
- Legacy Authorized Users File - The full path to an existing authorized-users.xml that will automatically be used to load the users and groups into the Users File.
- Initial User Identity - The identity of a user or system to seed the Users File. The name of each property must be unique, for example: "Initial User Identity A", "Initial User Identity B", "Initial User Identity C" or "Initial User Identity 1", "Initial User Identity 2", "Initial User Identity 3"
- Initial Group Identity - The identity of a user group to seed the Users File. The name of each property must be unique, for example: "Initial Group Identity A", "Initial Group Identity B", "Initial Group Identity C" or "Initial Group Identity 1", "Initial Group Identity 2", "Initial Group Identity 3"
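Because each seed property only needs a unique name, a reader of authorizers.xml can collect the seed values by prefix. The following Python sketch parses the userGroupProvider element with the standard library and gathers the non-blank Initial User Identity and Initial Group Identity values. Ignoring blank values and sorting by property name are assumptions of the sketch, and the function names are hypothetical.

```python
import xml.etree.ElementTree as ET
from typing import Dict, List


def provider_properties(authorizers_xml: str, identifier: str) -> Dict[str, str]:
    """Read the property elements of the userGroupProvider with the given identifier."""
    root = ET.fromstring(authorizers_xml)
    for provider in root.iter("userGroupProvider"):
        if provider.findtext("identifier") == identifier:
            return {prop.get("name"): (prop.text or "").strip()
                    for prop in provider.findall("property")}
    raise KeyError(f"no userGroupProvider with identifier {identifier!r}")


def seed_values(properties: Dict[str, str], prefix: str) -> List[str]:
    """Collect non-blank values of properties whose names start with the prefix."""
    return [properties[name] for name in sorted(properties)
            if name.startswith(prefix) and properties[name]]
```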

LdapUserGroupProvider

Another option for the UserGroupProvider is the LdapUserGroupProvider. By default, this option is commented out, but it can be configured in lieu of the FileUserGroupProvider. This will sync users and groups from a directory server and present them in the NiFi UI in read-only form.

The LdapUserGroupProvider has the following properties:

| Property Name | Description |
|---|---|
|  | How the connection to the LDAP server is authenticated. Possible values are |
|  | The DN of the manager that is used to bind to the LDAP server to search for users. |
|  | The password of the manager that is used to bind to the LDAP server to search for users. |
|  | Path to the Keystore that is used when connecting to LDAP using LDAPS or START_TLS. |
|  | Password for the Keystore that is used when connecting to LDAP using LDAPS or START_TLS. |
|  | Type of the Keystore that is used when connecting to LDAP using LDAPS or START_TLS (i.e. |
|  | Path to the Truststore that is used when connecting to LDAP using LDAPS or START_TLS. |
|  | Password for the Truststore that is used when connecting to LDAP using LDAPS or START_TLS. |
|  | Type of the Truststore that is used when connecting to LDAP using LDAPS or START_TLS (i.e. |
|  | Client authentication policy when connecting to LDAP using LDAPS or START_TLS. Possible values are |
|  | Protocol to use when connecting to LDAP using LDAPS or START_TLS. (i.e. |
|  | Specifies whether the TLS should be shut down gracefully before the target context is closed. Defaults to false. |
|  | Strategy for handling referrals. Possible values are |
|  | Duration of connect timeout. (i.e. |
|  | Duration of read timeout. (i.e. |
|  | Space-separated list of URLs of the LDAP servers (i.e. |
|  | Sets the page size when retrieving users and groups. If not specified, no paging is performed. |
|  | Sets whether group membership decisions are case sensitive. When a user or group is inferred (by not specifying a user or group search base, user identity attribute, or group name attribute), case sensitivity is enforced because the value to use for the user identity or group name would be ambiguous. Defaults to false. |
|  | Duration of time between syncing users and groups. (i.e. |
|  | Base DN for searching for users (i.e. |
|  | Object class for identifying users (i.e. |
|  | Search scope for searching users ( |
|  | Filter for searching for users against the |
|  | Attribute to use to extract user identity (i.e. |
|  | Attribute to use to define group membership (i.e. |
|  | If blank, the value of the attribute defined in |
|  | Base DN for searching for groups (i.e. |
|  | Object class for identifying groups (i.e. |
|  | Search scope for searching groups ( |
|  | Filter for searching for groups against the |
|  | Attribute to use to extract group name (i.e. |
|  | Attribute to use to define group membership (i.e. |
|  | If blank, the value of the attribute defined in |

| Any identity mapping rules specified in nifi.properties will also be applied to the user identities. Group names are not mapped. |

Composite Implementations

Another option for the UserGroupProvider is a composite implementation, in which multiple sources/implementations are configured and composed. For instance, an admin can configure users/groups to be loaded from a file and a directory server. There are two composite implementations: one that supports multiple UserGroupProviders and one that supports multiple UserGroupProviders and a single configurable UserGroupProvider.

The CompositeUserGroupProvider will provide support for retrieving users and groups from multiple sources. The CompositeUserGroupProvider has the following property:

| Property Name | Description |
|---|---|
|  | The identifier of user group providers to load from. The name of each property must be unique, for example: "User Group Provider A", "User Group Provider B", "User Group Provider C" or "User Group Provider 1", "User Group Provider 2", "User Group Provider 3" |

| Any identity mapping rules specified in nifi.properties are not applied in this implementation. This behavior would need to be applied by the base implementation. |

The CompositeConfigurableUserGroupProvider will provide support for retrieving users and groups from multiple sources. Additionally, a single configurable user group provider is required. Users from the configurable user group provider are configurable; however, users loaded from one of the User Group Provider [unique key] properties will not be. The CompositeConfigurableUserGroupProvider has the following properties:

| Property Name | Description |
|---|---|
|  | A configurable user group provider. |
|  | The identifier of user group providers to load from. The name of each property must be unique, for example: "User Group Provider A", "User Group Provider B", "User Group Provider C" or "User Group Provider 1", "User Group Provider 2", "User Group Provider 3" |

FileAccessPolicyProvider

The default AccessPolicyProvider is the FileAccessPolicyProvider; however, you can develop additional AccessPolicyProviders as extensions. The FileAccessPolicyProvider has the following properties:

| Property Name | Description |
|---|---|
|  | The identifier for a User Group Provider defined above that will be used to access users and groups for use in the managed access policies. |
|  | The file where the FileAccessPolicyProvider will store policies. |
|  | The identity of an initial admin user that will be granted access to the UI and given the ability to create additional users, groups, and policies. The value of this property could be a DN when using certificates or LDAP, or a Kerberos principal. This property will only be used when there are no other policies defined. If this property is specified, then a Legacy Authorized Users File cannot be specified. If the property |
|  | The identity of an initial admin group that will be granted access to the UI and given the ability to create additional users, groups, and policies. The value of this property could be a DN when using certificates or LDAP, or a Kerberos principal. This property will only be used when there are no other policies defined. If this property is specified, then a Legacy Authorized Users File cannot be specified. |
|  | The full path to an existing authorized-users.xml that will be automatically converted to the new authorizations model. If this property is specified, then an Initial Admin Identity or Initial Admin Group cannot be specified, and this property will only be used when there are no other users, groups, and policies defined. |
|  | The identity of a NiFi cluster node. When clustered, a property for each node should be defined, so that every node knows about every other node. If not clustered, these properties can be ignored. The name of each property must be unique, for example for a three-node cluster: "Node Identity A", "Node Identity B", "Node Identity C" or "Node Identity 1", "Node Identity 2", "Node Identity 3" |
|  | The name of a group containing NiFi cluster nodes. The typical use for this is when nodes are dynamically added/removed from the cluster. |

| The identities configured in the Initial Admin Identity, Initial Admin Group, the Node Identity properties, or discovered in a Legacy Authorized Users File must be available in the configured User Group Provider. |

| Any users in the legacy users file must be found in the configured User Group Provider. |

| Any identity mapping rules specified in nifi.properties will also be applied to the node identities, so the values should be the unmapped identities (i.e. full DN from a certificate). This identity must be found in the configured User Group Provider. |
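Identity mapping rules pair a regular expression with a replacement value. The following Python sketch applies such pairs to an identity. It assumes that the first pattern matching the whole identity wins, and it converts Java-style $1 group references into Python syntax. The function name is hypothetical.

```python
import re
from typing import List, Tuple


def apply_identity_mappings(identity: str, mappings: List[Tuple[str, str]]) -> str:
    """Apply the first mapping whose pattern matches the whole identity."""
    for pattern, value in mappings:
        match = re.fullmatch(pattern, identity)
        if match:
            # Convert Java-style $1 group references into Python's \g<1> syntax
            template = re.sub(r"\$(\d+)", r"\\g<\1>", value)
            return match.expand(template)
    # No pattern matched: the identity is used unchanged
    return identity
```

For example, the pattern ^CN=(.*?), OU=(.*?)$ with the value $1 maps CN=nifi-node-1, OU=NIFI to nifi-node-1. Because the mapping runs on the configured value, the node identities above must be written in their unmapped, full-DN form.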

StandardManagedAuthorizer

The default authorizer is the StandardManagedAuthorizer; however, you can develop additional authorizers as extensions. The StandardManagedAuthorizer has the following property:

| Property Name | Description |
|---|---|
|  | The identifier for an Access Policy Provider defined above. |

FileAuthorizer

The FileAuthorizer has been replaced with the more granular StandardManagedAuthorizer approach described above. However, it is still available for backwards compatibility reasons. The FileAuthorizer has the following properties:

| Property Name | Description |
|---|---|
|  | The file where the FileAuthorizer stores policies. By default, the authorizations.xml in the |
|  | The file where the FileAuthorizer stores users and groups. By default, the users.xml in the |
|  | The identity of an initial admin user that is granted access to the UI and given the ability to create additional users, groups, and policies. This property is only used when there are no other users, groups, and policies defined. |
|  | The full path to an existing authorized-users.xml that is automatically converted to the multi-tenant authorization model. This property is only used when there are no other users, groups, and policies defined. |
|  | The identity of a NiFi cluster node. When clustered, a property for each node should be defined, so that every node knows about every other node. If not clustered, these properties can be ignored. |

| Any identity mapping rules specified in nifi.properties will also be applied to the initial admin identity, so the value should be the unmapped identity. |

| Any identity mapping rules specified in nifi.properties will also be applied to the node identities, so the values should be the unmapped identities (i.e. full DN from a certificate). |

Initial Admin Identity (New NiFi Instance)

If you are setting up a secured NiFi instance for the first time, you must manually designate an “Initial Admin Identity” in the authorizers.xml file. This initial admin user is granted access to the UI and given the ability to create additional users, groups, and policies. The value of this property could be a DN (when using certificates or LDAP) or a Kerberos principal. If you are the NiFi administrator, add yourself as the “Initial Admin Identity”. Alternatively, specifying an “Initial Admin Group” grants administrative access to a group of users, mitigating dependence on a single person or the need for a shared account.

After you have edited and saved the authorizers.xml file, restart NiFi. The “Initial Admin Identity” user and administrative policies are added to the users.xml and authorizations.xml files during restart. Once NiFi starts, the “Initial Admin Identity” user is able to access the UI and begin managing users, groups, and policies.

| For a brand new secure flow, providing the "Initial Admin Identity" gives that user access to get into the UI and to manage users, groups and policies. But if that user wants to start modifying the flow, they need to grant themselves policies for the root process group. The system is unable to do this automatically because in a new flow the UUID of the root process group is not permanent until the flow.json.gz is generated. If the NiFi instance is an upgrade from an existing flow.json.gz or a 1.x instance going from unsecure to secure, then the "Initial Admin Identity" user is automatically given the privileges to modify the flow. |

Some common use cases are described below.

File-based (LDAP Authentication)

Here is an example LDAP entry using the name John Smith:

```xml
<authorizers>
    <userGroupProvider>
        <identifier>file-user-group-provider</identifier>
        <class>org.apache.nifi.authorization.FileUserGroupProvider</class>
        <property name="Users File">./conf/users.xml</property>
        <property name="Legacy Authorized Users File"></property>
        <property name="Initial User Identity 1">cn=John Smith,ou=people,dc=example,dc=com</property>
    </userGroupProvider>
    <accessPolicyProvider>
        <identifier>file-access-policy-provider</identifier>
        <class>org.apache.nifi.authorization.FileAccessPolicyProvider</class>
        <property name="User Group Provider">file-user-group-provider</property>
        <property name="Authorizations File">./conf/authorizations.xml</property>
        <property name="Initial Admin Identity">cn=John Smith,ou=people,dc=example,dc=com</property>
        <property name="Legacy Authorized Users File"></property>
        <property name="Node Identity 1"></property>
    </accessPolicyProvider>
    <authorizer>
        <identifier>managed-authorizer</identifier>
        <class>org.apache.nifi.authorization.StandardManagedAuthorizer</class>
        <property name="Access Policy Provider">file-access-policy-provider</property>
    </authorizer>
</authorizers>
```

File-based (Kerberos Authentication)

Here is an example Kerberos entry using the name John Smith and realm NIFI.APACHE.ORG:

```xml
<authorizers>
    <userGroupProvider>
        <identifier>file-user-group-provider</identifier>
        <class>org.apache.nifi.authorization.FileUserGroupProvider</class>
        <property name="Users File">./conf/users.xml</property>
        <property name="Legacy Authorized Users File"></property>
        <property name="Initial User Identity 1">johnsmith@NIFI.APACHE.ORG</property>
    </userGroupProvider>
    <accessPolicyProvider>
        <identifier>file-access-policy-provider</identifier>
        <class>org.apache.nifi.authorization.FileAccessPolicyProvider</class>
        <property name="User Group Provider">file-user-group-provider</property>
        <property name="Authorizations File">./conf/authorizations.xml</property>
        <property name="Initial Admin Identity">johnsmith@NIFI.APACHE.ORG</property>
        <property name="Legacy Authorized Users File"></property>
        <property name="Node Identity 1"></property>
    </accessPolicyProvider>
    <authorizer>
        <identifier>managed-authorizer</identifier>
        <class>org.apache.nifi.authorization.StandardManagedAuthorizer</class>
        <property name="Access Policy Provider">file-access-policy-provider</property>
    </authorizer>
</authorizers>
```

LDAP-based Users/Groups Referencing User DN

Here is an example loading users and groups from LDAP. Group membership will be driven through the member attribute of each group. Authorization will still use file-based access policies:

```ldif
dn: cn=User 1,ou=users,o=nifi
objectClass: organizationalPerson
objectClass: person
objectClass: inetOrgPerson
objectClass: top
cn: User 1
sn: User1
uid: user1

dn: cn=User 2,ou=users,o=nifi
objectClass: organizationalPerson
objectClass: person
objectClass: inetOrgPerson
objectClass: top
cn: User 2
sn: User2
uid: user2

dn: cn=admins,ou=groups,o=nifi
objectClass: groupOfNames
objectClass: top
cn: admins
member: cn=User 1,ou=users,o=nifi
member: cn=User 2,ou=users,o=nifi
```

```xml
<authorizers>
    <userGroupProvider>
        <identifier>ldap-user-group-provider</identifier>
        <class>org.apache.nifi.ldap.tenants.LdapUserGroupProvider</class>
        <property name="Authentication Strategy">ANONYMOUS</property>
        <property name="Manager DN"></property>
        <property name="Manager Password"></property>
        <property name="TLS - Keystore"></property>
        <property name="TLS - Keystore Password"></property>
        <property name="TLS - Keystore Type"></property>
        <property name="TLS - Truststore"></property>
        <property name="TLS - Truststore Password"></property>
        <property name="TLS - Truststore Type"></property>
        <property name="TLS - Client Auth"></property>
        <property name="TLS - Protocol"></property>
        <property name="TLS - Shutdown Gracefully"></property>
        <property name="Referral Strategy">FOLLOW</property>
        <property name="Connect Timeout">10 secs</property>
        <property name="Read Timeout">10 secs</property>
        <property name="Url">ldap://localhost:10389</property>
        <property name="Page Size"></property>
        <property name="Sync Interval">30 mins</property>
        <property name="Group Membership - Enforce Case Sensitivity">false</property>
        <property name="User Search Base">ou=users,o=nifi</property>
        <property name="User Object Class">person</property>
        <property name="User Search Scope">ONE_LEVEL</property>
        <property name="User Search Filter"></property>
        <property name="User Identity Attribute">cn</property>
        <property name="User Group Name Attribute"></property>
        <property name="User Group Name Attribute - Referenced Group Attribute"></property>
        <property name="Group Search Base">ou=groups,o=nifi</property>
        <property name="Group Object Class">groupOfNames</property>
        <property name="Group Search Scope">ONE_LEVEL</property>
        <property name="Group Search Filter"></property>
        <property name="Group Name Attribute">cn</property>
        <property name="Group Member Attribute">member</property>
        <property name="Group Member Attribute - Referenced User Attribute"></property>
    </userGroupProvider>
    <accessPolicyProvider>
        <identifier>file-access-policy-provider</identifier>
        <class>org.apache.nifi.authorization.FileAccessPolicyProvider</class>
        <property name="User Group Provider">ldap-user-group-provider</property>
        <property name="Authorizations File">./conf/authorizations.xml</property>
        <property name="Initial Admin Identity">John Smith</property>
        <property name="Legacy Authorized Users File"></property>
        <property name="Node Identity 1"></property>
    </accessPolicyProvider>
    <authorizer>
        <identifier>managed-authorizer</identifier>
        <class>org.apache.nifi.authorization.StandardManagedAuthorizer</class>
        <property name="Access Policy Provider">file-access-policy-provider</property>
    </authorizer>
</authorizers>
```

The Initial Admin Identity value would be loaded from the cn attribute of John Smith’s entry, based on the User Identity Attribute value.
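The two group membership strategies in this section differ only in what the member values contain. When Group Member Attribute is set to member, the values are full user DNs. When Group Member Attribute - Referenced User Attribute is set to uid, each value is matched against a user's uid. The following Python sketch resolves both cases using data modeled on the LDIF entries in this section. The function name is hypothetical. The sketch compares DNs exactly, whereas LDAP servers normalize DNs.

```python
from typing import List, Mapping, Sequence

Entry = Mapping[str, Sequence[str]]  # LDAP attribute name -> values


def group_member_identities(group: Entry, users: Mapping[str, Entry],
                            member_attribute: str, identity_attribute: str,
                            referenced_user_attribute: str = "") -> List[str]:
    """Resolve a group's member values to user identities.

    users maps each user DN to that user's attributes.
    """
    identities: List[str] = []
    for member_value in group.get(member_attribute, []):
        for dn, attributes in users.items():
            if referenced_user_attribute:
                # Member values hold a user attribute, such as uid
                matched = member_value in attributes.get(referenced_user_attribute, [])
            else:
                # Member values hold full user DNs (compared exactly in this sketch)
                matched = dn == member_value
            if matched:
                identities.extend(attributes.get(identity_attribute, [])[:1])
    return identities
```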

LDAP-based Users/Groups Referencing User Attribute

Here is an example loading users and groups from LDAP. Group membership will be driven through the memberUid attribute of each group. Authorization will still use file-based access policies:

```ldif
dn: uid=User 1,ou=Users,dc=local
objectClass: inetOrgPerson
objectClass: posixAccount
objectClass: shadowAccount
uid: user1
cn: User 1

dn: uid=User 2,ou=Users,dc=local
objectClass: inetOrgPerson
objectClass: posixAccount
objectClass: shadowAccount
uid: user2
cn: User 2

dn: cn=Managers,ou=Groups,dc=local
objectClass: posixGroup
cn: Managers
memberUid: user1
memberUid: user2
```

```xml
<authorizers>
    <userGroupProvider>
        <identifier>ldap-user-group-provider</identifier>
        <class>org.apache.nifi.ldap.tenants.LdapUserGroupProvider</class>
        <property name="Authentication Strategy">ANONYMOUS</property>
        <property name="Manager DN"></property>
        <property name="Manager Password"></property>
        <property name="TLS - Keystore"></property>
        <property name="TLS - Keystore Password"></property>
        <property name="TLS - Keystore Type"></property>
        <property name="TLS - Truststore"></property>
        <property name="TLS - Truststore Password"></property>
        <property name="TLS - Truststore Type"></property>
        <property name="TLS - Client Auth"></property>
        <property name="TLS - Protocol"></property>
        <property name="TLS - Shutdown Gracefully"></property>
        <property name="Referral Strategy">FOLLOW</property>
        <property name="Connect Timeout">10 secs</property>
        <property name="Read Timeout">10 secs</property>
        <property name="Url">ldap://localhost:10389</property>
        <property name="Page Size"></property>
        <property name="Sync Interval">30 mins</property>
        <property name="Group Membership - Enforce Case Sensitivity">false</property>
        <property name="User Search Base">ou=Users,dc=local</property>
        <property name="User Object Class">posixAccount</property>
        <property name="User Search Scope">ONE_LEVEL</property>
        <property name="User Search Filter"></property>
        <property name="User Identity Attribute">cn</property>
        <property name="User Group Name Attribute"></property>
        <property name="User Group Name Attribute - Referenced Group Attribute"></property>
        <property name="Group Search Base">ou=Groups,dc=local</property>
        <property name="Group Object Class">posixGroup</property>
        <property name="Group Search Scope">ONE_LEVEL</property>
        <property name="Group Search Filter"></property>
        <property name="Group Name Attribute">cn</property>
        <property name="Group Member Attribute">memberUid</property>
        <property name="Group Member Attribute - Referenced User Attribute">uid</property>
    </userGroupProvider>
    <accessPolicyProvider>
        <identifier>file-access-policy-provider</identifier>
        <class>org.apache.nifi.authorization.FileAccessPolicyProvider</class>
        <property name="User Group Provider">ldap-user-group-provider</property>
        <property name="Authorizations File">./conf/authorizations.xml</property>
        <property name="Initial Admin Identity">John Smith</property>
        <property name="Legacy Authorized Users File"></property>
        <property name="Node Identity 1"></property>
    </accessPolicyProvider>
    <authorizer>
        <identifier>managed-authorizer</identifier>
        <class>org.apache.nifi.authorization.StandardManagedAuthorizer</class>
        <property name="Access Policy Provider">file-access-policy-provider</property>
    </authorizer>
</authorizers>
```

Composite - File and LDAP-based Users/Groups

Here is an example of a composite implementation that loads users and groups from both LDAP and a local file. Group membership is driven by the member attribute of each group. Users from LDAP are read-only, while users loaded from the file are configurable in the UI.

```ldif
dn: cn=User 1,ou=users,o=nifi
objectClass: organizationalPerson
objectClass: person
objectClass: inetOrgPerson
objectClass: top
cn: User 1
sn: User1
uid: user1

dn: cn=User 2,ou=users,o=nifi
objectClass: organizationalPerson
objectClass: person
objectClass: inetOrgPerson
objectClass: top
cn: User 2
sn: User2
uid: user2

dn: cn=admins,ou=groups,o=nifi
objectClass: groupOfNames
objectClass: top
cn: admins
member: cn=User 1,ou=users,o=nifi
member: cn=User 2,ou=users,o=nifi
```

```xml
<authorizers>
    <userGroupProvider>
        <identifier>file-user-group-provider</identifier>
        <class>org.apache.nifi.authorization.FileUserGroupProvider</class>
        <property name="Users File">./conf/users.xml</property>
        <property name="Legacy Authorized Users File"></property>
        <property name="Initial User Identity 1">cn=nifi-node1,ou=servers,dc=example,dc=com</property>
        <property name="Initial User Identity 2">cn=nifi-node2,ou=servers,dc=example,dc=com</property>
    </userGroupProvider>
    <userGroupProvider>
        <identifier>ldap-user-group-provider</identifier>
        <class>org.apache.nifi.ldap.tenants.LdapUserGroupProvider</class>
        <property name="Authentication Strategy">ANONYMOUS</property>
        <property name="Manager DN"></property>
        <property name="Manager Password"></property>
        <property name="TLS - Keystore"></property>
        <property name="TLS - Keystore Password"></property>
        <property name="TLS - Keystore Type"></property>
        <property name="TLS - Truststore"></property>
        <property name="TLS - Truststore Password"></property>
        <property name="TLS - Truststore Type"></property>
        <property name="TLS - Client Auth"></property>
        <property name="TLS - Protocol"></property>
        <property name="TLS - Shutdown Gracefully"></property>
        <property name="Referral Strategy">FOLLOW</property>
        <property name="Connect Timeout">10 secs</property>
        <property name="Read Timeout">10 secs</property>
        <property name="Url">ldap://localhost:10389</property>
        <property name="Page Size"></property>
        <property name="Sync Interval">30 mins</property>
        <property name="Group Membership - Enforce Case Sensitivity">false</property>
        <property name="User Search Base">ou=users,o=nifi</property>
        <property name="User Object Class">person</property>
        <property name="User Search Scope">ONE_LEVEL</property>
        <property name="User Search Filter"></property>
        <property name="User Identity Attribute">cn</property>
        <property name="User Group Name Attribute"></property>
        <property name="User Group Name Attribute - Referenced Group Attribute"></property>
        <property name="Group Search Base">ou=groups,o=nifi</property>
        <property name="Group Object Class">groupOfNames</property>
        <property name="Group Search Scope">ONE_LEVEL</property>
        <property name="Group Search Filter"></property>
        <property name="Group Name Attribute">cn</property>
        <property name="Group Member Attribute">member</property>
        <property name="Group Member Attribute - Referenced User Attribute"></property>
    </userGroupProvider>
    <userGroupProvider>
        <identifier>composite-user-group-provider</identifier>
        <class>org.apache.nifi.authorization.CompositeConfigurableUserGroupProvider</class>
        <property name="Configurable User Group Provider">file-user-group-provider</property>
        <property name="User Group Provider 1">ldap-user-group-provider</property>
    </userGroupProvider>
    <accessPolicyProvider>
        <identifier>file-access-policy-provider</identifier>
        <class>org.apache.nifi.authorization.FileAccessPolicyProvider</class>
        <property name="User Group Provider">composite-user-group-provider</property>
        <property name="Authorizations File">./conf/authorizations.xml</property>
        <property name="Initial Admin Identity">John Smith</property>
        <property name="Legacy Authorized Users File"></property>
        <property name="Node Identity 1">cn=nifi-node1,ou=servers,dc=example,dc=com</property>
        <property name="Node Identity 2">cn=nifi-node2,ou=servers,dc=example,dc=com</property>
    </accessPolicyProvider>
    <authorizer>
        <identifier>managed-authorizer</identifier>
        <class>org.apache.nifi.authorization.StandardManagedAuthorizer</class>
        <property name="Access Policy Provider">file-access-policy-provider</property>
    </authorizer>
</authorizers>
```

In this example, users and groups are loaded from LDAP, while the servers are managed in a local file. The Initial Admin Identity value comes from an attribute of an LDAP entry, selected by the User Identity Attribute. The Node Identity values are established in the local file through the Initial User Identity properties.
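The split between the two providers can be modeled in a few lines of Python. This is a hypothetical sketch, not NiFi's CompositeConfigurableUserGroupProvider: it merges the example's users while recording which provider supplied each one and whether it is editable in the UI, resolves the `admins` group by full DN (because the Referenced User Attribute is left blank, each member value is expected to be the user's full DN), and runs an illustrative check that every Node Identity is supplied by some provider. All names in the code are assumptions.

```python
# Hypothetical model of the composite example; not NiFi's
# CompositeConfigurableUserGroupProvider. Data comes from the example above.
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    identity: str
    provider: str       # identifier of the provider that supplied the user
    configurable: bool  # True if the user can be edited in the NiFi UI


# Initial User Identity values of file-user-group-provider (the servers).
FILE_USERS = [
    "cn=nifi-node1,ou=servers,dc=example,dc=com",
    "cn=nifi-node2,ou=servers,dc=example,dc=com",
]
# LDAP user DN -> value of the User Identity Attribute (cn).
LDAP_USERS = {
    "cn=User 1,ou=users,o=nifi": "User 1",
    "cn=User 2,ou=users,o=nifi": "User 2",
}
# LDAP group cn -> values of the Group Member Attribute (member).
LDAP_GROUPS = {
    "admins": ["cn=User 1,ou=users,o=nifi", "cn=User 2,ou=users,o=nifi"],
}


def merge_users():
    """Combine users from both providers, tagging where each one came from."""
    users = [User(i, "file-user-group-provider", True) for i in FILE_USERS]
    users += [User(cn, "ldap-user-group-provider", False) for cn in LDAP_USERS.values()]
    return users


def resolve_group(name):
    """Referenced User Attribute is blank, so each member value is a full user DN."""
    return [LDAP_USERS[dn] for dn in LDAP_GROUPS.get(name, []) if dn in LDAP_USERS]


def missing_node_identities(node_identities, users):
    """Node Identity values that no provider supplies (a hypothetical sanity check)."""
    known = {u.identity for u in users}
    return [n for n in node_identities if n not in known]
```

With the example data, `missing_node_identities(FILE_USERS, merge_users())` returns an empty list, reflecting that both Node Identity values are provided by the local file rather than by LDAP.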

Legacy Authorized Users (NiFi Instance Upgrade)

If you are upgrading from a 0.x NiFi instance, you can convert your previously configured users and roles to the multi-tenant authorization model. In the authorizers.xml file, specify the location of your existing authorized-users.xml file in the Legacy Authorized Users File property.

Here is an example entry:

```xml
<authorizers>
    <userGroupProvider>
        <identifier>file-user-group-provider</identifier>
        <class>org.apache.nifi.authorization.FileUserGroupProvider</class>
        <property name="Users File">./conf/users.xml</property>
        <property name="Legacy Authorized Users File">/Users/johnsmith/config_files/authorized-users.xml</property>
        <property name="Initial User Identity 1"></property>
    </userGroupProvider>
    <accessPolicyProvider>
        <identifier>file-access-policy-provider</identifier>
        <class>org.apache.nifi.authorization.FileAccessPolicyProvider</class>
        <property name="User Group Provider">file-user-group-provider</property>
        <property name="Authorizations File">./conf/authorizations.xml</property>
        <property name="Initial Admin Identity"></property>
        <property name="Legacy Authorized Users File">/Users/johnsmith/config_files/authorized-users.xml</property>
        <property name="Node Identity 1"></property>
    </accessPolicyProvider>
    <authorizer>
        <identifier>managed-authorizer</identifier>
        <class>org.apache.nifi.authorization.StandardManagedAuthorizer</class>
        <property name="Access Policy Provider">file-access-policy-provider</property>
    </authorizer>
</authorizers>
```

After you have edited and saved the authorizers.xml file, restart NiFi. Users and roles from the authorized-users.xml file are converted and added as identities and policies in the users.xml and authorizations.xml files. Once the application starts, users who previously had a legacy Administrator role can access the UI and begin managing users, groups, and policies.
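Before restarting, it can help to preview which legacy users will be able to manage users, groups, and policies afterwards. The Python sketch below assumes the 0.x authorized-users.xml layout of `<user dn="...">` elements that contain `<role name="ROLE_..."/>` children; `LEGACY_SAMPLE`, `legacy_roles`, and `administrators` are hypothetical names, and the sample content is illustrative rather than taken from a real installation.

```python
# Preview a legacy authorized-users.xml before conversion.
# Assumes the 0.x layout: <users><user dn="..."><role name="..."/></user></users>.
import xml.etree.ElementTree as ET

# Hypothetical sample content; in practice, read the file named in
# the Legacy Authorized Users File property.
LEGACY_SAMPLE = """<users>
    <user dn="cn=John Smith,ou=people,dc=example,dc=com">
        <role name="ROLE_ADMIN"/>
        <role name="ROLE_DFM"/>
    </user>
    <user dn="cn=nifi-node1,ou=servers,dc=example,dc=com">
        <role name="ROLE_NIFI"/>
        <role name="ROLE_PROXY"/>
    </user>
</users>"""


def legacy_roles(xml_text: str) -> dict:
    """Map each legacy user DN to the list of role names it holds."""
    root = ET.fromstring(xml_text)
    return {
        user.get("dn"): [role.get("name") for role in user.findall("role")]
        for user in root.findall("user")
    }


def administrators(roles_by_user: dict) -> list:
    """DNs that held ROLE_ADMIN and can therefore manage users, groups, and policies."""
    return sorted(dn for dn, roles in roles_by_user.items() if "ROLE_ADMIN" in roles)


if __name__ == "__main__":
    print(administrators(legacy_roles(LEGACY_SAMPLE)))
```

An empty result would suggest that nobody will be able to manage access after the upgrade, which is worth resolving before NiFi is restarted.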

The following tables summarize the global and component policies assigned to each legacy role if the NiFi instance has an existing flow.json.gz:

Global Access Policies

| | Admin | DFM | Monitor | Provenance | NiFi | Proxy |
|---|---|---|---|---|---|---|
| view the UI | * | * | * | | | |
| access the controller - view | * | * | * | | * | |
| access the controller - modify | | * | | | | |
| access parameter contexts - view | | | | | | |
| access parameter contexts - modify | | | | | | |
| query provenance | | | | * | | |
| access restricted components | | * | | | | |
| access all policies - view | * | | | | | |
| access all policies - modify | * | | | | | |
| access users/user groups - view | * | | | | | |
| access users/user groups - modify | * | | | | | |
| retrieve site-to-site details | | | | | * | |
| view system diagnostics | | * | * | | | |
| proxy user requests | | | | | | * |
| access counters | | | | | | |

Component Access Policies on the Root Process Group

| | Admin | DFM | Monitor | Provenance | NiFi | Proxy |
|---|---|---|---|---|---|---|
| view the component | * | * | * | | | |
| modify the component | | * | | | | |
| view the data | | * | | * | | * |
| modify the data | | * | | | | * |
| view provenance | | | | * | | |

For details on the individual policies in the table, see Access Policies.
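The two tables can also be read as a lookup from legacy role to granted policies. The Python sketch below transcribes them into dictionaries keyed by the table's column names, using the row labels as policy names. Treating a user who held several legacy roles as receiving the union of those roles' policies is an assumption made for illustration, as are the names `GLOBAL`, `ROOT_GROUP`, and `effective_policies`. Root process group policies are only included when a flow exists, matching the flow.json.gz condition stated above.

```python
# Legacy role -> policies, transcribed from the two tables above.
# The union across roles is an illustrative assumption, not NiFi code.
GLOBAL = {
    "Admin": {"view the UI", "access the controller - view",
              "access all policies - view", "access all policies - modify",
              "access users/user groups - view", "access users/user groups - modify"},
    "DFM": {"view the UI", "access the controller - view",
            "access the controller - modify", "access restricted components",
            "view system diagnostics"},
    "Monitor": {"view the UI", "access the controller - view", "view system diagnostics"},
    "Provenance": {"query provenance"},
    "NiFi": {"access the controller - view", "retrieve site-to-site details"},
    "Proxy": {"proxy user requests"},
}
ROOT_GROUP = {
    "Admin": {"view the component"},
    "DFM": {"view the component", "modify the component", "view the data", "modify the data"},
    "Monitor": {"view the component"},
    "Provenance": {"view the data", "view provenance"},
    "NiFi": set(),
    "Proxy": {"view the data", "modify the data"},
}


def effective_policies(roles, has_flow=True):
    """Union of the policies granted by each legacy role.

    Root process group policies are only added when an existing flow is present.
    """
    granted = set()
    for role in roles:
        granted |= GLOBAL[role]
        if has_flow:
            granted |= {f"root group: {p}" for p in ROOT_GROUP[role]}
    return granted
```

For instance, `effective_policies(["Monitor"])` includes "view system diagnostics" and "root group: view the component" but not "access the controller - modify".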

NiFi fails to restart if values exist for both the Initial Admin Identity (or Initial Admin Group) and Legacy Authorized Users File properties. You can specify only one of these values to initialize authorizations.
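Because this conflict only surfaces at restart, a small pre-flight check can catch it earlier. The following Python sketch is hypothetical and not part of NiFi: it parses an authorizers.xml document with the standard library's `xml.etree.ElementTree` and reports the identifier of any accessPolicyProvider that sets both an initial admin (identity or group) and a Legacy Authorized Users File. It treats empty `<property>` elements as unset.

```python
# Hypothetical pre-restart check for the rule above; not part of NiFi.
import xml.etree.ElementTree as ET


def conflicting_initializers(authorizers_xml: str) -> list:
    """Identifiers of access policy providers that set both an initial admin
    (identity or group) and a Legacy Authorized Users File."""
    root = ET.fromstring(authorizers_xml)
    conflicts = []
    for provider in root.iter("accessPolicyProvider"):
        # Empty <property> elements have text None; treat them as unset.
        props = {p.get("name"): (p.text or "").strip() for p in provider.findall("property")}
        has_admin = bool(props.get("Initial Admin Identity") or props.get("Initial Admin Group"))
        has_legacy = bool(props.get("Legacy Authorized Users File"))
        if has_admin and has_legacy:
            conflicts.append((provider.findtext("identifier") or "").strip())
    return conflicts
```

Called from the installation directory as `conflicting_initializers(Path("conf/authorizers.xml").read_text())` (with `from pathlib import Path`), an empty list means no provider mixes the two initialization methods.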


| Do not manually edit the authorizations.xml file. Create authorizations only during initial setup and afterwards using the NiFi UI. |

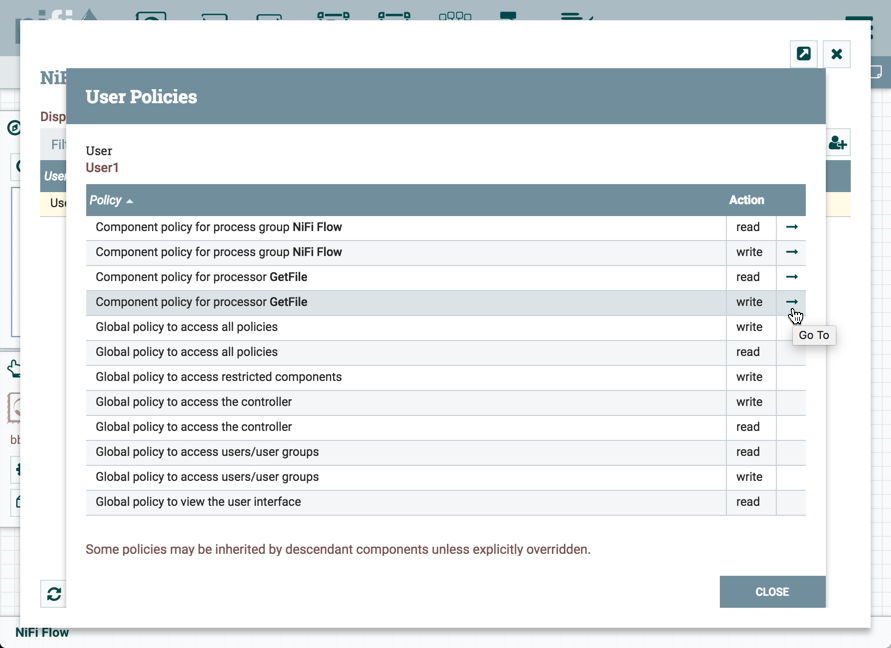

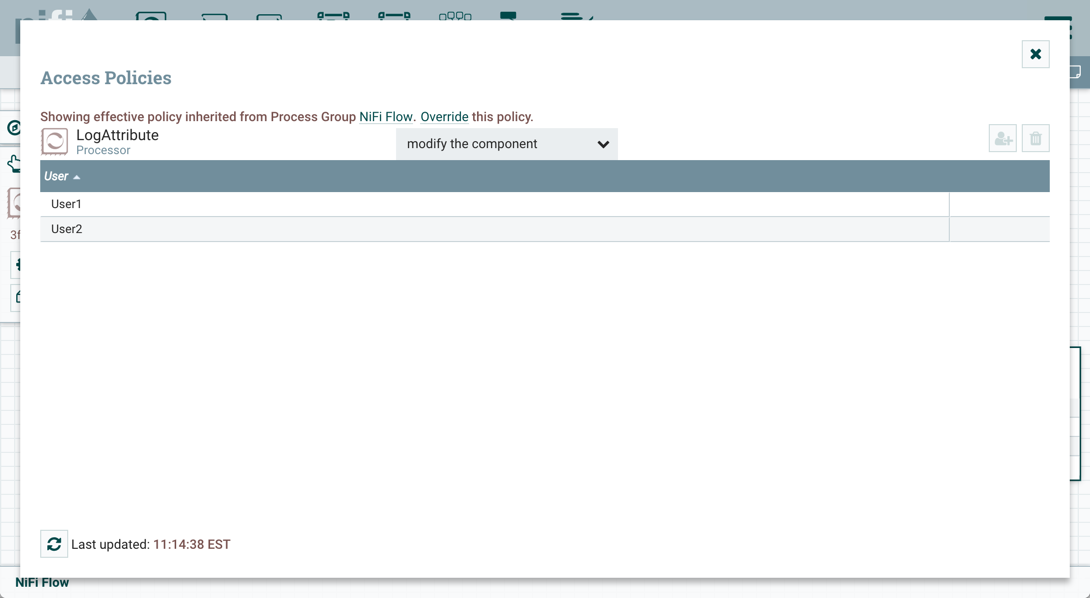

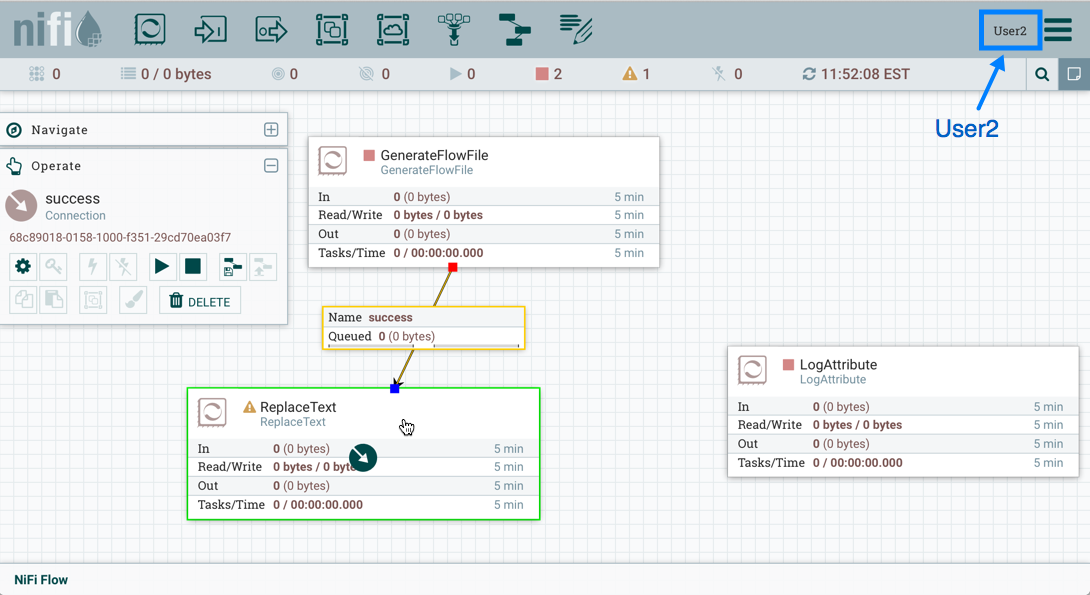

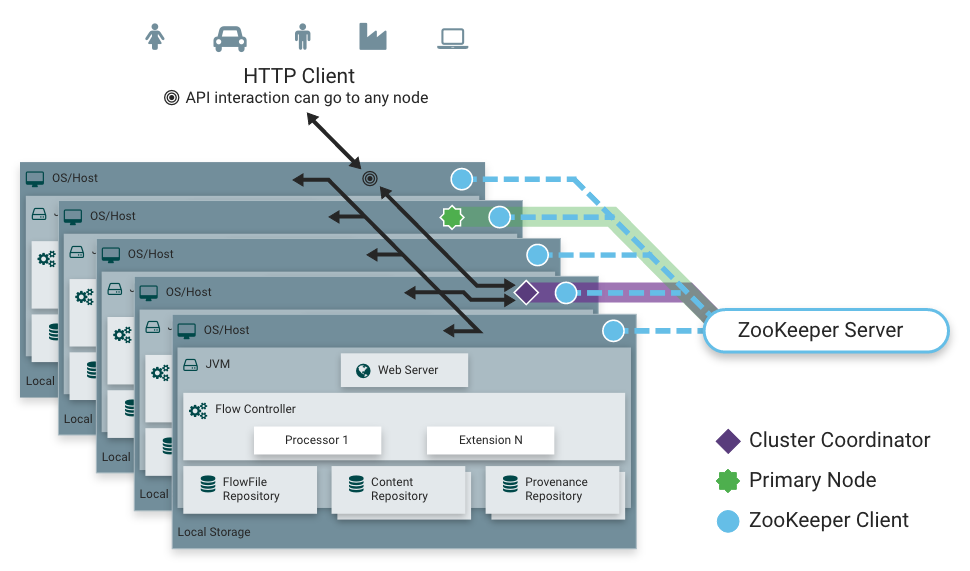

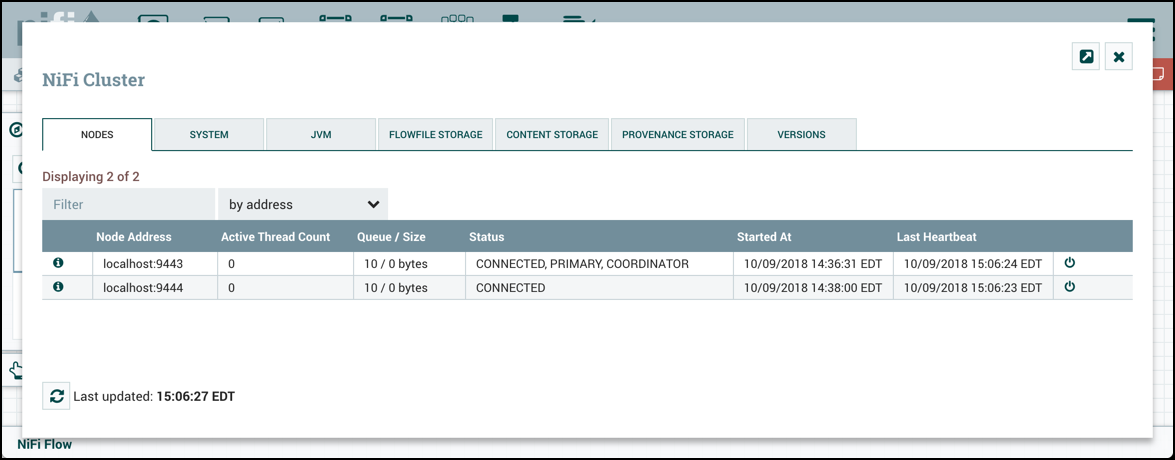

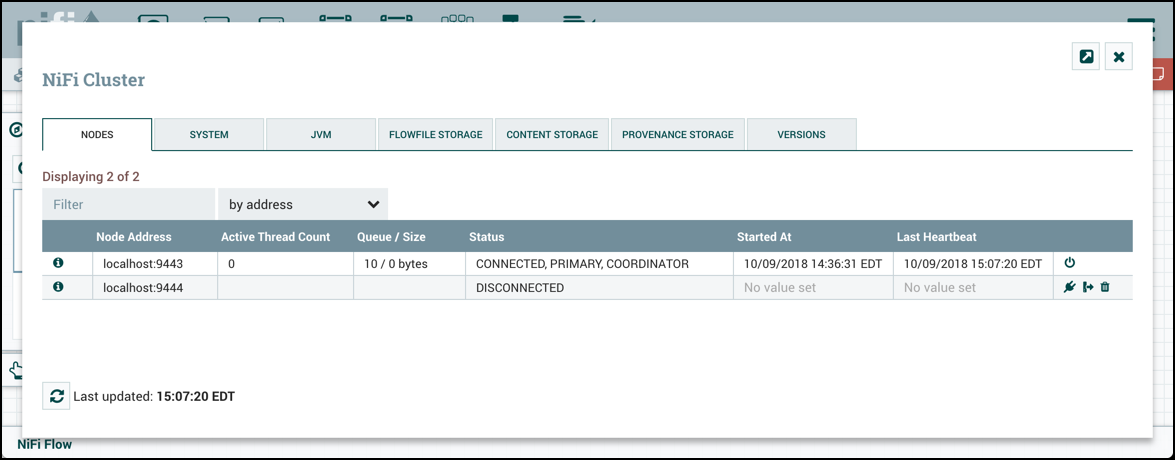

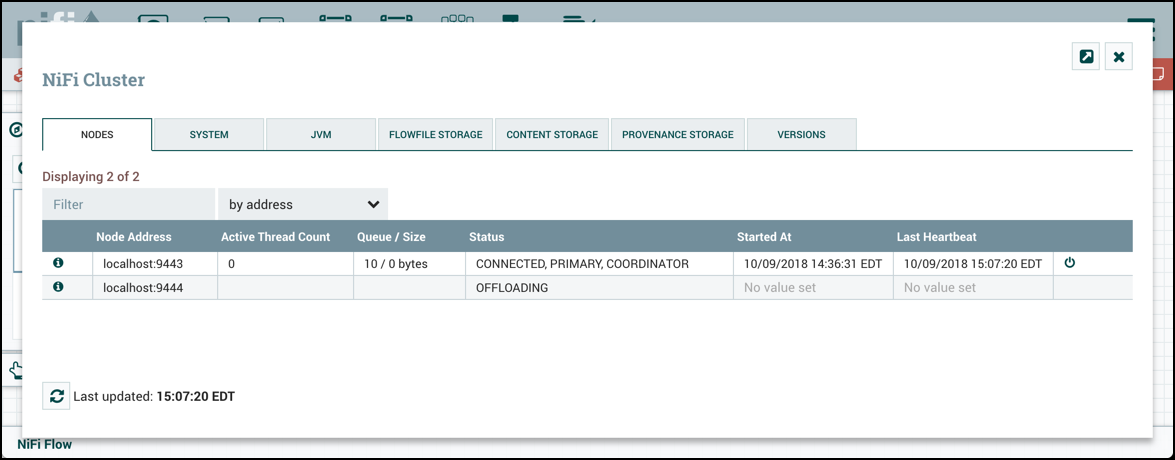

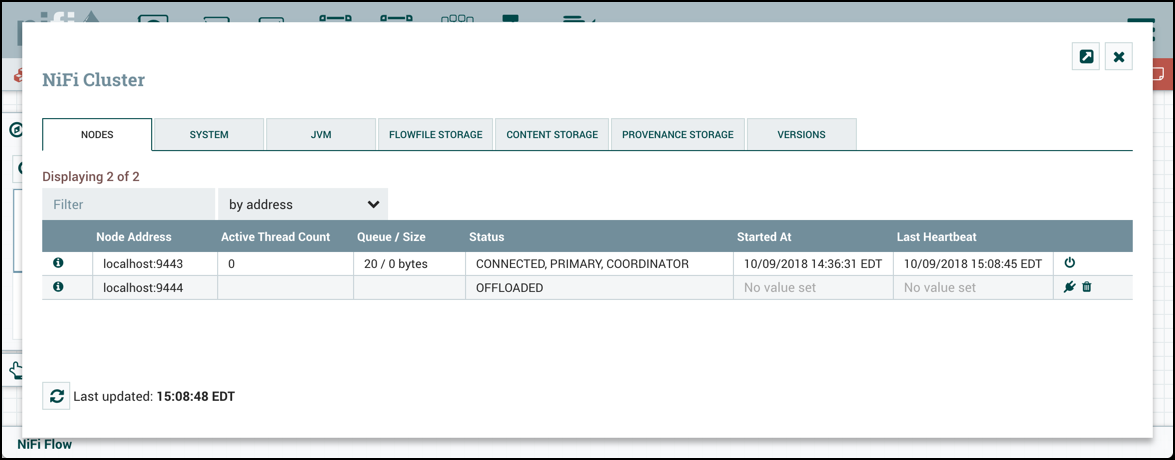

Cluster Node Identities